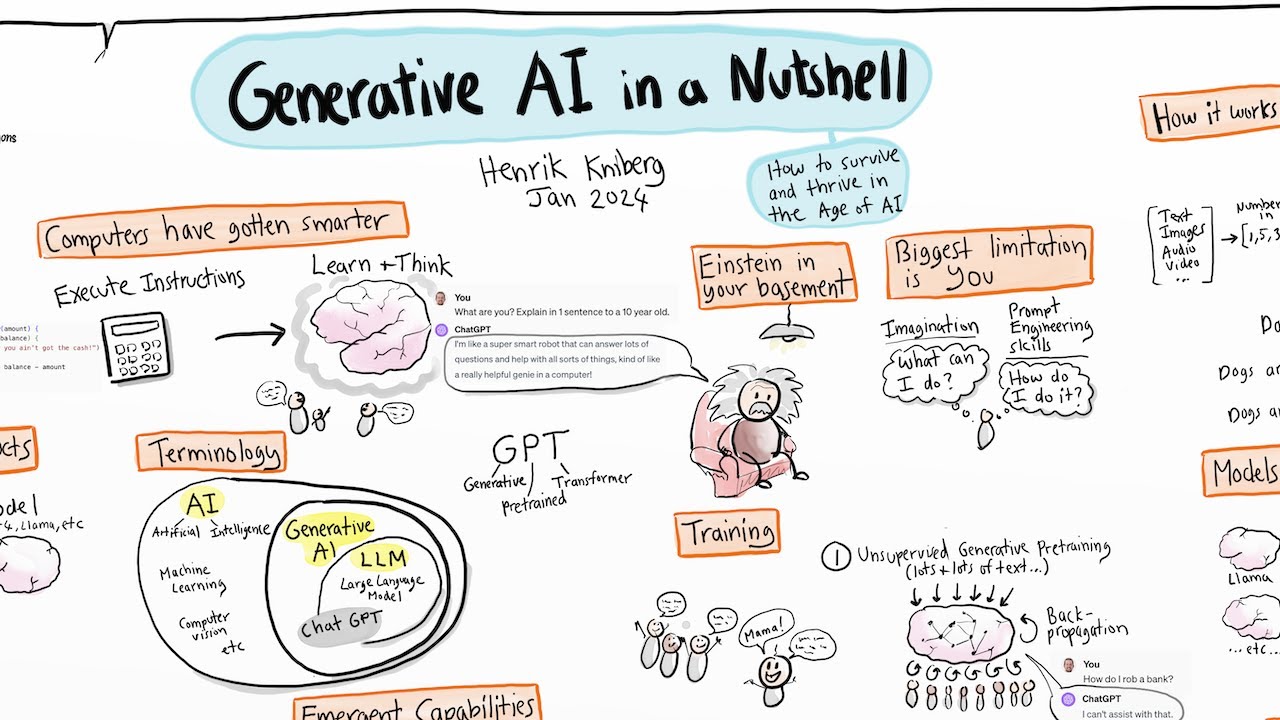

I'm going to share my screen and just make sure that everyone can see that. Thank you, yes. All right, great. So I'm going to talk about generative AI in the research process. I think that there's a lot of interest and concern around this topic at the moment. As I said, I've been looking at this in quite a lot of detail over the last two years or so. I have some opinions and positions that may be controversial, so I will share some of that today.

And we can maybe include that in some of our discussion. I'm also going to assume that everyone has a passing familiarity with generative AI. Not that you've spent an enormous amount of time with it, but that you're at least familiar with what I mean when I talk about generative AI or ChatGPT. If not, then throw some questions into the chat. I won't be able to see the chat, but somebody will be able to draw my attention to that.

I also like to start my talks on generative AI with this slide. I really do believe that using generative AI to cheat on essays and assessment tasks is the least interesting thing we should be talking about right now. I will touch on some of those points throughout the talk, but if this is a major concern of yours, feel free to pick it up at the end and we can have a bit of a chat about that as well. The main premise that I'm basing this on is that most of the assessment tasks that we give students are based on a deficit model: we assume that there's something that they don't know. The assessment is a proxy for the real world, so we're giving them a proxy task and then trying to make predictions about where in the real world they're not going to be able to do the things that we care about. I think that AI has just shifted that entire premise, and we need to think very differently about what assessment looks like.

So I'm just going to do a very brief overview of what I think of as the state of the art, where we are. In terms of the first point, generative AI being a next-word predictor: that hasn't changed. It still is a next-word predictor, and that's all that generative AI does. It takes the prompt that you give it and the context that you provide, and it just predicts the next word in the sentence. All it's doing is constantly running probabilities on the chances of the next word in the sentence being X, and then it gives you that word. Just as a point of interest, this is one of the reasons why they're really bad at counting. Well, we think they're really bad at counting. If you ask it to give you a paragraph of 50 words, or to reduce the amount of text in a paragraph by 50 words, it really struggles to do that because it's only ever able to see one word in advance. By the time it reaches the 40th word in that paragraph, it actually has no idea how many words are left before it finishes the sentence, so it can't plan how many words it needs to insert in order to hit the 50.

Generative AI is also multimodal. I don't know if you've seen any of the recent news: on Monday OpenAI made a big announcement about an upgrade to the ChatGPT service, so the GPT-4 language model that runs in the background is now fully multimodal. It's able to see, hear, speak, generate video. And then the next day... So language models are now almost completely multimodal, which means we can talk to them and interact with them in almost real time, just with natural language, without having to type.

Generative AI is increasing in competence, and there are two main areas where this is happening. The one is called retrieval-augmented generation, or RAG. RAG is quite a useful development that we've seen in the last few months. What RAG does is take your prompt, create a search query from that prompt, go to the internet, pull down resources, and then use those resources to inform the generated response that it gives you. What this does is ground the model in reality. You're able to specify that it should only retrieve academic papers, for example, and so it's able to provide citations that are accurate because it's drawing directly from those articles that it's pulled down. So some of the concern around hallucination and citation is definitely on its way out. Those are not problems that we need to worry about anymore. Well, I'll caveat that in a couple of slides: we do still need to worry about it, but it is becoming much less of a problem.

And then plugins, APIs and GPTs. I won't go into detail about what those things are, but they really allow us to connect foundation models to third-party services. What I mean by that is that if we think of GPT-4, or Claude 3 from Anthropic, those are foundation models: the cutting-edge, really big, really expensive models that all other things are based on. The third party would be things like Wolfram Alpha or physics engines. We look at something like ChatGPT and we say it's really bad at mathematical computation. That also is becoming less true every day, but even so, we can use APIs to connect those foundation models to something like Wolfram Alpha, and now all of a sudden ChatGPT is incredible at mathematical and physical computation. Those kinds of competency improvements are happening all the time. I think people run small experiments with what I think of as vanilla language models, the very basic things that you get out of the box, using what are known as naive prompts, really simple and superficial prompts. You tend to get very simple responses back, and people look at those responses and say, "Oh well, there's nothing to see here, this is not really that interesting." I'm going to go into a little bit of detail about why that is not true.

Generative AI is everywhere, and I can caveat that by saying not yet, but soon. It's already built into Windows 11. It's already built into productivity software across the board. You may not have seen this yet; I don't know if your institution has signed up for the $30 per user per month upgrade to Microsoft 365, where this is built into every single product in the Microsoft ecosystem. We're seeing it in cars and phones. So essentially this is intelligence on demand. I know that there's a cost, but even the GPT-3.5-level models are really good for most people's use cases, and those have been free now for quite a while.

What I think of as maybe the biggest advance is these context windows that enable more accurate responses to more and more complex prompts. The context window is every attachment that you upload to a language model and the entirety of the prompt and its response in an iterative back-and-forth conversation. That context window is getting bigger and bigger, and I saw that the newer ones are going up to something like 2 million tokens. Just for a little bit of context, all of the Harry Potter novels are less than 1 million tokens. That means that, in the more cutting-edge models, you can essentially upload every single Harry Potter book and then have a conversation with the language model about the entire world that gets described in that series of books. So those big context windows really allow us to give the models an enormous amount of context that we can use to inform our conversations with them.

It's surprising to me how many people still think that generative AI is the equivalent of search. In search, you put in a series of keywords and the search engine retrieves that information from a database and points you to a source. In language models, the responses are generated one word at a time, using the prompt as a starting point.
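
The contrast between search and generation described above can be sketched in a few lines of Python. This is a toy illustration under loud assumptions, not how production language models work: real models use neural networks over tokens, whereas this sketch simply counts which word follows which in a tiny made-up corpus. The names `DOCUMENTS`, `CORPUS`, `keyword_search`, `build_bigram_counts` and `generate` are all hypothetical and chosen only for this example.

```python
from collections import Counter, defaultdict

# Hypothetical stored documents: search can only point back to these.
DOCUMENTS = {
    "doc1": "generative ai in the research process",
    "doc2": "assessment design in higher education",
}

# Hypothetical toy "training text" for the next-word predictor.
CORPUS = (
    "a prompt starts the response . "
    "a prompt starts the response . "
    "a prompt shapes the response ."
).split()


def keyword_search(query):
    """Search: return ids of stored documents containing every keyword."""
    terms = query.lower().split()
    return [
        doc_id
        for doc_id, text in DOCUMENTS.items()
        if all(term in text.split() for term in terms)
    ]


def build_bigram_counts(tokens):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(prompt, counts, max_words=5):
    """Generation: extend the prompt one word at a time.

    Each step picks the most frequent follower of the last word.
    Nothing is looked up in DOCUMENTS, so there is no source to cite.
    """
    words = prompt.lower().split()
    for _ in range(max_words):
        candidates = counts.get(words[-1])
        if not candidates:
            break
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)


counts = build_bigram_counts(CORPUS)
print(keyword_search("research process"))      # ['doc1']
print(generate("a prompt", counts, max_words=3))  # a prompt starts the response
```

In this sketch `keyword_search` can only return something that was stored, while `generate` assembles its output word by word and has no stored source to point back to.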

This essentially means that language models have no ground truth. There's nothing that they can refer to as the source for their information. Now this is changing with things like RAG, which I talked about in the previous slide, but essentially they've got no a priori model of the world. In the beginning this made it very difficult for us to know what was real. People talk about language models hallucinating. The reality is that every single response that they give us is a hallucination, according to the definition of that word in the computer science literature. It just so happens that increasingly those hallucinations map onto our version of reality. When the hallucination looks like something that we believe to be true, we say that it's true; when it doesn't, we say that it's hallucinating. But the reality is that every single response is made up from scratch, from the ground up, and therefore is a hallucination.

Data provenance has until recently been a very difficult problem for this reason. Because the language models don't refer back to a ground truth, they don't refer back to a source, it was difficult for them to cite sources: they literally had no sources, they were making it up. Again, this is changing now, and it's becoming less and less of a problem. But even though there were these problems, LLMs are nonetheless really good at limited forms of world building. What I mean by that is that the context that we provide them creates a boundary that they can use to delimit the responses that they give us, and the more context we give them, the more complex those responses turn out to be.

So prompting is really important. It's not the equivalent of search. We've become accustomed to breaking our search queries down into a series of keywords, and then the search engine uses those keywords to find sources. But in generative AI, prompting is actually establishing the boundaries of the world within which the model thinks, so we actually have to provide more context. Search engines taught us to take complex queries, break them down into very simple constructs, and then use those simplified versions; we'd reduce the complexity in order to get the responses that we want. With generative AI it works the other way around: we have to provide complex queries to generative AI in order for it to give us what we're looking for. Contextual richness is a really important indicator of the quality of the response: more context means higher-quality output. I think I've got a slide a little bit later about our responsibility, then, in getting weak responses.

I've mentioned the fact that this contextual awareness is always increasing, and one of the simplest heuristics that you can use when you're using language models is this role-goal-instruct model. You say "You are" and you give it a persona; you say "I am", which helps to establish what you need; and then you say "I want you to". You'll see all sorts of prompting frameworks, and everyone is trying to position themselves as some kind of prompt engineering expert. The reality is that the prompt is less important than your ability to keep an ongoing iterative interaction with the language model. That initial prompt does establish the boundaries that you're using for this interaction, but it's really then about how you can start to engage with the language model. I think a lot of people will look at the response and decide whether or not it's useful; it's a binary decision that they make, is it good or is it bad. What we actually should be doing is thinking of that as the first step in an ongoing conversation. It's actually really good if you can look at that response and say to the language model, "Oh, that's not really what I was looking for. I need it to be a little bit more formal," or "I want you to give me the response in academic language, but maybe not so much jargon," or "Take all the jargon that you've just given to me and rewrite it so that I can understand it, because I'm a novice." So that iterative conversation is really important.

The kinds of structured prompting that we can give language models, and the complexity of those prompts, mean that they can start to approximate agents. The difference between a language model and an agent is that the agent is kind of a collection of models, where you have one master model that is able to hand off tasks to other, smaller models that are fine-tuned to achieve certain tasks. So rather than learning how to prompt models, we will become almost like project managers and hand off complex pieces of work to agents. Those agents will then break down those large projects into a series of tasks, and then use those tasks to generate prompts that they give to other language models. We're already seeing the start of this kind of thing with code completion agents, and one of the most popular ones is called Devin, if you want to look at that piece of work.

The advice that I give most people is to treat generative AI like an expert and to think of it like a person. We're starting to see that generative models have expertise within and across professional domains. You may be an expert in a certain domain, and your expertise may be very deep, but it's going to be very narrow. The more time you spend in higher education and research, the more you increase that focus, and so your domain of expertise becomes increasingly narrow but increasingly deep. Generative AI started out with superficial expertise across most professional domains, but it was very shallow, and what we're seeing is that every day these models are increasing in the depth of their expertise across all of those professional domains. We can see this in things like GPT-4 being able to pass the New York licensing exam. It has already passed, in the 90th percentile, the United States Medical Licensing Exam. I think there's a list somewhere where you can see all of the high-level regulatory body examinations that GPT-4 has passed, and almost all of them are in the 80th and 90th percentile, which means that it's performing better than 80 to 90% of the people who take those exams. So we're really seeing this deep expertise developing very quickly.

They are language models, and so it makes sense that they are expert communicators. We can ask them to explain concepts to us in very diverse ways. One of the examples that a colleague showed me was to explain mathematical concepts for the UK GCSE exams using the metaphor of Formula 1 racing, because his son was a big fan of Formula 1 racing but was struggling with mathematical concepts. So he was getting language models to explain those concepts to him using the analogy of Formula 1 racing. You can say, "Take these five papers on this really advanced topic that's right at the edge of my expertise and explain it to me as if I was a 10-year-old." Then, once you've wrapped your head around that explanation, say, "Okay, now explain it to me as if I was a first-year university student. Now explain it to me as you would to an expert." It's able to take you progressively through those different levels of explanation. So that communication skill is, I think, an underappreciated feature of language models.

A lot of people are still concerned about the accuracy of answers, and I say that the way around that is to ask it not for answers but for ideas. They're really creative, and if you think that creativity is this special human thing, then you haven't really been playing around with language models at all. All that creativity is, is the ability to make novel connections between seemingly unrelated concepts, and people who are experts in creativity will say this all the time. Language models are able to do that way better than human beings.

When you start treating them like people, you start to evaluate their responses differently. We don't think that any person is able to give us a response that's 100% accurate. We assume that every response that someone gives us is taking place on a spectrum, we assume some level of error, and we adjust our expectations accordingly. We don't ask someone a question and expect them to give us a response like a computer would, with that level of accuracy. So when we look at language models, we can start to calibrate our expectations in a different way if we think of them as people. I know that they're not, but it helps to change the way that we think. When we start interacting with language models in a collaborative engagement, we start to realize that we are both responsible for weak outputs. When I get a bad response from the language model, it's not that it isn't very good; it's that we haven't yet figured out a way to work effectively together. The more time you spend with language models, the more effective you become at using them to increase the accuracy, the relevance and the strength of the outputs that you're getting from them.

So now I'm going to shift a little bit and start talking about some specific use cases in the research context. We know that the research process relies heavily on this idea of data provenance. We take the conclusions of our research and we need to be able to map them back through the data, through the interpretation, through the data collection and analysis, through the instruments, through the design, all the way back to the initial problem, question and aim. That's the data provenance, that link that we look for across the whole of the research project. Now, we've already said that language models don't do that data provenance very well. They are getting much better every day, but not all of the research process relies on that very strong link from one thing to another, so I'm going to give some examples of isolated things. We're not yet able to give language models a research problem and then ask them to actually do the research. But just as an example, yesterday I put together a research proposal from scratch in about 2 hours. It was about 10 pages of work, and I did almost every part of it with Claude. Claude 3 is a language model from Anthropic. I basically started off with a prompt where I described everything that this project needs to do: the problem I wanted to address, the aim, the kind of design I wanted it to use. It gave me an outline, and then I worked with it, iterating through that outline to prepare the full research proposal. Now that's become the first draft that I've shared with the rest of the research team. Something that would have taken me two or three days in the past took me about 2 hours. We can have a conversation later if you think that that's unethical. I think it's not, but some people have different ideas.

Okay, so let's talk a little bit about some of these examples. These are all prompts that I've used for projects that I've been busy with over the last couple of months. Your mileage may vary. I've also simplified these prompts: usually my prompts run to a couple of hundred words, sometimes up to a thousand, sometimes many thousands when I'm attaching documents that might be 20 to 30 pages long. So depending on your specific use case, you have to experiment, is what I'm trying to say. In this example I needed to put together a CPD activity that was evidence-based for some of our external partners in the local NHS trusts.

It was going to be about the key features approach to developing clinical reasoning. We don't need to get into the details of that; I'm familiar enough with the topic that I felt confident in my ability to judge the responses from Claude. I went to Google Scholar and downloaded the four articles with the highest citation counts for the keywords "key features approach clinical reasoning". I just looked at the titles, made sure that they were in the ballpark of what I was looking at, uploaded them to Claude, and said, "Tell me why this approach has merit, why it might be useful for me." I wanted to use that as a kind of executive summary for the clinicians who were going to be looking at this. I asked for five key takeaways and a set of principles for incorporating the concept into assessment tasks, because that needed to be included in the CPD activity. I've just taken the first line from each response to the questions that I gave it. This was a really quick way to build out the CPD activity, and I think there's an example a little bit later of an additional component that I asked for.

An idea generator. It's really difficult to come up with ideas for PhDs, especially when you're not working at the cutting edge, so you tend to have to spend an enormous amount of time reading what's happening at the cutting edge in order to make a decision about what kind of question you might be interested in exploring. This was just something that I took off the top of my head. All of the questions that it suggested are really good questions if you're doing a PhD and you're interested in this topic, generative AI and scholarly practice. It gave me, I think, four suggestions here. One of the things that's worth noting is that it's as easy for it to generate 20 questions as it is to generate five. I was saying earlier that we think that humans are really creative. When you start pushing language models like that, don't ask for three responses, ask for 100, and it will give you 100. Now you can go through that and throw away 80 of them because you decide that they're not very good. 20 of them are going to be really good, and five of them are going to be phenomenal, way better than you would have been able to come up with on your own. So this idea of using language models to push the boundaries of creativity, to find gaps, to find personal interests, I think is a really useful area.

Summarization is just phenomenal. I use this multiple times every single day. I upload articles and reports and I just say, "Summarize this thing." You can say, "Summarize it in a thousand words," or 5,000 words, or "Summarize it and pull out these features," or "Where in the document do they talk about this?" So it's very rare that I sit down, open a document and start reading from the beginning.

I basically bounce around. I ask for a summary, I pick three or four things from that summary that I think are useful, and then I start having a conversation with the document through the language model. I've mentioned this idea of explaining something to me as if I was a novice. Summarize meeting transcripts: if you have meetings with your team, with your supervisor, with your students, just pull out those transcripts, say "Summarize these", and include that in your notes. It's really good for video transcripts. I use this all the time with Teams, because my university doesn't pay for the 365 integration with Copilot, but I still get the transcript. I get the transcript and then I ask, "What time did so-and-so say this? When did they discuss that? Who said what?" So it's really useful. Again, don't think of it as a once-off interaction; think of it as a conversation that you're having with the language model.

This is a little piece of work that I did with a team that we're working on. We're doing a focus symposium and workshop at iom in a couple of months, and we want to develop a survey for workshop participants. I basically took an initial set of responses that people had given us, uploaded them to Claude, and had it generate a framework, where I said, "From all of these responses from these participants, tell me all the things that were top of mind in these questions, and then from this build a survey that we can use before and after the workshop that we want to run." I gave it the outcomes. I made it clear that I wanted to do a comparative analysis, so only give me questions that would allow me to do that. "Include a range of different question types" was just experimental; I wanted to see what kinds of questions it included in the survey. It gave us about 15 questions, and now we can use that as the beginning of our planning.
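
A prompt like the survey one can be laid out with the role-goal-instruct heuristic mentioned earlier in the talk ("You are", "I am", "I want you to"). The sketch below only assembles the prompt text; it does not call any model. The function name `build_prompt`, its parameters, and the wording of the example values are all assumptions made for illustration, not the prompt that was actually used.

```python
def build_prompt(role, goal, instruction, context=()):
    """Assemble a role-goal-instruct prompt as plain text.

    role:        the persona given to the model ("You are ...")
    goal:        who is asking and what they need ("I am ...")
    instruction: the task itself ("I want you to ...")
    context:     optional extra material, e.g. outcomes or pasted responses
    """
    parts = [
        f"You are {role}.",
        f"I am {goal}.",
        f"I want you to {instruction}.",
    ]
    for number, item in enumerate(context, start=1):
        parts.append(f"Context {number}: {item}")
    return "\n".join(parts)


# Hypothetical values loosely modelled on the survey example.
survey_prompt = build_prompt(
    role="an experienced survey designer",
    goal="running a workshop and need a survey to use before and after it",
    instruction=(
        "draft about 15 questions that allow a comparative analysis, "
        "using a range of question types"
    ),
    context=[
        "<workshop outcomes go here>",
        "<initial participant responses go here>",
    ],
)
print(survey_prompt)
```

The first response to a prompt like this is a starting point; the follow-up turns ("make it more formal", "fewer questions") carry on from there in the same conversation.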

This also is a little bit controversial. Well, I don't think it's controversial, but some people do. Dawn mentioned earlier submitting an article and the journal wanting you to disclose whether or not you've used generative AI. The first example I've seen of an editorial board was the New England Journal of Medicine, the AI version of that journal. They encourage authors to use generative AI in their writing, and the reason that they give is that it levels the playing field. Reviewers tend, unconsciously, to place a lot of value on your ability to articulate your ideas using good English. Weirdly, not everyone in the world speaks good English. The editorial board said that what they care about is scientific ideas, so if you can use generative AI to articulate your ideas better, then science improves, and that should be the goal, not how well you can write. I think that's a really useful way of thinking about things, and I think about that a lot when it comes to assessment. We can talk about that at the end if anyone has any questions.

I was working on a book chapter with a colleague. For some obscure reason, each of us had been working on our own version, so we had two different versions of the chapter that we were going to submit. We wanted to collate those two chapters, but we also had a separate document, about 15 pages of notes on concepts that we wanted to include in the book chapter. It was all kind of messy, very ad hoc and haphazard, and we wanted to align these three documents so that we had one version of the book chapter that included all the relevant concepts from the notes and from the two drafts that we had been working on. I explained what the book was going to be about. There were specific definitions of this concept of compassion, and I really wanted it to use that definition, not to come up with its own, so I specified that I wanted it to pay attention to that definition: "Analyze the documents, take the high-level ideas as a framework for a draft that I want you to write." We were also writing this for a non-academic audience, so I said, "You're an academic writer, but I want you to avoid stuffy, jargon-filled writing." That's the exact prompt that I used, and generative AI knows what that means. It wrote this in a really accessible way, but it was still slightly formal in how it was structured. I just said, "I want you to begin by laying out the aim of the chapter," and so on. Basically it took all of those documents, so it would take paragraph two from the one document, which aligned with a paragraph in the other document, merge those two paragraphs, and then compress them so that there wasn't any repetition or duplication. That gave us a starting point where we could start working on this new draft and move forward with that.

I've never done this personally, but I've got no doubt that this is the way forward. A lot of grant writing is formulaic and box-ticking. Funding bodies usually have very specific frameworks and outlines that they want you to adhere to, so they give you a set of guidelines and a template that you use to fill out that document. You can actually upload those guidelines. Then you can take a narrative description of the project that you want to do: you get together with your team and write out everything that you want to do in normal language. Then you upload both of those documents, your description and the funding body's proposal guidelines, and you say, "Take the work that you've already done and just format it according to the requirements of the funding body." You can also upload past successful applications if you have access to those. You can upload successful applications that are not related to this funding body but which you think are nonetheless well described, and say, "Take the tone and the language of this other successful grant application, but replace it with all the content of this new application," and it's able to do all of that sort of thing. I didn't attend this workshop, but I pulled out some of their marketing around it; I thought this was a really interesting approach. I've got no doubt that in the future all grant applications will be done with AI, and funding bodies will be using AI to evaluate at least the first round of those grant applications. So we'll have this weird thing where we're using AI to generate work that is then being evaluated by AI. This is already happening in journals: the bigger journals are already using AI to help editors make sense of the avalanche of submissions that they're getting.

This is still a little bit controversial. I know that the supervisors in the room are having a massive intake of breath now. I think that the data analysis component is not quite ready yet for a first round. I wouldn't use generative AI to do my data analysis. But just as an experiment, I took a transcript of a focus group discussion and uploaded it to generative AI. It was anonymized. Also, the terms of service of most of these companies allow you to make sure that what you upload isn't built into the training data. I know that that's something a lot of people worry about, but it's not real: the terms of service do prevent them from taking what you've uploaded and incorporating it into the training data. I think with ChatGPT there's a little toggle in the settings; by default it's on, but you can switch it off. Claude doesn't collect any of that data and include it in their training runs anyway. So you can upload that documentation. I would still make sure that it's anonymized. If you've got an institutional license to Microsoft for their Copilot language model, you can do this in a way that none of your data leaves the premises: it all just sits on your institutional servers, and you don't need to worry about it going back to anyone else.

Anyway, I took this transcript, uploaded it to Claude, and I just said "Analyze". I think the original prompt was a little bit more detailed; I might have asked it for a thematic analysis or something like that. Now you can get even more specific.

and you can say I want you to do an analysis in the style of and then you can upload a a PDF an article and say I want you to do the analysis that's described in this framework in the article that I've just given you um and in that case I've got no doubts that it will be a really really really really good analysis now we need to decide as a community whether or not we want our PhD students not doing the analysis um if you think that that's a controversial Question then bear in mind

that NVivo and ATLAS.ti are already building this into the next versions of their software and so I would suggest that it's not going to be that much longer that we are going to um be asking students to do or even researchers to do any kind of data analysis we use SPSS to do the more complex mathematical or statistical um analysis for um for quantitative data we've never had software that's allowed us to do the qualitative data analysis that's going to go away very very soon um so you know that's also something that

we can talk about um for me it's not an issue um I think this kind of comes back to what I was saying earlier we have to ask ourselves what matters in the future um outside of universities no one cares no one cares how you solve the problem all they care about is that you solve the problem when someone solves cancer nobody's going to say how did you do it how did you do the analysis how can we be sure um the only thing that matters is that you solved cancer

um and I think that we are shifting in that direction um and again that might be a controversial claim I don't think it is um but we can talk about what that means so in summary um language models are next word predictors they can generate multimodal content they're improving in competence every single day they give us access to expertise and creative ideation through natural language which we've never had in the past um though we acknowledge that there are biases and limitations I think that there's a wide range of use cases

across all aspects of research there are still some ethical uh implications um but I think that those relate largely to a um a paradigm that is uh rapidly getting old um I think we still need to evaluate outputs critically and I say for now because I think in 6 months time we are going to trust these outputs um more than we trust uh things like Google Maps um so yeah um that's my pitch um and now hopefully there's time for a few questions thank you very

much Mel I think you've given us so much to think about and so much to explore um um so I see there's already a a question but there will now be opportunity for Questions Rena you you you had your hand up first you can go ahead hi morning everyone um thank you very much for that I think that was a very insightful and thought-provoking um uh after we we attended um a program this week and yes there was um there was a section about Ai and um I decided to play around with it uh so

I used ChatGPT and um I actually coincidentally um asked it to help me with grant writing so I didn't you know use any guidelines or anything and um it gave me like all these different sections and it obviously helped me think about you know different ideas of how to sort of position certain things but then I asked it to um provide references for certain sections so um uh can you help me with you know substantiating these um things that you've provided and it said because I'm not um uh what was the word but it could

improvise references so um I just wanted To maybe hear some input from your side on that yeah I mean that's that's you know a common concern that people say it doesn't provide the references so um so there's a couple different facets to that I mean the first thing I want to say is that two years ago these Technologies didn't exist and like we we're complaining about the fact that it can't it can't do the work that a human being um is able to do and yet you know the amount of progress that we've seen In

in all this time is phenomenal um I would use something called Perplexity so there's another language model called Perplexity if you search for perplexity.ai um they from the beginning have used this approach called RAG retrieval augmented generation and they do provide citations for all of the outputs so it's a very very very good language model um it's kind of used a lot in the academic community for that reason um so uh yeah um again I think that this problem is going to go away very very soon um on Monday uh

OpenAI part of their announcement was that the GPT-4 model would be available for everyone for free um and I mean GPT-4 is an uh order of magnitude better than GPT-3.5 which up until now is what most people have been using on the free version um and so you're going to have access to GPT-4 for free it's going to be connected to the internet so it will be able to go away and find those resources that you were looking for but Perplexity does that today um so you know uh personally I

still use my own references because I like to find my own references um uh but I don't think that that's going to be an issue moving forward I just see there's a question about using the paid version of Claude as of about two or three weeks ago I did use the paid version um and I do this kind of thing in some of my talks where I talk about intelligence on demand and that's exactly what this is um I don't pay for these language models at all except I have a very very

very busy month um and I have an enormous amount of content that I need to get through as part of that we just had a whole bunch of work that needed to be done and I didn't want to hit the um the limits of the free version of Claude um so I paid for it for a month because I just don't want to have to care about um you know hitting that limit so now I just have it running all the time and I just use it for everything but at the end of this month

I've already canceled my subscription um so it'll get to the end of this month when my workload dies down a little bit and then I'll just keep using the free version all of the examples that I've given you bar one today um were on the free version of Claude um not the paid version thank you and then Marlet also has a question yes good morning Michael and colleagues um as you know we're sitting in a lot of meetings where we need to take minutes so I was wondering if you use a recording

of these meetings and ask AI to generate the minutes however my question is regarding the because sometimes we discuss very sensitive issues what happens to this information and yeah is it safe is it really safe because some of these things are really private and um yeah so that is my question regarding minutes um right so that's a great question um transcription is built into Teams already so I'm pretty sure that your recordings all automatically get transcribed is that true yeah yes it's true yeah yeah so what I do now is I download

the transcript which you get in a Word document I save the Word document as a PDF then I view the PDF in a browser um and then Copilot is built into Edge uh so I don't know about your university you're a Microsoft university so are we um we have an institutional license for Copilot which is a GPT-4 level um language model so Copilot is built into Edge view the PDF in um in the Edge browser and then ask Copilot for a summary and you can have that conversation with that and the benefit of

that is that all of that interaction the PDF the prompts the responses all of that sits on university servers and none of that goes to Microsoft um so it's completely secure you should actually have a little tick box a little green tick in the top of your um Copilot window that just says that this is protected okay okay thank you thank you for that Michael that's good to know thank you that's also a lovely idea Marlet to have the minutes quicker hey um and Michael seems to be uh you know you can

just give the solution about appropriate uh gen AI to you so Michael I think we might need to do a practical session with you as well but I see I'll just to just to comment on that um I use this for everything there is nothing that I don't integrate generative AI for anymore yeah yeah I have a question about that but I'm going to hand over to Prof hanom first hi Michael thank you for sharing some of your ideas I am aligned with what you're thinking my question is just um around

the role of universities going forward and I know there's you know I mean this has prompted us to start thinking about you know what are the outcomes that we are actually trying to um facilitate specifically at higher order and PhD students um because it almost seems as if you can if you write the right prompts you actually don't need you know the traditional skills if I can put it like that for a PhD student so I was just wondering if you have any thoughts around that I've got

lots of thoughts around that and they're not very comforting for academics and universities unfortunately um exactly um okay so in the immediate term um I think that universities need to shift the idea of PhD and postgraduate programs completely um so when you start using these language models um oh let me go back a little bit um there's a professor of business at the Wharton School of Business in the US he says that if you haven't had three sleepless nights filled with existential dread about the implications of generative AI on uh the

work that you do then you haven't really understood it um and and I really do think that that's true um if you think that this is just a just a tool this is just something like word that we're going to integrate into business as usual um I I think that you've missed The point um and that that's not because like there's anything wrong with you it's that we've never before had to deal with something that has this level of implication for um the work that we do for society actually um so I think that this

is massive in a way that like nothing that has come before um has been uh this important in my opinion so moving forward uh I think that postgraduate research needs to shift entirely Um away from things like proposals and protocols and um the kinds of problems that we traditionally look at um I think all that matters moving forward is actually solving problems in the world um and so I had a question interestingly I I actually gave the same talk to the um to the medical school at St B University on Tuesday um and one of

the questions was around research proposals um and how do we make sure that students aren't using generative AI to produce Their research proposals and basically I said why do you care like we generating research proposals now is so easy and so trivial that anybody can do it like it used to be this really specialized skill now like a child can generate research proposals so we need to shift our expectations massively away from the generation of the proposal I'm now trying to make the arguments in my institution at the undergraduate level that if language Models are

giving us kind of superhuman capabilities across all knowledge domains we can no longer expect our students to write essays or to complete MCQs we have to change the baseline expectations so that we massively increase what normal looks like so for me I want to see my undergraduate students actually implementing um health promotion programs and then directly measuring the impact of those programs in their communities I'm not interested in them writing a proposal about what a health promotion campaign should look like they actually have to implement it and then demonstrate the effectiveness of that so let's

take childhood obesity that's a big problem in some areas that I'm working in I want my undergraduate students to implement health promotion campaigns that demonstrably reduce that problem um in that community so they should be building apps they should be building businesses they should be building interactive websites they should actually be doing things in the real world and using AI in any way that we want um not everyone agrees with me most people don't um but for me that is the only way forward I don't see I don't see how we can

move forward because we cannot reliably detect the use of AI um by students so we either have to assume that they're all cheating Or we just have to make it normal that everybody uses AI for everything all the time because that I I don't see a future where that isn't the case sorry I don't know if that answered your question um Prof no it does I mean I don't think it's a conversation that we can have here I just think that we need to start thinking because I agree with you in terms of this is

part of our Lives it's almost like the invention of the wheel or fire it's going to be Existential in terms of disruption we're already we're already seeing businesses that invest in generative AI models to support their um uh employees productivity um they're increasing their investments in generative AI over time and their productivity goes up profits go up um throughout every other sector people are embracing this and showing improvements in outcomes that they care about uh higher education can't be any different Um we need to embrace this uh 100% for everything all the time um in

my opinion thanks we can continue this discussion thanks by yeah I I think that's a very relevant and very needed um discussion to have and and how we should adapt and and change um and also to prepare us for for that change um adno you are next Prof umer is having a big conversation here in the chat profer I'll give you an opportunity After after Adel has spoken um thank you everyone thanks I'm Michael nice to see you again um the the I completely agree with what what was said earlier on and I've took bits

and pieces of um um uh what we just said um and I think some of our questions were related to grant writing and um postgraduate students and Marlet was speaking about uh minutes and etc etc I maybe would like to share a little bit but also my experience with it because I haven't used it much I happen to use I think it's called Poe um and I had a limited time where I had to do a teaching session with the second years to catch up on some work that they've

done and I want to my comment is basically linked to the creativity um so I gave it a prompt in terms of I've got so many so many hours and these are the the ideas or the topics or the outcomes that I would like to use and it gave me a framework on uh for how to plan my Session um and then obviously I used the outcomes to bring it back after the summary it also gave me a summary you know summarize something and these are the things that you must check whether the students understood

um I hope the students will be able to use um falls risk outcome measures a little bit more on the clinical platform next year um but it was surprising how engaged they were um it was a I think I had a 4 hour slot for this catch-up um and most of the time they were engaged and the things that came back from them and I think for me it linked back to what you said about creativity um uh in simple language guiding um so the creative

idea was there it was created for me and then I went further and I used my um my brain and I uh uh uh you know slotted things in so yeah I think I've I've also listened to a a previous seminar on the use of AI and I agree with um your thoughts and what Susan also said and what Maran spoke about here in the in the in the chat that we must normalize it I think if we normalize it we may end up with the students not using it because we be saying that they

must try and use it but as they do um but yeah it it can be a very great it can it can be of great help it saved me some so much time um yeah just in my experience with teaching I use it uh also where uh I had a group of students that were really disengaged Very passive and for every lecture I was going back and saying like I'm I'm trying these things uh it's not working students are very passive give me some more ideas about how I could um engage them more effectively um

and you know the students remained disengaged um so but the language model was giving me ideas that I hadn't thought of so I really found it useful for that yeah and that idea of normalizing it I think is important but not without in parallel Also changing our expectations for assessments um I think that that's really important if we're going to carry on giving them the same assessments that we've been giving them for the last 500 years um and let's be honest we haven't changed anything really um I think that we need to uh massively shift

our expectations for what those assessments need to look like thank you thank you so much Michael do you still have a minute or two yeah okay Prof Maran do you want to add anything I also have a question or two but I'll give you opportunity to add no thanks Michael in the chat I'm not sure whether you've read it yet you know I'm flabbergasted that in today's day and age never mind AI just the digital competence um is so starkly absent from our professional competency frameworks and you know and it sort of

begins there um if you know but so that's the one thing I wanted to say the other what's really difficult for me I mean I love it it has saved me an enormous amount of time but when the pressure is on and you need to deliver something and you struggle I mean I'm a novice and I really struggle to prompt correctly then together with limited you know when you upload documents that they need to include in their thinking about providing me with the solution and maybe that's a mistake I'm making it actually has that

information I don't need to give it the information um yeah so I'm still struggling with that so sometimes I say okay let me just go back to the old way of doing because I'm a little bit more confident there you know so the uptake as much as I love it I find I'm doing it more just for personal and selfish reasons rather than yeah so again um if it becomes more um normal more acceptable um and we actually because I think it's fantastic if Students use it uh because it helps them to think but we

have to our job is to check that they are actually thinking and they do actually understand what it's offering because otherwise it's also useless you know then we start getting zombies um that know a lot but actually don't know what to do with it yeah yeah no thanks good to see um I think that idea of um uh what you said about prompting and getting comfortable with uh with the technology I think that it takes about 10 hours um from what I've seen uh 10 hours of use to feel like you have a good

sense of uh of the eccentricities of the models they do have personalities and um not like a human personality I know that they don't really um but they all respond in slightly eccentric ways um one of the things that I did the other day uh was that we had to put together a funding proposal presentation for the Chartered Society of Physiotherapy and I took their quality assurance framework and their standards framework and asked a language model to develop an outline of that and then to map our vision for the future of physiotherapy education that

was just what the project was called and we had to submit the application so we had a vision and then I wanted to do a mapping exercise where I took that vision and then looked at the Quality Assurance framework and Basically the prompt that I gave it was quite complex it ran on to several hundred words but it was you know these are the documents that I've attached this is what each document is this is what it describes I want you to review the document and make sure that you understand it then I want you

to and I just took it through this step a series of steps that I would have had to do um but I just told it to do those exact same steps and so I would say take the Outcome of step one and feed that into step two take the outcome of step two and then make sure that you include that in step three and so it's almost like giving a research assistant a very clear set of um guidelines around how you want them to complete this piece of work and it did everything 100% it took

me a long time to build the prompt because I had to think very carefully and clearly about what it is that I wanted it to do but once I said go oh and then you can Also say nobody does this but you can also say ask me clarifying questions if there are any pieces of information you feel are important in order for you to move forward and when you do that it will often come back to you and say here are three questions that will help me in giving you a better response and so again

treating it like an assistant where you kind of make yourself available to provide support for it in order for it to achieve the outcomes that you want it to Maran you were muted there but we saw the thumbs up uh and we have a hand from Eugene Eugene you may go ahead yeah good morning everybody Michael I'm very happy to see you again good to see you too how are you fine thank you doing very well at St University okay um I want to comment about the use of this language model I remember when

they came in people were still concerned about the students they were saying that it is going to take away the level of clinical I mean critical thinking and all those kinds of things but one thing I think I have realized is that with this language model what you give in that's what you get out so it's rubbish in rubbish out so I think what we should do as lecturers as academics we should still work with this language model but still guide the students about how do we use them how do we use our

critical thinking to use them because you need to think is this really at the level of what you want it to be so that's the role of you as lecturer I'm just talking now about the undergraduate part of it not the PhD level so you need to give the students the guidelines that okay these are the outcomes I want but then you use the AI I mean whatever the language model to help you to generate those ideas that is my comment I think so it's a matter of knowing how to interact with them I I

tell my students to use it to replace me and that's an uncomfortable thought for a lot of people but I I said to them the kinds of questions that you would normally ask me those are the kinds of questions that you should be asking language models so you wouldn't ask me to write your essay so don't ask a language model to write your essay but you would ask me to give you feedback on a piece of writing Now language models Are phenomenally good at giving feedback on writing and so like I give language models my

pieces of my work all the time I say tell me why I'm wrong tell me what assumptions I built into this tell me where my bias is showing through tell me where the argument is weak um and so I upload pieces of my work all the time and ask it to give me feedback and then I ask my students to do the same thing so why should they get one round of feedback From me where I'm rushed and I'm not able to give them as deep a level of feedback as they might need when they

can submit to a language model 3 4 5 times 100 times get feedback and then submit that piece of work to me for um for assessment um so I think all the kinds of questions that they are comfortable coming to me for those are the kinds of questions that I want them to be asking language models because the language models are available for free 24/7 you can use them as much as you want they have infinite patience they never get bored or tired or sleep um we can really be effective in teaching students

to use language models to support their learning way more effectively that's true well I think we'll take one more question because I don't want to keep Michael for too long I'll rather make another appointment Michael for for in future like I say I think I need to learn a little bit more About the practicalities about asking the right questions to get the right responses so um Marissa you you are the one who's going to ask the the last question over to you hi hi Michael it's lovely to see you again and as usual um mind

blown I really enjoy your sessions um I actually have two questions one is pretty silly but do you like um ask it please and say thank you because I've seen that happen and oh you do okay because I'm also quite polite and it's very you know um nice in its response as well um and then secondly do you have a resource that teaches the students how to prompt um you know just getting them skilled in prompting okay so um I think just to kind of caveat the first point I do say please um but

that's because the original research that was coming out actually demonstrated that when you are polite to language models they tend to give you better responses that is less true now I think you can be more abrupt they've trained that out of the models um but I still just out of habit I say please a lot um I do provide students with examples of prompts um so there's a few different ways of thinking about this um I have an AI policy for my classroom where I actually provide students with a policy document

that says in this classroom in this module this is how I encourage you to use AI these are the models this is where I want you to use it this is how I want you to use it these are the things I don't want you to use it for so I'm very explicit in um in terms of what I want my students to do um the university kind of is tinkering on the edges of uh some kind of a policy document um but I think it needs to be specific to each lecturer Um and so

in that document I do establish some of the boundaries and the context for students um we also have a project in the university uh we've got three working groups on AI and one of those is operational the other ones are technical and strategic and the operational group um until recently I was actually chairing um but then stepped back for other reasons um the point of the operational group is to just experiment with a load of different prompts in all sorts of areas um that students might be using them for so a lot of

non-academic prompting so um things around uh I don't know simple things like you don't want to go out and exercise because it's raining you normally go running what are like 10 exercises that you can do in a 2 by 2 space cuz that's how big your bedroom is and using body weight as resistance like really really kind of out there non-academic prompts like how do I clean my apartment I've never had to clean a place on my own what are all the kind of materials that I need to go shopping for in order to

clean my apartment so we're just experimenting with loads of different kinds of prompts then testing those prompts with our university infrastructure and then trying to provide them at scale to students just saying here are a load of ideas for ways that language models can uh can help you um but then also just teaching them the very basics so the role-goal-instruct heuristic is very very useful um so providing them with specific examples of areas where they can use it especially around non-academic use cases because students are actually really worried about being accused

of cheating and so we're trying to get them used to language models by using them in non-academic contexts where the question about plagiarism and cheating doesn't matter sorry that was a long answer to your question no it's perfect thank you thanks so much Michael as you can see we have a lot of questions I still also have a couple of questions but um time is running out and um I think we've just learned so much um from you today especially with regards to practical application but also with regards to

just um the principles of application and also maybe thinking about where this is going to take us um in future and I think that is probably the second most important thing the other most important thing is how to use it effectively um so I will definitely be in contact with you uh so that we can take this discussion um forward in another time slot but we really enjoyed it it was really engaging um and it was just such a wealth of information so thank you so much and um let us

know when you are in Cape Town again and then we can get a personal get together and have a glass of wine I'll definitely do that thank you thank you for the opportunity I really appreciated it yeah thanks so much and there's lots of chats going on in the chat box and just saying thank you to you so thanks so much and enjoy the day it was a pleasure good to see everyone again keep well bye-bye bye-bye bye-bye Michael keep well bye