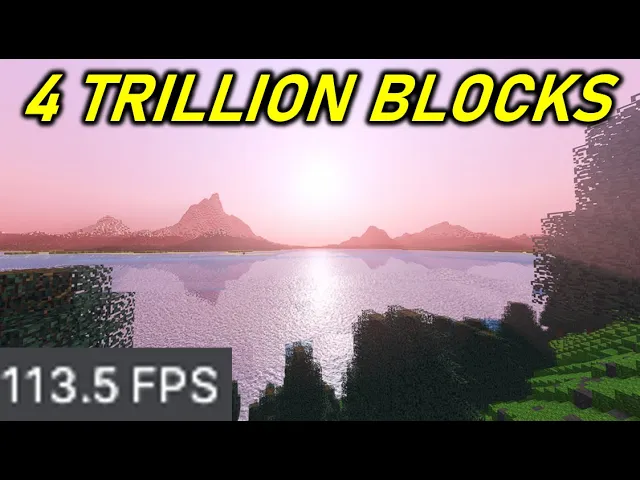

Four trillion blocks: the number of blocks currently perceptible with the naked human eye. It is compatible with shaders and multiplayer, with non-existent lag on my mid-range computer.

And it all begins in this brand new Unity project. The block part of the block game was straightforward. Step one was manufacturing a script to summon millions of blocks for the terrain.

Unfortunately, the game part of the block game was hard. This is literally unplayable due to the sheer quantity of objects. The solution was copious optimization.

Luckily, I knew a guy who watched a guy who knew how to do this. Instead of instantiating one object per block, blocks are simply stored in an array of data. This data is used to generate a single mesh, which is a three-dimensional model made of vertices joined into multitudinous triangles.

Fewer triangles means higher performance. So the code adds four vertices only for faces that aren't obscured by solid blocks. Each vertex also carries its own information.

Normals help create believable shading by specifying which direction the face is facing, and UVs are coordinates specifying which area of a texture the face should use. The completed mesh is then assigned to a chunk object to be rendered. The result is our first chunk, but more superfluous faces could be extinguished with frustum culling.
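A minimal Python sketch of the block-array-to-mesh step described above. It assumes a tiny hypothetical `CHUNK_SIZE` of 4, block ID 0 for air, neighbors outside the chunk treated as air, and every face mapped to the whole texture. It is not the author's Unity code; it only shows the face-culling rule (emit a face's four vertices only when the neighbor on that side is not solid), per-vertex normals, and UVs. Triangle winding is not tuned for any particular engine.

```python
# Hypothetical chunk size; the video does not state one.
CHUNK_SIZE = 4

# Outward normal of each face -> its four corners, as offsets from the block's minimum corner.
FACES = {
    (1, 0, 0): [(1, 0, 0), (1, 1, 0), (1, 1, 1), (1, 0, 1)],
    (-1, 0, 0): [(0, 0, 1), (0, 1, 1), (0, 1, 0), (0, 0, 0)],
    (0, 1, 0): [(0, 1, 0), (0, 1, 1), (1, 1, 1), (1, 1, 0)],
    (0, -1, 0): [(0, 0, 0), (1, 0, 0), (1, 0, 1), (0, 0, 1)],
    (0, 0, 1): [(1, 0, 1), (1, 1, 1), (0, 1, 1), (0, 0, 1)],
    (0, 0, -1): [(0, 0, 0), (0, 1, 0), (1, 1, 0), (1, 0, 0)],
}
# Assumption: every face uses the whole texture (no texture atlas).
FACE_UVS = [(0, 0), (0, 1), (1, 1), (1, 0)]


def empty_chunk():
    """A CHUNK_SIZE^3 array filled with air (0)."""
    return [[[0] * CHUNK_SIZE for _ in range(CHUNK_SIZE)] for _ in range(CHUNK_SIZE)]


def is_solid(blocks, x, y, z):
    # Assumption: anything outside this chunk counts as air.
    if 0 <= x < CHUNK_SIZE and 0 <= y < CHUNK_SIZE and 0 <= z < CHUNK_SIZE:
        return blocks[x][y][z] != 0
    return False


def build_mesh(blocks):
    """Return (vertices, normals, uvs, triangle indices) for all visible faces."""
    vertices, normals, uvs, triangles = [], [], [], []
    for x in range(CHUNK_SIZE):
        for y in range(CHUNK_SIZE):
            for z in range(CHUNK_SIZE):
                if blocks[x][y][z] == 0:
                    continue
                for normal, corners in FACES.items():
                    nx, ny, nz = normal
                    if is_solid(blocks, x + nx, y + ny, z + nz):
                        continue  # hidden behind a solid neighbour: skip all four vertices
                    start = len(vertices)
                    for (cx, cy, cz), uv in zip(corners, FACE_UVS):
                        vertices.append((x + cx, y + cy, z + cz))
                        normals.append(normal)
                        uvs.append(uv)
                    # Two triangles per square face.
                    triangles += [start, start + 1, start + 2, start, start + 2, start + 3]
    return vertices, normals, uvs, triangles
```

A lone block produces 6 faces (24 vertices, 36 indices); two touching blocks produce 10 faces instead of 12, because the shared faces are culled.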

Faces beyond the field of view are disregarded. Further culling is accomplished by splitting the mesh into one piece per side so that any sides facing away from the camera are disabled. But besides reducing the vertex count, we could also optimize vertices themselves.
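The per-side trick can be sketched as a visibility test. This assumes each chunk is an axis-aligned box from `chunk_min` to `chunk_max` and that its mesh is split into six sub-meshes labeled `+x`, `-x`, and so on (hypothetical names). A face pointing toward +x can only be seen from a camera whose x coordinate is greater than that face's plane, so the whole `+x` sub-mesh can be skipped when the camera is not beyond the chunk's minimum x. Frustum culling itself is a separate test and is not shown.

```python
def visible_sides(chunk_min, chunk_max, camera):
    """Return the labels of the side sub-meshes that could face the camera.

    Conservative: a side is kept if at least one of its face planes might be in
    front of the camera, so nothing visible is ever dropped.
    """
    names = "xyz"
    sides = set()
    for axis in range(3):
        # +axis faces lie on planes inside [chunk_min, chunk_max] and face the camera
        # only if the camera is past the plane in the + direction.
        if camera[axis] > chunk_min[axis]:
            sides.add("+" + names[axis])
        # -axis faces need the camera on the - side of their plane.
        if camera[axis] < chunk_max[axis]:
            sides.add("-" + names[axis])
    return sides
```

With the camera inside a chunk all six sides stay enabled; with the camera far along +x, the `-x` sub-mesh points away and is disabled.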

The default vertex consumes five thermodynamic entropies of the sun's worth of unused data. These are eliminated by customizing the mesh even further. And since this is a block game, vertex positions tend to be whole numbers, meaning they can be stored in the smallest data type: bytes.
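A rough illustration of the byte-packing idea using Python's `struct` module. The layout (one unsigned byte each for x, y, z and a normal index from 0 to 5) is an assumption, and it only works while in-chunk coordinates stay within 0 to 255. The 32-byte comparison layout assumes an unpacked vertex with float32 position, normal and UV; it is not a measurement of Unity's default vertex.

```python
import struct

# Assumed compact layout: x, y, z, normal index, each an unsigned byte (4 bytes total).
PACKED_VERTEX = struct.Struct("<4B")
# For comparison: float32 position (3) + normal (3) + UV (2) = 8 floats.
FLOAT_VERTEX = struct.Struct("<8f")


def pack_vertex(x, y, z, normal_index):
    """Pack one vertex; raises struct.error if any value is outside 0..255."""
    return PACKED_VERTEX.pack(x, y, z, normal_index)


def unpack_vertex(data):
    """Inverse of pack_vertex: returns (x, y, z, normal_index)."""
    return PACKED_VERTEX.unpack(data)


def pack_mesh(vertices):
    """Concatenate packed (x, y, z, normal_index) tuples into one buffer."""
    return b"".join(pack_vertex(*v) for v in vertices)
```

Because every block face points along one of six axis directions, a single byte index can stand in for a three-float normal, with the shader looking the direction up; under these assumptions each vertex shrinks from 32 bytes to 4.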

Now I could effectuate hundreds of chunks without causing an FPS singularity. But instead of using Minecraft's chunk system, I incorporated cubic chunks, meaning the world is infinitely explorable in all dimensions. Therefore, the height limit is non-existent.
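Cubic chunks mostly come down to treating y exactly like x and z when mapping a block to its chunk. A minimal sketch, assuming a hypothetical 32-block chunk size and chunks kept in a dictionary keyed by `(cx, cy, cz)`, so no fixed array caps the world's height:

```python
CHUNK_SIZE = 32  # hypothetical; the video does not say


def world_to_chunk(x, y, z):
    """Chunk coordinate containing block (x, y, z); y is handled like x and z."""
    # Python's // floors, so block -1 lands in chunk -1 rather than chunk 0.
    return (x // CHUNK_SIZE, y // CHUNK_SIZE, z // CHUNK_SIZE)


def world_to_local(x, y, z):
    """Position of the block inside its chunk, each component in 0..CHUNK_SIZE-1."""
    return (x % CHUNK_SIZE, y % CHUNK_SIZE, z % CHUNK_SIZE)


def chunks_around(center, radius):
    """Every chunk coordinate in a cube around `center`, including above and below it."""
    cx, cy, cz = center
    r = range(-radius, radius + 1)
    return [(cx + dx, cy + dy, cz + dz) for dx in r for dy in r for dz in r]
```

In C#, `/` on integers truncates toward zero, so the same mapping there needs explicit flooring for negative coordinates.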

But when I continuously spawned new chunks around the camera, I was jump scared by copious lag spikes. It appears that while chunk rendering was optimized, chunk generation was abysmal. So it was time to commence profiling.

This involves spamming stopwatches everywhere to time all operations and locate the biggest time consumers. But no matter what optimizations I used, the lag spikes prevailed. The limit now was the language itself.
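The stopwatch approach boils down to a small timing helper. This sketch uses Python's `time.perf_counter`; in a Unity project the equivalent would be `System.Diagnostics.Stopwatch` or the built-in Profiler. The labels are hypothetical.

```python
import time
from collections import defaultdict
from contextlib import contextmanager

# Accumulated seconds per label across all timed blocks.
timings = defaultdict(float)


@contextmanager
def stopwatch(label):
    """Time the enclosed block and add the elapsed seconds to timings[label]."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[label] += time.perf_counter() - start


def report():
    """Labels sorted from most to least total time: the biggest time consumers first."""
    return sorted(timings.items(), key=lambda item: item[1], reverse=True)
```

Wrapping each stage, e.g. `with stopwatch("noise"): ...` and `with stopwatch("meshing"): ...`, and then reading `report()` shows where generation time actually goes.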

C#, a memory-safe, compiled programming language that delivers high-level simplicity, employs numerous hidden systems to ensure the program functions safely. This causes overhead, which is essentially lag from behind the scenes.

This could be solved by abusing the Burst compiler. Burst creates highly efficient programs by disabling safety measures, meaning more responsibility falls onto the programmer, and I was punished with icosahectillions of massive memory leaks and unexplainable crashes. I rewrote half of the entire project to satisfy the Burst compiler overlords.

In return, chunk generation and rendering became 10 times faster. Both were now imperceptible to the human eye. But how does this perform on much higher scales?

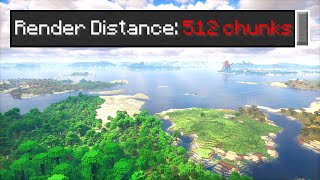

The current render distance is four chunks. The goal is to reach the typical visibility on a clear day: 8 km. So instead of writing faster code, I now needed smarter code.

Rather than generate stuff faster, I would generate less stuff. Small details such as single blocks are practically invisible beyond a certain distance. Therefore, farther chunks could get away with generating one block per 8 cubic meters.

Even farther chunks shall have progressively lower resolution, eventually reaching a single block per chunk. Low-detail chunks are then combined to reduce the total number of objects. All of this was implemented, but I was still unsatisfied.
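The lower-resolution chunks can be sketched as repeated 2×2×2 downsampling: each coarse block stands in for eight fine blocks, and halving again and again eventually leaves a single block per chunk. The merge rule used here (keep the most common solid block if at least half of the eight are solid) and the power-of-two grid size are assumptions for illustration, not the rule from the video.

```python
from collections import Counter


def downsample(grid):
    """Halve a cubic grid of block IDs (0 = air) along every axis."""
    n = len(grid) // 2
    out = [[[0] * n for _ in range(n)] for _ in range(n)]
    for x in range(n):
        for y in range(n):
            for z in range(n):
                cell = [grid[2 * x + dx][2 * y + dy][2 * z + dz]
                        for dx in (0, 1) for dy in (0, 1) for dz in (0, 1)]
                solid = [b for b in cell if b != 0]
                if len(solid) >= 4:  # assumed threshold: at least half of the 8 are solid
                    out[x][y][z] = Counter(solid).most_common(1)[0][0]
    return out


def lod_chain(grid):
    """The full-resolution grid followed by every coarser level down to 1x1x1."""
    levels = [grid]
    while len(levels[-1]) > 1:
        levels.append(downsample(levels[-1]))
    return levels
```

For a 4×4×4 chunk whose bottom half is stone, the chain has sizes 4, 2 and 1, and the final single block is still stone.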

We had to go even further with the fanciest optimization yet: greedy meshing. The idea is that adjacent squares can be combined into larger rectangles to reduce the triangle count, but the implementation was extreme-demon difficulty. Most tutorials were massive yapocalypses discussing abstract math and theoretical theories.
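The core of greedy meshing is a 2D merge over one slice of faces. A minimal sketch of the common approach, assuming the faces of one slice and one direction are already collected into a grid `mask` where 0 means no face and any other number is a block type (faces only merge with the same type, so textures still match). A full mesher runs this once per slice per face direction. This is a generic version, not the Reddit code or the author's algorithm.

```python
def greedy_mesh_2d(mask):
    """Merge equal, adjacent cells of a 2D face mask into rectangles.

    Returns (u, v, width, height, block_type) tuples, one per merged quad.
    """
    rows, cols = len(mask), len(mask[0])
    used = [[False] * cols for _ in range(rows)]
    quads = []
    for v in range(rows):
        for u in range(cols):
            kind = mask[v][u]
            if kind == 0 or used[v][u]:
                continue
            # Grow to the right while the same face type continues.
            width = 1
            while u + width < cols and mask[v][u + width] == kind and not used[v][u + width]:
                width += 1
            # Grow downward while the whole next row segment matches.
            height = 1
            while v + height < rows and all(
                mask[v + height][u + k] == kind and not used[v + height][u + k]
                for k in range(width)
            ):
                height += 1
            # Mark the covered cells so they are not emitted again.
            for dv in range(height):
                for du in range(width):
                    used[v + dv][u + du] = True
            quads.append((u, v, width, height, kind))
    return quads
```

A fully solid 3×3 slice turns from 9 quads (18 triangles) into a single quad (2 triangles).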

I found actual code on Reddit, of all places, which I used as inspiration for my own algorithm. After a few unexplainable disasters and bizarre catastrophes, the greedy mesher was now functional. By combining all optimizations so far, I could finally exhibit stupendous quantities of terrain.

But while I had technically achieved my goal, the sheer quantity of chunk generation at the start of the game caused abysmal lag. This is because chunk generation runs on the main thread by default, and since frame updates also occur on the main thread, the game freezes until all other operations are completed.

This is single threading and its consequences. But by abusing Unity's job system and C#'s task system, chunk generation was banished to a different thread. The results were sent back later to be rendered, and the main thread was cured of lag.
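The shape of moving generation off the main thread can be sketched with Python's `concurrent.futures`: the main loop submits chunk jobs, and each frame it only collects jobs that have already finished, so it never waits. `generate_chunk` and `ChunkLoader` are hypothetical names. CPython's global interpreter lock keeps CPU-heavy Python threads from running truly in parallel, so this illustrates the non-blocking pattern, not the speed-up Unity's job system provides.

```python
from concurrent.futures import ThreadPoolExecutor


def generate_chunk(coord):
    """Hypothetical stand-in for terrain generation and meshing of one chunk."""
    x, y, z = coord
    return [(x + y + z) % 2] * 8


class ChunkLoader:
    def __init__(self, workers=4):
        self.pool = ThreadPoolExecutor(max_workers=workers)
        self.pending = {}  # coord -> Future that may still be running
        self.loaded = {}   # coord -> finished chunk data

    def request(self, coord):
        """Queue a chunk for background generation; returns immediately."""
        if coord not in self.pending and coord not in self.loaded:
            self.pending[coord] = self.pool.submit(generate_chunk, coord)

    def update(self):
        """Call once per frame on the main thread; collects finished chunks without blocking."""
        finished = [coord for coord, future in self.pending.items() if future.done()]
        for coord in finished:
            # A Unity version would hand the finished data to the renderer here.
            self.loaded[coord] = self.pending.pop(coord).result()
        return len(finished)

    def shutdown(self):
        self.pool.shutdown(wait=True)
```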

If only there was actual terrain. Currently, the height at each point is determined by Unity's default Perlin noise function. But this was a poorly made function.

Therefore, I fabricated my own by plagiarizing code from Wikipedia's Perlin noise article, which has been expunged for absolutely no good reason, a symptom plaguing all Wikipedia articles. My Perlin noise could be improved by adding more layers of Perlin noise.
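For reference, a compact 2D Perlin noise in Python. It follows the structure of Ken Perlin's improved noise: a shuffled permutation table, the 6t^5 - 15t^4 + 10t^3 fade curve, and a gradient chosen by hashing each lattice corner. The seeded shuffle and the set of four diagonal gradients are assumptions; this is not the code from the Wikipedia article.

```python
import math
import random


def make_permutation(seed):
    """Shuffled 0..255 table, doubled so lookups like perm[i + 1] never overflow."""
    table = list(range(256))
    random.Random(seed).shuffle(table)
    return table + table


def fade(t):
    # 6t^5 - 15t^4 + 10t^3: first and second derivatives are zero at t = 0 and t = 1.
    return t * t * t * (t * (t * 6 - 15) + 10)


def lerp(a, b, t):
    return a + t * (b - a)


def grad(hash_value, x, y):
    """Dot product of (x, y) with one of four diagonal gradients picked by the hash."""
    h = hash_value & 3
    return (x if (h & 1) == 0 else -x) + (y if (h & 2) == 0 else -y)


def perlin(x, y, perm):
    """2D Perlin noise: 0 at every integer lattice point, magnitude at most 1."""
    xi, yi = math.floor(x) & 255, math.floor(y) & 255
    xf, yf = x - math.floor(x), y - math.floor(y)
    u, v = fade(xf), fade(yf)
    # Hash the four corners of the lattice cell.
    aa = perm[perm[xi] + yi]
    ab = perm[perm[xi] + yi + 1]
    ba = perm[perm[xi + 1] + yi]
    bb = perm[perm[xi + 1] + yi + 1]
    bottom = lerp(grad(aa, xf, yf), grad(ba, xf - 1, yf), u)
    top = lerp(grad(ab, xf, yf - 1), grad(bb, xf - 1, yf - 1), u)
    return lerp(bottom, top, v)
```

The same seed always yields the same permutation table, so `perlin(x, z, make_permutation(seed))` returns the same terrain value for a given seed on every run.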

Each layer uses a higher scale, and the result is more rugged grasslands. But instead of making the height equal to the Perlin noise, it could be two to the power of the Perlin noise.
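The layering and the exponential height can be sketched independently of the noise function. This assumes `noise2d` is any 2D noise function with output roughly in [-1, 1] (Perlin noise, for instance), and it assumes values for the octave count, `lacunarity` (frequency multiplier per layer), `persistence` (amplitude multiplier per layer), `base_height` and `exponent_scale`. The video only says each layer has a higher scale and that the height becomes two to the power of the noise.

```python
def fractal_noise(noise2d, x, y, octaves=4, lacunarity=2.0, persistence=0.5):
    """Sum several layers of noise2d, each at higher frequency and lower amplitude.

    Divided by the total amplitude so the result stays in noise2d's range.
    """
    total, amplitude, frequency, norm = 0.0, 1.0, 1.0, 0.0
    for _ in range(octaves):
        total += amplitude * noise2d(x * frequency, y * frequency)
        norm += amplitude
        amplitude *= persistence
        frequency *= lacunarity
    return total / norm


def terrain_height(noise_value, base_height=16.0, exponent_scale=4.0):
    """Height = base * 2^(scale * noise): lowlands stay gentle, highlands get amplified."""
    return base_height * 2.0 ** (exponent_scale * noise_value)
```

With these assumed defaults, noise values of -1, 0 and 1 map to heights of 1, 16 and 256 blocks. The same 0.1 step in noise moves the ground by about one block in the lowlands but by tens of blocks near the peaks, which is what turns flat fields and mountain ranges into one landscape.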

This exponential function creates a mix of grasslands and mountain ranges on the distant skyline. This could be further improved by effectuating trees to obtain vast landscapes of forests stretching into the distance. However, this is still barely a game due to the disheartening lack of gaming.

And what better gaming to add than the mining part of Minecraft? This requires knowing which block is in front of me using only my facing direction and the world data. For this, I sought help in the ninth layer of the internet.

Forums. Programming forums are rather infamous for harboring internet micro-professionals, a close cousin of the internet micro-celebrity. But there is the occasional real answer. This individual produced several essays on finding which blocks overlap with a line of sight, until he tragically vanished.

So I continued this theory to assemble my own pseudocode, a helpful stepping stone for visualizing ideas before writing the real algorithm. Now that mining and placing were invented, the trifecta was nearly complete. I only needed a game now.
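A standard answer to the line-of-sight problem is the Amanatides and Woo voxel traversal, which steps from block to block along the ray in exactly the order the ray enters them. The sketch below is that textbook algorithm, not necessarily the forum poster's or the author's version. It assumes a normalized `direction` (so `t` is a distance in blocks) and a caller-supplied `is_solid` function, and it returns the hit block together with the block the ray occupied just before, which is where a placed block would go.

```python
import math


def raycast_blocks(origin, direction, max_distance, is_solid):
    """Amanatides & Woo traversal: visit blocks along the ray in order.

    Returns (hit_block, previous_block) for the first solid block within
    max_distance, or None. previous_block is None if the ray starts inside a solid block.
    """
    block = [math.floor(c) for c in origin]
    step = [0, 0, 0]
    t_max = [math.inf] * 3    # distance along the ray to the next block boundary on each axis
    t_delta = [math.inf] * 3  # distance along the ray needed to cross one whole block on each axis
    for i in range(3):
        if direction[i] > 0:
            step[i] = 1
            t_max[i] = (block[i] + 1 - origin[i]) / direction[i]
            t_delta[i] = 1 / direction[i]
        elif direction[i] < 0:
            step[i] = -1
            t_max[i] = (origin[i] - block[i]) / -direction[i]
            t_delta[i] = 1 / -direction[i]
    previous = None
    t = 0.0
    while t <= max_distance:
        current = tuple(block)
        if is_solid(current):
            return current, previous
        previous = current
        # Step into the neighbouring block whose boundary the ray reaches first.
        axis = t_max.index(min(t_max))
        t = t_max[axis]
        block[axis] += step[axis]
        t_max[axis] += t_delta[axis]
    return None
```

Mining removes the returned hit block; placing fills the returned previous block.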

Now introducing this 1.152 cubic meter convex polyhedron game object. Player movement was easily effectuated.

But adding gravity caused disastrous consequences. I needed collision detection between players and blocks, which was accomplished with the same block detection methods used for mining. But after some contemplation, I realized the conspiracy theories linking Factorio and software engineering were factual.
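The video reuses the mining raycasts for collision. A common alternative, swapped in here purely for illustration, is testing the player's bounding box against every block it overlaps and resolving movement one axis at a time. The 0.8 × 1.8 × 0.8 box is an assumption that happens to match the 1.152 cubic meter volume mentioned above, and `is_solid` is supplied by the caller.

```python
import math

# Assumed player box (width, height, depth): 0.8 * 1.8 * 0.8 = 1.152 cubic metres.
PLAYER_SIZE = (0.8, 1.8, 0.8)


def box_hits_blocks(corner, is_solid):
    """True if the player box whose minimum corner is `corner` overlaps any solid block."""
    lo = [math.floor(corner[i]) for i in range(3)]
    # ceil gives an exclusive upper bound, so merely touching a block's face is not a hit.
    hi = [math.ceil(corner[i] + PLAYER_SIZE[i]) for i in range(3)]
    for x in range(lo[0], hi[0]):
        for y in range(lo[1], hi[1]):
            for z in range(lo[2], hi[2]):
                if is_solid((x, y, z)):
                    return True
    return False


def move(corner, velocity, is_solid):
    """Apply one step of velocity, axis by axis; a blocked axis has its velocity zeroed."""
    corner, velocity = list(corner), list(velocity)
    for axis in range(3):
        trial = corner.copy()
        trial[axis] += velocity[axis]
        if box_hits_blocks(trial, is_solid):
            velocity[axis] = 0.0  # e.g. landing on the ground stops the fall
        else:
            corner = trial
    return corner, velocity
```

Resolving axes separately is what lets the player slide along a wall instead of sticking to it. As a simplification, a blocked step is discarded rather than clamped flush against the block, so a fast-falling player can stop slightly above the ground.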

But now that I had a gamer, this raises the question of whether it was possible to have multiple gamers using multiplayer. The server didn't need to be anything fancy. My server is simply a constantly running program that sends and receives data to keep all players synchronized.

However, only ones and zeros can be sent through the network, meaning the server must convert outgoing game information into bytes, a process known as serialization. Clients then deserialize incoming data to update their game. Syncing player positions was rather easy, but attempting to serialize the entire world would consume 13 Samsung T7 portable external solid-state drives' worth of data.
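A minimal serialization sketch with Python's `struct` module, assuming a hypothetical message layout: a 1-byte message type, a 2-byte player ID and three 32-bit floats for the position, little-endian, 15 bytes in total. The message type constant is made up for illustration.

```python
import struct

PLAYER_POSITION = 1  # hypothetical message type ID
# Little-endian, no padding: type (uint8), player id (uint16), x, y, z (float32) = 15 bytes.
POSITION_FORMAT = struct.Struct("<BHfff")


def serialize_position(player_id, x, y, z):
    """Turn a player position update into bytes for the network."""
    return POSITION_FORMAT.pack(PLAYER_POSITION, player_id, x, y, z)


def deserialize_position(data):
    """Turn received bytes back into (player_id, (x, y, z))."""
    msg_type, player_id, x, y, z = POSITION_FORMAT.unpack(data)
    if msg_type != PLAYER_POSITION:
        raise ValueError(f"unexpected message type {msg_type}")
    return player_id, (x, y, z)
```

Positions travel as float32, so values that are not exactly representable come back rounded to about seven significant digits.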

On the other hand, games like No Man's Sky accommodate 18 quintillion entire planets while storing very little data on the server by only saving changes. This inspired my proposition: the same world seed makes the same world every time.

By only sending the seed, players could generate the rest of the world on their own. Chunks with changes are the only other things sent. This allowed multiplayer to function with a ridiculous render distance.
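The seed-plus-changes scheme can be sketched as follows. It assumes a deterministic `generate_chunk(seed, coord)` (here a hypothetical stand-in seeded from a string, so it produces identical output on every machine) and edits stored per chunk as block index to block ID. The server keeps and sends only the seed and the edited entries; each client regenerates everything else locally. `Server` and `Client` are illustrative names.

```python
import random


def generate_chunk(seed, coord):
    """Hypothetical deterministic generator: same seed and coord give the same 8 blocks."""
    rng = random.Random(f"{seed}:{coord}")
    return [rng.choice((0, 1)) for _ in range(8)]


class Server:
    def __init__(self, seed):
        self.seed = seed
        self.changes = {}  # coord -> {block index: block ID}; untouched chunks are never stored

    def set_block(self, coord, index, block):
        self.changes.setdefault(coord, {})[index] = block

    def join_payload(self):
        """Everything a new client needs: the seed plus the edited chunks only."""
        return self.seed, {coord: dict(edits) for coord, edits in self.changes.items()}


class Client:
    def __init__(self, payload):
        self.seed, self.changes = payload

    def load_chunk(self, coord):
        """Regenerate the chunk locally, then apply any edits the server sent."""
        blocks = generate_chunk(self.seed, coord)
        for index, block in self.changes.get(coord, {}).items():
            blocks[index] = block
        return blocks
```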

But the one thing I had neglected was rendering itself. This game possessed the hideous asset-flip aesthetic, and I was clueless as to how so-called AAA games achieve their graphics. That was until some random Discordian rambled about ambient occlusion.

A quick search revealed that it adds shading to edges. Adding this required configuring something called the render pipeline, which is essentially the five-tetrillion-step process responsible for converting game objects into pixels on your screen. But it was also incredibly configurable: special effects could be injected near the end, including ambient occlusion.

The result is a surprisingly considerable decrease in awfulness. Another render pipeline feature to add was the god ray effect, technically known as volumetric lighting. While most volumetric lighting effects use expensive ray marching calculus blah blah blah, this simple 50-step tutorial uses a shortcut.

Simply create a white image, obscure it with every object visible from the camera, and apply a radial blur effect. The final texture is then fed into the pipeline to be combined with the screen. But to control how objects themselves were rendered, I needed shaders.
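The shortcut described above is a screen-space trick: blur the occlusion mask toward the sun's position on screen. A CPU sketch in Python, assuming `mask` is a 2D list where 1.0 means open sky and 0.0 means an object is in the way, and `center` is the sun's pixel position inside the image; the sample count and `strength` (how far toward the sun each pixel samples) are assumed values. A real version runs per pixel in a shader.

```python
def radial_blur(mask, center, samples=16, strength=0.9):
    """Blur a light/occlusion mask toward `center` (the sun's pixel position).

    Each output pixel averages `samples` mask values taken on the segment from the
    pixel toward the sun, covering the fraction `strength` of the way, so objects
    that block the sun cast dark streaks pointing away from it.
    """
    height, width = len(mask), len(mask[0])
    cx, cy = center
    out = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            total = 0.0
            for i in range(samples):
                t = strength * i / samples  # 0 at the pixel itself, approaching `strength`
                sx = round(x + (cx - x) * t)
                sy = round(y + (cy - y) * t)
                total += mask[sy][sx]
            out[y][x] = total / samples
    return out
```

The resulting texture is what gets combined with the rendered screen, brightening pixels with a clear path to the sun.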

These were easily created with Shader Graph, a Scratch-style version of shader programming. Nearly everything was configurable now. The shader is then assigned to a material, which is applied to whatever objects I pleased.

Shaders were needed to obtain realistic water, and I found this pro god-hacker tutorial covering 59 tetrillion steps per second to create this overly complicated shader. After applying it, shallow water refracts whatever is below, while deeper water reflects whatever is above.

Speaking of what is above, shaders could be applied to the sky itself. With enough trial and error, the real-life atmosphere was recreated with various mathematical functions. Values in the shader could then be updated constantly from outside code to simulate a day-night cycle.

Fog is then applied to fade further terrain into the atmosphere. The final missing feature was shadows, but the default shadows were inadequate due to their minuscule maximum distance. This can be circumvented with several shadow cascades.
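Where the cascade boundaries go is a design choice. One widely used option is the "practical split scheme" from parallel-split shadow maps, a blend of evenly spaced and logarithmically spaced distances. The sketch assumes a 0.1 m near plane, the 8 km goal as the far distance, and a 50/50 blend. Because each cascade typically renders into a shadow map of the same size, farther cascades spread those texels over more ground, which is where their lower resolution comes from.

```python
def cascade_splits(near, far, count, blend=0.5):
    """Far distance of each shadow cascade.

    blend=1 gives fully logarithmic splits (more detail near the camera),
    blend=0 gives evenly spaced splits.
    """
    splits = []
    for i in range(1, count + 1):
        p = i / count
        log_split = near * (far / near) ** p
        uniform_split = near + (far - near) * p
        splits.append(blend * log_split + (1 - blend) * uniform_split)
    return splits
```

For example, `cascade_splits(0.1, 8000.0, 4)` gives four increasing distances ending at 8000, with the first ring much tighter than a quarter of the range.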

Each cascade is a ring of increasingly distant shadows, each having lower resolution to help performance. The graphics were now comparable to Minecraft with top-tier shaders while maintaining tolerable performance despite my mediocre hardware. But you may have noticed this profoundly discordant graphical predicament: the jaggedness of everything, also known as aliasing.

The solution was surprisingly easy: left-clicking this option to enable temporal anti-aliasing. It sounds less fancy than the other options, but somehow has higher quality for reasons I cannot disclose.
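Temporal anti-aliasing is commonly described as two pieces: nudging the camera by a different sub-pixel offset every frame (often taken from a Halton sequence) and blending each new frame into an accumulated history, so jagged edges average out over time. A minimal sketch of just those two pieces, with an assumed 8-frame jitter cycle and 0.1 blend factor; real TAA also reprojects the history with motion vectors and clamps it to limit ghosting, which is omitted here.

```python
def halton(index, base):
    """index-th element (index >= 1) of the Halton low-discrepancy sequence, in [0, 1)."""
    f, result = 1.0, 0.0
    while index > 0:
        f /= base
        result += f * (index % base)
        index //= base
    return result


def jitter(frame, cycle=8):
    """Sub-pixel camera offset for this frame, each component in [-0.5, 0.5)."""
    i = frame % cycle + 1
    return halton(i, 2) - 0.5, halton(i, 3) - 0.5


def accumulate(history, current, alpha=0.1):
    """Exponential moving average of frames: new = history + alpha * (current - history)."""
    return [[h + alpha * (c - h) for h, c in zip(h_row, c_row)]
            for h_row, c_row in zip(history, current)]
```

Because the jitter samples each pixel at slightly different positions, a pixel half-covered by an edge converges toward a blend of both colors instead of snapping to one of them.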

Finally, this game meets my graphical expectations, but this video has only covered approximately 25% of the actual effort spent on this game. Development shall probably continue, but do not expect a public release in case this catastrophically fails. So goodbye.