Previous generations of the model have been weak reasoners, but they do reason. In the same way that hallucination was an existential threat to this technology ("No, we'll never be able to trust this stuff"), there are hundreds of millions of people using this tech now and they trust it; it's actually useful for them. We're making very good progress on the hallucination problem. I think we'll make very good progress this year and next on reasoning.

Here we are again, another episode of MLST, today with Aidan Gomez, the CEO of Cohere. Now, I interviewed Aidan in London a couple of weeks ago, just after their build event. After Aidan did his presentation, I sat him down for an hour and gave him a grilling, and he was such a good sport, so transparent and authentic. This is the difference with Cohere: they say it like it is. There are no digital gods, there's no superintelligence; they're just a bunch of folks solving real-world business problems. Their models are incredibly good for retrieval-augmented generation and for multilingual use, but they also have some serious challenges that they need to overcome.

We spoke about the AI risk discussion, where language models take away our agency, and about how he's dealing with policy. On the one hand, he's talking with governments, trying to get them to allow startups to be more competitive and to innovate. By the same token, he's also very concerned about some of the societal risks of AI. That's quite an interesting burden and juxtaposition to hold in his mind. We spoke a lot about the company culture at Cohere. Now, I'm very impressed with Cohere: all of the people I've spoken to have been very smart and just really nice people. How has he cultivated that culture? He was very frank and transparent about some of the mistakes he made early on as a CEO. So yeah, plenty to get your teeth into. I hope you enjoy the conversation.

For Cohere, I think we're a little bit different from some of the other companies in the space, in the sense that we're not really here to build AI. What we're here to do is create value for the world. The way we think we can do that is by putting this tech into the hands of enterprises so that they can integrate it into their products and augment their workforce with it. So it's all about driving value, really putting this technology into the hands of more people, and driving productivity for humanity.

Aidan, welcome to MLST. It's an absolute honor to have you on.

Thank you so much, appreciate it.

There's a bit of a last-mile problem with large language models. You folks have created this incredible general technology, but when enterprises implement it they have a whole bunch of, you know, legislative constraints, security constraints...

Yeah, yeah. I think there are loads of barriers to access: privacy, policy, just the familiarity of the teams with the tech. It's brand new, so they haven't built with this technology before, and they're still getting up to speed with the opportunity space, what they can do with it. That being said, people are so excited about AI and its opportunity that the motivation and the will are there to overcome a lot of these hurdles. So we're trying to help with that as much as we can, whether it's our LLMU education course to help general developers get up to speed on how to build with this stuff, or engaging at the policy level to make sure that we have sensible policy and not overregulation, or regulation that hurts startups or encumbers industry in adopting it. We're trying to pull the levers that we can to help accelerate adoption and make sure it gets adopted in the right way. But yeah, the past two years have definitely been a push. There's a lot of stuff slowing down adoption that I would love to see eased. I think the tools need to get better: easier to use, more intuitive, more robust. You know, prompt engineering is still a thing. It shouldn't be, right? It shouldn't matter how you phrase something specifically; the model should be smart enough to generally understand your intent and take action on your behalf reliably. So even at the technological layer, we as model builders have a lot to do to bring the barriers down. But I'm optimistic; the pace of progress is fantastic.

Yes. On that prompt brittleness thing, I wonder whether you think we are on a path to... I mean, in an ideal world the model and the application would be completely decoupled, so you could swap the model out. When you folks bring out a new model, we could just swap it in and nothing breaks. At the moment that's not the case, but as the models become increasingly better, do you think they will be robust in that way?

They should be, right? There will always be quirks to different models, because we're all training on, we hope, different data that focuses on different aspects or elicits different behavior in the model. So there will always be quirks to the behavior of models, the personalities of models, what they're good and bad at. But in general, in terms of following an instruction, we should be quite robust to that universally. The ideal is that you can just take a prompt, drop it into any system, see which one performs best, and then move forward with that one. In reality, the status quo is that a prompt that works on one system fundamentally does not work on another, so there's this rift, these walls between systems, that makes them very, very different. Hopefully that'll start to lift. There's a lot of effort going into data augmentation that makes these models more robust to changes in prompt space, and we're doing a lot of work on that. It's driven a lot by synthetic data: doing search to find the prompts, or the augmentations (the changes to prompts), that break the model, and then training to fix that break. So I'm optimistic that sort of brittleness is going to go away.

Interesting. So, finding problems with the model and then robustifying it. In doing so, how does that change the characteristics of the model? Does it make it less creative or less capable in some sense? Do you have a feel for the trade-offs?

Yeah, I don't think so. I think that's orthogonal: the process of making it more robust is orthogonal to creativity. There are aspects of the post-training procedure that do reduce creativity, or, as some people like to say, lobotomize the model. So it's definitely a problem; it's something that we watch for and try to prevent. I would say that one of the most disappointing aspects of the current state of building these models is that a lot of people train on synthetic data from one source, right, just from the GPT models. So all of the models being created sort of speak the same; they kind of have the same personality. It leads to this collapse, with a lot of different models looking and feeling the same, and that makes them boring, because you have the same shortcoming across models. If instead you have a diverse set of models that fail in different places, you can much better address the preferences of many more people. I've noticed, with synthetic data taking off, a total collapse in the different types of behavior models exhibit. At Cohere, our customers are enterprises. That's who we sell to; it's not consumers, it's not anything other than enterprises who want to adopt this tech, and they're very, very sensitive to what data went into the model. So we exclude other model providers' data very aggressively. Of course some will slip in as we're scraping the web, but we make a very considered effort to avoid other models' outputs. So when we released Command R and R+, one of the things I kept reading on Reddit and Twitter was: it feels different, something feels special about this model. I don't think that's any magic at Cohere, other than the fact that we didn't do what the other guys are doing, which is training on the model outputs of OpenAI.

I agree with you. When people see ChatGPT or whatever for the first time, they're blown away by it, but there are motifs that come up again and again and again: "unraveling the mysteries", you know, "delving into the intricate complexities of" blah blah blah. When you start to see these patterns and constructions, you just start to think, I don't like this very much, because you start to see through it. It's a little bit like when you start to see through someone: they're not interesting anymore. I haven't seen that with Cohere, but I have seen it with many of the other models. Now, my intuition was always (and I haven't really formed this very well) that maybe it could come from the kind of datasets we're using, or maybe it could come from the preference fine-tuning. But are you saying that that monolithic effect is because they're kind of eating each other's poop?

Yeah, yeah. It's some sort of human centipede effect, I think. They're training on the outputs of a single model, so it's all collapsing into that model's output distribution. If that output distribution has quirks, like saying the word "delve" a lot, then it's just going to pop up all over the place, and people will take it for granted: "Oh, I guess LLMs just behave like this." But they don't have to.

They don't have to. It's interesting how subjective creativity is as well, because a lot of people thought it was creative a couple of years ago, and then when you see it everywhere, it's not creative anymore. So it needs to be novel to be creative. But you folks have just released the Command R series of models, and you've blown everyone away. Tell me about them, and if you wouldn't mind, also: why did it take Cohere a while to catch up and get state-of-the-art performance?

Yeah, we spent a lot of 2023 lagging; I think that is accurate to say. What we were doing was reorganizing internally. We were rebuilding the company, rebuilding the modeling team and the tech strategy, and preparing for the runs that led to Command R. It was clear to us that the process we had used to build the first Command, and the generations before that, wasn't working; it wasn't going to scale. So we rethought the entire pipeline, and it took us a while to rebuild things and run the experiments we needed in order to decide what this new model-building engine should look like. And then it takes time to do the runs. We spent a lot of last year doing that, but I think the results speak for themselves. Also, Command R and R+ are just the first step in a series of new models that we want to produce. We're very excited to lean into specific capabilities. The general language model improvements are super important. They're crucial, right? The models have to get smarter, they have to get more capable, and we'll continue to press in that direction. But we care about narrowing our focus a bit more. With the Command R series we focused on RAG and tool use, and for 2024 I think you're going to see a continuation and extension of that focus: really making these models robust at the key capabilities that enterprises care about, that will drive productivity and automate really sophisticated processes that today, as humanity knows it, are only the domain of humans. We really want to go after that and give our models the ability to help in those spaces.

Is it fair to say... I don't know whether you feel that general large language models are saturating; maybe you could comment on that first. But if you do think so, does that suggest there's a move towards specialization of the models?

I don't think they're saturating. I think they're getting so good that it's hard to see the incremental improvement.

um but increment that that incremental Improvement is extremely important so once it's once the models are smarter than you it's really hard to in a domain right like in medicine like coher model it knows more than I do about medicine For sure like just absolutely and so I can't really effectively assess whether we're improving in that Dimension yeah I can't tell anyone it's smarter than me I trust it more than I trust myself to you know diagnose symptoms or you know process medical data and so I'm not equipped to do that instead what we need

to do is create data sets or go out and find people who are you know still better than the model at those domains and they can tell me whether it's Improving um but for for us like the general population at some point we kind of stop seeing Improvement between model versions it's harder to feel um and you need to really zoom in to a place that you're an expert and that you know previous generations failed to see the progress um I I think about it sometimes as like you're painting in a a canvas of knowledge

and at some point the the holes in the canvas become so small you Have to take it a microscope to actually see it and paint it in we're sort of in that part of the space for these models and so Improvement becomes much harder for us the model Builders um but it's much harder to feel and see for users who aren't um diving in very close to analyze performance I don't think it's saturating I think it's still making we're still making very significant progress I do think the past 18 months maybe a little bit less

than 18 Months yeah the past 18 months 12 months we've been compressing so we built these massive giant multi-trillion parameter models which were just extraordinary artifacts of intelligence and and capability and we realized it's impractical you can't actually put this thing into production right like it takes 60 A1 100s to Ser like it just we could not Productionize this the economics don't work out and so then we spent the year compressing those massive models down into much smaller form factors um there's very likely going to be a series of reexpansion and and scale um both

on the both on the model scale in terms of parameters but also data scale uh and data quality uh and that's being supported by much better synthetic data methods that find much more useful Synthetic data um that are quite compelling at search to discover to automatically discover weak points of models and then close those gaps um so I think we've over the past year gotten very good at making models more efficient and we've created new methods that let us sort of just like plug in compute and data and have the model continuously improve you've used

words like smart and capabilities and if you if you think of Smart as knowledge I completely agree with you I I think knowledge is a living thing we are all improvising and we are generating new knowledge all the time and it just increases exponentially and there's no reason why language models can't become more and more and more knowledgeable because we just acquire more and more data and in in that sense a medical doctor is smarter than me in that domain because they have the knowledge that that I don't have but Some people could say that

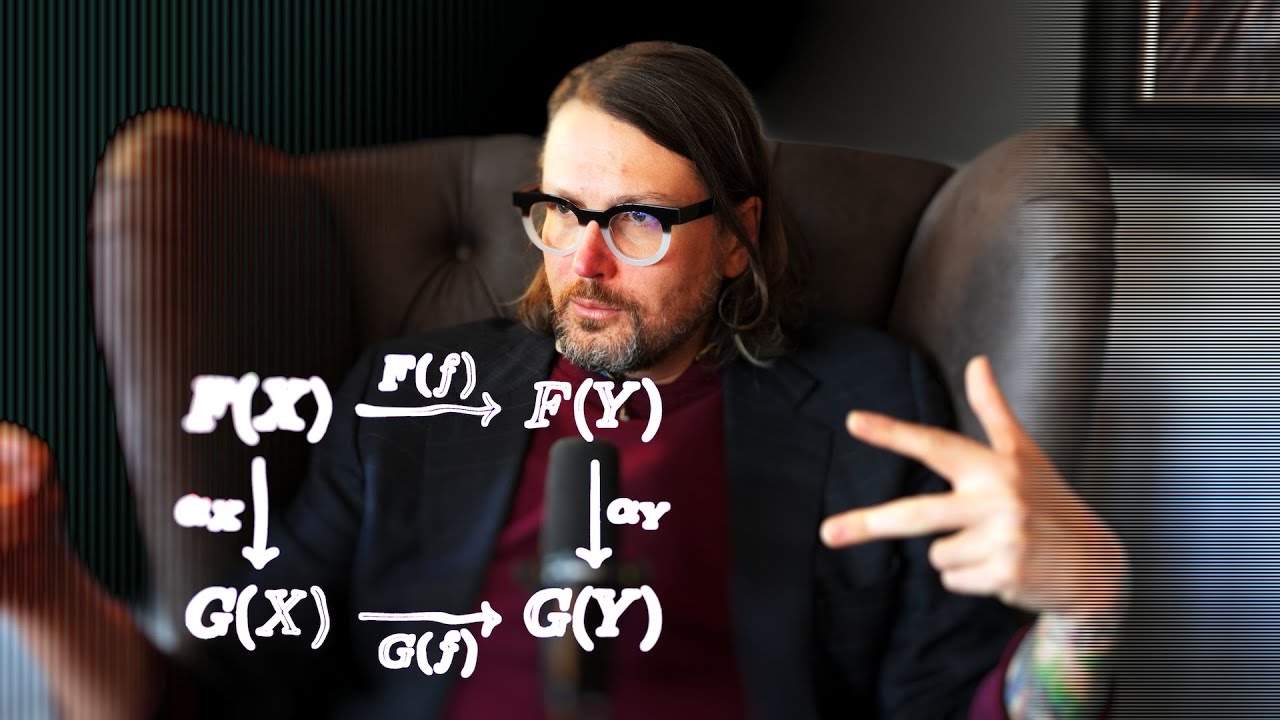

intelligence is something a little bit more abstract than that you know it might be the ability to build models um it might be the ability to reason it might be the ability to plan and this is when we get into the kind of the AI um thing so so how do you Dem those things are you saying that the models are becoming more knowledgeable but they're not necessarily becoming more intelligent like we are I think Reasoning um reasoning is crucial to intelligence I think that these models can reason and that's a controversial claim I think

a lot of people would debate that uh a lot of people would make the claim that the architectures we're using or the methods we're using don't support um that sort of behavior I think that previous generations of the model have been weak reasoners but they do Reason and it's not a discreet does it have this capability or not it's a Continuum of how robust the reasoning engine inside these models is we're getting much better methods for improving reasoning generally we're getting much better methods of eliciting that behavior from the models and teaching them how to

do it um and apply it to many different domains whether it's math whether it's decision making task breaking down tasks planning how to Execute them um th those were key missing capabilities that were quite weak in previous generations of models which are now starting to emerge in a significantly more robust fashion and so in the same way that hallucination was it used to be a existential threat to this technology no we'll never be able to trust this stuff there are hundreds of millions of people using this Tech now and they trust it It's actually useful

for them they use it because it's useful to their job we're making very good progress on the hallucination problem I think we'll make very good progress this year and next on reasoning I think it's just a a capability a skill uh that the model needs to be taught and we're we're building the methods and data and techniques to to support teaching teaching these models yeah it is interesting how you can kind Of break intelligence down to all of these things and some you might argue are missing now like planning um creativity is an interesting one

um agency is quite an interesting one and presumably as as a thing has more understanding and it has more autonomy it could in in principle develop agency at some point in the future but you you think of these things as skills could you give any hints to how you've moved the needle on this so the knowledge Thing it seems to me that you would just get more data and curate and refine the data but could you give any hints on how you've made it better at reasoning for example yeah with knowledge I I think it's

about augmentation with Rag and better modeling techniques cleaner data sets so that you remember the the right stuff and and don't retain the less relevant stuff um those are the techniques that move the needle there with reasoning there are like circuits Uh that you really need to bake in to the model you need to show it in demonstrate it how to break down tasks at a very low level um think through them and that's stuff that's not actually that abundant on the internet so it doesn't come for free using our previous techniques of just scrape

the web and and train the model and scale up people don't usually write out their inner monologue right they usually write the Results of that inner monologue and so it's something that the model has been missing a a view into I think synthetic data will go a long way in closing that Gap and supporting building multi-trillion uh token data sets that actually demonstrate how to have an inner monologue how to reason through things How to Think Through problems make mistakes identify mistakes correct them and retry that sort of long thought process data is actually Extremely

scarce it's very rare it's very rare it's really hard to find you can find it on the internet of course there's stuff like um um forums where people help each other with homework and sort of break down this is how I arrived at this answer um but when you look at the internet in totality those are like pin Pricks on the surface of this thing and so pulling that forward emphasizing it augmenting it producing more of that data should be a key priority if you're Going to actually teach these models to to exhibit that behavior
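To make the idea of synthetic "inner monologue" data concrete, here is a minimal sketch. It assumes a toy domain, multi-digit addition, where every step can be checked programmatically. It is not Cohere's pipeline, and the function names (`column_steps`, `make_trace`, `make_dataset`) and the trace format are hypothetical. Each trace writes out column-by-column working and can optionally make a deliberate slip. It then checks its result by subtraction, notices the error, and redoes the work, mirroring the "make mistakes, identify mistakes, correct them, and retry" pattern Aidan describes.

```python
import random


def column_steps(a, b):
    """One tuple per column of a + b, least significant first:
    (position, digit_a, digit_b, carry_in, digit_out, carry_out)."""
    steps, carry, pos = [], 0, 0
    while a > 0 or b > 0 or carry > 0:
        da, db = a % 10, b % 10
        total = da + db + carry
        steps.append((pos, da, db, carry, total % 10, total // 10))
        carry = total // 10
        a, b, pos = a // 10, b // 10, pos + 1
    return steps or [(0, 0, 0, 0, 0, 0)]  # 0 + 0 still needs one column


def _work_columns(a, b, mistake_col, lines):
    """Append column-by-column working to `lines` and return the number written.
    If mistake_col is set, that column's digit is deliberately written wrong."""
    digits = []
    for pos, da, db, cin, dout, cout in column_steps(a, b):
        written = (dout + 1) % 10 if pos == mistake_col else dout
        lines.append(f"Column {pos}: {da} + {db} + carry {cin} = {da + db + cin}; "
                     f"write {written}, carry {cout}")
        digits.append(written)
    return int("".join(str(d) for d in reversed(digits)))


def make_trace(a, b, mistake_col=None):
    """Step-by-step trace for a + b that checks its own result and,
    if the check fails, says so and redoes the columns."""
    lines = [f"Problem: {a} + {b}"]
    result = _work_columns(a, b, mistake_col, lines)
    lines.append(f"Result: {result}")
    if result - b != a:  # self-check via the inverse operation
        lines.append(f"Check: {result} - {b} = {result - b}, not {a}. "
                     "I made a mistake; redo the columns.")
        result = _work_columns(a, b, None, lines)
        lines.append(f"Result: {result}")
    lines.append(f"Check: {result} - {b} = {a}. Correct.")
    return "\n".join(lines), result


def make_dataset(n, seed=0, mistake_rate=0.3):
    """Generate up to n traces. Roughly mistake_rate of them contain a slip that is
    caught and fixed, and only traces whose final answer verifies are kept."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        a, b = rng.randint(10, 9999), rng.randint(10, 9999)
        n_cols = len(column_steps(a, b))
        mistake_col = rng.randrange(n_cols) if rng.random() < mistake_rate else None
        trace, answer = make_trace(a, b, mistake_col)
        if answer == a + b:
            rows.append({"prompt": f"{a} + {b}", "trace": trace, "answer": answer})
    return rows


if __name__ == "__main__":
    text, _ = make_trace(27, 35, mistake_col=0)
    print(text)
```

Running it prints a trace that first writes `Result: 63`, catches the error with `Check: 63 - 35 = 28, not 27.`, and then redoes the columns to reach `Result: 62`. The design choice that matters is that the final answer is always verified against ground truth before a trace is kept. That is only possible because this toy domain is explicitly checkable.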

Is there a trade-off here? For example, we could use the Unreal Engine to generate lots of visual training data for a vision foundation model. Alternatively, we could have some kind of hybrid prediction architecture where we somehow encode naive physics into the architecture itself, so that rather than memorizing lots of generated data, we just build a hybrid architecture. Is that a trade-off you're thinking about, specifically on the video side of things, where physics is relevant?

I think that's, you know, a totally fine strategy. But a lot of the physics engines people have built are flawed, right? Video games still don't look like reality; they still don't behave like reality. So I think training off of that data will leave you in a really unsatisfying place; there's still some uncanny-valley weirdness to it. We have tons of actual video data of the real world, where physics is definitely implemented and represented completely accurately, so that should be our go-to source. I think trying to use simulators at this stage is the wrong approach. You should take as much data from the real world as you can and use that as a bootstrap to build synthetic data engines that help you iteratively improve. It's what happened in language as well, right? We didn't go to rules-based synthetic language generators to teach our language models the basic principles of language using the linguistic models we'd built. No, we threw all that away. We took actual language data from humans, trained on that, and then used the resulting models to improve iteratively, via experimentation. I think the same will be true in vision.

That's a really interesting point, actually, because the Sora model from OpenAI does look a bit weird. It looks like it's always flying, and it looks very game-engine-like. The language example is beautiful, but what about something like mathematics? Are there examples where, rather than perturbing or mutating what already exists, you might just start from first principles and rules?

Totally, yeah. Mathematics is so explicitly rule-driven and so explicitly verifiable that it's the perfect example for synthetic data generation. I think it's definitely one of the domains that will crack first. On top of that, there's code, right? You can completely synthetically generate code and verify it: does it run, and does it produce the outputs you want on a test set? When it's that explicit and verifiable, it's perfect for synthetic data generation. Just ideal.
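The "generate code, check it runs, check it produces the expected outputs on a test set" loop can be sketched in a few lines. This is a minimal illustration under some assumptions. Candidate programs arrive as source strings from some generator; here they are hard-coded stand-ins for model samples. Each task also comes with input/output test cases. The names `passes_tests` and `filter_candidates` are hypothetical. The sketch uses plain `exec` with no sandbox or timeout. A real pipeline would need both, because generated code is untrusted and may not terminate.

```python
def passes_tests(source, func_name, tests):
    """True if `source` defines `func_name` and it matches every
    (args, expected) pair in `tests`. Any exception counts as a failure."""
    namespace = {}
    try:
        exec(source, namespace)  # WARNING: no sandbox or timeout; illustration only
        fn = namespace[func_name]
        return all(fn(*args) == expected for args, expected in tests)
    except Exception:  # SyntaxError, missing function, runtime errors, ...
        return False


def filter_candidates(candidates, func_name, tests):
    """Keep only the candidate programs that run and produce the expected outputs."""
    return [src for src in candidates if passes_tests(src, func_name, tests)]


# Hypothetical stand-ins for sampled model outputs for the task "square a number".
CANDIDATES = [
    "def square(x):\n    return x * x\n",
    "def square(x):\n    return x ** 2\n",
    "def square(x):\n    return x * 2\n",  # runs, but gives wrong answers
    "def square(x):\n    return x *\n",    # does not even parse
]
TESTS = [((0,), 0), ((2,), 4), ((3,), 9), ((-5,), 25)]

if __name__ == "__main__":
    kept = filter_candidates(CANDIDATES, "square", TESTS)
    print(f"kept {len(kept)} of {len(CANDIDATES)} candidates")
```

This keeps the first two candidates. Note that the `x * 2` candidate passes the first two test cases and is rejected only by the third. The filter is only as strong as the test set it verifies against.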

This is kind of what I'm thinking about: with code, you can actually constrain it way more than language. So rather than using an existing self-attention transformer, you might want something that only works on trees or whatever, and maybe that would work better for that particular thing. But then I guess we'd have to have some kind of mixture of experts rather than a single model.

Yeah, I think that's actually a lot of the strategy behind the scenes. You'll likely have an MoE where one component, one of those experts, is an expert in code. It's very highly specialized to that, heavily upsampled on synthetic and real code data, math, etc., and that expert will act as a general reasoning engine that's very good at logic and those sorts of things. You might have a medical expert that has dramatic upsampling along that axis. I think that's a very effective path towards even more efficient models. If you're in the medical domain, or the math or code domain, you can then pull out that expert and use it independently. You don't need to keep around this huge monolithic model; you can just take out a subcomponent and deploy that. I think that architecture already exists.

Yeah, I wonder if you can talk to that a little bit, because I'm very excited about it. It now seems that maybe we could call what you just described an agentic distributed AI system, where the agents can pass messages to each other. One of them might be an expert in mathematics, one might be an expert in coding, and so on. But then you've got this problem: you send a message into the nexus, all of the models are passing messages to each other, and it's kind of unbounded in runtime. By contrast, one of the great things about a language model is that it does a fixed amount of compute per iteration: you put a prompt in and you get the answer straight back out. So does that unboundedness introduce problems?

I mean, a language model could just generate infinitely and never produce a stop token, and it would go on forever. So I think the problem already exists, and models are quite well behaved in that respect: if you train them to give up and say "I need to respond", they tend to. So I'm not too concerned about runaway processes that never end. That just wouldn't be useful, and it would be hugely computationally expensive as well. Models can produce stop tokens, and I think that even in a multi-agent scenario, discourse between agents will conclude itself in a reasonable amount of time.

Interesting. And I think even now, with your multi-step tool use, that's basically what you've done. You could in principle do that recursively, and you could constrain the computation graph so that there are no cycles and it comes back in a fixed amount of time.

Yeah, we terminate execution after some number of failed attempts, right? So it's easy to solve that way, though it's a little bit unsatisfying. Our multi-hop tool use right now is our very first pass; it's like version negative one. It's not that good at catching when it's made mistakes, and it's not that good at correcting its mistakes even when it's caught that it messed up. So I think we're still very early there, but those systems are going to start to become extremely robust and reliable, and I'm very excited for that.

Amazing. So I'm interested to know, in your own words... We were just talking about how you folks have got this forward engineering team. You're enterprise-focused, you're helping bridge the last-mile problem, and you're really embedding yourselves into large enterprises, which is an amazing differentiator. But other than that, there's always the question: lots of people say these models are just interchangeable, and at some point you're just playing the token game. I just wondered, what's your plan there?

I agree with that sentiment; models are way too similar. I think there's going to start to be differentiation between models, like I was talking about before with Command R and R+.

We're going to start really focusing on key capabilities. The general language model game has a lot of players, and it's pretty saturated. I think people are going to have to start branching out. I think consumer language models are going to separate from enterprise language models, and within enterprise there's going to be a lot of specialization into specific domains. So for Cohere, what I want to see us do in product space is push into more tailored capabilities for particular problems. We want to drive value for enterprises, and different enterprises operate in different spaces and have different needs. The tools that their models might need to use look very different from one another, and we want to make sure that we're serving each of those niches particularly well, or uniquely well. That will be our value proposition, differentiated from others. So that notion of specialization, or enhanced capability in particular domains, is something that we definitely want to explore at the product level and start to offer. Because, like you say, the dollar-per-token space, it's super... We're not going to stop that. It's important for the community, right? Folks need to build on top of this; they need access to good models at fair prices, and so we're going to continue to give that to the world. But we want to create differentiated value, and I think that's going to come from focusing on the actual problems that enterprises want to tackle and getting extremely, extremely good at them.

Interesting. On that, are you planning horizontal products or vertical products? The reason I say horizontal is that I know a few startups now that are building low-code app-dev platforms with large language models. They're making it incredibly easy in the enterprise to compose different models together and deploy applications on phones. It's really democratized, because it's so much easier now for people to do artificial intelligence. That would be a good example of a horizontal one, I guess.

I think our product right now is super horizontal. It's general language models, embedding models, rerank models; it's a platform that's deployable privately on every single cloud. You can deploy the model against any sort of data, whether it's medical, finance, or legal; it doesn't matter. It's the most horizontal product and platform you can build. What we're going to start to do is move more towards verticalization: specializing models for particular problems or objectives that exist in the world, and offering a product that solves them for the enterprise.

Would you ever go beyond the model and plug a little bit deeper into the platform in the enterprise? For example, building operating models? One approach would be to just fine-tune the model on lots of data from a particular domain, but it's still a language model; the interface to Cohere is the same. Another would be to build something a little like Databricks or Snowflake: an enterprise-wide suite that allows you to deploy, discover, create, and share artificial intelligence in an enterprise. The only reason I say that is that Azure and AWS give you free credits because they want you to get on their platform, and they know you're never leaving. You've now got something there that's not easily replaceable; people learn how to use it and they love it. Would that be a potential future?

Yeah, it's definitely still going to be a platform, something customizable that the user, which for us is an enterprise, can adopt and bring into their environment, hooking in their data, their tools, whatever they want to plug in. The verticalization is going to come from investing in the model to be good within a particular domain. That might mean fine-tuning on data within that domain, or it might mean making sure the model is very good at using the tools that employees in that domain would use. Our focus is starting to get more specific, on the actual use cases that enterprises care about, and not just doing version 3, 4, 5 of the same general model.

So, I saw you tweeted about Nick Bostrom's Future of Humanity Institute shutting down. Do you have any thoughts on that?

I think it's it sucks to see any sort of um academic Institute collapse I to be honest I know nothing more than what's public there so I I don't know if there was some internal issues that cause the philosophy Department to pull funding um but I I've been a pretty vocal critic of X risk and the idea that language models are going to take over the world and kill everyone um but despite that I still want people thinking about that I still want academics thinking about that I don't think that regulators and policy folks should

be thinking about it yet, because it's so far away and remote and potentially completely irrelevant. But the domain of academia is to pursue those long-horizon, high-risk projects and make progress on them, and so I certainly don't want to see the academic front of that effort get defunded. That being said, I think that those organizations have really been trying to get their hands into policy and to impact the private sector and the public sector in a way that is threatening to progress, and misleading. And so I think that we're

starting to have within our community, the AI and machine learning community, a bit of a correction. Those people were given a lot of power, were listened to a lot, and developed what I think we all recognize as too much influence. It started to produce bills and talk about policy that would totally collapse progress in the space, very prematurely, over theoretical long-term risks that might be an issue. Fortunately, I think there's a cultural correction happening where even the legislators and policymakers are starting to say, you

know, the level of influence that this one group is having is not appropriate, and we should listen to a much broader and more diverse set of opinions. So I'm still concerned about that, and I'll continue to speak out against it when I see it, but at the academic level I don't want to see professors lose their funding. I think that they should continue to pursue those ideas. Yeah, I think Bostrom blogged that he was trying to resist the entropic forces of the philosophy department for

several years, and eventually he lost. But I'm in two minds as well. As you know, I've hosted many debates with, for example, Connor Leahy and Beff Jezos and a bunch of different people, and one thing that strikes me is how ideological it is. I really thought as a podcaster I could have an honest and open conversation, and it's never gone well. I've put a lot of thought into trying to understand why that is, and I think philosophically you can trace it back to things like paternalism

and safetyism and utilitarianism and consequentialism and longtermism. These are ideologies that make one believe, even though it's just a subjective probability, that I know better than you, that I can predict the future better than you. And they've become much more pragmatic in recent years: rather than talking about old-school Bostrom superintelligence, they're now talking about memetic risks and bio risks and things that I think are designed to get more people on board. And I agree with you that they've had a lot of

influence. But why is it so difficult to have a rational conversation? Yeah, no, I think it's what you say: it's very ideological. There are camps and positions, and for some reason it's become very cult-like on both sides. Obviously there's the EA movement, which formed a cult-like environment of adherence to those principles and their recommended behaviors and actions, you know, what you should work on in your life. They have dating apps for EA; it's very insular. And then there was an ironic, I

think, although it's increasingly not clear, an ironic counter-movement, which was e/acc. Yeah. And that has spun out into something that is very much not ironic. It's very libertarian and accelerationist, which are ideals that I don't hold either, and so I find both of these camps really unappealing. I don't want to be associated with either of them. Yeah, I found, you know, EA was very dominant for a long time, and so when e/acc came out it was refreshing: finally someone was calling them on it. But at this point it's just

mind-numbing and completely not of interest to me. Yeah, we've had Beff on the show; he's a really nice guy. Actually, I invested in Guillaume's company. Oh, did you? Yeah, yeah. I think he's brilliant; he's a really, really nice person. I'm proud to see Canadians doing great things. The Beff thing I found super funny, and I think e/acc was necessary. I now believe that both EA and e/acc need to be dissolved. We've seen them through to their logical conclusion, and now we're starting to get into

territory that's very strange. From a philosophical perspective, how do you see the role of AI in society? I mean, I'm quite interested in how it's affecting our reality. How we interface with technology is really dramatically changing over time. What do you think about that? Yeah, completely true. I view it in the same way I view the computer or the CPU: it's a tool. It's something that we're going to leverage, that we're going to build on top of and use to make our lives

better, to make us more productive, to make things cheaper and more accessible. I think all the good that came from the computer and the internet is going to be dwarfed by this: the democratization of intelligence, and having that always at your disposal at any time. That's something that, you know, 50 years ago you couldn't even dream of, right? It's surreal, the amount of progress that's been made in half a century. So I'm really excited for that. I think it will do a lot of good and alleviate a

lot of ills. I think the human experience, our lives, will be dramatically improved by having access to much more intelligence. I'm really excited as well, but are there particular things that you are concerned about? For example, people say that language models might enfeeble us, that they might lead to mass manipulation and persuasion. Yann LeCun tweeted the other day, "Where's the mass manipulation? Where's the persuasion?" It might be something that just happens gradually over time, but are there things that you

do worry about? Yeah, of course. It's a general technology, and so it can be used in a lot of different ways, many of which I think are abhorrent and ones that we should avoid and make very difficult to do. I'm much more of an optimist than I am a pessimist, but on the side of things that are risky, I think that misinformation is high up on the list. We're already seeing social media platforms start to build in mitigations. I think things like human verification are

going to become crucial. If I'm reading a poster talking about, whatever, Canadian elections or politicians, I want to know that that's a voting Canadian citizen, because I want to know what my compatriots think, right? Even if they're on the opposite side of the fence to me, that's fine; I want to hear what they think. But I don't want to hear what some foreign adversary has spun up a bot to push into the discourse. And so human verification, I think, is crucial. That's

the one that's top of mind for me. I think some of the more remote risks, like bioweapons and this sort of stuff... I'm less concerned about enfeeblement and becoming dependent on the technology. I think folks said that about calculators, that we wouldn't learn how to do basic math. Humans are intrinsically curious; we want to know things, and we can't ask the right questions of machines without knowing things. And so we'll continue to be really well educated, better educated and more knowledgeable than we were without that technology. Yeah,

because, I mean, if you look at the enfeeblement pie chart, a calculator is a very small part and a general AI is quite a large part, which is a little bit concerning, I guess. But I agree with you. Maybe, like the jobs one, it's spoken about a lot and I've not seen a lot of evidence of it yet, but it's so pernicious that it might happen slowly over time. Daniel Dennett (rest in peace, by the way) wrote an interesting article on counterfeit people, which he

published in The Atlantic, and he was saying that when we have all of these bots and generative video models and so on, at some point they'll become indistinguishable from reality, and that will lead to a kind of acquiescence where we don't trust anything we see. I think that's quite interesting, and I also think that these models might affect our agency in quite a weird way, but it's so difficult to understand now how that's going to affect society. Yeah, I think even now

people have been taught to be extremely skeptical of what they read and see. There's a very strong prior inside of us, for any media that we consume, that it's been skewed or manipulated or produced to propagate an idea. I think it's good to have a skeptical populace; it's good to be skeptical about what you read, regardless of the medium. And I think people will do what they've always done, which is filter towards sources they find trustworthy and objective. That'll happen even with ML in the loop. You know, disinformation

and misinformation campaigns, manipulation campaigns, existed well before these models existed, so it's not a novel concept, and it's always been a risk. The question is how much more prevalent a technology makes that risk. I'm optimistic that we're quite robust and that we'll find ways to make it very hard for bad actors to exploit the technology. My rough take is that the more agency the AI has, the more of a risk it is, because if it is just doing supervised things, then at every step of

the process it's being aligned and constrained and steered by humans. If we ever did create agential AI, then there's this kind of weird divergence, and all sorts of scary things might happen. But I wanted to talk a little bit about policy and regulation. You spoke to that earlier; you said that there are potentially some quite damaging policy changes being considered. Could you speak to that? Yeah, I've seen ideas floated. Fortunately, I don't think any seriously damaging policy has actually passed, but within what's being considered there are ideas

that will destroy innovation, that will destroy startups, and so you'll just entrench power with the existing incumbents. One example might be fines. A hundred-million fine is going to wipe out and stamp out a startup, but for a big tech company it's like 10 minutes of revenue. It just doesn't matter; it's fundamentally irrelevant, and certainly a cost they're willing to take to capture a market. So you get very disproportionate consequences from the same punishment. Overregulation of that kind, thoughtless overregulation,

will have the exact opposite effect of what I think all of us, in the public and in government, want. We want competitive markets; we don't want oligopolies. And we're starting to see oligopolies emerge, so there needs to be fairly strong action pushing against the entrenchment of those oligopolies. We need to preserve the ability to self-disrupt, because if you have an oligopoly and you have entrenched incumbents, the likelihood of self-disrupting, of the new winner emerging within your market being one of your players, goes down, and so

you're going to be disrupted from outside. It's a huge risk, and so you need competitive, self-disrupting markets. It seems like some of the policy folks are just acting non-strategically and not considering that, but fortunately what has been passed seems sensible. Can you comment in particular on the EU AI legislation and the Canadian one? I probably can't say anything too specific. I think the Canadian legislation hasn't gone through yet. Okay. The EU AI Act has, but fortunately it was reined quite far back

from its initial position. You know, we're in conversation with all of those regulators, and when you talk to the folks, they want to do the right thing. They're under a lot of pressure from different parties with conflicting interests, but they're trying to do the right thing. They're trying to make sure this technology gets out into the world in a safe way, that there's oversight, that we don't entrench the incumbents, and that we're ideally actually biased towards disruption and the creation of new

value and innovation, new players. So I think they all want that, but it's a tightrope; it's a very difficult line to walk. I think one of the issues is that not a lot of people, certainly in government, understand how this technology works. It seems like magic to many people. I mean, even in the AI space, LeCun and Hinton, for example, have very different opinions about it. But I'm also interested in your views on the health of the startup scene. We're

in a bit of a downturn at the moment. It doesn't seem to have affected the LLM space, but even in the LLM space I've noticed a trend: many people started wrapper companies built around an LLM that didn't really do anything that couldn't easily be replicated. What are your thoughts there? Do you think we're going to see a trend towards startups doing something that is very differentiated? Yeah, I think we're in a moment of churn. I think there are

going to be some players who started a while ago who fold or go into other companies, get acquired, that type of thing. But there's a whole new generation emerging. I know a bunch of people starting up, and we invest in startups, and we're seeing an uptick in the number of AI startups that are coming out. It's sort of like a reformatting. There was a bunch of folks building at one layer, the LLM layer, and at the layer above that, tooling, etc. The players have kind of been

set in that space, it seems. Of course, I'd be happy to see new players emerge, but we now need a set of ideas and products and companies building up the stack: more abstract concepts, stuff like end-user products and agent companies. They're all starting to pop up and create really interesting new ideas, and then that will settle and we'll have our players at that layer. So it's a continuous cycle. Yeah, I'm really excited about the AI startup space. It feels like

we're finally starting to get our feet on the ground a little bit. For example, with tool use and with RAG, it's starting to look a lot more like traditional software engineering. What we're seeing now is people rolling up their sleeves and actually building out very sophisticated software architectures that compose LLMs in interesting ways, rather than just building a simple LLM with a prompt on top. Mm-hmm. Yeah, it's definitely getting more sophisticated, and as the tools get more robust and

reliable, it's unlocking totally new applications, and the utility is starting to be seen and felt in the real world. I think last year was very much the year the world woke up to the technology and got its footing with it, got familiarity. This year is when things are going to start hitting production. They're actually going to start to hit our hands, and we're going to be able to use this as part of our work, part of our play, the products that we use. It's going

to become a much more fundamental part of our daily life. So it's very gratifying. We've been building Cohere for four and a half years now; we're in our fifth year. For a long time we were out there preaching, "This is really cool, please care about this, this is going to be an important thing," and folks would pat us on the back and say, "Nice science project." But finally we're actually starting to see the fruits of all

that labor. It's really gratifying to see real-world impact, and I think that's what we exist for: really trying to accelerate that and make more of it happen, faster and in the best way possible. And what was your biggest mistake? Do you have any advice for other startup founders? What did you do that perhaps they should avoid? I messed up constantly, at every stage of the company. I guess just admitting that you've messed up,

trying not to be in denial about it, and fixing it as quickly as possible has been the most important thing for Cohere continuing to thrive and exist. But yeah, this is the first company I started, and the same goes for Nick and Ivan, so the whole founding team was fresh into it, and we made potentially every mistake you could possibly make. Fortunately, we were good at listening to others who had done it before and had seen a lot more than we'd seen, and so I'm sure we've dodged

some mistakes, but it feels like we've made them all. Yeah, if you don't make mistakes you're not learning, I guess. Just a final question: it's such a large organization now, and there's this problem of vertical information flow, so there might be a problem that some of your folks have discovered, and it takes a while to filter through to you. But obviously it needs to be scalable, so you need to delegate. How do you deal with that? Yeah, I'm very close to the ICs, the individual contributors.

I'm very close. I'm not someone who works through their reports or follows the chain of command; I just talk to the people who are actually doing the work, and so information flows quite freely. I think I'm mostly annoying people at this point, because I'm pinging them every day: "How's that run going? Have we tried this experiment?" I'm very deeply involved in stuff, so I don't feel like there's an information flow issue at Cohere. With scaling, collaboration

between teams, especially when you're a global company and you're not sitting in the same office as a person, is very difficult. I think remote work is really, really hard; it's not easy. I think that concentrating teams in geographical areas, or at least time zones, is very important and leads to a lot more productivity and effectiveness. It's part of the reason I moved here to London: to be closer to a good chunk of our machine learning team. Phil's here, Patrick's here. I want to

be present and involved in the ML component of the company as much as possible. Yeah, I think as we've scaled, there have been systems we've used that broke down at each phase. Stuff that worked for the first 10 of us didn't work for the next 20, didn't work for the next 100. Now we're pushing 350, I think, and there are people at the company whom I don't know, which is insane, a super weird experience. But we've hired really fantastic people, and we continue to do so,

and I think you just trust that people will still make the right decisions going forward and that you've set standards high enough that you don't need to approve every single hire. You don't need to know what every single person is working on; you just trust your colleagues. Yeah, I can attest that you hire very well. It's probably the best culture I've ever seen in any company, actually. Thank you so much. Final question, just out of interest: do you get micro-cultures in the different offices?

I mean, do you see different mini cultures? Oh yeah, totally, 100%. The London office compared to the Toronto office compared to SF and New York: the vibes are very, very different. London is so nice. It feels really tight; it still feels like a startup, which we are, but it feels like a tiny 30-person startup where you regularly go out for beers with your colleagues at the pub after work. Everyone knows what everyone else is working on inside

the office and calls on each other for help. London is super tight-knit. I can't pick favorites, but I really like the culture here. Toronto is our biggest office, and so it's much broader, but the culture there is amazing too: super hardworking, people stay late and grind, very passionate. There are different groups there that are close with each other. New York is new, but that city is just so much fun. It's such an incredible city, so much energy,

always awake, you know, work hard, play hard. SF I spend the least time in. I'm not a huge SF fan, to be completely honest. It's our second HQ, but I just haven't gotten into the city in the way that others have. Compared to SF, I feel like New York, Toronto, and London are real cities. There are artists, there are people doing a very diverse set of things, and across all the different fields that are going on

there, you have some of the best people in the world. SF feels more homogeneous to me. It's a lot of people doing the same stuff; there's sort of one topic of conversation. You don't bump into someone with a categorically different worldview, perspective, or experience than you. And so I like visiting, because I meet brilliant people in our field, in tech, but living there would be really difficult for me. I would feel like I'm sacrificing whole pieces of my life. But

I love visiting. It's a great place, I love the folks who are there, and most of our investors are there. It's a really cool environment, very intense and competitive, and those are really good things; it's very motivating to be there. But I think I can get that just by visiting; I don't need to commit myself full time. Aidan Gomez, it's been an honor and a pleasure. Thank you so much for joining us. Thank you so much for having me.