Hello and welcome to tonight's Commonwealth World Affairs Program. My name is Quentin Hardy, and I am the moderator for this evening's program. I am a writer who spent 30 years in journalism before working at Google. Now I am independent. A few reminders before we get started. Tonight's program is being recorded, so we kindly ask you to silence your cell phones for the duration of the program. If you have any questions for Renee, please fill them out on the question cards that were on your seats and, if you are joining us online, through YouTube chat. Now

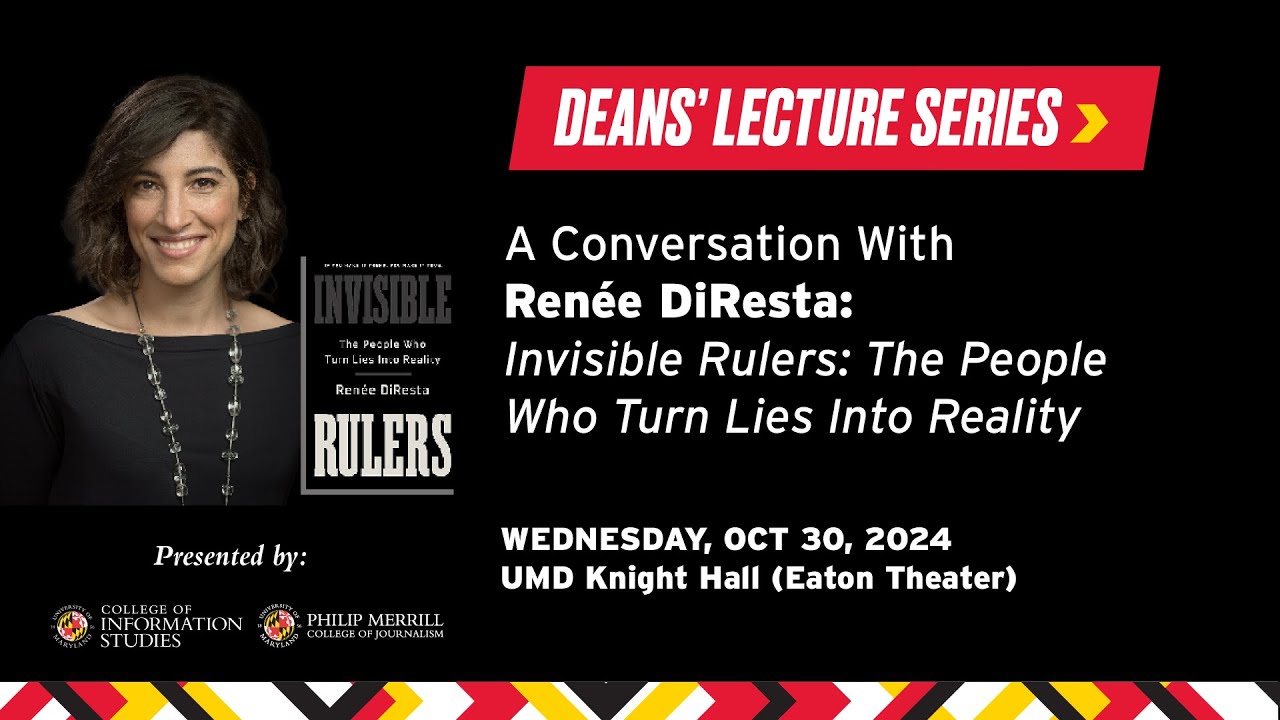

it is my pleasure to introduce tonight's guest. Until very recently, Renee DiResta was the Technical Research Manager at the Stanford Internet Observatory. She is a contributor to The Atlantic and the author of Invisible Rulers: The People Who Turn Lies into Reality, which will be the subject of much of tonight's talk. Renee's work examines misinformation, rumors, propaganda and conspiracy theories in the digital age, both domestically and abroad. It's a tough job. As her book details, it has made her the subject of wild rumors, online harassment and investigations by congressional committees who seek the truth

by gathering outrageous misrepresentations of people's work, feeding subpoenaed material to other partisan conspiracy mongers, and then reporting back their so-called findings. That about right? Yeah. Okay. The terrible thing is it seems to work. As many of you know, last week we learned that her contract at Stanford was not being renewed and that the Stanford Internet Observatory has collapsed under the weight of the attacks. This is definitely something we'll be getting into tonight, as it ties into the subject of her book and her years of work studying the effects of online disinformation. Renee, welcome and congratulations

on the book. Thank you. One of the things I admire about this book is the way you show how many of today's actions may be shocking to us, may seem outrageous to us, but they are not without precedent or context. Human nature is not changed by social media. We are who we are, and social media is not the first time a new communications technology has challenged society. Early in the book, you talk about Martin Luther and the printing press bringing on the Protestant Reformation, the Thirty Years' War, the English Revolution. In modern

times, Father Coughlin, who had wildly popular fascist radio speeches in 1930s America. The propaganda work of Edward Bernays, also known as the father of public relations. It's riveting stuff and leads us into the rise of influencers, algorithms and crowds, and the dynamic of the rumor mill and the propaganda machine, the incredible effects of virality, and how this is being exploited by an unprecedented number of sources. Well, that's the jumping off. So perhaps you could talk about what's the same and what is special in today's very dynamic information world. Yeah. So I wanted to write a

book about how propaganda had evolved. And I came to this field through computer science. And so my background is in CS. And then I got really into data analysis and trying to understand how people formed opinions on the internet, in part because, when I became a mom, I suddenly became inundated with anti-vaccine content, sort of pushed me in a lot of different directions. And I thought, why is this so popular? What is happening here? So I started off trying to understand the mechanics of it and got really into, like,

network analysis, right? Which is the way I think a computer science person might approach it. What are the nodes in the network? How does the information traverse across the environment? What is the process by which it gets from social media platform A to social media platform B? But what you lose when you do that is you don't understand, like, why does it resonate? So then that turned into a whole, you know, attempt at understanding why did certain types of rhetoric work? It's not that this is new. Anti-vaccine narratives actually haven't really changed much since the

1800s. So why did they work and why did they persist? And so I spent a lot of time trying to understand that just that kind of basic communications theory. What are the ways in which people come to form opinions? What are the mechanics by which that happens? Then how do we think about how that's changed in the age of the internet? Ultimately, I tried to write a book that was less about social media and more about the relationship. Like what social media empowers people to do, and particularly crowds of people, how social media has transformed

the way that we assemble in crowds and think about ourselves as members of a group. And most of the old propaganda literature, going back to the 1920s (you see it again in the 1960s and the 1980s), is talking about how in a particular technological structure, there is this component of finding people where they are and reaching them as members of a common identity. And I thought in this day and age, nobody does that better than influencers. And so it kind of became a book about how influencers and crowds interacted in the modern era. Yeah. Speak about

influencers a little bit, and the rise of influencers, because when you first got into it, they weren't, as they say, such a thing. So the term invisible rulers, that phrase comes from Edward Bernays in the book Propaganda in 1929. And if you ever read the book, it's very interesting because it's not a study of propaganda as we think of the word today. This is pre-World War II, so it's not a pejorative actually. It's just sort of an idea that this is how it references actually the term kind

of by the Catholic Church, the propagation of the faith. Propaganda means to propagate. And it's a particular verb form that is an exhortation to propagate. This must be propagated in the way that Carthage must be destroyed. You know, it's sort of a "this is a thing we're going to do." And that's where the word comes from. And so when Bernays is talking about propaganda, he is arguing that actually it is the responsibility of the government to propagandize to its citizens, that in fact, it is the invisible rulers, the people who are behind the government, who

are actually this unseen power that shapes how people come to see themselves in their group and their role in the world. So it is actually the obligation of the government to bring these disparate groups of people with their different senses and different experiences into one common, shared American identity. And you see this rhetoric from Bernays. You see it from Walter Lippmann at the time, and you see this idea, which we see as very, very paternalistic today. But the idea is that people think and feel in different worlds, and it is the mission and

responsibility of the government to propagate good information so that the people can understand their role in the world, what America is, a notion of a shared national identity, etc., etc. And that's where that phrase comes from. So then you get to the influencers. When Bernays is writing about propaganda, he's writing not only about the idea that you're propagating an ideology, he's also writing about creating demand for a product. So he has this project that he does where he needs to convince women to smoke cigarettes. Right. He's been hired by a cigarette company

in the late 1920s, early 1930s, maybe. And he comes up with this idea of torches of freedom because at the time, women, you know, women were wanting to be more liberated, right? They wanted to do all of these, you know, they wanted to have more agency. And so he reframed cigarettes as torches of freedom and says that, like, the empowered woman smokes. And so you and your, you know, your identity as an empowered woman, it creates demand in the individual by referencing their role as a member of a group, a group identity, and

to create demand for the product. That's how he goes about doing this. And so the book describes this in a lot of different ways. And I thought, okay, this is a great metaphor for what happens today, where the person that you see as an avatar of particular types of opinion is actually, you know, the influencer, right? This is the way that political engagement happens on the internet, but also the influencer arrives on the scene as a character, you know, a sort of a, a

figure on the internet, because brands realize that these slightly more popular people are actually really great for convincing people to buy shoes or detergent or, you know, baby food. And so what you start to see is actually the rise of influencers as, oh, it's like a peer-to-peer sales channel, so they can be selling a product, or they can be selling a candidate, and the process is largely the same. Yeah. And this is a decentralized system. Right. So instead of one identity, you can foster a thousand identities. You know, I'm an influencer about

gardening. I'm an influencer about space aliens controlling the financial system, you know. And so it's the same person. Sometimes. We'll get into Amazing Polly in a sec, but one of the other things the new media does so well historically is hijack the old media. Yeah. Bernays did Torches of Freedom, but he also let all the press know there's going to be this great feature story at tomorrow's parade. These women will be walking around waving these cigarettes and demanding liberation. And of course, it gets written up and it turns into its own little information bubble

to follow. Yeah, he spends a lot of time talking about the invisible ruler's role in creating a moment and then making sure that the moment is seen. And so, again, it's that same conceptualization that you have on the internet: if nobody sees it, if it doesn't go viral, did it even happen? You know, so you have to have both of these components. And that's how it begins to reach the public. And also fast forward to today, so much of the press will take a tweet as an example. Say something on the internet. Someone on

the internet is saying, you know, as if that's evidence of anything, but they're also... Right. Are they complicit or was it just that I think it was outrageous so it would sell? You know, it's an interesting question. I spent a bunch of time with the book Manufacturing Consent. Right. Chomsky's book in the 80s, and the phrase manufacturing consent references Walter Lippmann talking about the obligation of the government being to manufacture the consent of the governed, by which he means use propaganda to get people to subscribe to the thing the government wants to

do. This process of persuasion and the line between propaganda and persuasion has always been a little bit fuzzy. You don't quite know where it is. So what you start to see is, when Chomsky writes the book Manufacturing Consent, he's gone and he's taken a phrase from Lippmann and he's pulled it about 60 years into the future. And the thing that's so interesting about that book is people remember the part about how media is complicit in a propaganda ecosystem. And I think if you see the book referenced on Twitter, more often than not it's somebody

darkly, you know, "manufacturing consent" or "manufacturing consent," right? And it's used to sow distrust in media when what the book is actually about is a system of incentives. It's a book that says that because of these things, these financial incentives, these human relationships, these professional relationships, this is the type of content that we get. So he's not actually anti-media. He's not telling you don't listen to the media. What he's saying is this is how the incentives and the structures of the system lead to this particular type of output. And I

feel like you can do the same thing with, you know, understanding influencer culture on the internet. Nobody is saying don't follow influencers, don't partake in this, the system is evil. I think, you know, my goal with this was actually much the same. It was to say, here are the inputs, here are the incentives, and this is what we get as a result of that. So when you see it, when you engage with it, it's not even so much a question of complicity as this is what is incentivized. And that's why

we're getting what we're getting. And you do call it a new system of persuasion. I'm interested. What do you see as the chief values of this system? Are people influencers for money? To build community? For virality? For all of the above? What? I think it's a mix. I think you get some who start out because they're just, you know, you realize, hey, wow, I just had a viral TikTok. I think I talk about a couple of people in the book. There was, you know, some kind of coverage of folks who became wildly

famous from TikTok, which was like a brand new platform. Charli D'Amelio, a woman who's a dancer, who's down in LA, she happened to be an early adopter of the platform. She just started posting herself dancing. She's very girl next door. You know, she's just kind of charismatic. But not like movie star charismatic. But she winds up amassing an absolutely massive following because she's seen as so relatable and so enjoyable, and she's just out there kind of doing her thing. But she does kind of make fun of herself, actually. She says, like, don't worry,

I don't get the hype either, is like her bio for a while, because when people begin to hear about her, they go and they're like, what? What is special about this? Like, I don't get it, you know? But there's that interesting mix of relatability for her. I think she has a hedge fund now. I think she's like an angel investing fund. Right? Not a hedge fund. Sorry. Like a venture fund. Like, she's like angel investing. And she makes an incredible amount of money. Yeah, you said Mr. Beast is almost a billionaire? Yes.

So, Mr. Beast, I think the theory is that he'll be, like, the first creator billionaire, is where the kind of, like, current trajectory is. But people are, I mean, they're more than earning a living. Obviously, this is the cream of the crop, right? These are the people with hundreds of millions of followers, not the people with tens of millions of followers or tens of followers. But you have these other people. So Charli D'Amelio becomes this luminary on TikTok, right? She is like nothing. And people then begin making YouTube content, discussing her rise so that

other people can try to replicate it. So there's this entire kind of creator class that is creating content about the creator. And then you have people who are like, oh, I got 3 million views on one TikTok, which is quite good. And then they kind of like chase that high, right? They decide that, you know, one woman works at a pizza place. She gets her 3 million views, she quits her job, but then she can't actually replicate it. She's kind of like a one-hit wonder equivalent, right? Oh, and then she, like, can't quite do

it. But you see people chasing either... However fast the treadmill goes, that's your new normal. Yeah. So they're chasing fame, they're chasing money, they're chasing clout. And then what you start to see is, as people realize that this can actually turn into real political power, you see the rise of the political influencer, right? The people who are there to be, quite explicitly, I would say modern propagandists. Right. Like, selling an ideology is what they are out there doing. Yeah. There's a step in between which takes us to Amazing Polly. Yes. Spend a minute.

She's got a gardening blog. I don't know. Show of hands really quickly in the audience. I can't see your faces, but I can probably see this. How many people remember that there was, like, a viral conspiracy theory that Wayfair was selling children? Like pretty good. Five people, of whom two are extremely online. So there is this moment that happened. I'm trying to remember the year. Honestly, I’m a little bit jetlagged. I’m still on East Coast time, but there's this woman, she goes by Amazing Polly, and she's a creator on YouTube. She's

got a Twitter account at the time. She winds up losing these. But at the time, she had them, and she's a QAnon influencer. No, but before that, she's pretty innocuous or, like, a gardening thing? She kind of starts off, she has this trajectory, right? She's kind of producing content, but she becomes a QAnon influencer, and that's where she gets her. Like, she's not getting, like, no reason of you. The gardening content there. There's a view, right? But it just takes off. Yeah. So that's the thing to feed. 100%. So she's got her

thing that she's feeding. And one day she's, like, on the internet and she notices that there's the furniture company Wayfair. Wayfair.com. You know, I think I bought a lamp from there once. You can buy furniture, and it turns out that there are industrial filing cabinets on there that cost between $15,000 and $30,000. Why they cost that much, I do not know. But the model numbers are names, and they're human names. So female names in particular. And so she decides that these are not just filing cabinets. This is actually Wayfair as

a hub, excuse me, for trafficking children. So she decides that what you're really buying when you order that filing cabinet is a girl with that name. And then this is where people on the internet pick this up and they start searching Wayfair, which has millions of products. Right, because it's also kind of a drop ship or, you know, it's a huge, huge online store. And so, of course, some of the names of the filing cabinets match the names of missing children by virtue of statistics and name commonality. But this becomes all of a sudden all

of these different people are on the internet posting the faces of missing girls next to the filing cabinets, and this story goes wildly viral. But what starts to happen is, as kind of funny as it is, this is all happening under the hashtag Save the Children. Except there's a real organization called Save the Children, which saves actual trafficked children, which is all of a sudden finding itself in a place where it has to be putting out appeals, telling people to stop calling it about Wayfair cabinets. So the actual child safety and

trafficking organizations are out there saying, please stop doing this. Like, please stop the conspiracy theory. Please let us do our jobs. We need our tip lines open for actual crises. But of course, anything you do that stops the virality, right, in the eyes of some who are actually incentivized to conspiracy, makes you complicit. So Twitter eventually (this is old Twitter, this is before Elon bought Twitter) throttles the trend, right? Kills a trend. Like, you know, the tweets are still going out. It's just no longer showing Wayfair in trending. Then

they lose their minds because Twitter is censoring them. Twitter is in on it. Also, maybe, who knows. And then one of the girls actually was a runaway, it turns out, and she says, hey, that's my face. I see my face trending. I just want to let you all know, like, I'm home and I'm fine. And then they attack her and they say, like, we know you're lying. We know your captors are making you do this, right? And then they begin to attack her. Right? People begin to say, like, are you a

plant? Are you, like, the one who really, you know, was just a runaway and went home, but all the other trafficked girls, you know, are still in the filing cabinets or what have you? Right. And so this becomes, like, a pretty major internet moment, and the Washington Post is trying to stamp it out, you know. Kind of inadvertently, Polly has created this crowd, and crowds don't know how to take themselves apart. Right? They only know how to sustain. They can't imagine, oh, we were wrong. We should go find something else

to do. Why would that be, folks? Nobody says that, right? It's just about sustaining the group. And so, I'm not sure. Were the social media companies kind of ready for this, you think? Have they been surprised? I was really struck that as late as 2017, 2015, on Facebook, anti-vax was an ad targeting choice. Yeah. You know, and there was no kind of pro-vaccine targeting choice. This was, I think it actually took, I want to say maybe it was ProPublica that did some of the work that shifted that. So I was part of

this group of moms and kind of community members called Vaccinate California in 2015. And we were trying to grow a pro-vaccine movement on the internet. And so we made a Facebook page. Then we had to figure out how to run ads on Facebook. So, you know, we're in there. We have, like, maybe $2,000 as our budget for this entire, you know, project. So we want to make it go very far. And I was responsible for running these ads. So I was like, okay, let me figure this out. So I go to Facebook's

ad creation tool and I start typing in keywords because, you know, this is what you do. And every time you put in the word vaccine, you get anti-vaccine ad targeting. So it's like, you know, it's anti-vaccine, vaccine conspiracies as one, you know, you can ad target to people who have interests in these things. So Facebook has intuited that there is a sufficiently large audience base that is interested in vaccine conspiracies to make it actually a thing that you can pay to target, and you can reach those people by spending a couple hundred bucks

and that's who you'll reach. But then I was like, well, okay. Where are the pro-vaccine? Yeah. I wanted people, like, the goal of our group at the time was to get people calling their representatives in support of a bill to strengthen vaccine requirements. This was around the time of the Disneyland measles outbreak. So our entire goal, all of the, you know, the money that we were spending on this campaign was to try to encourage people to call their representatives. And, you know, each of us was, like, scattered

in a different city. We had to grow this California statewide movement. So we're like, okay, well, Facebook ads are how we're going to do it. And there was absolutely no way to do that. So we actually wound up looking, we realized that every conceivable medical profession under the sun was also listed as an ad targeting category. And so I just went down selecting, like, every ‘ologist in the... hahahahahah. But you're pointing to something really important in this, which is it's very hard to build a crowd around normality. Yes, yes. There's

nothing very exciting about it. Well, and it's hard to build a crowd around just straight-up fact-based truth. It's much easier to build a crowd around a conspiracy or people withholding information from you or some other myth, it seems. We realized this when we were doing Vaccinate California, in part because we realized that, you know, we had a very unfailingly positive message. Right? And we were, you know, we were talking about public health, community, infants, kids who were immunocompromised and wanted to be able to go to school. There's a kid with leukemia

who was in the community and went and testified a couple times in front of the California State Senate. But the other thing that we noticed was that where the real energy was, was the anti anti-vaxxers. Right? So it was the people who were on the internet who hated anti-vaxxers. And were, like, out there just, you know, who really just wanted to troll back. Right. And who saw it as like, we too can be vitriolic jerks. And so we will go off and do that. And we tried to figure out, like, what we were

supposed to do with that because it wasn't quite right for us. It wasn't the right messaging we wanted to put out. And yet it had an incredible amount of energy behind it because they saw it as like trolls from all over the country. And a lot of them were trolls. That is an absolutely 100% accurate description of what happened in that campaign. There were a lot of extremely nasty people, anti-vaccine trolls from all over the country, who were threatening California legislators, were, you know, sort of harassing them, were doing all of these things

to try to make them not want to pass the bill. This intimidation to drive your opponents out of the conversation was very much beginning to happen in 2015. And so we didn't want to be like that. And yet at the same time, here were these people and their memes were funnier, they got more attention, they were just more engaging. They got more pickup because they were giving people something to rally around. Right. Which happened to be attacking the other side. And so it was very interesting to watch that dichotomy between us trying to grow a

positive movement and then realizing that the anti anti-vaxxers were having much more success, right. And well, a big goal is to own the opposition. Yeah. You know, to jump around just a little bit. As you say in the book, the far-right social media sites don't do very well because there's no libs there to own. So they're just with each other talking about how bad the libs are. And that's kind of boring. You know, you have to outrage somebody. You have to have a kind of, I don't know, is it a sense of

negativity going on? I think we've created this norm where the thing you do on the internet is you fight with people. Yeah. You sort of, like, what is the thing that is going to outrage me today? And I remember I used to open up Twitter, and you know, gradually over time, particularly after Elon bought it, I just felt like, okay, what kind of asshole am I going to see today? And it started to just feel exhausting. I felt like I had to, like, mentally prepare myself for what kind of

terrible interaction am I going to have when I say this? Do you? I don't know how many people remember this, but there are so many of these moments. There was one where, like, a woman tweeted how much she loved having coffee with her husband in the garden. How many people remember this? And okay, see, I see at least one person. And it was like a genuinely, like, you know, a woman who just really loved having coffee in the garden with her husband. And she was talking about how happy that made

her feel. And the internet attacked her. And there were people who were like, you're so privileged! Why aren't you thinking about, like, people who don't have husbands? People who don't have partners? People who are lonely? You know. People who don't have gardens? People who don't have coffee? Right. You know, and so it just turns into, like, the entire internet attacks this woman for what was like a completely innocuous tweet. And you look at this and, you know, you sit there and you're like, there but for the grace of God go I, right? I remember... And

as you say, social media becomes a barometer for social norms. Yeah. And this is probably. Yeah, I remember one day, it was during the pandemic, and I had ordered a, like, a fancy French rib roast, and I'd never made one before. And so I posted a picture of it because, like, they're pretty, you know, it was like a crown or whatever. Anyway, it was around a holiday, and like a mob of people were like, you're posting dead animals. Meat is wrong. That roast cost this much

money, you know? And I was like, Jesus Christ, I was proud of my dinner. Yeah. Then you think before you do it, the next time you're like, will the vegans come for me again, you know? Right. Unless you want to, unless you're the person who wants to engage in that, don't get near it. But somebody else wanted to engage in that. And that is foreign governments. Yeah. Which picked up on this pretty quickly. Yes. They realized very quickly this was like terrorist organizations and then foreign governments was the sort

of order that one. And once people realize this is a tool of political power, right? A tool for galvanizing crowds, I think, what you start to see happen, like the propaganda literature goes into this a lot as well. By the 1960s, they realized that, like, you're not necessarily trying to persuade people, you're trying to activate them. So how do you activate them? Well, you just find the people who already believe the thing and you entrench them in it a whole lot more. You make it absolutely immersive. Their entire identity becomes this issue, and you reach

them where they are. They are already there. You don't have to move them from here to here. You just have to kind of like dig them a little bit deeper. And that's how you grow a faction and that's how you really galvanize change, right? That's how you start revolutions. And so this is what you start to see on the internet... Everybody thinks that, like, the Russian trolls are out there trying to persuade people. This has actually been kind of like a pet peeve of mine in some of the media coverage about it, where, you

know, you see coverage that reports things like, a very small number of people saw the content. That's absolutely 100% true, but there are people who were receptive to the content already. Right. And so it is doing that work of entrenchment. It is not trying to move people from being Hillary Clinton supporters to being Trump supporters. They do not bother with stuff like that. They work to get Rubio supporters and Cruz supporters to move to Trump in the primary. So you do actually see with the Russian influence campaign, that effort to drive people

to their preferred candidate, that little bit of persuasion happens. But other than that, they're just sort of meeting people where they are and pitting these different identities against each other. You're a veteran. Your, you know, fellow veterans are homeless. They have no benefits. They're struggling. They're suffering. Why are we giving money to the refugees? Yes. And then to the page that they had running about Muslims, it was like, the U.S. hates you because of Islamophobia and so on and so forth. And so you start to see the way that they kind of connect the

dots between these things, you know, pitting these different groups against each other. But they spend most of their time just reinforcing pride in serving your country or being a Muslim. And so as you kind of reinforce that identity, then you kind of pit them against each other, and that's how they are actually doing their work. We have questions from the audience and I'm going to have to speed up so we can get to them, because several of them are about the Stanford Internet Observatory. Not all positive. And we'll get to them in just a sec,

but I also wanted to ask about, you know, people who put out disinformation or unsubstantiated things, that... RFK Jr.: 5G networks are causing untold damage. Nicki Minaj on her cousin. Yes, you know. That’s a good one. Her cousin took the vaccine. And I think it was like her cousin's friend, her cousin's friend took the vaccine. Am I allowed to say it here? I'm trying. And his testicle swelled up, and so it literally became this viral tweet about... Kind of the way you do if you have an STD, which means... Yes! And so the man presumably

tells his partner that the problem down there is because he got vaccinated, not because of the STD. And what I like about this was there's precedent in the 19th century. The first smallpox vaccines, people said, gave me syphilis. Yes, yes. And there's some really funny ways in which you do see that. Like, you see people who have other diseases say, I went and got vaccinated for smallpox, and then I got syphilis from it, and, yeah. Or, you know, the president talking about bleach and people drinking it, or Joe Rogan with ivermectin.

There's just so many examples, like Vice President Harris talking about how I don't trust a Trump vaccine. Yeah, I know those on both sides. Right. Why don't people pay any price for putting out ridiculous things and just saying, oh, I'm just putting it out there. I'm just getting it into the discussion. Let me take the ivermectin one first, because that actually highlights something that was very unique about Covid, which is a lot of the vaccine stuff, like during Vaccinate California in 2015, the measles vaccine is unambiguously safe. It has been reinforced over and over and

over and over again for decades. Studies done globally all over the world, you know, this is as close to settled as we get here. The Covid situation is very, very new and there are new vaccines, there are new treatments. Nobody knows what is happening. And so the science is evolving as people are doomscrolling their phones, right? Some of them are very angry and upset. They're out of work. Some of the other people have family members in hospital. It is a lot of different things that are happening with people's individual experiences of

Covid. And something like ivermectin comes out and people are very hopeful about it. Hydroxychloroquine is another one. They're very hopeful about it. And so the early days, the early excitement for several of these treatments does not emerge in the United States. It actually emerges overseas, because these treatments are used for many, many other things that are sort of, you know, hydroxychloroquine is quite common in areas with high malaria counts and things like this. And so they're talking about will it work on Covid. And you see this excitement. And then you see in other places

you actually see it decline as they realize that, no, the studies are indicating that this is not particularly effective. In the United States though it becomes like an identity marker. And they are trying to keep these cures from you. And so the problem is not that people are hopeful that a new treatment is going to work, or that they are running experiments or suggesting more experiments or what have you. Right. The problem is not the undecided facts. The problem is that it becomes such an identity marker to say the vaccine is going to kill

you, but the ivermectin is going to work, even as the science is increasingly showing that the opposite is true. So what you see is like an entrenchment where depending on which side of the political aisle you're on, your trust in the research itself is shaped by where your alignment is and by what your influencers and media are telling you. Okay. Well, some in the audience have a different view, and I'll turn to this. You discussed propaganda as creating demand for a product. SIO's primary objective censoring compelling narratives, how to treat Covid, censored valuable treatment

option narratives while promoting the all-must-vaccinate narrative. Do you admit what you were doing? Wasn't it self-promoting propaganda? We weren't doing that, so that's the problem, right? So the Virality Project was a project to highlight the most viral vaccine narratives that were going on week after week. And so what we did was we had a bunch of student analysts who sat there and they looked and they cataloged what are the most viral vaccine narratives week over week. And we published that. We put it up as PDFs on our website, and anybody could subscribe

to receive those emails. And so we had people who were public health officials. We had people who were frontline doctors. And what they did was they took that information and they created counter content for it. And in some cases, when the content seemed to violate a platform policy, every now and then they would tag the platforms and they would say, you can go ahead and you can look at that. And that was it. And then the platforms would make a determination about what they wanted to do with that particular narrative. That is

literally the entirety of the project. But some people, like Substackers, decided that what we had actually done was run a vast censorship operation, and they never actually lay out the facts or the evidence for what it was we were supposedly doing, or how we supposedly censored these narratives. If you've heard things about ivermectin or hydroxychloroquine, and yet you've also heard that we censored all of those narratives, these two things are inconsistent, and that becomes one of the real problems with this allegation that we were like running some vast cabal. Well, this comes in

even sharper on that. Why did the SIO feel it had the expertise to censor what they call narratives that were actually scientific studies by the likes of distinguished Stanford professors like Jay Bhattacharya? Did I butcher his name? We never talked about anything Jay Bhattacharya did. And John Ioannidis, the former, having sat on an FDA approved panel for vaccines. But again, we didn't censor anything. So the premise of the question is flawed. But as far as Jay Bhattacharya, Jay Bhattacharya is best known for something called the Great Barrington Declaration. And since we were only studying narratives

related to vaccines, the Great Barrington Declaration was out of scope for us, and we never wrote or said anything about it at all. So again, what you read on Substack isn't necessarily true. And yet well-meaning people continue. What do you do? Well, I mean they believe that this happened. And what I say to them is what is the evidence that you've seen that it did happen. Where are the emails that we sent demanding that something be taken down? There is this crazy narrative that we, I talk about this in the book, that we censored 22

million tweets. That is a staggering number. Where are they? Which ones were they? Where is the list of the 22 million tweets? The people who ran the Twitter files had access to all of Twitter's internal systems. Where are the 22 million tweets? Where are the emails demanding the takedowns? They don't exist because it didn't happen. And so what it comes back to again, over and over and over again is where is the most compelling piece of evidence you've seen, and why would we do this as the other piece of it? When we were publishing again

weekly on our blog in real time every week, that briefing went up on our website, and that's because we were sending it to... the office of the Surgeon General was one of the people receiving the emails. And we knew that any time you send an email to a .gov email address in the executive branch, that email is subject to FOIA, which means that no matter what we did, it was never going to be a secret, even if we wanted it to be. You could simply FOIA the government and obtain the records. So we just put them

up on our blog because we thought that if we put them up on our blog, people could just see them for themselves. But the trope of the secret documents and these censored narratives is so compelling that people desperately want to believe it was true. And that's because the people who write these things about us sell this serialized alternate reality for $9.99 a month to their Substack subscribers. And that's what's happening. And there's a similar question, but I think it introduces an interesting wrinkle here. How did the Stanford Internet Observatory get around the research

rules regarding human subjects? Your subjects were people in the public square platforms who your group deprived of their First Amendment rights. I mean, again. I don't understand how. So there's so many layers there. Well. First, we aren’t the government, right? Yeah. Second, we're not the platforms. So if you take the strictest interpretation of censorship, which is the government deciding that it is going to suppress content for some sort of viewpoint based silencing, which I think is a bad thing to be clear, we weren't the government. Second, if you use an expansive definition of censorship,

meaning a private company has decided to moderate something, then again, we are not a private company. We made no moderation decisions. Whatever Twitter or Facebook or anybody else labeled, took down or throttled was not in our power to do so. This idea that we censored anything ascribes to us a power that we didn't have, and a motivation that we didn't have. And I don't know what I can say beyond that on that front. And I think it also introduces another dimension which you point out and it's kind of different from what has come before. Free

speech is not free reach, as you say. Like you can say things that... the man who originated that phrase is right there in front of me. Oh, yeah. Way to go! It's a good line. You know, the... Well, I mean, even beyond that, the idea of human subjects research means that we would have, like, been engaged. It's got a kind of Tuskegee experiment vibe. But it's the kind of thing you see on the internet where people, like, seize upon these phrases. Studying narratives, using public data on the internet, meaning just reading public data on

the internet is not human subjects research, no matter how much people who want to spin things in a certain way might like it to be. It's just not, right? Stanford IRB will tell you that as well. Okay. Well, I think we're now in that part of our program. Let's talk about Matt Taibbi, Michael Shellenberger, Congressman Jim Jordan, and Congressman Dan Bishop and that whole dynamic because... Great guys. Better back up for the home audience and talk about all this. Matt Taibbi, well known journalist, was brought in by Elon Musk. And I think that's kind of

yeah, yeah. Stories. Sure. So in, God, that was December of 2022. This project called The Twitter Files begins to happen. And at first I thought, like, oh, this could be interesting, right? Because as a person who studies social media, I was like, oh, how are the platforms moderating? You know, we only can see what's happening from our point of view. And we keep track when we, you know, when we see things that seem to violate a policy. One thing also let me add with like the

Election Integrity Partnership, as we were looking at election narratives, anytime something both violated a platform policy and we thought, okay, this rises to the level where it might be worth tagging it for them, we kept track of whether or not they actioned it, and 65% of the time they did not. And about 20% of the time, it got a label and I think 10% of the time it came down. Maybe 13% of the time it came down. And so what was very interesting to us about that was that even things that seem to clearly violate

their policies actually stayed up. They did nothing. And so on. Okay. Interesting. And this, I think, is one of the reasons why people feel like moderation is so unfair, because some people get actioned and some people do not, and then you get a lot of debate about why did my tweet come down when your tweet stayed up? And this creates a lot of resentment. So what happens with the Twitter Files is, these writers are given access to these internal Twitter emails. Again, that's what I was referencing, like the 22 million tweets, like, where are

they? So these writers are sitting there and they have access to all of this data. And so they begin to write these stories about platform moderation. So they discover that, the aforementioned, you know, my esteemed colleague at Stanford, Doctor Jay Bhattacharya, they discover that he has been put on something called a trends blacklist. This is actually an interesting fact, right? Why? I don't know, but he was. And so you start to see these, like, glimpses into moderation. But unfortunately, they're also very cherry picked research projects. They're out there searching for things like, what did the Biden

campaign demand come down? Or how was Hunter Biden's laptop censored? And in these sorts of very specific, kind of angles on moderation, and they never really write the story of anything systematic. Right? They're not saying this person is on a trends blacklist. Okay, that's a fascinating anecdote. But like, how many people are on that trends blacklist? What percentage of users are on the trends blacklist? How are they geographically distributed? Are they ideologically tagged? What does that co-occur with? Like the kinds of things that you would look at if you were a scientist with access to

that? Or you mean context? Yeah, context, exactly. Which is tough. Where like my crazy idea that, you know, that maybe you should contextualize things. But so what winds up happening is, you know, they're catering to a very particular audience who believes that Twitter moderation has been biased against them. They write these stories, and these stories go viral. And this becomes a launching pad. Taibbi gets, I think, a million followers, a million new Twitter followers in like two days after he puts out the first Twitter Files, and one of the others, Michael Shellenberger,

launches his newsletter. I think Bari Weiss rebrands her newsletter. She was sort of quickly off the project, just to be clear, she did kind of one Twitter Files and then, I think she didn't really feel very comfortable with some of the terms there. And she got in an argument with Elon about something. And so she got kind of persona non grata, I think. And then the rest of them, though, begin to write these stories. And what winds up happening is Jim Jordan, November of 2022, January of 2023, he gets gavel power. He launches this

committee on the Weaponization of Government. And so what you see him do is he invites in the Twitter Files researchers, writers, whatever, to come in and to tell his committee about how conservatives have been viciously censored by Twitter. Again, they have no systematic evidence whatsoever. But they have a couple of these anecdotes and they wind up coming in. And one of the things that they talk about is the 22 million censored tweets. And the thing that's fascinating is that they don't ever reference any of that in their own research. They're not talking about these are

the things we saw at Twitter that corroborate this. Instead, what they're doing is they say, like, this guy over here wrote it on a blog. This guy from the so-called Foundation for Freedom Online wrote that Stanford censored 22 million tweets. And so they regurgitate it under oath. They make that statement under oath. They write it in written testimony, but they're not actually citing it to anything that they themselves have had access to. They're just pointing to yet another right wing blog that said it. But it doesn't matter, because now it's in the Congressional Record, and

two days later we get an email from Jim Jordan, a letter requesting all of our emails communicating with the executive branch of the United States and with the tech companies, going back, I think to 2015 was the original request. We point out that SIO didn't exist until 2019. They re-scope the letter, and then they get mad because some of the things that they're requesting are emails and documentation from students. So Stanford begins to negotiate around what they're going to turn over. This is a thing that happens in a subpoena process, sorry. And in

this like this letter process. But then they, you know, kind of very like with significant outrage, escalated to a subpoena. And then the subpoena process has begun. And you say in the book they then use the subpoenaed information in a rather novel way. Yeah. So in a novel way, we wound up getting sued by Stephen Miller. He of the hahahahahahahaha. So we get sued by America First Legal, which sues us on behalf of the Gateway Pundit, the sort of right wing, you know, kind of blog that actually is now declared

bankrupt, recently departed, declared bankruptcy because it wrote a bunch of election lies. It wrote a bunch of lies about two election workers, Ruby Freeman and Shaye Moss, claiming that they had done, I'm trying to remember the specifics, either brought in ballots or gotten rid of ballots. But they alleged that they somehow interfered with the vote count. Donald Trump tweeted this. Rudy Giuliani went after them also, and they had to flee their homes because of death threats and things like this. So when we did our election research, Gateway Pundit was one of the, kind of

repeat, you know, repeat spreaders who is very remarkably effective at making things that did not appear to be true go viral. And so he had appeared in some of our writing. But there was also this anti-vaccine activist that we just never heard of. So all of a sudden we find out we're sued on Breitbart by somebody we've never heard of and Stephen Miller's firm is conducting this lawsuit. And I can't talk about the pending litigation, but what winds up happening is that Jim Jordan takes the material that we've turned over under

subpoena and gives some of it to Stephen Miller. So normally, the way a court case works is your lawyers negotiate, you know, what you're going to turn over and what's going to be eligible for discovery and who's going to get deposed and all that other stuff. But in this case, because we've turned over material related to our research to Jim Jordan under compulsion, congressional subpoena, he just goes and gives it to him. And that is a remarkably unprecedented state of affairs. Like that is an astonishing breach of norms and procedure and everything

else. And I think a lot of people, including us, were actually... there's not many things that surprise me. I was like, whatever we turn over is going to be leaked. Whatever we turn over is going to be fodder for the conspiracy theory media machine. But to take material obtained under subpoena and give it to the plaintiff's attorney in a lawsuit is really something else. Yes. And it becomes this self-sustaining loop of outrage. And as I was saying, you know, the new system plays jujitsu with the old system, which I think that's a defensible example of

that happening. And it also takes us to Stanford, because Stanford has now not renewed your contract. Kind of like getting fired, I don't know. Quiet fired. Yeah. And they're disbanding the Internet Observatory. It's all gone now. They don't know if they're disbanding the Internet Observatory. That's one of the interesting things about this. Well, first they did, and then they didn’t. Right. The thing that's interesting about... So several of us, our contracts weren't renewed. This is, you know, in the Washington Post, a matter of public record. I think that they don't know where they want

to be in this environment. And that is because the lawfare runs up costs, it runs up financial costs. Your students get targeted. Like I said, the Twitter Files boys, who got emails that, you know, this relatively innocuous stuff that we sent to Twitter, just released them on Twitter, which is fine if you redact them. We're not, you know, nobody is complaining about the substance. Like, these emails are pretty innocuous, but they didn't redact them and they framed them in weird ways. Like they just cut certain emails in half, again, removing all of the context, and

then highlighted certain sentences that they thought would outrage their audiences. And they left the names of undergraduate students who had sent the emails up in the field. So all of a sudden, we had to deal with documents like 20 year olds getting doxed. I mean, this is actually this is actually like it's inexcusable. Really. Like it's gross. There's no nice way to put it. Like going after kids is just, you know, it's obscene. And that’s what they did. And the institution acted like it was 1994, and soon the internet would be here. They acted like

a traditional institution. And that was, I think, the thing that surprised me most, was that the institution did not understand the rules of the game in the modern era. They did not understand, in my opinion, what was going to happen. And so when I made the point that everything we turned over was going to be leaked, I didn't anticipate it being given to Stephen Miller. That was, you know, new to me. But I was like, the rest of the internet is going to get it, you know, that was where

again, my, my point in expressing that was, you should redact the names of your students. You should protect your students and things like, you know, and because it was ultimately student run projects, like I said, it was students who were pulling together the material that went into these briefings, students who were engaging with the platforms, because, again, it was an academic research project. Most people do not deputize 20 year olds to run censorship cabals. Yeah. The term used in the book is the point was punishment, not oversight. And I think you probably put that to

the lawyers at Stanford. How did they react when you said that? You know, I, I felt like it was important to lay out the rules of the game as I saw them. And. Yeah, so I did. But how did they view it when you said it? I think they thought that I was, well, no, I know, they thought that I was, you know, just sort of overreacting, that this was going to be just like any other investigation, right? Like an investigation in the 90s. To put it as bluntly as I can, has a major

American institution with an endowment of $36.4 billion been cowed by this? In your personal view? Yes. Yeah. And how do you feel about that? Disappointed. Woof. Fair enough. And that's actually led me to another question. What has years of looking at this and living with this and dealing with this done to you? What is this like? You know, I feel like since at all for me, the first time I got doxed was in 2015. Right. So when I was doing the vaccine stuff, and so I feel like I have a pretty like I have a

very thick skin at this point. Sure. There's very little that is said on the internet about me that bothers me. I feel like I have, you know, I wrote this article about being turned into CIA Renee and my husband and I joke around about it, how I should, like, get it on a mug and just, you know, wear it on a shirt at this point. So I think, you know, we kind of roll with the punches, and that aspect of it doesn't bother me. Where I do feel frustrated every now and then is the realization that

there will be people who will believe things about me, and no amount of me denying them or offering the counterpoint or explaining what we did or didn't do, is going to sway them. They want to believe the thing. Is it just so hard for people to change their minds? Is that what's going on here? I, I feel like it has gotten that way. This is one of the things where I feel like the online crowds and, you know, I talk about this in the book tie to the social science research, the, you are deeply, you

know, you become deeply entrenched in that identity. And one of the things that you see or hear from people sometimes who transition, you know, like kind of leave, leave QAnon behind, in particular, is, You see, a lot of these stories is they just say, like, I started to not believe it, but these were my friends, and I had alienated all of my other friends long ago, and my family thought I was crazy. So these were the people that I turned to for support. And I think in Covid in particular, you did see a lot of,

people who are really looking for connection. They wanted to find a sense of belonging and, you know, that irony. I know in some of my, like, DM groups that were ideologically diverse that survived election 2020, like fell to Covid, actually, and there were a lot of these, like the rifts just became, kind of insurmountable. And I think I think that that happened for a lot of people during Covid, actually. Do you find it hard to trust people in things now? Somebody calls you up and says, I'm a journalist. Oh, I have a very healthy skepticism.

I'm at this point now, one thing that, the thing that I have found the weirdest part of my job or the last, you know, five years at Stanford, but ten years since I've been thinking about this stuff is, I feel like at this point I have, like, almost like a tape running in my head on a slight delay where as I talk, I think, what is the absolute worst interpretation of the sentence I am about to utter? And do I want to say that? And I did want to ask, how are the conspiracy theorists

treating your removal from Stanford? Is this a victory? Oh, I'm sure there is this proof that you're really in deep now. She doesn't even need money. She's controlling. So many things. Well, I mean, obviously they feel like they got their scalp right. Jim Jordan tweeted about how excited he was about the whole thing. Actually, I think this is where I wonder sometimes, like when you're asked, you know, I'm not very often upset about myself. I do wonder, how do you jolt people out of complacency, though? That's the thing where I'm like, you know how long

we have to scream about it? Where Jim Jordan's tweet was like, we exercised... I think it was House GOP was the account that sent it out. But we exercised robust oversight over Stanford, either the Internet Observatory or the University. And I felt like that should be absolutely horrifying to anybody who actually cares about the First Amendment, ironically. That is an American congressman, the House GOP, more than one, saying we effectively got an academic research center that did First Amendment protected research shut down, victory for free speech. That's Orwellian, in fact. I mean, it's absurd. And

I was like, what is it going to take to make Stanford or whomever else realize that that is actually a screaming red flag? I need to remind you, this is a family channel. Sorry. I'll take a question from the audience. Hit the beep button on that. I'm not sure if you have all the answers, but how do we balance free speech and conspiracies? Should we update the clear and present danger test established by Brandenburg versus Ohio 1968 or do we need something entirely different for online? It's an interesting question because I think, you know,

people have written very long books. Many, many, many, particularly First Amendment professors have written long books about how does the First Amendment function in this speech ecosystem. I am not a First Amendment scholar. My approach to it is more thinking about how do the incentives of the system we've created get us to this point, right? And so there's the financial incentives and, you know, the dynamics that lead to the rise of the influencer algorithm crowd system. But where I keep coming back to, maybe it's because I kind of came out of tech, is there are

ways to produce algorithms and, you know, algorithmic curation in particular that doesn't reward the most vitriolic or the most, you know, sensational or the worst. But that rewards... bridging algorithms is the phrase in kind of academic research right now, where what they're trying to do is say, like, we can have disagreement, disagreement's very healthy. You can believe whatever conspiracy theory you want. We can counter speak against it. But how do you get to a point where that counter speech is even seen? Right. How do you get to the point where you're having the dialog with

somebody instead of you're in your kind of feed, where you're only going to see things you agree with? And I'm in my feed where I'm only going to see things I agree with, and the only time we're ever going to encounter each other is when we're fighting about something. And this sort of, you know, clashes happen. So where you see, like bridging algorithms, things come in is can you level up people who are expressing a viewpoint about a hot button issue in a way that is, you know, they're speaking with conviction, but they're doing

it in a way that's not immediately going to alienate or spark fighting. And this is the kind of thing where, you know, I live in a pretty politically diverse area and have many neighbors, you know, who, you know, kind of a full spectrum of, the Don't Tread on Me license plates to the, you know, the sort of heart license plates. And what I think is so interesting about it is you can have disagreements with your neighbors because you're approaching each other from a position of, like, we're still going to see each other

at the barbecue, right? We're still going to see each other at the school, you know, school playground. And so when we do have disagreements about whatever and we talk politics, it's at least expressed in a like, I don't hate you, I don't agree with you, but I don't hate you. And so can you build that kind of curatorial system so that what people are seeing is not the content that puts them at loggerheads, but instead the content that surfaces those disputes, those disagreements, but does it in a way that's not, you

know. Yeah. I mean, not to keep... immediately hostile. Not to give the sense that this is like unremittingly dark, because you do propose solutions: more context, regulation of sorts, better user control so people can choose to get a healthier input, decentralized social media, and also people just getting better at spotting this kind of thing. Kind of learning media literacy as a civics exercise, almost. I think there was some, we talked about some of the stuff from the history in the book. One of the things that's really interesting is, there's this guy named Father

Coughlin, and he's, you know, kind of descending into fascism. He's got 30 million listeners on the radio, and all of a sudden people are trying to figure out, what do we do about Father Coughlin? And he's a very, very strident critic of Roosevelt. Roosevelt doesn't want to do anything. He doesn't want to be seen as impinging on the free speech of this person. The radio broadcasters are a little bit horrified. They begin to create, kind of like fact checkers, on-the-spot fact checkers. So Coughlin finishes his speech, and then somebody says, actually, Father Coughlin

misled you about the following things and sort of, you know, they lay them out, then they try to make him pre-vet his speeches. You know, you see all of these different things that the broadcasters do. Eventually they do kick him off the airwaves. His followers protest, very similar to today. But one of the things that happens is the rise of this literacy effort that does not sit there and try to stamp out Father Coughlin. Like they're not sitting there playing whac-a-mole with each individual thing he says in his radio broadcasts. They are not fact checkers.

Most decidedly not. What they're doing instead is teaching people how to recognize certain types of rhetoric. And they're saying, hey, when you hear, like, glittering generalities or, you know, ways in which generalities are presented as if they are absolute truth or, you know, the answer to the question, you know, the begging the question. Right? Why did you censor all those people? Why did you stop, you know, when did you stop beating your wife? You know, these sorts of things, right? Teaching people to recognize the rhetoric for what it is so that rather than

litigating each individual fact, you're instead saying, here is how propaganda works. And this effort actually was called the Institute for Propaganda Analysis, and it produced pamphlets for like middle and high schools and then for like the community bowling league type places like in the cracker littler it said like four in the Cracker Barrel kind of you can see the microfilm archives of this. They're up in the New York Public Library. And to add a wrinkle to that, there are roles tech could play. One thing I think you toyed with is... When some things go viral,

what's wrong with slowing them down for a couple of minutes? So that's it. That idea actually comes from finance, the idea of the circuit breaker. And one of the things that happens in quant finance is, if something, you know, kind of breaks past a certain threshold or there's some news coming out, they'll kind of halt the trading in a stock to give people time to digest information before trading resumes. One of the reasons they do this is the idea that slowing things down, just having that momentary pause can help people calibrate, decide what's happening, and kind

of ensure that the marketplace is functioning. It's to end panic. Yeah. And so people have broached this idea that this is something that you could do on social media as well, that actually rapid virality is not necessarily the best for people being in a reflective mindset. Tobias Rose-Stockwell wrote an entire book on actually this kind of idea of design, where what he says is, you know, there are little things that you can do. You're about to send out a tweet with, like, curse words and, you know, horrible speech in

it, like yelling at somebody. And then it pops up a little thing that just says, like, most people don't talk to people like that on this platform, okay? I don't want to be that guy. That's funny. Basically, yeah. You can click the yes button, just to be clear. You can like you can go ahead and send it on through. Right. But it just gives you that two seconds of like, are you sure you want to do this? Is this how you want to express yourself? And it's just to, you know, so this is the thing

where instead of moderating hate speech or harassment or whatever after the fact, after it's already come out, when you go and you try to police it, what you're doing instead is you're giving people this little nudge that just says, you sure you want to do it? And these are the kinds of things that, like, old Twitter actually used to study. I don't know if new Twitter is, but there were things like that. Or there was one that was really funny. If you're a writer, you, like, go, you share

your own article and it says, like, are you sure you want to retweet other people sharing your stuff? And it's like, are you sure you want to share this? You haven't read it yet. You know, because it knows that you haven't clicked on the link. And so it just wants to make sure that, you know, the point of that little... I always think it's, like, kind of cute, but the point of that little nudge is just to say again, are you sharing this based on some clickbait headline? Or is this a thing that you actually

want to put out as the... Yeah, as I used to say in the earliest days of this, you own your words. Yeah. And people have lost sight of that. You know, you really do own your words. I'm going to take the last question I had from the audience and tweak it just a bit. What is the reality of the traffic, followers, and likes of automated bots versus real people? Is it a high or low percentage? Does it matter for the outcomes wanted? And let me just add to that. What do you think the effect of AI

is going to be in the coming years? I was actually trying to think about how to bridge those two things. We have some work. I keep saying we. There was some. There is some. No, there still is. It will come out. And we've got, it's actually a bunch of stuff in the pipeline that I'm excited about. But one of the things that we have started to see is the presence of LLM-powered accounts on places like Twitter. This is a thing that we 100% knew was going to happen, and it absolutely is happening. LLM,

sorry, is large language model. So AI chat bots, basically. How does the AI chat bot generate content? And one of the things that we can see is every now and then it spits out an error message. And if you have a low quality bot, you'll see the error message will be the thing that will be tweeted. So it will say, "it's a violation of my policies to generate content related to," and then the thing it's been asked to respond to. Most of these accounts are replying to people. They put out original content. It's kind of

anodyne, a little bit weird. You know, you can kind of tell it's spam. So they're not very good at, you know, creating programs of content, but they do sit in the replies quite a bit, and you do see them replying particularly to famous people like Elon Musk or political influencers. And that's because they know that people read the comments under those people because they're, you know, oftentimes very interesting or heated. And so you will see the chat bots are kind of spinning content into the replies. Can we tell how many there are? No.

And that's because in May, Twitter revoked what was called the sort of firehose, the tools that researchers used for free to look at sort of systematic trends across the platform. And now it costs about $42,000 a month to do that. So many academic institutions don't do it anymore. But that was the model where you would have been able to answer that question. So I can't tell you how many. But as far as automated accounts, they're used basically for two things. One is spam. Overwhelmingly

spam. I think people think most of the bots on Twitter are there to talk about politics. They're not. They're there for spam and porn. And those are the two things that they're there for. The limited number that are there to talk about politics oftentimes are garbage. They do not get much pickup. But we do know that they're there. We know that there are hundreds of thousands of them. We know that Twitter used to take them down semi-regularly. It doesn't seem to be doing that quite so much anymore. Or if they are, they're not

disclosing. So it does seem that the problem has proliferated, but it's not necessarily, like, materially impacting political conversations. And AI? AI is... I'm trying to think how to say this. It takes the cost of creation to zero, which I think is why it's captivated a lot of people. It makes it a lot easier to run propaganda campaigns. It makes it a lot easier to run advertising campaigns. And so anybody can use it. And the challenge, though, the thing that kind of keeps the brakes on, is that even though you can use it

to create propaganda, political content, whatever, you still have to get that content distributed. So like I said, there are these chat bots that are out there tweeting political things in the replies, but they're not getting any pickup because they're not integrated into communities and networks. They're not part of the crowd. They're not members of the crowd. They're on the outside. Is that going to change? I think the answer is yes. I think within 2 to 3 years, definitely yes. So I think you'll see a lot of research on proof of person and trying to

prove someone is real or human. But I think right now the real risk of AI in election 2024 would be a fake leaked audio. And that, I think, is going to be the vector by which I would think that you'd run a real political manipulation campaign. Well, we’re at time, but I do want to close with this question. From the start, you know, we proclaimed that creating narratives was no longer the purview of elites. And I was thinking back, reading this, to the days that we'd say we'd have citizen journalists and

we'd have grassroots experts, and it would create a stronger and healthier democracy. And instead, right now, it looks like the narrative center does not hold. Did we make the mistake of thinking it'll be like the old world, only better, and instead the new world played jujitsu with the old world, like, as we've seen in the past? Were, you know, the people who proclaimed this naive, or is this still potentially true? This debate goes back to the 1920s, and, you know, I mentioned Walter Lippmann and Edward Bernays. There's a debate that happens in the

1920s, the Lippmann-Dewey debate, right? Where Bernays and Lippmann are pointing out, like, propaganda is the obligation of the government. And Dewey says, absolutely not. No, it's not, not in any way. Right. It is the mission of the free press to inform the people, not the government at all. And so you see this debate playing out, even, you know, a century ago at this point. And what's interesting is you do see the sort of concessions in which he recognizes that this is an inherently idealistic point of view and that that press has not been

born yet. Right. Because yellow journalism is, of course, a thing. You know, this idea that the press is, you know, sanctimonious truth tellers that, you know, are immune to anything bad is not reality in the 1920s either. But you do see this debate going back and forth. And I think where we are today is in that same place. There are many, many, many excellent Substacks and newsletters that I love reading because it's interesting commentators who don't write for major publications. They're just interesting people with political viewpoints, many of which I disagree with, but

they're really good writers. And you see that sort of rise to the top, and you have an opportunity to support them very directly. The sort of patronage model is back. So I think that the potential is there. It's just that right now the incentives are much more to cater your content to a particularly angry niche, because that's how you continue to support yourself. And so I am curious to see if there is an opportunity to have these kind of interesting, compelling writers, you know, join forces, which of course, would be like a newspaper. But, you

know, maybe we see some new iteration of that, maybe rise from the ashes. Revenge of the olds, and we'll see. Okay. Well, we are at time. My great thanks. Our thanks as the Commonwealth Club to Renee DiResta, author of Invisible Rulers: The People Who Turn Lies into Reality. We encourage you, everyone here, to purchase a copy of Renee's book here or at your local bookstore, wherever. If you want to continue to support the Commonwealth Club's efforts in making virtual and in-person programming possible, please visit commonwealthclub.org. I'm Quentin Hardy. Thank you and take care

everyone.