so this was by far one of the most surprising a releases this year and of course that's pretty funny because the year has just started now I'm referring to deep seek R1 so you can see they tweeted deep seek R1 is here and this is the model that is truly surprising because the performance is on par with open AI 01 but that is not the surprising part the surprising part about this is that this is actually a fully open-source model that is available to basically everyone for free on their website and trust me the research

surrounding this and how effective this model is is truly astounding so let's dive into exactly why the internet is actually raving about this because this is something that I would say caught opening eye by surprise and truly does show where the AI industry is headed as a whole in terms of how quickly these models are getting better so this bar chart diagram that you can all see right here basically shows you why this model has been all over the internet as of recently this is the Deep seek R1 model which is a direct direct competitor

to open ai's 01 model both of these models are based around system 2 Thinking which is essentially where these models think for longer and it's a New Concept that we've only recently got to explore but as you can all see this is something that is yielding outstanding results across the board for any models that are developed in this way and now most surprisingly it's incredible because the current available models if we're looking for whichever models are currently the best in terms terms of performance most people would say it's open AI but now somehow China has

managed to somewhat outshine the master in AI or the leaders in innovators by releasing such a powerful system you can see here that the Deep seek R1 in this striped purple/blue bar is right on par with opening eyes 01 and of course exceeds the opening eyes 01 mini in a variety of different benchmarks now you have to understand that a lot of these benchmarks are really really difficult and I would even argue that some of these are potentially completed considering that some of these benchmarks have maybe a 2 to 5% error rate so it's quite

likely that these models are remarkably effective at these tasks in way that people can't understand and this is why I think the industry is starting to realize that if we get these kinds of performance gains from AI models and we do know that now the cycle is speeding up in terms of capabilities where are we going to be in 3 years perhaps now this was pretty crazy because a lot of people who are excited about R1 like I am too is because not only is this on par with open eyes 01 model this is something

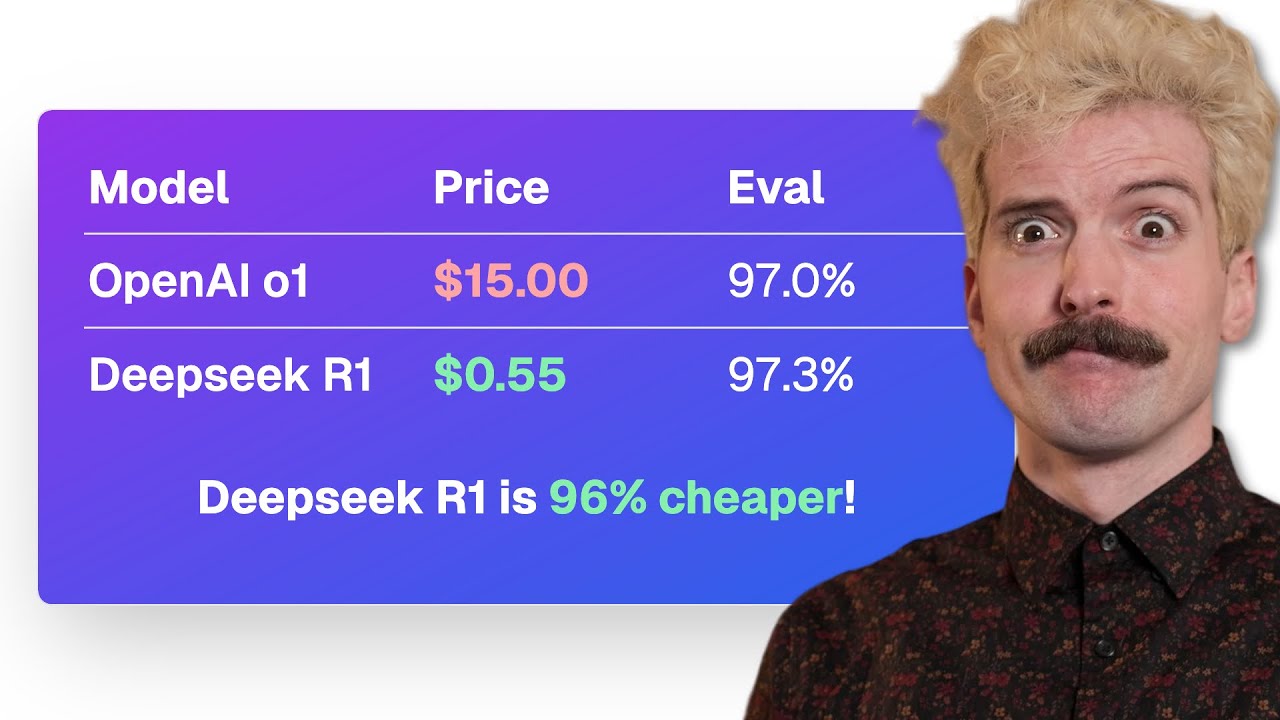

that is remarkably cheap which now means that developers who are trying to maybe build a product or just using this for testing can access a state-of-the-art system for pennies on the dollar so this is going to be something that is gamechanging for the entire industry because in a variety of use cases we know that these models are extraordinarily expensive now this part right here is what I think is also a remarkable piece of innovation from this team and this is something that most people skipped over whilst everyone was focused on the R1 /01 debate in

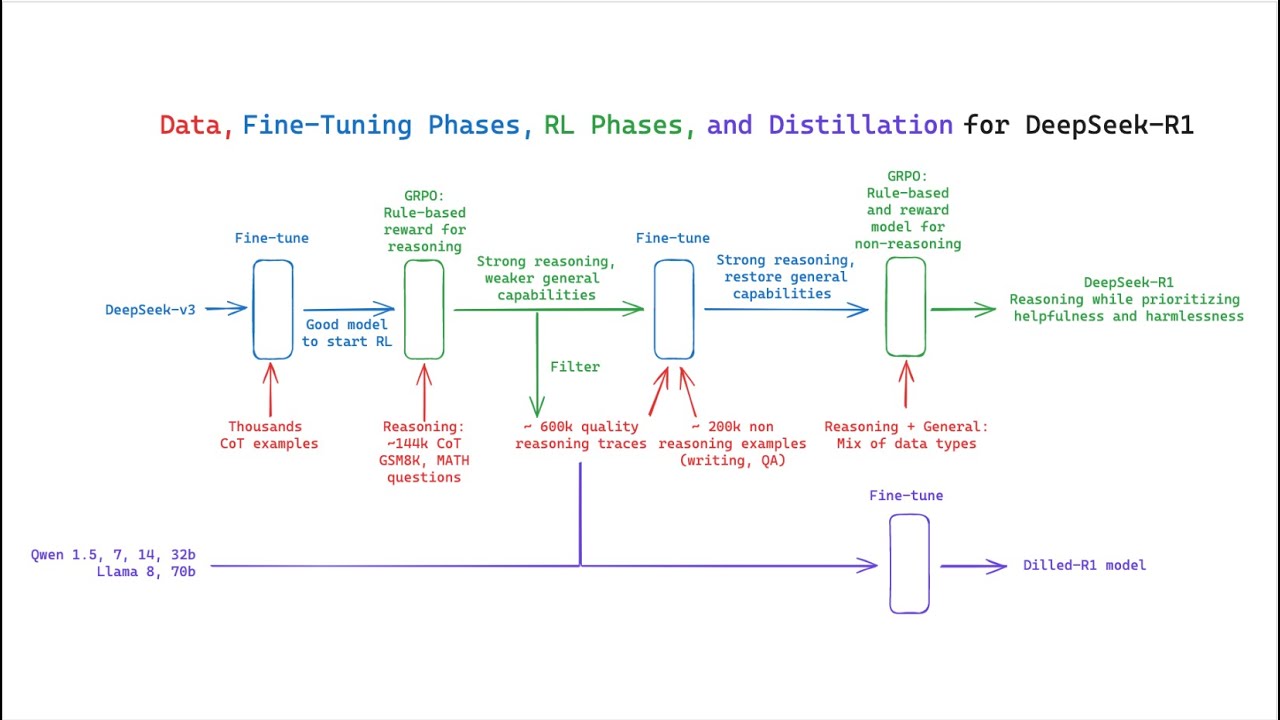

the research paper they actually give us a glance at how the distilled models perform so if you don't know model distillation is where you have a teacher model and you distill that knowledge down into smaller models making them more effective and smarter now this is of course done due to efficiency you can see right here that you essentially get the knowledge of a super smart model into a much smaller model and because of that you essentially Save A Lot on time and of course a lot on your inference now the crazy thing about this is

that without distillation we wouldn't be able to achieve relative results that would achieve that level of performance at that size so distillation is something that is remarkably effective so what we're seeing here is these Frontier models like GPT 40 claw 3.5 Sonet perform well on these tests but then surprisingly with seeing the R1 model which is the new model from Deep seek but that model being distilled down into a 70b Model A 32b model and Al llama 8B model and even surpassing these models in certain use cases and I think that is incredible it's like

imagine getting the level of claw 3.5 sonnet but in a 70b model size that is going to be an absolute GameChanger for many individuals of course I always recommend you to test these models on whatever specific bench Benchmark you're going to be using them on some people might use it for Creative Rising some people may use it for reasoning about math some may use it for reasoning about science or some may use it for coding whatever it is definitely make sure you thoroughly test the models before running away with excitement as sometimes certain models tend

to have certain characteristics if you will say that are just a lot better than other models but as I was saying this is something that is absolutely remarkable so we can get truly truly effective models at a much smaller size that have the reason ability of larger models and I think this is going to be a trend for the future so that who knows maybe in the future we're going to have ridiculously smart AI models that are on our side we can see that the GPT 40 on these benchmarks it achieves like around a 70

score and a 50 score on the GP QA which is some of the hardest test but these but these distilled models are achieving comparative results at a much smaller size which is truly incredible when we realize what's going on here and we do know that as time goes on this is quite likely to improve now this wasn't the only remarkable thing that existed in this paper that most people were surprised about one of the most remarkable things that they actually talk about is the fact that there is self Evolution and the emergence of sophisticated behaviors

as the test time computation increases as you know one of the things that these models do is they will think for a longer period of time and the longer they think the better the responses get so you can see right here that it says behaviors such as reflection where the model revisits and re-evaluates it pre its previous steps and the exploration of alternative approaches to problem solving arise spontaneously and what's interesting is that these behaviors are not explicitly programmed but instead emerge as a result of the model's interaction with the reinforcement learning environment and this

spontaneous development significantly enhances the model's reasoning capabilities enabling it to tackle more challenging tasks with greater efficiency and accuracy essentially what they're stating here is that because the model is thinking for so long often times during those reasoning thought process it might come up with inter ways to solve the problem and this is something that is emergent in this system because they didn't explicitly train it to do that so this is actually an example of this in practice so you can see right here the model is reasoning through this math equation and it's reasoning through

this problem by thinking about how it can solve it and the bit that they've highlighted here you can see it says wait wait wait that's an aha moment I can actually flag here and this is something that is super surprising because this is where the model learns to rethink using an anthropomorphic tone and one of the things that you know most people have seen about this deep seek R1 model is that it actually thinks somewhat like a human it's weird you're essentially hearing the internal thoughts of an AI model that's able to reason through certain

problems and surprisingly it managed to say wait wait wait aha I can actually flag this here and solve the problem better I'm going to show you guys another example of this which is just absolutely incredible this is where we have one user on Twitter who is actually sharing the internal thought process when they prompted the model to think of a random number and you can see if you actually read this in detail the model somewhat talks like a human like if I was reading this I would say yep that's a human that's thinking about a

random number you can see it says the user wants me to think of a number between 1 and 10,000 but not tell them they mentioned not to overthink it so maybe just pick a random number quickly let me see one of my numbers is 777 but hey that might be too obvious maybe 1 2 3 4 wait that's too sequential maybe a number that's common but not too predictable 42 that's from hitch kiker's Guide to the Galaxy alternatively 2004 nope 18765 wait that's from a song and the crazy thing about this is that like the

crazy thing is that we've never been able to see the internal reasoning thoughts from open ai's model they've always wanted to keep that hidden many people have speculated because of course they're trying to protect their methods of reaching Advanced reasoning from their competitors which is completely understood but what if the reason is the fact that a lot of the time these models are thinking in ways which are extraordinarily human that would make you anthropized the model which basically just means we're going to start to think that these things are truly human I mean that is

something that's always up for debate the Consciousness stuff but to see the internal thoughts is super fast even for this example right here it says hey want to be a man en tized give me a single word answer and you can see it actually talks about how hm I first need to understand what this actually means and then it says hm given that I'm an AI I actually don't have any personal desires he says if I say yes it might support this idea and it's just something that I think most people should start to realize

because I think it's going to be a heated debate as these systems get more and more intelligent and the reason why like I said before most people are bullish about this kind of technology is because as they say that this moment is not an aha moment just for the model but also for the researchers observing Its Behavior it underscores the power and beauty of reinforcement learning because rather than explicitly teaching the model on how to solve a problem you simply provide it with the right incentives and then it autonomously develops Advanced problem solving strategies and

this aha mement serves as a powerful reminder of the potential reinforcement learning to unlock new levels of intelligence in AI systems Paving the way for more autonomous and adaptive models in the future if you aren't familiar with reinforcement learning I may include a short snippet of what exactly that means but reinforcement learning is basically where you reinforce the good behaviors and over time when it's constrained to an environment or a set of rules these emerging capabilities spawn in and usually they are basically magic in the sense that you couldn't have predicted them before and they

usually allow the AI to do something crazy now if you want to take a look at the full R1 evaluation you can see right here how the model looks in terms of comparisons to other models like claw 3.5 Sonet gbt 40 the Deep seek V3 base model that they released before 01 mini and 01 and we can see that this model is definitely up there in terms of the capabilities again if we look at the distilled model evaluation we can see that these models also do perform exceedingly well on a variety of different benchmarks now

the craziest thing about this all is that someone asked on Twitter how is deep seek going to make money but someone actually responded deep seeks holding is a Quant company many years ago you know these were just super smart guys with top math backgrounds and they happen to own a lot of GPU sltrading equipment for mining purposes and deep seek is actually their side project for squeezing those gpus so I think this is something that's absolutely incredible this company's side project has managed to catch up to open AI so I'm actually wondering how much longer

their Moe May last for overall it seems to be an exciting time to be in the AI industry as there are non-stop updates with regards to how smart these models are