[ MUSIC ] Mark Brown: I'm Mark Brown. I'm with the Cosmos DB team. And, here, welcome to BRK117.

We're going to hear from Toyota and hear them talk about how they built a durable multi-agent AI system at Toyota to help them design and build cars. I think you're going to love it. There's four people up here on stage today.

I'm just going to be here to introduce folks. We've got Kenji Onishi from Toyota. He's going to talk about our business challenges here.

And then Kosuke Miyayaka -- sorry, sorry, Kosuke Miyasaka. I got your name wrong, I'm so sorry. He's from our App Innovation GBB Team.

He's going to talk about the system architecture that Toyota built and implemented there. We're going to bring Marco Casalaina on. He's Vice President for AI here at Microsoft.

He's going to talk about some great new stuff that Toyota is using and some new stuff that's coming. And then I'll wrap it up from the Cosmos DB Team, tell you about all the new features we've got coming here today. We'll have some time at the end for Q&A.

There's a microphone right in the middle of the room. So, if you have any questions at the end, please come on up and ask us some questions. So, I'd like to hand it over to Kenji.

Please come on up. [APPLAUSE] Okay [APPLAUSE] Kenji Onishi: Thank you. Okay.

[ APPLAUSE ] Okay, let's start. Thank you very much for inviting me. I would like to express my gratitude to everyone at Microsoft for their great support in building generative AI within our company.

Today, I will talk about Toyota challenges and building the O-Beya system. Our company is in the process of transforming from a car company to a mobility company. This is an introduction to our company.

For more details, please visit our website. We have 70,000 employees. We are considering the application of generative AI in our large manufacturing company.

Next, what's Powertrain? In our company, the three elements that make a car run are defined as powertrain, chassis and body. Among these, I belong to the Powertrain Development Department.

Let me introduce myself. My name is Kenji Onishi and I have been working at Toyota Motor Corporation since 2006. Initially, I was assigned in the development of hybrid vehicle control systems.

And, since 2014, I have shifted to the task of building development environments. I am considering how to apply a generative AI in our works. Next.

Okay. First, take a look at this. This is Toyota O-Beya multi-agent system we have developed.

[ MUSIC ] Speaker 1: Various experts are involved in car development. Toyota's strength lies in having a large number of specialists. On the other hand, because there are so many experts, we have to listen to many opinions when progressing with a single task.

For example, creating a car that performs well can lead to worse fuel efficiency and increased noise. To balance these factors, discussions are held among the experts. We have developed an AI system that simulates discussions among experts in a virtual environment allowing anyone to engage in development while conversing with many specialists.

[ MUSIC ] Now let's take a look at the new way of working that this system offers. [ MUSIC ] We call it O-Beya System, Toyota multi-agent AI system. [ MUSIC ] This system can automatically summon various expert AI agents based on the content of the questions.

There is a lineup of AI agents that can be summoned. [ MUSIC ] You can use the Automatic AI Agent Selection. Let's check what the customers are dissatisfied with.

Speaker 2: I want to know about the dissatisfaction of customers with our cars. [ MUSIC ] Speaker 1: This AI registers customer feedback. Speaker 2: I will ask the AI.

[ MUSIC ] Speaker 3: The engine is noisy when the accelerator is stepped on. Speaker 2: Hmmm, I want to get rid of the engine noise when the accelerator is stepped on. What is the factor in the voice of the customer?

[ MUSIC ] Speaker 3: There may be a muffled engine noise in the low RPM range. Speaker 2: There are many examples. Among them, the muffled sound of the engine seems to be applicable.

Which agents would be suitable to address muffled engine sounds? Speaker 1: You can get recommendations on which agents to choose. [ MUSIC ] They are the recommended based on the user's message.

Speaker 2: I have identified the agent to confirm with so I will ask the question. [ MUSIC ] Speaker 3: Resonance at certain rotational speeds can cause droning noise. Motor assist might help.

[ MUSIC ] Speaker 2: Okay, it seems like motor assist could be effective. What should be considered when enhancing motor assist? Speaker 3: Manage the temperature of the battery under high load.

Speaker 2: I agree, we should pay attention to the battery. I agree, we need to manage the battery condition. What should we do to assess the battery condition management?

Speaker 3: Monitor the battery temperature. Implement a system for cooling and heating. Speaker 2: I see.

These evaluations are indeed necessary. [ MUSIC ] Path to solutions identified. Using the information we've gathered, let's develop excellent cars.

[ MUSIC ] [ APPLAUSE ] Kenji Onishi: Thank you. [ MUSIC ] Oops, oops. Okay.

I will explain the reasons why we decided to develop this system. Fifteen percent, a number suddenly appears. Then this number represents the man hours required for investigation before carrying out tasks within the company.

Investigation refers to activities such as reviewing past documents and conducting interviews with knowledgeable individuals. These tasks begin with searching for people and gathering documents. In Powertrain, initially, cars only had engines and transmissions.

But they have now become hybrids and autonomous driving has been added. Furthermore, the transformation into a mobility company is advancing. The amount of research work has been expanding year by year.

And, currently, the workload has become so large that it is placing a significant burden on the engineers. The problems our company is facing, while we have good information, it is difficult to find because we are a large organization. The veterans in each field hold of a lot of information within the company.

I considered creating an AI that can replace veterans by developing specialized RAGs for each field. This is referred to as the General-Purpose RAG Environment. And we are processing building it.

Since the knowledge of the veterans in their heads, we are creating the system to extract that knowledge. First, we will register the knowledge held by the veterans as documentation. The AI we build will provide answers on the registered knowledge.

The veterans can review the answers given by the AI and make corrections if necessary. So, model answer collection. By accumulating high-quality data, it will develop into a useful AI.

This AI will become one of the expert AIs in multi-agent AI systems. We are widely introducing this system within our company. By doing so, our fellows are engaging in activities to create their own veteran AI.

We have created about 30 environments. As the veteran AIs have increased in this way, I suddenly recalled the following scenarios from our regular development work. These are often statements from the higher-ups like this: "We want to develop something called new system.

Let's bring together experts and do it all at once. " As a result, a place is created on one floor in our company where experts gather to carry out these tasks. In Toyota, we call "O-Beya activity.

" "O-Beya" means large meeting room. By doing this, the experts are close to each other so the work can be done very quickly. However, while this method is powerful, it cannot always be in implemented.

Naturally, it is difficult to gather experts all the time. We thought, "If we have expert AIs, could you connect those AIs to make this work easier to accomplish? " So, we are creating a system that allows us to collect veterans' knowledge at any time.

We have started considering the O-Beya system. This is a kind of system I envision. The engineer will pose a question to the AI saying, "I want to develop something like this.

" The AI calls upon another AI that seems capable of answering the question. The responses from each AI will be aggregated and returned to the engineer. With this O-Beya system, we achieve better development.

We are conducting trials with 800 powertrain developers. We have received comments through surveys indicating that the development process has become faster. Okay.

Transformation into a mobility company requires enhancing the development efficiency of everyone involved in the development process. I believe that utilizing generative AI, we can handle a vast amount of past knowledge, maximizing development efficiency. Together, generative AI and the engineers will work hand in hand to advance towards becoming a mobility company.

This concludes the demonstration and overview of the system. Mr Miyasaka from Microsoft will explain the system's structures. Mr Miyasaka, please go ahead with your explanation.

[ APPLAUSE ] Kosuke Miyasaka: Well, thank you, (non-English). My name is Kosuke Miyasaka, an Azure Innovation Specialist from Microsoft Japan. And I am leading the support for Toyota project.

Before diving into the detailed system architecture, let me first introduce the approach we take in this project. This project was divided into three major phases. In the initial first phase, we build the RAG and, second, we incorporate image data with the RAG.

And, finally, we developed the multi-agent configurations. In each phase, we collaborated closely with Toyota applying the latest technology as it became available. In each phase, sorry, for instance, we implemented GPT-4 as soon as it was generally available in the initial RAG phase.

And, for image integration, we developed GPT-4 Turbo with Vision the day after it's released. This (inaudible) adoption of new technology has been essential in accelerating the development of multi-agent configuration. How was this possible?

Toyota's application development environment, including the PaaS landing zone for generative AI, allowing us to instantly integrate new generative AI futures and past updates into our applications. This responsiveness is a crucial factor when building applications in generative AI given the frequent model updates. Now let's take a close look at the specific system architecture we have developed.

Since the GA of Azure Open-AI Services, various retrieval augmented generation patterns have advanced enabling our customers to experiment with multiple approaches for building generative AI applications, Toyota too. Toyota has been evaluating different RAG patterns to implementing agent-based solutions. In Toyota's multi-agent system, it's crucial to Toyota's implementation patterns to its specific agent requirement and data characteristics.

This slide presents a simplified overview of the RAG patterns. Toyota is currently exploring among them Toyota's activity validating four distinct patterns. The first pattern connects AI Search with SharePoint in straightforward RAG configuration.

This setup was selected as a fundamental RAG architecture given that much of Toyota's design data is already (inaudible) in SharePoint. The second pattern uses the AOAI extensions, Azure Functions, for a simple serverless data configuration. This approach proved effective for quickly deploying a serverless style system.

Both architectures offer easy development. The third patterns leverages Cosmos DB Vector Search designed to utilize total text-based design knowledge data within a RAG structure. This approach offers high performance at the advantage of automatic scaling even as the knowledge database grows.

The fourth pattern implements agents using the Functions Flex Consumption plan providing an effective solution for cost optimization as the number of agents scales. These two architectures are prioritized in the case where reliability and performance are crucial. Finally, the fifth pattern integrates Disk ANN features in Cosmos DB for agent implementation.

Although this hasn't been yet deployed in Toyota's multi-agent system, it is available as data volumes grow requiring high performance and configurations. Given the vast scale of Toyota design data, the ability to target between standard vector such to Disk ANN as needed is immensely beneficial. These are the core agent configurations within Toyota's O-Beya system.

Next, let's dive into how we are implementing these configurations in multi-agent framework. There are multiple ways to configure a multi-agent system, but Toyota focused on capability of Azure Durable Functions. The multi-agent system includes four distinct agents focused on batteries, motors, regulations and system control.

The prompts for each agent are defined and these prompts play a crucial role when the multi-agent system compiles its response. There are three main reasons for choosing Durable Functions. First, it's enabled parallel processing across agents enhancing performance.

Second, it supports complex workflows including error handling and (inaudible). The third, it allows easy monitoring by storing that state externally making it simpler to review. In terms of implementation, the fan-in/fan-out feature of Azure Durable Functions is used.

Durable Functions are (inaudible) by a user request activating four agents which are implemented as functions and launched in parallel with fan-out. Once all parallel processes are complete, fan-in collects the results, which are then compiled by generative AI to form the response. Each of the four agents has its own unique architecture.

To improve response accuracy, we improved the RAG architecture and prompt for each agent. Additionally, by storing conversation logs in Cosmos DB, the system can consider previous session data when generating responses for the next session. The main advantage of implementation is that agents can be easily added.

Since the agent operates asynchronously and in parallel, adding more agents has no negative impact on response time. This implementation has demonstrated high effectiveness in generative multi-agent responses. This is the overall architecture of this system.

As mentioned earlier, this application is deployed in Toyota's secure generative AI-native environment, each question and answer handled by the multi-agent system is logged in Cosmos DB allowing us to monitor for any inappropriate content. Now, let me explain the two key layers: The O'Beya system layers and agent API layers. The agent API layers follows a Microsoft architecture making it easy to use to deploy the agent APIs.

For example, as shown at the top of the slide, "Apps," a single application can easily utilize a specific agent as needed. In practice, we are creating a plugin for Toyota's design application we call a battery agent which is activated in use. Additionally, leveraging the Microsoft architecture enables us to easily add new APIs and agents.

Each agent can be modified to meet specific requirements. Moreover, by using Durable Functions, we can process tasks in parallel and minimalizing any performance impact. We refer to this architecture as the O'Beya system.

It's already in use and delivering significant benefit for Toyota. And we are looking forward to further development with using Azure's latest update. So, finally, at this Ignite event, we have seen exciting updates for Cosmos DB and Azure OpenAI Services.

Notably, Cosmos DB's Disk ANN and Azure OpenAI Services, Azure AI Agent functions seems especially applicable to our multi-agent system. Leveraging these capabilities, we plan to advance our collaborative work with Toyota to create a pioneer use case for manufacturing industries. Now, I'd like to invite the product's team to introduce these new updates.

First, let's hear from Marco, Azure AI Team. Welcome, Marco. Marco Casalaina: All right.

[APPLAUSE] Thank you. Kosuke Miyasaka: Thank you. Marco Casalaina: All right.

Well, hello, everybody. I am Marco Casalaina. I am VP Products of Azure AI and AI Futurist.

And I'm going to kind of roll it back a little bit because we've been talking about these agents, the agents that Toyota has built and has been using. What is an agent actually? I mean, really, fundamentally, what is it?

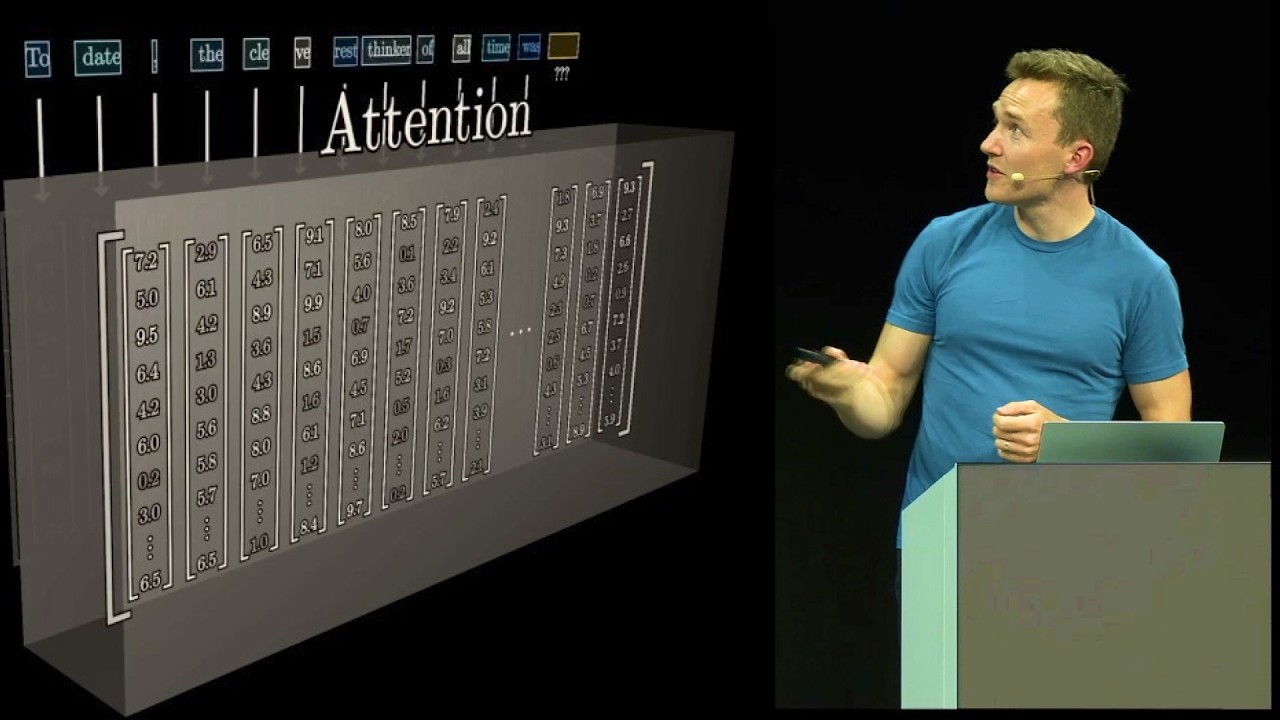

An agent is a framework that sits above one or more models that allows you to do complex and long-running operations and can take actions on your behalf. And how do these agents even work? Well, they really provide reasoning.

That is the key. That's the difference between what an agent is and what came before it, like robotic process automation, or search, or any of those kinds of things. Agents can do reasoning and it can do those reasonings to help you search for information, as Toyota is doing, or it can help you take actions.

Now, for those of you who missed my session yesterday, which was BRK102, you should probably check that out on YouTube because one of the things that I did there, and what we will do again tomorrow, by the way, in Theater C at 1:00 p. m. , so we have another agent session in the big conference area, we are making these agents take actions.

And, really, what differentiates the Azure AI Agent Service from just about all these other agent frameworks out there, because there are a million agent frameworks that exist today, but the differentiator is the integrations, the ability to integrate to sources of knowledge, as Toyota did, but also to actions. In this picture, this is one of the things that I did do yesterday in my session where I connected it to Outlook and I made it make bookings in my Outlook calendar. So, it's not just about looking stuff up, it's also about taking actions.

And there are all kinds of actions that I could make it take. I can make it do stuff in Dynamics. Here, I have a picture of where I'm making it do something in SAP or in Salesforce.

In all kinds of third-party systems, you can make these agents take action. That is where the rubber meets the road. And, now, Satya, in the keynote, he was talking about autonomous agents.

What do we mean by autonomous agents? What is an autonomous agent? Well, now, you think of AI as being kind of a chatbot nowadays.

Everybody thinks of ChatGPT, you type to it and it types to you. But an autonomous agent is really an agent that is triggered by some event in a system. And that event could be a business event in Dynamics, it could be that a record gets added to Salesforce, or something gets changed in SAP, or an email comes in.

There's all kinds of ways that you can trigger these agents. So, when you think about agents now, think past the chatbot and think about how you might trigger these things to wake the agent up to do something useful. Now, for somebody like Toyota, there are a number of steps that they have to go through to bring these agents to production.

Well, the first part of it is evaluation. I mean, think about even some of the things that they just showed. Imagine how much rigor they had to go through to evaluate that the results that these agents were giving to their users were correct.

So, evaluation is very key. And we have here at Ignite announced some new features for evaluation specifically. There is scalability.

So, at some point, when the agent moves beyond the prototype point, you want to get that to all of your users so you have to scale that out. And then you want to monitor it in real time as agents use it. Now, AI systems involve a different type of monitoring than traditional applications.

For traditional applications, you're monitoring latency and uptime, you're looking for 500 server errors and things like that. But, with an AI application, well, you still monitor for all those things also, but you're also monitoring for things like groundedness, is it speaking the truth, is it telling things correctly, is it working correctly and taking the right actions? You want to be able to evaluate that in bulk, as I said earlier, but also monitor for that in real time.

And, finally, there is security. You want to make sure that your agents don't give access to information that the user should not have access to, that the agents don't take actions that the user should not be able to take. These agents should be acting on behalf of the users and should have those same permissions.

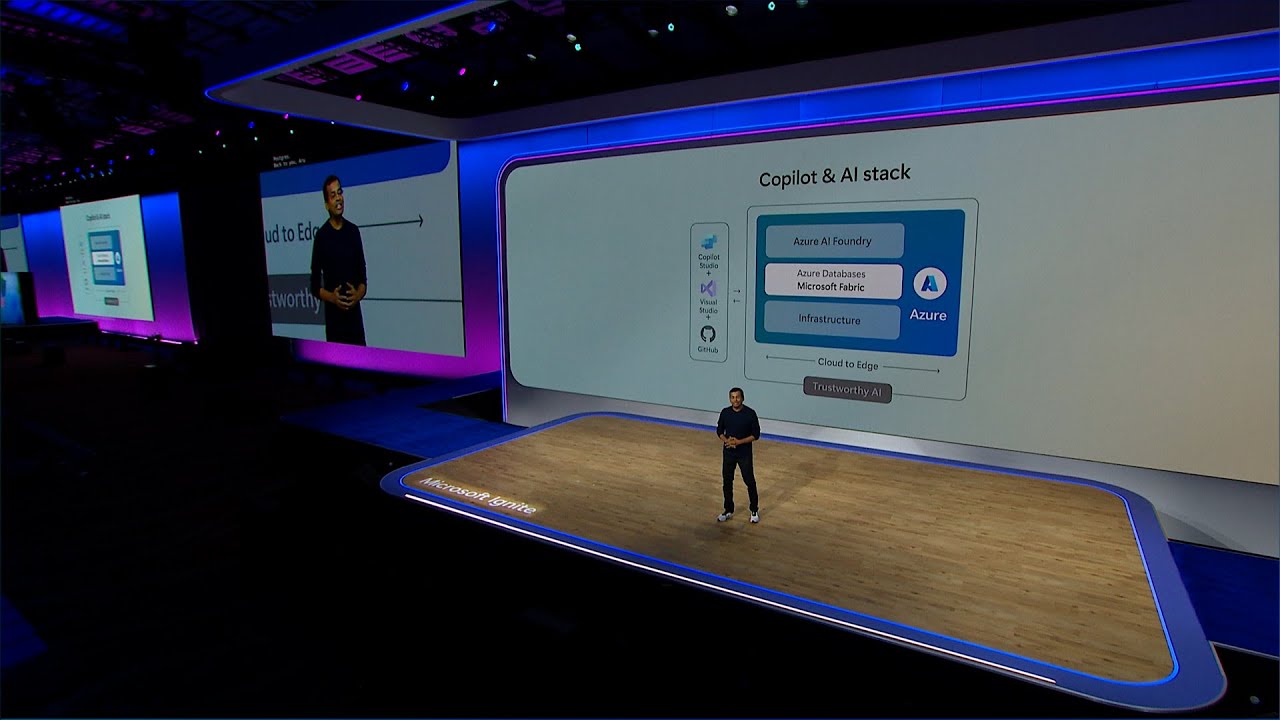

And, so, for all of these reasons, we have introduced the Azure AI Agent Service. This is part of the Azure AI Foundry that you've heard about here at Microsoft. And it supports that kind of evaluation, scaling of the agents, monitoring and security.

And, as I said, this is just one part of the Azure AI Foundry, which is a holistic AI solution that we announced here at Ignite. But what you heard the folks from Toyota say is that these agents need to be grounded in knowledge and, in many cases, they need to keep memory, they need to kind of remember what it is that you've interacted with them about in the past. And one of the best ways to do that is with Cosmos DB, which is indeed what Toyota is using.

And, so, now to talk a little bit about how this works in Cosmos DB, I'd like to welcome to the stage Mark Brown. Mark Brown: All right. [APPLAUSE] Hey, that's a great intro.

Thank you, Marco. So, you heard a little bit about how Toyota is using Cosmos DB today to build this multi-agent AI system. Let me talk about some of the features they're using here today.

And I also want to share some of the features they're going to be implementing here in the future. For Cosmos DB, we make the world's most scalable, performant, cost-effective and flexible database for building AI applications. There's two flavors for Cosmos DB for building generative AI applications today.

We have our Cosmos DB for MongoDB, which provides high compatibility for MongoDB Atlas in a fully managed first-party database with providing native Vector Search capabilities as well. And then, of course, there's our Azure Cosmos DB for NoSQL. So, this is for customers that need that infinite scalability or high availability, extreme low latency for their types of applications, which is perfect when you're building these kind of high-volume multi-agent systems here.

Instant autoscale, automatic data partitioning, all these features can help allow you to focus on building your multi-agent systems or your generative AI applications rather than focusing on your database. And, of course, it's built using Microsoft's Disk ANN, which we announced GA here today this week. Disk ANN is Microsoft's vector indexing and search algorithm developed by Microsoft Research.

It combines a lightweight in-memory vector store with full fidelity vector graphs stored on disk. Disk ANN constantly maintains the index built within the database there so it's able to withstand very high data mutation rates while maintaining very high accuracy for your applications. Disk ANN's design is uniquely suited for Cosmos DB with Disk -- sorry, the graphs stored on disk on very fast SSD storage on cluster for the compute.

And combined with Cosmos DB's unlimited scale and best-in-class latency, customers can get the absolute best performance when building their generative AI applications using Cosmos DB as their vector database today. So, unlimited scalability is great when you're going into production, but Cosmos is also really, really, cost efficient. Customers can use our serverless and get upwards of a million point reads for 25 U.

S. cents. Now, that translates into hundreds of thousands of vector queries as well for their applications.

And, since it's a pay-as-you-go model, it's really great for starting your new generative AI applications. Also, it's fully managed. Developers can focus on building the logic for their applications rather than having to manage and provision their databases in there.

And, as a NoSQL database, it's also uniquely suited for applications that are going to go through rapid evolution and development as they go from development into testing and into production. So, with all of these great new capabilities for Cosmos DB and its vector capabilities for building generative AI applications, it really unlocks a whole slew of different scenarios for building these types of applications. When you combine the vector data from the database with the operational data, it drastically simplifies the design of your applications.

You don't have to ETL it into a single-purpose vector database for your applications. This provides greater consistency for your applications when you have high transaction volumes for your data. Anything committed in there is immediately available for Vector Search within the database.

This makes building RAG pattern applications much, much simpler, way less complex and more cost-efficient and also enables other features as well, building things like a semantic cache, which I'll show you a little bit later. It's also ideally suited for just capturing chat. Customers who were using Cosmos DB to build chat applications way before generative AI or any of these RAG pattern applications, it's even more suited for it now.

And then, finally, the performance for Cosmos DB makes it ideally suited. And you saw here with Toyota for handling all of the logging that's going on with the multi-agents within their system there because it can handle the massive transaction volumes that are going to go through these autonomous agents within their system. Just to put a point on how scalable Cosmos DB is, OpenAI's ChatGPT runs that entire service using Cosmos DB to store all of their data.

So, every prompt and every completion that you have created using ChatGPT is stored within Cosmos DB. Using a single Cosmos DB database that they provisioned when they started the service allowed it to grow to over 180 million daily active users. And it's fully managed.

They've never touched it since they provisioned this thing. They love it so much now they run over 40 different workloads using Cosmos DB as the backing store for all these different services. Now, I want to highlight even further the power that Cosmos DB brings when you're building these types of applications.

So, I talked a little bit about a semantic cache. And, here, you have in, say, simple ChatGPT scenarios, generating responses generally happens pretty fast and doesn't take a lot of tokens in there. However, when you're building RAG pattern applications, you're now grounding that model with a bunch of data that's going to come out of a database.

And this can consume a lot of tokens. And, as you may have noticed, generating the responses can take quite a bit of more time. When you are building something like a semantic cache using Cosmos DB, you can make these very, very, much faster.

And, in fact, it can save a lot of money on tokens because you don't have to go and generate a response. Instead of sending all of the context window and the RAG pattern data to the LLM to go generate a response, you can just send the context window into a query in your cache collection and then get all of that back right away. It, in fact, just short circuits the whole pipeline in generating responses.

And the results of this are absolutely staggering. In fact, let me show you a little demo here on just how much performance improvement you can get out of here. So, I've got a very simple little chat application here.

And this is for a retail bike store. And, as you can guess, for customer-facing applications, customers tend to ask kind of the same questions over and over and over again. Right?

So, you can imagine, if you're a retail bike store, one of the questions you may get is, "What bikes do you have? " Did I type that right? Now, this is going to take a few seconds to generate here because it's going to go and do the RAG pattern query and then it's going to send it to the LLM.

And, here, you can see -- let's see, let me move this up here. I want to try and see if I can zoom in here. You can see this took almost 1,300 tokens just to process the payload that got sent into it and then, of course, another 300 to generate the response, but it took over six seconds for that whole thing to go round trip in there.

Now, let me show you what happens when you cache that. So, I'm going to go and I'm going to select another user here in our database. And then I'm going to ask something that's semantically similar, but not the same thing.

Right? This is a semantic cache. "Do you have any bikes?

" And there you can see that came back immediately and, even more importantly, it took zero tokens to generate or process that payload because it never saw it and zero tokens to generate the response. More importantly, that came back in 145 milliseconds. Right?

It was almost instantaneous. So, this is the kind of power you can get when you're implementing something like a semantic cache for applications for these customer-facing applications where you have customers that are asking very, very often the same question over and over again. So, this power to create a semantic cache is now possible in your production applications.

And we're announcing the general availability for both Vector Search and Disk ANN for Azure Cosmos DB for NoSQL. With this GA release for these features, customers can now get better performance both for the ingestion of the data and indexing of data, as well as for the searches and the queries within there, increased compatibility for enterprise features like customer managed keys, backup, encryption. Also, with Fabric mirroring, another key innovation where you can take and connect and automatically ETL your data out of your GenAI applications, let's say your chat app, and then do analytics over all of that.

It's a great way and easy way to help build something like, I don't know, analytics over what your customers are asking you. Customers can also take and now modify the vector policy and then also the container indexing policy within there and then tune that for better performance in there to help increase or improve the latency of their applications. We're also thrilled to announce the public preview for two new features.

Customers can now perform full-text search giving them advanced text capabilities and also enhanced search relevance with BM25. We're also announcing the preview for Hybrid Search. So, this is the ability to be able to do both a vector search combined with Full-Text Search and then rank those for even better relevancy for your applications there.

So, to get more details, I want to direct you to, there's a session later this afternoon, BRK193. And if you want to go see both of these features and even Disk ANN, you can watch it and just see how fast this thing can rip through like a billion vectors using Disk ANN in there. And then we'll also show detailed demos on using the Full-Text Search as well as the Hybrid Search within there as well.

Lastly, I want to point out customers that are looking to explore these new full-text advanced features, we've built a solution accelerator to do document ingestion and processing in here. So, this will drastically simplify getting data from, say, Blob Storage into Cosmos DB and it handles all of the steps you would expect. So, it will handle all the ingestion, all the chunking, vectorization and then load that data into your Cosmos DB containers.

Yeah, go ahead and take a picture of this. The source code for the solution accelerator is up on GitHub here so you can take a look at that aka. ms link there today.

We would also love to get your feedback on this and tell us if you like it, any features, other things that are in there as well. So, that is it for our session. Please take a picture of this and then open the little browser and then give us a 5 I think is what we want.

I'm just kidding. Please do fill out a survey. I'm going to leave this slide up.

Please also take a picture of this. We've got blog posts, announcements, more information and then also we've got sessions you should absolutely go and hit. Last thing I want to plug here is, for any Azure Cosmos DB customers that are here with us today, we would invite you to go and give us a product review with PeerSpot.

I believe there's some PeerSpot people outside the room here as well as back in the expo hall at our booth. And you can get a $50 Amazon gift card as well. So, come and visit us outside here or in the expert booth today.

Also, some extra special swag I want to point out. We have the coolest swag, by the way. The coolest database, the coolest swag.

So, that's it. I'm going to invite up our other speakers here for this session. There's a microphone in the middle of the room and we've got four minutes and 23 seconds for your questions.

So, thank you very much.

![[Webinar] How to Build a Modern Agentic System](https://img.youtube.com/vi/pGdZ2SnrKFU/maxresdefault.jpg)