[Music] best-selling author and serial entrepreneur it's Gary Marcus [Music] pardon me so I'm here to talk today about the urgency and challenges in regulating AI I want to start with some good news which is I think global AI policy is finally on the radar pretty much everybody here is talking about it and pretty much everybody in the world is and that's a huge change from not that long ago I gave a TED Talk on April 18th of this year where I talked about global AI governance and I think people looked at me like I

was I don't know kind of strange talking about it then I started by saying that there are lots of risks to society I talked about wholesale disinformation and market manipulation and accidental misinformation and maybe voice faking scams and automated cybercrime I talked about all this stuff in my TED Talk and there was a hush in the room like people hadn't really thought about that now everywhere I go I think people have already thought about these risks I think there's been an astonishing change just since April 18th Anka Reuel who's sitting um in the front

wrote an Economist piece with me that came out the same day we said the world needs an international agency for AI say two experts and when The Economist ran this again April 18th I think people thought that was kind of a weird idea now everybody's talking about whether it's the right formulation which formulation things have really changed since April by the time I got to the Senate on May 16th I stood next to Sam Altman or sat next to Sam Altman and just before we went on stage he said you know what Gary I

think the idea that you're pushing of an international Agency for AI is actually a good one and I was surprised because Sam Altman and I have not always had the best relationship and I said well don't just tell me tell them tell the Senators and he did and that was astonishing and I think that really changed the world when he said that so that was just May 16th by May 25th I spoke at the UN and I was pushing some of the same ideas and they were already starting I think to be a little bit

popular um Rishi Sunak talked about them around the same time now all that's great that people are talking about international AI governance there are lots of questions around what that might mean but there is I think some bad news too with some gaps that we need to think about the first one we'll call the governance gap which is we all know we need AI governance but we don't know exactly what it is that we need there's actually very little consensus when we get down to the details and I've been going around talking to a

lot of world governments in the last month or two and everybody's thinking about this in a different way and everybody is aware excuse me that governments move very slowly we all know that um and we know that AI itself especially in its adoption is moving very quickly I would argue that the technology is not advancing as fast as some people think that GPT-4 is not that different from GPT-3 is not that different from GPT-2 which we've had for several years but certainly the fact that 100 million people are now using this is really radically

new so we have this situation where governments move slowly the technology is moving quickly even if not at the scientific level at the adoption level so that's the first gap the second gap I'll call the alignment gap which is we know how to make AI that people want but we don't know how to make AI that people can trust and I'll just give you one simple example of this that kind of still blows my mind you read about GPT-4 and people think that GPT-4 is this amazing technology that is on the verge of artificial general

intelligence the reality is it's not um it's fantastic at writing boilerplate text it's really good at helping coders I might have some disagreements about the medical study we just saw the reality is it's not as good at just learning everyday basic things as you think it is so take the rules of chess or take the game of chess GPT-4 has probably been exposed to millions of games of chess because they're all there for the taking on the internet and we know GPT-4 has taken most of what's on the internet and the rules of chess are

actually explicit so Wikipedia is free and available and surely in the training set so you would think if this system were like an artificial general intelligence it would learn to play chess and if you played it for 10 moves you'd be like wow this thing does play chess it knows all the moves in the Ruy Lopez opening or whatever your favorite opening is Sicilian Defense and then you get to like move 15 or 16 and it would start doing really weird things like having bishops jump over queens which you can't do in actual chess but

which GPT-4 has been known to do so we don't actually know how to get the systems even to (a larger glass of water thank you) we don't know how to force the systems to follow the rules that we want to follow and if we can't get them to play chess how can we be sure that they will follow other rules like be honest or be harmless don't cause harm to humans so that's the alignment gap it's important to remember that large language models are not conventional software we're mostly thinking about them the way

we think about conventional software but the reality is they hallucinate they suffer from data leaks they're basically incorrigible you can't say don't make stuff up you know if somebody had a classical database as a competitor to SQL or something like that and it hallucinated 20 percent of the time they'd be laughed off the market here we have a system that makes errors 20 percent of the time people think that's kind of acceptable because of some other virtues but from a software design perspective it's crazy then we have a values gap which is we know what we want

the UNESCO guidelines for example are terrific at articulating what we want we want transparency we want privacy we want accountability fairness the White House Blueprint for an AI Bill of Rights similarly calls for this even the big tech companies give lip service to these things like transparency but we have to remember and I have a quote from Satya Nadella saying we're taking a comprehensive approach to ensure we always build deploy and use AI in a safe secure and transparent way but we're not so GPT-4 is something that Microsoft owns part of and

uses right and it's not transparent at all we don't know what's in GPT-4 we know there's a large language model there we know there's this thing called RLHF but we don't really know how it works and we suspect there are other things in there maybe even classical AI rules but we don't know we don't know most importantly what's in the data and we know that these systems are incredibly sensitive to their data and that what biases political biases hiring biases all kinds of stuff they will do is a function of what data is in

there so Microsoft tells you they believe in transparency but they're not actually following transparency so we don't yet have a way to hold the companies accountable to these principles that we all agree on I see two futures here one is a positive future you know maybe we form a global AI agency we start thoughtfully regulating AI maybe the idea of responsible AI becomes a prestigious profession rather than something a small number of people talk about maybe new companies and new technologies emerge we can't just use large language models because we can't trust them we actually need

new kinds of AI which is something nobody's really talking about right now everybody's assuming that the streetlight we've got right now is the one that we need I don't think that's true maybe we get to more efficient AI both in terms of data and the amount of energy we use and maybe AI eventually is able to massively contribute to the world addressing climate change medicine elder care and many more but there's also a bleaker future so we could come into a world where conflicts over which risks we should even address in the field of

AI preclude anything from happening so we have right now for example AI safety community and ethics community arguing with each other my problem is more important than your problem stop talking about your problem mine is the only one that matters my short-term one is what matters my long-term one is what matters I'm afraid Congress in the U.S. for example is going to give up in disgust like if you guys can't agree on what the problem is why should we bother we'll go back to some other problem we might get stuck on large language models

and never invent more efficient technology more reliable technology we may have a small number of companies that are more powerful than states running the world as they please shutting out all competition with ill-conceived regulation of their own devising right that's what we call regulatory capture cybercriminals and big companies might wind up in some epic battle like drug cartel worlds I mean wars who knows increasingly powerful AI systems might be constructed but if this is a dress rehearsal right now you know maybe they're going to become more and more weaponized large numbers of people are

going to get killed in deadly conflicts it could be accidental or on purpose employment could crash there could be widespread unrest there could be civil wars there could be anarchy these are really very different futures I'm not saying which one's coming um but I'm saying we need to figure out what we're doing so here are five suggestions about AI policy uh first is I think every country needs to have its own agency there's so much going on so quickly and so much expertise required that I don't think we can assume that every country is going to

just get by on its existing agencies I think we need a special-purpose AI agency in every country we probably should also have an international agency for AI maybe it's voluntary right now eventually we might need actual enforcement and requirements we're going to need to look at a lot of different models there's a lot of discussion nowadays about the International Atomic Energy Agency versus the IPCC and the ICAO model and so forth I think the main thing to understand there is no one model is going to suffice because there's actually many different things we're trying to address

ranging from what happens if AI gets super powerful which it isn't right now but could to what do we do about for example misinformation and elections each of these are going to require different aspects of solutions so probably nothing off the shelf is going to work the most important thing though I think is agility is key we need systems the government systems that can move quickly I think we need something like an FDA pre-approval process for widespread deployment it's okay to do research on new forms of AI but if you're going to release something to

100 million people probably you should do a hazard analysis and say what are the benefits what are the risks do the benefits actually outweigh the risks as we put this out for society we're also going to need post-deployment auditing with government backing where companies excuse me put things out the government says hmm here's a question are people using GPT-4 to decide whether or not other people should get jobs and it turns out the answer I hear is yes and there's almost certainly bias in that the government should be able to say we want to know

how much this is happening how much bias is there so we need post-deployment auditing most importantly we need scientists involved I've been seeing a lot of press like photo opportunities where governments bring in the leaders of the big companies the big companies have been going on these tours and the governments host them that's actually sending the wrong signal the signal that it's sending is regulatory capture that the companies the big companies are going to tell us what the rules are well obviously they're going to make them in their own interest if they do that so we

need scientists and ethicists and so forth people from civil society at the table another thing that I have been thinking about is how philanthropy can help so uh with Anka who is sitting here in the front row we've been proposing a model something like this um a CERN-like international agency we could talk about what we mean by CERN-like um but catalyzed by philanthropy focused on mitigating AI risks and we see this as having three tracks one of those tracks is about basic research and applied research basic research how do we build new

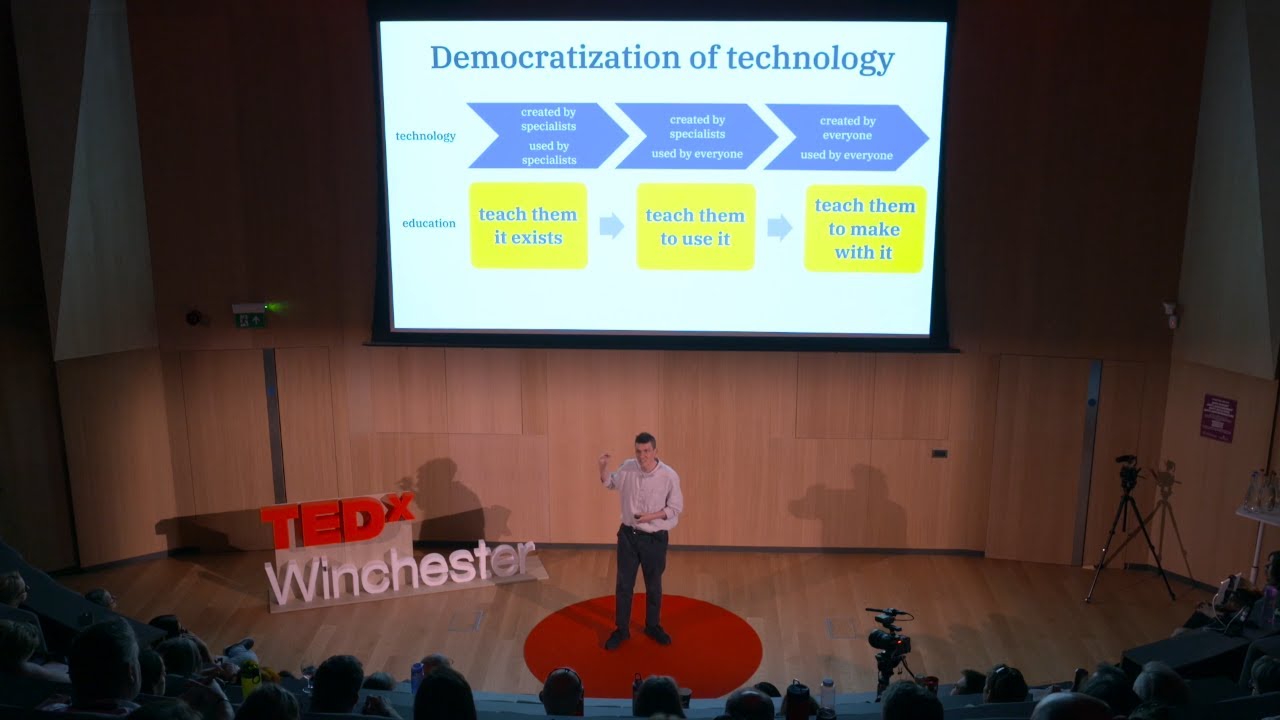

approaches to AI that are trustworthy and applied research given the risks that we have now what do we do about them so for example misinformation is a risk right now can we build new tools to detect misinformation the second thing is about world-class expertise having advice scientists willing to step in for the governments around the world who don't have their own expertise the third is something we're calling regulation in a box or governance in a box and the idea is to make it as easy as possible for the governments around the world to do what

they need to do give them metrics standards tools that are easy to use and give them away free or close to free so I am pleased to announce today that we are launching the Center for the Advancement of Trustworthy AI we've gotten our first funding from the Omidyar Network um to help us get this started today is the first time I'm saying this publicly to the world right now um and it's built on the model thank you very much I should add that um some members of the UN UNESCO have been very warm to this

idea we may have further announcements about that at some point um so I'm pleased to announce this and I will end a little bit early possibly there's time for questions I don't know um but here's how I will end the choices that we make now are going to shape the next century if we don't have scientists and ethicists at the table I don't think our prospects are great if we just leave this to the big companies telling the governments what to do we can't afford not to regulate AI and we can't afford regulatory capture where

the companies decide we have to get this one right and we don't have a lot of time to waste and I thank you very much [Applause] [Music] thank you