many developers experienced or otherwise write poorly performant code but it's not always clear where the developer can depend on the language or the compiler to provide the necessary optimizations and where they themselves should be incorporating optimization techniques manually most languages for example will recognize constant expressions in code and evaluate them at compile time rather than at runtime which reduces the number of instructions needed to execute the code thereby optimizing [Music] [Music] performance this is known as constant folding and you the developer usually don't need to worry about manually handling this optimization but let's consider another

example say we have a for loop with a loop invariant expression inside of it that is an expression or subex expression that will produce the same value if replaced with another expression or sub expression so if we replace 3 * 4 here with 2 * 6 the overall expression will still produce the same result likewise if we replace 2 * 6 with 12 here the overall expression will still produce the same result so why should we evaluate an expression like this for every iteration of the loop well the answer is we shouldn't what we should

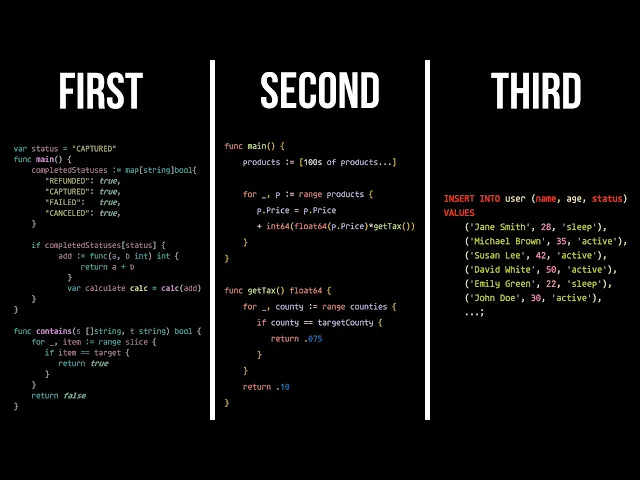

do is Hoist or move the invariant out of the loop so that it only needs to be evaluated once and since it's a constant expression we'd likely get the added benefit of the compiler handling the previously discussed constant folding optimization so as you can see understanding how your languages compiler will and will not optimize the code you write is critical to writing performant code moving on to the second law let's start with a simple code snippet where we need to do some Logic for orders with a completed status and just use your imagination here maybe

you're working on some e-commerce backend currently to check if the current status is one of the completed statuses we are linearly doing the look up on an array or list of completed statuses but as you can see here we likely won't have any duplicate completed statuses because that wouldn't make sense from a business perspective and the Order of the completed statuses array doesn't have any impact on what we're trying to do so why even use an array here why don't we just optimize this by using a set instead of an array then we'd be able

to do away with this linear search and just access statuses in constant time right but here's where the second law of writing performant code comes in as a go developer I understand that the go programming language's standard Library doesn't include sets but if you're familiar with go you know that you can use a map of string bull to achieve the same end goal and this is a very simple example of why the second law of writing performance code is to understand intricately the data structures available to you for your chosen language let's imagine we have

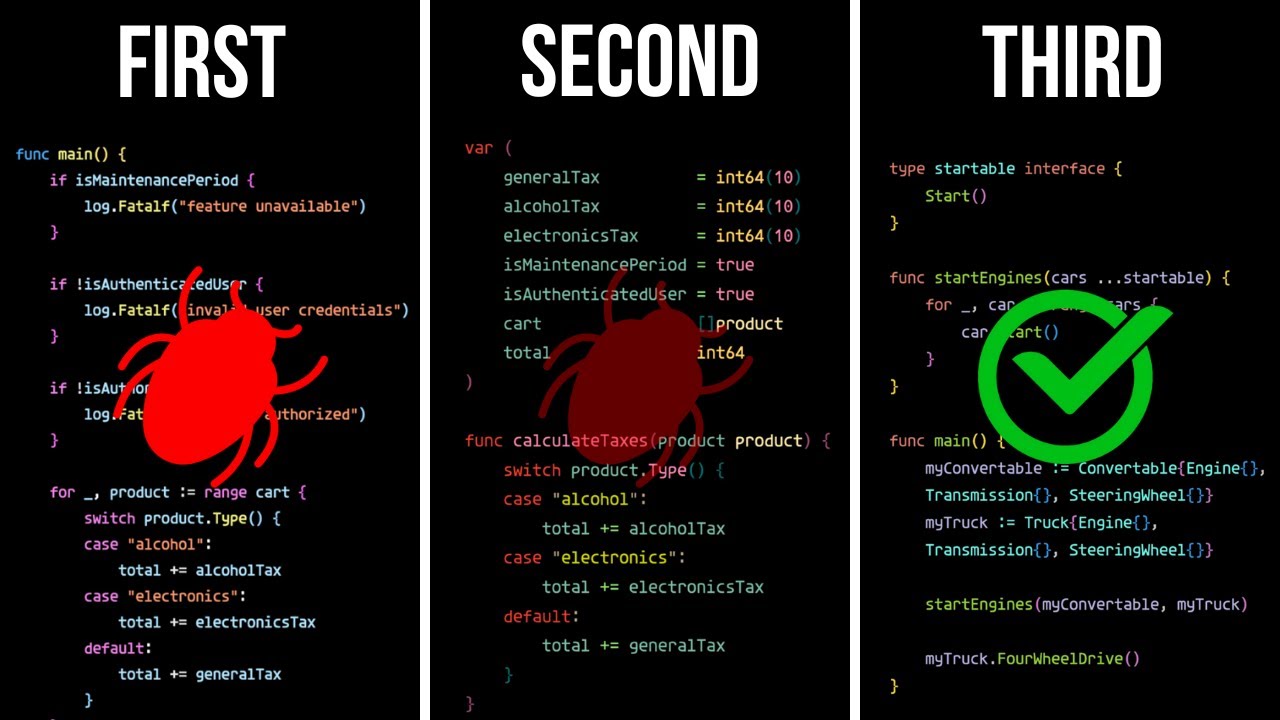

a table schema for user data with a field for an ID which is an auto incremented primary key fields for name and age and a field for status with a default value of active assuming we need to insert hundreds of users in the aforementioned user table can you tell which of these code Snippets is more performant and why for the first one we iterate through each user and do an insert for each iteration for the second one we're just passing in the entire list of users to the bulk insert function but to understand which is

more performant we need to understand what's happening on the database side of things so this is what an insert statement to insert a single user looks like in MySQL and this is this is what it looks like to iterate through each user doing an individual insert for each the important thing to keep in mind here is that each insert requires a network round trip which includes the sending of the data from our application to the DB and the returning of the acknowledgement from the [Music] DB a bulk insert on the other hand might look something

like this you'll notice that there's only one insert statement here that includes includes all of the users and you guessed it that means that it's the difference between multiple network round trips to the DB and a single round trip and at this point which is more performant should be obvious but without this underlying knowledge knowing the most performant way to integrate your code with these database apis is going to be a shot in thee dark which is why the third and final law of writing performant code is to understand database and just as a bonus

do you think you know which of these insert statements might be more optimal and how would you adjust your code to account for this when all is said and done it's easy to focus purely on application development and ignore or only vaguely touch on the database stuff but the reality is people who know how to write performant code understand database anyways that's going to be it for this video I'll see you in the next one