I am doing this thing for the very first time on my YouTube channel and that is uploading an end to end data engineering project and I have decided that I will be bringing such type of videos more and more on my YouTube channel and for that one I definitely need your support so that I also motivated enough to bring those type of videos and the project which I am going to cover in this video is very very special to me because I Have inherited that problem statement from the very first project which I did in

2017 when I started my career in the IT industry and that was related to Aviation data domain and problem statements was like Airline data injection with some business transformation so that we can just cater a 360 view of a customer journey to our client so I have used that problem statement but the tech stack is going to be AWS so this will be a whole Cloud Agnostic data pipeline using amazing AWS services like S3 event Bridge the step functions glue red shift so the whole life cycle of incremental data inje with the transformation you will

be seeing here so make sure to watch this video till the very end implement it end to end add it in your resume and increase your chances to get more number of interview opportunities and at the end of the tutorial if you find this video informative make sure to give a like in The big numbers so that we can reach more number of people and also share your thoughts opinions and design related question questions in the comment section to kickart your career in the data engineering or to land in a high paying job for the

data engineer role these type of projects are going to play a very very important role and this is very crucial for all the aspiring data Engineers first thing obviously acquire the demanding Tech stack the Demanding Tech skills not just that old school thing like Hado python SQL and Spark no data engineering has evolve a lot plus then you have to cover these kind of projects and I'm very glad that I have covered all these things end to end in my data engineering boot camps I have a separate data engineering boot cam with open- Source data

engine Tech stack plus AWS similarly open source data engine Tech stack plus Azure so whole extensive data engineering boot Camp where I have covered the industry oriented Tech skills most demanding Tech skills a very high quality type of content covering things like snowflake data braks big query airflow cafka mongodb AWS n2n then Hands-On projects more than 1520 overall project exposure you will be getting in these type of data engineering boot camp so I have used all my personal experience of more than 7 years while curating these high quality data engineering boot camp at a Very

very affordable price and you can see that extensive curriculum at your screen which you are not going to get anywhere I can bet on that even if you're paying 100K 250k for any such type of course is still you are not going to get this kind of worth which you are going to get from this data engineering boot camp I have seen it I have seen how we have transformed the career of many aspiring data Engineers who became part of our data enging boot Camp you can see those success stories on your screen so we

have a full trust what we are delivering not any such type of fake promise and we help all our students at every step like with the interview preparation material quizzes tons of assignments their solution plus the dedicated doubt support in the Discord Community networking opportunities plus the dedicated placement assistance as well for the resume building LinkedIn profile then Multiple job opportunities every single day so this is how we are upscaling aspiring data engineers at grow data skills so I would highly recommend all of you to be a part of this data engineering boot camp link

has been given in the description or you can visit ww.go skills.com to be a part of it is still if you have any doubt any question then the support number is given on your screen you can call us and drop a WhatsApp message anytime to Resolve any sort of query and apart from data engineering if you want to upscale yourself in other data domains like low code ETL data analytics data science machine learning gen AI then on your screen you can see we have covered everything for you again high quality project driven things 20% Theory

and 80% handson so what are you waiting for visit and be a part of it right now Airline data inje so this project basically I created From uh the reference of uh the very first project which I worked in my career uh itself like when I started my career in 2017 so I worked on a project like data project which was basically for the United Airlines so I inherited the idea of building this project with the same uh concept where uh we were getting some uh Dimension uh tables we we were getting some static data

like uh related to airports and then we were getting Some daily data of the flights itself and there we were doing lots of uh complex business transformation but here it's a simplified one uh right when you have a dimension table and you have a fact uh table how you are basically processing uh that one in your uh maybe how you are writing the data pipeline to process it and to dump that one in the uh data warehouse okay so Air Line data injection so here uh the idea or the text tag would be like we

will have one Dimension table d Dimension uh table of uh you can say airport codes right so you would have seen uh mostly like when it comes to the airports right so each airport has a dedicated airport code and based on that you can Fitch all the details of it Dimension table of airport codes uh in S3 on on daily basis uh on daily basis flight data will arrive in hi style partition okay so this is how it is going to be uh so what all things we are going to use in this one so

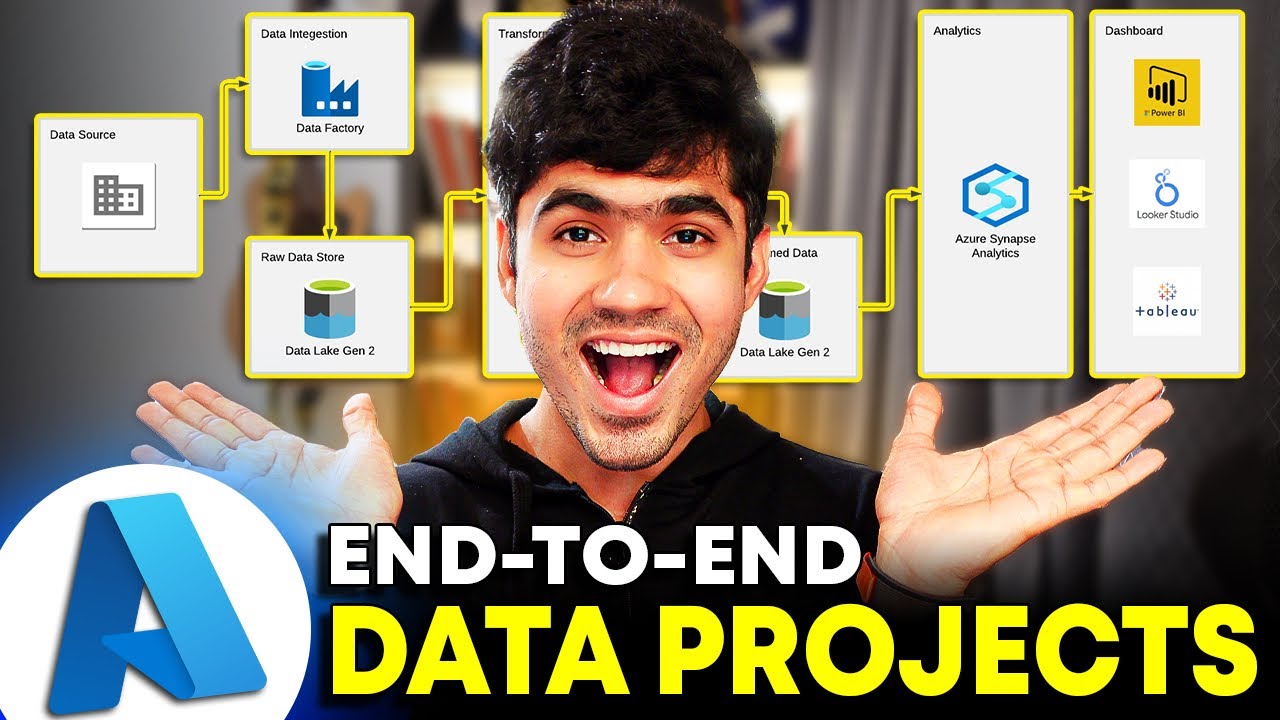

obviously S3 has our data L okay and second thing is uh going to be uh S3 as our data L uh event Bridge uh rule right so that's what we will use as soon As file is landing in the uh S3 folder we will use the event uh Bridge rule basically and after that uh what we will have we will have a step function where we would have orchestrated all these things and we will have the uh glue crawler okay and then we will be having the uh glue job itself okay and here after that

we are also going to have the red shift as our data warehouse and also we will be using the Uh SNS for notification right so this is how it is going to uh work okay uh so if I have to just give you the idea so uh imagine here you have your red shift so this is your red shift okay so here uh we will be creating one table named as airport dim this will represent the dimension table so that will be a pre-loaded table uh right we will already load it uh in the Red

shift and we will be having one more table named as let's say flight uh Dimension or Airline Dimension something sorry Air flight fact or the airline fact so this will be a table which will have the data and some measurable attributes that's why we will be calling it as a fact table as well and here we are going to have the S3 bucket right so here we are going to have the S3 bucket so here the file named as airport Dim so airport DM CSV is going to be here and when we are saying airport

dim kind of Dimension table we will be creating in red shift so that is actually going to be uh you can say we will load this one so load in dim table so we will load so in our S3 we will be having this file already and we will be creating our table in the red shift and we will load this data from here to this Lo uh this Dimension table right and here we will Be getting our daily files right so we will be getting our daily files as soon as we are getting it

what we will do so there will be a event Bridge rule which will capture this uh object creation event right and this event Bridge rule what it will do it will basically trigger a step function so this step function will basically first trigger the glue crawler right so it will trigger the Glue crawler and it will wait uh here right there will be a waiting Loop itself wait wait for Success because why we are covering it in this way you can imagine as soon as your St function triggers your glue crawler right your glue crawler

might even take 2 3 minutes to get completely executed so until unless your crawler is successfully completed you will not be triggering your glue job because the um Metadata of that particular partition would not have been captured by the glue till that time and since you will be also enabling the job book marking because incremental data load you want to do right so even if you uh don't wait for it to complete and just after that one you are triggering your glue job it will load the MPT data so it's better to first uh just

run your uh crawler and wait for uh the success right and then what you are going to do you are going To trigger your uh glue job basically right so success then glue job so glue job will basically do the uh joining operation right so we will be having the daily files okay so in the daily files we will be having the airport codes the flight uh carrier the departure delay arrival DeLay So based on the uh departure airport code and Arrival airport code we will join our daily files data with the airport Dimension so

that the fact table which we are pre preparing there we will have the uh you can say there we will be having the denormalized table it will have the full detail of the airport including the airport name City uh right and the state of it so that the analysis can be done and uh we are not joining our raw data again and again with the dimension table for any sort of queries So once the crawler is successful you're triggering the glue job and here uh if the glue job is uh successful uh right so how

I can probably create it so if it is let's say failed then you are sending the failed notification and if it is successful that means what is happening your data will be there in the uh your data will be in the flight Factor Table right so this is how your architecture is going to look like and this is how the entire execution will happen so I have already created these things uh read means the dimension table all these things are already there so uh we will just I will just walk you through with all the

important steps sequentially what we need to follow what we need to create right and then we will uh execute this entire pipeline as we discussed so Let's get started let me open the different tabs and I will show you where we have the data how it's look like all those things we'll discuss and uh let me open this thing let me quickly start my red shift cluster uh what all we need we need event Bridge Rule and and we need the glue right okay so first let me quickly uh start right it will take one

or two Minutes and then we will uh also open the glue and we will also open the event Bridge uh Rule and we will also open the step functions all right so let's first start with our uh data itself that how uh it is going to land and how it's look like so what I did I have uh created this bucket named as air line uh data injection Landing zone right so if you click on this one here we have the two folders one named as dim and another Named as the daily flights right so

in the dim folder what we have we have this uh file named as airports. CSV so how this data looks like let me show you uh airport. CSV right so here you can see this data is basically having four uh columns the airport ID city state and name right so these are the airport codes basically and the uh the city name and the state and then the name of that airport right so this is how this data is so you can see Approximately 350 records and why we are calling it dimensional we are calling it

as a dim table or dimensional data this is going to be quite static right you can even even imagine not every day uh in the world there is one airport which is getting added right this is something which might come under the slowly changing Dimension if we are accommodating more and more airports in our data but you can say that this is unlikely to change very frequently right So that's why uh we will always have it in the pre-loaded form in our data warehouse so that we can use it with the daily raw data and

join it uh in our application okay so that's what we will do so this is how our airports. CSV data looks like and we have already created the bucket and we have placed that file in the dimension folder right and then we will be having another folder named as daily flights right and you can see in this daily flights folder data right And that data is nothing basically this file named as flights. CSV so if I open it so it is having so many records approximately more than even 10,000 20,000 records as far as I

remember even more than that okay yeah you can see approximately uh 1 million right approximately 1 million records so even if you uh want to do what you can do you can simply break it down right you can simply break it down in multiple uh parts let's say you can Create four five parts of it okay and uh then you can replicate this entire uh daily data trigger but even if I create the multiple hi style of partition and if I load the same data uh same flights. CSP still that will work okay so here

in this one you can uh see right we have uh this particular uh partition right and inside that one we have this flights Dot CSV so as I said the idea would be as soon as we are placing this flights. CSV file right because this uh this is also The object inside the S3 so we will be creating the event Bridge trigger based on the object creation this is also a object right so the way we will be writing our event Bridge rule that it will only listen for the do CSV suffix once that kind

of uh suffix is being captured in the pattern only in that case our event Bridge rule will get triggered right so this is how it will look like and let me show you the data itself how This data looks like so you can see carrier means the flight carrier so DL means Delta aines or origin airport uh ID and the destination airport ID then the departure delay uh and departure delay in minutes arrival delay in minutes right so this is how uh the flights data will look like okay so this was all about the data

and what I am going to do I will also clean this up so that we can uh see the process from the scratch when we will run our pipeline so Let me clean this up okay so now you will see that this folder is empty okay so this is the first thing and uh what we will uh also do here uh I will show you that we will create the uh two crawlers right not two crawlers basically we would need three crawlers right why let me show you first the red shift part of it right

what we have created in the red shift so let me open it Right so if you look at the architecture I told you that there will be a dimension table named as airport dim there will be a flight fact uh table and uh we will be having our daily files in the S3 location so in total if you look at this architecture we would need three crawlers so that we can prepare the metadata for these three things and accordingly we will use it in our glue job okay so one crawler we will create which will

crawl the airport Dimension Table and will prepare the metadata in the glue catalog another will be flight fact right that will be one more uh we will be creating right one more uh we uh we will create and based on that one uh we will be having one more uh entry as a metadata in the glue catalog for this table and one more crawler we will create for this daily data which is arriving in the S3 so that is how we are Going to have the three crawlers and three metadata tables for the same right

so let me just minimize it let me remove all these things right so this is our red shift so in total we would need these things right these uh are the important steps first of all we need to follow in our red ship okay and I will be providing all these create table commands the copy command so don't worry about it so what we should have first we should have the Uh schema named as Airlines and inside that schema we are going to create one table named as airports dim it will have this kind of

uh AR it will have this kind of definition and then as I said we already have our Dimension table data in the S3 location so we will be using the copy Command right so copy into basically this table from this S3 location right and also IM Ro needs to be passed and we have seen uh this thing when we were working on the red shift Dedicated that copy this is actually the copy command so it would need that IM Ro which is associated with your red shift right so this was the role which is associated

associated with my red shift so I have included that IM Ro the complete Arn of that IM Ro then file is in the CSP form comma delimiter I will be uh ignoring the first line because it contains the header and the uh red shift is in is actually residing in Us East one reason right so first I created this One and uh I have already populated the data so let me do one thing quickly I will truncate uh these tables so that it is empty and we are doing everything from the scratch so I will

just truncate it will not drop it uh because I have already created it so let me just truncate the dimension table so that is done and let me also truncate the uh daily flights fact table right so let's just truncate it okay so the definition is already there Now what I am going to do I will quickly run this one uh right copy command so that data is loaded in our Dimension table right so you can see the data is loaded and we can even quickly check that one select a star from this one limit

five okay so let's run it and you can see our Dimension data is present there and uh this table as we have already created but at this moment there will be nothing inside this table so select a star from This so this is also completely empty but you will be able to see the architecture of it that the daily data which we are getting it contains the airport codes but this is the D normalized table or the fact table we have created which contains the uh complete information right so that let's say we are only

loading the data as it is we are getting in the S3 right so let's say the business analyst or data analyst need to write their queries Based on the airport name city states all these kind of analysis uh they want to do so in that case in every query they are writing they need to join the daily with the dimension table right so in that way that may slow down the performance as well so it's always better because whenever we talk about the data warehousing and the heavy data data analytics so denormalizing the table is

one of the most crucial uh part of the data modeling and designing Itself so that you can just eliminate all that uh heavy join kind of Burden uh on your data warehouses right so you would like to eliminate all those challenges so here we have our tables ready now so our uh data L part is sorted our tables are ready now the next part which I wanted to talk about the uh glue right the Crawlers which we will be creating but as I said before creating the Crawlers right here as per our diagram we need

to create two Crawlers On top of the red shift and on top of the red shift so what we need to do even if we would like to crawl the red shift tables we need to use the jdbc connection for that one only in that case we will be able to do that right so the way we created the RDS the way we created the connection for the RDS in the same way you need to create the connection for your uh in the same way you need to create the connection for your thread shift as

well right so when We were creating uh when we were creating the connection jdbc connection with the red shift right or the um red shift I I remember so there you um if you remember right we created the S3 end point right so all those things will always be the same right you need to enable all those things right if you want to make a connection to your red shift right right you need to know the username and password which you uh set Up while creating your red shift cluster and apart from that whatever VPC

you are using for your uh red shift right so if I go to our red shift here just a moment right if I go to Red shift here and uh probably properties right so this is the VPC attached to it this is the security group so all these things you already know now that what you need to do inside this security group that in the inbound rules right you need to make sure that the port is open the red shift Port is actually uh open which we did earlier right so Port is 5439 make sure

uh this is exposed in the inbound rule of your security group and after that if you open this attack Ed VPC you need to make sure that the S3 end point is created and we would need two more end points I will talk about it once we will create our glue job right so make sure you come to the end points and this S3 end point which we also created earlier if you remember right for that same VPC Inside which our red shift is created this is also created these two things are mandatory only in

that case you'll be able to set up the uh connection right in your glue uh with the red shift okay so uh come to the connections here so I have already created it named as red shift new connection so you can follow the same approach again like you can click on the create connection and then what you want to do you want to create for the red shift so click on Next uh and it will ask that which cluster and the database name you can provide and then you can see right username and password so

the username and password which you used while creating your uh red shift cluster same thing you need to use here right and then you can uh give a name to it something like this okay and then you can simply click on create connection and if you have Followed all those things which I talked about uh the S3 endpoint and the port is open in the security group then as soon as you create your connection you will be able to uh see this thing here and as soon as you select your connection you can come here

and you can click on the test connection to verify whether your connection is established successfully or not right so as soon as I do that and the glue roll right so earlier we created that uh Glue rooll right if you remember that role is already created so whatever previously like previously whatever glue job we were running so we will all we were having this glue roll which had most of the uh accesses right uh if in your glue job you want to read the data from the S3 or the RDS right so now if your

glue rooll wants to interact with the red shift make sure in your glue glue roll you have given the uh full access for your red shift itself right So make sure whatever role you are going to use in your glue job it has the full access for your red ship so let's just click on the confirm and see whether the connection is successful so you can see the connection is successful means the crawler and other things which will be creating they will run completely fine right so connection is done now uh come we will come

to the part of creating the Crawlers right so uh you can see I have first I Already created a database in the glue catalog named as Airline data Mar so you can create that one and then you need to come to the Crawlers this crawler part okay so you can see these two crawlers the airline dim crawler Airline fact crawler right so very easy to create uh with respect to your red shift okay so let me quickly show you how you can uh do that let's say I will give a name to this uh temp

crawler okay so if let's say I want to Crawl the red shift table inside uh a particular schema so as a data source what we will be using we will be using the jdbc right and the connection so I will be using shift new connection which we have created and then after that it will ask the path for that table inside your red shift so you can give a path like this first your database name then schema name and then the table name so our database name uh is like this Dev and the schema name

is like Airlines and The table name was airport dim and then you can click as the jdbc data source click on next then IM roll uh the glue roll which we have and then click on next it will ask in which database uh of catalog you want to create this table and if you want to give some suffix name for your metadata table you can give that one and then click next and then create C crawler means your crawler for the red shift table will be created and then you can simply run that one so

I Can show you that this crawler I created for for these two tables the dream crawler it is uh pointing uh basically the particular table uh data source you can see right it is pointing to this jdbc source so this crawler is about the dimension table and this is our fact crawler and it is pointing to this data source like the daily flights fact which we have created okay and regarding and as soon as you run These two crawlers you will see the tables like this right Dev Airlines airport dims okay so you can see

uh this is how it will look like and another table if you want to see so this is our daily flight facts so this is the uh metadata for that one and now coming to the third call which will actually crawl our daily data so flights data crawler okay so flights uh data crawler So when I was showing you this example right I actually cleaned this up completely I actually clean uh this up so now the question is how we will see the field names and how everything will happen because uh in our architecture diagram

we saw that this is uh how we will create there will be high style of partition and inside that one we will be placing our flights. CSV and then the crawler will run and it will prepare the data so for the first time When you are creating the crawler because we need to create our glue data pipeline as well right we need to create our glue data pipeline so in order to create that one definitely you need to know uh the schema for it so that you can set up your pipeline what all things you

need to drop uh in the join operation what you need to keep so the better idea is to first uh maybe create a dummy Hive style of partition like say date equals to Some random date there then put up your flights. CSV and run your crawler at least once right run your crawler at least once so that accordingly your you are able to create your glue job because once the metadata for your daily flights is prepared only in that case you when you are creating your glue data Pipeline with the visual uh drag and draw

features you'll be able to see the column names which are present here and you will be able to complete your Pipeline right so this is how you can uh do that so same thing I did right the very first time I created this scrawler so I placed one file in inside this one and the only reason of doing that I can visually complete my ETL Pipeline and then I just deleted everything from here and I can simply do the entire injection process from the scratch okay so this is the crawler right which will Basically point

to our uh this particular S3 path right so this crawler we have created for the S3 location like this for the CSV file and as soon as you run this one right you will see this kind of table raw daily flights right so carrier airport ID destination airport ID departure delay arrival delay and the date because in the highway style of partition we will be putting the data in the S3 bucket so there will be a Partition column always named like date that is going to be there okay so our crawler parts are done now

the next thing which uh we are going to move on that we will create right that uh we will basically create a particular glue ETL pipeline right and once our our glue ETL pipeline uh is done we will move on to our event Bridge pattern and and the is of functions that how it is going to work on okay so let Me create a pipeline from the scratch so let's click on the visual ETL okay and we are going to create a fresh pipeline we will uh flight data inje okay so this is how we

are going to do that and how this pipeline will actually look like so the first thing we would uh have our Data right we will read the daily flights data from the catalog and we will name it like uh the daily data daily flights data and then Airline data Mark and and which uh table will represent the daily data like raw daily flights okay and here we need to choose the glue roll and click on start session as well okay so this is the first thing and then we will look at the uh schema of

it once it's just loads the preview and Schema we can already see here that it will have all these type of columns right so as soon as we get the files uh right what we would like to do we would be interested because here you can see we have 1 million of Records right in the 1 million of records and maybe for our analysis right what first thing what you can do you can basically load this entire 1 million record itself but let's say maybe to make it little bit uh interesting we would like to

analyze Only that kind of data where uh let's say flight was getting delayed by more than 60 minutes right more than uh 60 Minutes of departure DeLay So after this one right before even joining our data with the dimension table what we would like to do we would like to do the filter so let's add a filter condition okay so what kind of filter we would like to add here the filter is going to be this one let's say add condition here add Condition now you can see uh so for the condition departure delay right

so if we look at a schema right so here the uh departure delay arrival delay is going to be in this numeric form obviously so for the filter condition what we would like to do we would like to choose this operation uh let's say GR greater than and equals to and here the value is going to be 60 because in our data departure and arrival delay is in the minutes so departure delay greater than Equals to 60 only those kind of Records we would like to filter okay so this is the filter operation and now

the filter is done what we would like to do so this is the part where uh things get little bit tricky because as I said you can see we have the origin airport ID and destination uh airport ID okay and now what I want to do what I want to do I want to populate uh this Thing I want to populate uh this thing the airport details for the origin airport and for the destination airport so now the one question I want to ask can I achieve this thing in one single join op operation or

I have to do two join operations because I have to populate the things for these two uh airport IDs for the departure or the origin airport and for the destination airport is it possible to do it in one Join two join would be needed right two join would be uh needed and I will show you how that we how we are actually going to work on it let me show you because when you are doing the join operation in your right hand side table in your right hand side table right one record is representing one

a port code right one record is representing one year port code so that is the thing uh which is happening because when you are join Joining your left hand side data which is which is having the airport uh destination airport code and the um departure airport code and the arrival airport code so if you are writing your joint condition where let's say uh how should I say let's say this is one row from your flights data it is having two Fields the departure airport code and the arrival airport code and in your right hand side

table which is your dimension table it is only having one Record which is either representing your uh you can say departure or the arrival right so one row of your left hand side right either you can join for the departure or for the arrival okay you can't do it like and condition where departure airport code equals to this and the arrival airport code equals to this because that will act like a and condition which will never get satisfied right so you have to join it in the two step so that you can Achieve this part

part so after the uh Filter part what I'm going to do uh let's also first have our one source that source is going to be the dimension table so I will name it like airport dim okay and the database is going to be this and the table is going to be airport uh Dimension and here you can give a temporary bucket name so it would be needed and I already created a directory named a bucket named as red shift temp data inside that one Uh this is the folder I have created and you can create

the folder structure similar to that one and Airline dim this is the directory let's say I would like to use for the uh Dimension right now I am roll uh so this role which is attached with my red shift that I need to add here so red shift roll so this is prepared now after this filter step I want to do a join operation so first I will do it for the uh first I will do it for the Departure airport code and we will then solve it for the arrival as well so here uh

node parents in the join operation first filter this is going to be left hand side data set and this is going to be the right hand side data set to I will use use uh this one airport dim so right you can see this is how the join is going to happen I will be using the left join right and then the condition condition is going to be from the filter uh I will be using the origin uh Airport ID and in the right hand side table I will be using the airport ID so this

is how the join will happen and after the join operation as you know uh we would like to start keeping we would like to start keeping the column names as per our red shift uh table which we have created like the departure airport the depart uh departure State and the departure City so it's better after this step we can simply add one more of part which is change schema right so uh after This step I will call this thing change uh schema so here I can name it like modify departure airport columns okay so um

here first of all we don't need this date column moving forward so I can click on the drop so we will start dropping it and now uh this is how we will start uh naming it because this origin airport ID since it in this step we have already done the join operation For the origin so we will also drop this one because moving forward we don't need this one because for the origin airport ID we have already uh captured this information the uh departure City departure name and something like this so in our red shift

table how is named like the uh departure airport means the airport name will be named as this thing name should get mapped with this thing departure airport right similarly State should be named like this departure State because this joint operation we did for the origin airport means the departure hence I am uh changing the schema naming convention like this C City similarly departure city right departure City and this airport ID since we are dropping the origin airport ID we also don't need this airport ID as well because this is coming from the right hand side

table and I don't need It moving forward right and then carrier uh is fine we have the same name and then we have the departure delay and the arrival delay so here uh you can see this arrival delay we will name it like this departure delay we will name it like this okay and then let's verify the data type of it so only the departure delay and arrival delay are in the begin form rest everything is in the Ware so let's do it in the same way let's say we have the big Int yes so

big int I will delay this one this one and uh destination airport yes we need it because in the second step we will join it again for the destination airport and rest everything is fine okay so this is also done and since we have done this now we can perform our next set of join after this one we we will perform one more join operation and you can see the output schema of this operation departure delay arrival delay it only contains the destination airport Because that is the only join left carrier departure City departure airport

and departure State now let's perform one more join operation so join and here uh what we left hand side data will be this one right hand side data uh will be the again airport dim right and let's name this join for departure airport right and we will name this one this join operation like Join for arrival airport okay and let's follow the left hand side join condition so from this left hand side data we need to use the destination airport ID and in the right hand side we will be joining it on the airport dim

and again we can simply make a use of change uh schema right so let's add change schema to derive The Columns which we want right so all these things are fine we will drop this destination airport we will Drop this airport ID and now this city name is State this will correspond to the arrival one right so I can name it like arrival City and uh this name will be replaced by the uh arrival airport and this state will be named as arrival state right so this is how this final uh will look like and

after this step we simply want to inest data in the red shift right so for that one again we will pick the load operation and this task we will name uh It like this uh modify modify arrival airport columns okay and after this one let's add one more step which is the glue catalog and where that is added okay it is added Here so let me delete it it is replicated uh delete this one so the this is my last step here I can add a node like [Music] this and here trans so this this

glue catalog and uh where it is going transform change [Music] schema this is the node parent from oh sorry I need to add a Target um my bad so after this one uh we need to come here we need to go to the Target I was just using source source again so catalog so I needed this one red shift Target table I needed to use the target so this is going to be our Target now and in which table we want to Load uh flight facts okay and uh the temporary bucket red shift temp data

fact right and then the IM role associated with my red Shi okay so now after doing so our pipeline is ready so flight data inje okay and uh we will come here and we will provide the worker related Details uh already pretty much done here just the last thing would be needed the job parameter I job name light data inje okay and let's click on save so our pipeline is ready now uh right and our data sources data warehouses all those things are ready now the remaining thing is how we are going to run our

how we are going to Orchestrate this process because orchestrate orchestrating this process process includes multiple steps because we are going to read the data from here and one more thing I forgot because we would like to do the incremental load process uh all the time so it's better to enable the job book marking as well so I will do this thing enable job book marking and then save it one more time okay so if we look at it here in order to AR Orchestrate this process the thing which we needed as soon as the file

is landing in the S3 it will send a trigger to the uh event Bridge pattern rule event Bridge pattern will uh simply capture that one and it will trigger the step function and what our step function will do step function will trigger the crawler it will wait for the crawler to get finished once it is finished it will trigger the glue job if glue job is failed it will send the failed uh you Can say SNS notification if it is succeeded then it will send the successful notification okay so first let's see how the event

Bridge rule is going to look like and in order to send in order to send the uh pattern right to our event Bridge what we need to do I hope you all remember now we need to go to our bucket and we need to enable the event Bridge uh notification so we need to go so this is the bucket we have created from this bucket some sort of Event Bridge notification we want to send so that event Bridge rule can capture it we will come to the properties and if we go down here uh right

we can see this part Amazon event Bridge so you can go to edit if it is not turn on you can simply uh turn it on so that the patterns or whatever uh operations like creating the object all these things we are going to do it can get captured by the it can get captured by this one the Event Bridge okay so now uh let me show you the step function that we will create for it so again going to be very simple one and I in the previous session I already told you what is

the best approach to create the step function because sometimes you don't know you don't know the input and output to a particular state in your step function so creating it step by step will always help you and that thing I already told You in the previous session so follow the same approach here I will give you the work through of complete uh step function which I have created but now you are pretty much aware that how to look at the input and how to look at the output and how to pass it in the input

filter output path so that a specific set U of uh the specific set of data you can get uh from the uh State response which we include in the step function and how You can take the decision based on that one because in the step function everything is going to be a API call for the different different uh States we are going to include and every API call will give you some sort of responses based on the responses you can apply the next check and you can take the decision accordingly right so this is one

of the uh States or you can say stuff function I have already created and you will see that how it is uh actually working so Let me click on the edit part and I will show you that what all things needs to be done here right so now when when you will start from the uh step function it will be completely blank right so we need to orchestrate it now right we need to orchestrate it uh step by step and how we are going to do it so the very first step as soon as our

event bridge will trigger uh as soon as our event bridge will trigger this uh Step function so the very first thing this step function needs to do that is starting the crawler right so that whatever file we have uploaded in the new high style partition the metadata can be prepared for it and then we will be having the um metadata for it and we can simply read it so first step what we are doing again you can uh browse all those things here like crawler related operation if you type it here you have the options

like The get crawler stop crawler get get crawlers start crawler so we would like to start a particular crawler and again as I said everything is going to be a API call for you so it will give you some input some sort of output right and step by step you will be able to see it how it looks like so I included this step start crawler so in the start crawler what it will ask you it will ask you to provide the name of the crawler right so here if I take you Back and the

Crawlers so this is the crawler I created to crawl the files in the S3 named as flights data crawler okay so this is my state machine right you can see in the name of the API parameters I have given the name of the crawler which I want to start right rest you can keep it as it is okay and input output I will show you right let me first explain it till this weight condition right then I will show you uh because I have done some previous Execution so there you will be able to see

what kind of response this start crawler will give so that you can take some for uh decision so as soon as the crawler is started right this is very common thing and I already told you because your crawler might even take uh two to three minute or 4 minutes to just call the new partitions the data which has been created right so it's better as soon as you started the crawler it's uh better to just check the status it's Better to check the status of your crawler like if it is already running then what you

would like to do you would like to go in a weight condition you might wait for 5 to 10 seconds and then again uh call this get crawler API because this get crawler API what it gives you it gives you the current execution state of the particular crawler and based on that you can check the status of it whether it is still running or some failure or success has Happened to it okay so start crawler here in the next step what I doing I am uh calling this uh step which is get crawler so again

get crawler this is kind of API so here you need to give this thing flights data crawler so get crawler and after that what I have done I have added this Choice State you remember Choice state which gives like the if else condition so I have added the choice State you can simply type it here you can just type like this choice And you will get this one right the choice State and as soon as you have the choice State here you need to give the rules right and this is is how we are going

to give it the default so since this is like the if else condition so if this condition is succeeded means whatever is the response of the G crawler inside that one there will be a parameter named as crawler dot State like the Json path we Are giving we are checking the value of it if the value is running means my crawler is still running so what what will happen so the choice state if this is the rule one right what I will do I will add one more uh you can say state which will be

a weight state so you can type it like the weight State you can see delays for the specified time so as soon as I go to this wait State you can see wait for a fixed interval means I'm Waiting for 5 seconds and in the next state since I'm in a weight condition after 5 Seconds what I would like to do I would like to again just uh go to this get crawler state so that this is like a conditional Loop so this is like a conditional Loop that it again uh called this get crawler

and then again checking the status of it and then again coming back here correct so this is how it is checking it after the five or 10 second whatever kind of delay you want to put Here right and let's say uh if it did not get the running status so here we are assuming the succeeded or the running these kind of status we are getting so if it is not in the running State means it has been successfully executed then we are going into the another Direction so if I again come back to the choice

one so in the rule one if I click on this one similarly you can edit the condition you will get a option for the add condition So there you need to provide the path for it that what kind of condition you want to put up okay so you can click on this one edit condition so this is how it will look like where you can give the variable how you are fetching it matches the string means equality comparison and then value should be running okay and when uh it is in the running State you Are

uh so when it is in the running State what you are doing you are calling the next state which is weight State and weight state is again going back to the get crawler state so that it can check the status in the loop right now in the default run means if the above condition is false then what is going to happen we will go to the next state which is nothing basically the glue start job Run state so let's see let's click on it and here you will see we would like To start a glue

job right so here we have created a new job which is this one so let's go here and we will use this new job name here which is flight data injection so let's use that one okay so I have simply used this glue job name that this should get start and here I will enable this thing wait for task to complete right so there could be multiple options maybe as soon as this blue job started some sort of errors or Issues were there so instantly it will go into the fail condition right so let's say

if it is failing some sort of exceptions are coming here so that is the reason you can see in the error handling I have added one catch errors part so in the catch errors like if this task is in the failed status then the next state which it should directly go to that is nothing basically the SNS publish state right means from here I can add one more State named as like SNS Publish and I can provide the detail like what message I would like to publish and what what is going to be the uh

topic AR right so that will be my uh you can say that will be the next fall state if some sort of exception occurs in my glue job while starting it and here since we have enabled this one wait for task to complete right so as soon as it gets complete maybe with some failed status or with some success status so Again I will add this Choice State here and this Choice State what it will check it will check the final status of our glue job because failure with the glue job can happen in two

steps right maybe when you are starting your job run if during that time some exception is occurring so you are catching it directly and you are sending the failed notification but let's say your job has started and maybe while doing some transformation it is failing then you Will be able to capture it here right as a final status failed or succeeded so again I have added the choice State and in the rule one right means in the if condition what I'm putting so in here the start job Run state of a glue job will send

a response where there will be one parameter named as job Run State and that has to be equals to succeeded if that is the case I am sending a notification like this to SNS glue job execution successful if Succeeded is not the case then I'm sending the field notification with the state input message itself okay so this is how the state machine is going to look like so I will simply save uh this one and I will do the exit from here and I will show you these operations because I have done this previous execution

I can quickly show you the input output how it looks looks like so you can see this is the Successful Run start crawler so input means we given the name of the crawler which we want to execute right so this will be the input to our task and what kind of output it will give uh just a moment this one so it is it is not it is not um giving some sort of output path but as in response it will send it to the get crawler directly as an input so that's what I said

uh you might not be Able to find what kind of inputs you are getting to the next state so it's always better to create get your orchestrated flow step by step and based on that you can apply the filter the way I was writing let's say crawler dot state that kind of Json path right so you can see in the get crawler this is the kind of uh input you are getting so crawler object and inside that one you are getting this type of uh State stopping running right so this is the kind of States

you will be having so as soon as you have the get crawler what you did you applied this Choice State and in the choice State what is coming as an input right this entire response of the G crawler Right is coming as a input that is the reason what I did in the is running condition I use this one if I minimize all these things right if I minimize all these things then you will be able to see that this was the crawler like dollar. crawler do estate So I gave it like a running okay

so same thing happened here in the glue job run right in the glue job run uh when it will be succeeded or stopped we got that kind of input in the start glue job run and in the input parameter we given the job run name which had to be executed and uh here as a task result as a task result what this start job run has executed you can see right this is the kind of output this particular glue start job run will return right so this Kind of output it will return to the next

state and you can see the job Run State and I am simply using this parameter in the next choice state right I created this Choice state if you remember and what I am getting here as an input I am getting this entire response as an input and that is the reason I applied that filter dollar do job Run state if that is succeeded I am sending the success notification if that is not the case I will be sending the Failed notification so when you are orchestrating it step by step add your step so that you

can check what kind of possible status you can get and based on that you can apply your Json path filters in the conditional check like the choice state so the step function is also pretty much done now the next thing is the event Bridge rule so let's quickly let me quickly walk you through With the event Bridge rule okay uh let me click on the edit part so this is the rule name I have created like rule with an event pattern so click on the next right then uh here okay let me show you that

how because here in this one we had to make a custom change so I will show you how to create it from a scratch so let's say uh flight data inje rule okay so rule with an event Pattern so click on next uh here what you would like to do uh just keep this one AWS events or event Bridge partner events and then use pattern form right so AWS Services what we need here we are applying this pattern for the uh S3 create object so let's type the S3 and the event type uh event type

will be uh Amazon S3 event notification right so as soon as you do this any specific event I want to apply It on the object created right any bucket no for a specific bucket and our bucket inside which the data is going to be landed that will be this thing Airline Landing zone so I will SP specify this one right so now you can see that this is the simple one it has created but the drawback with this event pattern that in our S3 right we will be always we will be uh creating the files

with the hive style of partition So this is also one of the object right which you are creating and inside this bucket even for the first time you are creating this folder this is also the object right so in that case what will happen your event Bridge rule will get triggered and it will trigger your step function as well although it will fail because there will be no table or no metadata as such so it's always better that whatever pattern we have written uh make sure we can simply add some custom Suffix as well right

and that custom suffix is going to be nothing but a simple suffix on the key right like what kind of file extension you are expecting here so you can simply click on the edit pattern right and you can add a suffix like this okay I will show you the event Bridge rule Json so you can see right this is the only extra thing you need to add here like on the object right because as soon as you upload load the file in the S3 so if you remember right When you are capturing when you are

integrating S3 with Lambda Lambda was getting something as a event trigger so the same sort of event our S3 object create notification will generate which we are simply parsing in our pattern so same sort of rule I'm writing that there will be object keyword inside that key and suffix will be CSV okay so I will simply copy this Rule and I will simply apply here and let's click on the next and then you can Select the target like which Step function or that thing you want to call so here I would like to call the

step function State machine uh so that state machine is Airline data Pipeline and then the uh you can say the role will get created for this particular uh for this particular event Bridge rule you can click on the next and then it will get created so as soon as this event Bridge rule is getting created and one IM Ro will get attached To it so you need to make sure that you are giving the permission to this IM Ro to invoke a particular step function and also to listen something from the S3 so S3 full

axis and the step function full axis needs to be added in this IM roll permission for your event Bridge uh rule same for other IM roles as well so whatever IM Ro you are attaching in your glue make sure it is able to read the data from S3 means enabling the S3 full access and also enabling the Red full access okay so since I have already created one uh Airline dat step function I will enable this and also I will make the changes inside that one uh or changes not needed because in the step function

I'm calling a different job so that is fine I will simply enable this thing so now this is enabled so what we can simply do now we can uh just probably uh delete everything from here right and we will Create a new high style type of partition and we will upload a file there so that everything can run from the scratch so create folder let's say 9th of April create folder right so now you can see the create folder is there so if you want to check whether your state machine got executed or not right

there will be no execution as of now because The reason is the way we have written our event Bridge rule it is listening to the particular create object event where the suffix is ending with CSV okay so now what I'm going to do I'm going to upload the file here the flights. CSV open just upload it so data is uploaded now and let's come to our state machine now you can see State machine is in the running State so you can see start uh crawler and let's see you can see right it is checking get

crawler it is in the weight condition and now you can come here and you can see your crawler is also in the running State and if you come to your uh particular State machine you can see is running was checked now it is in the weight condition and you can see the task here which are getting uh created get crawler after 5 second then again get crawler then again get Crawler so it is waiting it has still not uh gone to the particular step of starting your glue job right so you can see it is

uh running so let's wait when it will go to the next step now you can see it has gone to this particular step and let's see about our glue job flight data injection and coming to the runs so you can see our glue job has also triggered so now you can see this is Failed okay and let let see uh what would have happened with our uh State machine because the glue job is failed and you can see the expected Behavior right that the failed SNS notification has been sent okay so it has uh come

here on this Gmail uh IDs okay you can see this one right the task failed cause this particular SNS notification I have got here right and this is the now interesting thing which I want to talk About so this is a kind of bug okay so this is the kind of bug with probably their visual ETL or whatever it is so let me show you so if we come to this pipeline so whenever we are creating uh this thing Let's uh come here uh just so let's come to the edit job right so you look

at this part this is the airport Dimension right this is the airport Dimension and uh here we already provided this part right we already provide if you remember in the beginning itself we provided this part uh which is the temporary location for the S3 in the read operation which was this one we already provided it right and let's click on the save button itself right this error is actually coming for this part itself right the temporary path related to the red shift operations like reading Uh it so if now we come to the script path

so if you look at this thing where the airport dim airport Dimension table which we are reading you can see although we are mentioning the temporary path although we are mentioning the temporary path for the particular um what I should say this particular airport Dimension table when we are reading it you can see the data database name table name transformation Context these things are getting added but although we are adding the bucket path for the temporary location it is it is not coming up here right so that is the sort of bug I have seen

I'm not sure if anyone else has tried it yet and they have pointed out this bug or not but I have found this bug that it does not appear here but for the right operation when you are doing it in the Target right if I show you the target here or maybe clicking on the not yet just a Moment so if you come to the red shift uh Target um not sure how uh you are able to look into it how much it is visible but uh I will show you so you can see right

this is the last step on the line 43 where this red shift temp directory is actually coming up right so for the Target it is actually coming up but for the source it is not and that is the reason our glue job is failing so now what we need to do first thing first obviously as soon as You click on the edit descript your visual ETL will just completely gone so that is what we will do now and what you simply need to do you just need to copy this part uh this temp directory kind

of parameter from here I already have here just let me copy it from here uh this thing okay so you need to add it manually now so as soon as you click on This one and save it obviously that thing will be gone so airport dim right and now click on the save and now we will uh do the same right so now since our job is failed nothing is loaded as of now so either you can give a manual trigger because you can simply give a manual trigger to your uh what I should say

estate machine and uh it will just run your crawler but it has no new file to uh execute but uh whatever you have Uploaded uh right it will be able to read that one because although we have enabled the job book Job book marking but there was no data to read so maybe same thing we will try to do uh this is our state machine we will give a manual trigger to it right uh new execution get crawler we are giving the crawler name so that is fine I can simply click on this one so

it has picked the uh previous you can say uh previous input which was Received to it so you can simply click on this one and you can just click on start execution with the previous input parameters it will again uh just run this one so let's click on it and start looking at the state of it so it is in the weight condition now crawler is again running but it will not update any metadata because the Previous uh one it is actually the previous file which we uploaded it is looking at that one so the

crawler is running it will start the glue job we'll just wait for this one the blue job is triggered now let's refresh it wait uh given the incorrect bucket name just a moment uh I copied the incorrect bucket name and it job So it is like this temp data and inside that one I have created this is the bucket name let me cross verify shift temp CDs temp data Airline hyphen dim just a moment let me copy Airline hyph dim okay so let's run it one more time execution stopping now so I hope our glue

job will start in a second now it Has started let's wait for this time it should be successful ideally meanwhile it is running you will see that it is waiting here right as we put the condition uh right that once this glue job starts so there should be a task wait condition so it is waiting for this task to complete either with a succeeded or the failed status so here we can see that it is succeeded okay it has Completed here might be uh some delay in the responses so here it checked the success notification

it has uh send the success notification as well let's see we have got show so here yes glue job execution successful let's verify this thing from uh this data itself five so you can see the data has been loaded here and all these departure like all these records are actually coming Where we put up the condition for the departure delay greater than the um 60 Minutes greater than equals to the 1 hour basically the 60 minutes okay so this is how your end to end pipeline is going to look like from the start till the

end but one more thing that when you are going to orchestrate all these things and specifically while running your glue data Pipeline with the where you are using uh the red shift as Your Downstream system right so when we were working with the S3 Etc uh RDS basically then creating the S3 Endo was the enough but when you are working with the AWS glue and you are actually orchestrating it uh from your your step functions you are running it so your glue job ultimately you can see right first thing first what it is doing it

is publishing the logs in the cloud watch uh as well so that is the first thing and uh second thing uh you are just Running your blue job which should work with your uh red shift itself right so in the orchestrated manner when you are doing it so apart from the S3 and point for red shift you need to create two more end points right you need to create two more end points so that your glue job is running successfully even when you are orchestrating it right else you might face some sort of errors issues

right so apart from the S3 endpoint I will show You that two more endpoints which you need to create so first Endo is the cloud watch monitoring endpoint that is what you need to create right and you can see uh the VPC uh ID right so in in the VPC in which basically our red shift is uh located right so Cloud watch uh monitoring endpoint I am creating for the same uh right so very simple to create earlier as we did so create endpoint you can let's say give the cloud watch and similarly you can

find That one so here you can type the monitoring so you will get this one com. Amazon aw. us east1 monitoring so click on this one just select this part VPC so this one 389 that was our default VPC which we were using earlier and then you can simply go to your red shift and you can see in which uh security group or basically your subnet uh itself this is the security group uh this is the security group and let me see what we need to put up Here the subnet okay uh just a moment

where is my glue job for glue jobs you will not be having these things job details properties will be no VPC connections this one so whatever submit uh right you will get this detail inside the connections because the red shift connection we are using so this was the VPC and this subnet right so make sure the monitoring Endo which you are creating so have the same subnet look for this one uh which we1 we have 38 D3 so the same subnet uh not 38 D3 so this one right and then ipv4 and probably the security

group so if you look at this one what is the security group so 105 so 105 is the security group so select that one full access here and Then simply create the end point so in the similar way following the same subnet VPC and the security group you can create the glue VPC endpoint so in the services you need to Simply search the glue so let's say click on the end point here and in the services type just glue so you'll be getting this service uh simply itself and after that same step the3 9 and

this one the 38 D3 and this 105 Security Group basically that subnet that security group that VPC in Which your red shift is running simil so for that one simply you need to create the cloud watch endpoint and the glue endpoint right and S3 end point is definitely mandatory even if you let's say using RDS as a connection in your glue job or the red shift so in total these three end points would be needed once you do all these things and again with for red shift specifically uh your Port should be exposed there in

the inbound rule the Security groups and whatever Services you are creating if you're creating event Bridge rule make sure in the IM role of that event Bridge rule the step function permissions the S3 permissions are added from your glue job you are going to interact with the red shift and with the S3 so in your glue job IM role make sure red shift full access is there S3 full access is there similarly for your red shift it will be interacting with the S3 so make sure it is having The IM Ro attached to the red

Shi has the full access to the S3 so all those things you need to take care and then you will be able to run this pipeline end to n successfully what we did here so that is what I had for all of you in this video where I implemented this Airline data injection project n to n using AWS if you found it informative make sure to give a like in the big numbers if you're new to the channel make sure to hit the Subscribe button And press the notification icon for all such type of amazing

videos and again let me know your thoughts opinions about this project in the comment section let me know what kind of new data engine project you want to see in near future I will be creating such type of video as I already said that and again don't forget to join the data engering boot camp for the AWS or the Azure like n2n open source plus the Cloud so everything you are going to get here at a single place Link has been given in the description or you can visit ww. grata skills.com I will see you

guys really soon with another amazing video till then just keep exploring data

![PySpark Full Course [2024] | Learn PySpark | PySpark Tutorial | Edureka](https://img.youtube.com/vi/sSkAuTqfBA8/maxresdefault.jpg)

![Hands-On Power BI Tutorial 📊 Beginner to Pro [Full Course] 2023 Edition⚡](https://img.youtube.com/vi/77jIzgvCIYY/maxresdefault.jpg)

![React Tutorial For Beginners [ReactJS] | ReactJS Course | ReactJS For Beginners | Intellipaat](https://img.youtube.com/vi/9vZ8ELqerPo/maxresdefault.jpg)

![Hands-On Power BI Tutorial 📊Beginner to Pro [Full Course] ⚡](https://img.youtube.com/vi/5X5LWcLtkzg/maxresdefault.jpg)