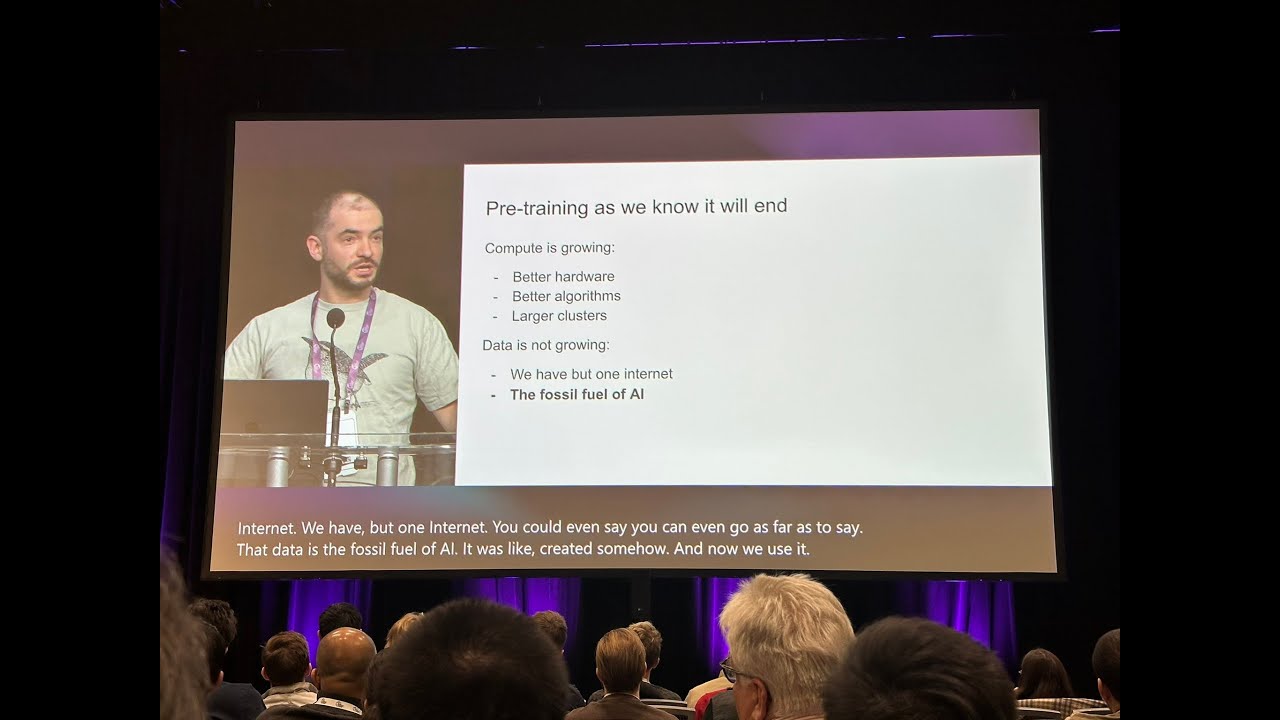

[ MUSIC ] Sarah Bird: Hello, good morning. Thank you all for coming bright and early to our session about "Trustworthy AI". I'm Sarah Bird, and I lead Responsible AI Implementation at Microsoft.

And today we're really excited to tell you more about what we're doing and how we're helping you make AI trustworthy. I've got quite a few people joining me today in this conversation, and so we'll bring them up throughout the course of this. But I'm going to kick us off.

So you've been here for a couple days. You probably knew this before you came, that the AI transformation is happening now, right? We are seeing an enormous amount of excitement and adoption of this technology.

And it's really only the beginning. And the reason I think there's so much potential in this technology is that it actually meets people where they are. It helps speak the language they understand, and use the jargon that they understand, and it helps them bridge to all other systems.

And so this can be an enormously empowering technology for individuals, for organizations, for society. And I think we're just only at the beginning of what we're going to do with this. However, we can't just take for granted that this is just automatically going to happen.

People are also justifiably concerned about the technology. If we have a technology that is this powerful, that we're using everywhere, we need to build it in a way that people trust it and that it's worthy of that trust. And so, making our AI systems trustworthy, we see as foundational to our entire AI approach.

And one of the things I love about doing this at Microsoft is that our leadership gets that from the beginning. We can pull any number of quotes from Satya, or Brad Smith, or any of our leaders, about how essential it is, as the creators of this technology, that we also deliver the ability to use it responsibly and to build it responsibly. It's core to the development of the technology itself; it's not something on the side.

And so let's talk a little bit about how we actually put this into practice. So we have AI trust priorities. And there are three big areas that we look at here.

The first is security. This is an area that Microsoft has been working in for a long time, and it is a great foundation for us to start from and extend in terms of how we look at implementing trustworthy systems. We also look at privacy, which we see as a fundamental right, and something that's incredibly important in how we build applications.

And then of course, safety of our AI systems. We want them to be safe and reliable, and behave in ways that we expect. And of course, to make sure that you're successfully implementing all of these pieces, we have to look at governance, right, to make sure that you understand your AI systems and the right testing, the right checks, the right everything has occurred in place, the right people understand and have signed off.

And so we are hard at work across Microsoft in each of these areas to make sure that we are implementing AI in a way that is trustworthy and we're making it easier for you to do as well. And so we look at two dimensions here. One is the commitments that we make about how we are going to do this, and how we're going to support you in your effort.

And then it's not enough just to make commitments; we want to make it easy for you to do this. And so we also deliver capabilities. So to talk about these in a little bit more detail, the first thing is our Secure Future Initiative, which is a new effort ensuring that we have security built into all of our systems, and that we're helping make the world a safer and more secure place.

We have privacy principles that we adhere to, we have AI principles that we adhere to. And all of these are the foundation for how we're developing this at Microsoft and commitments that we make to you. But as I said, it's not enough just to make commitments, we need to make this easy to do in applications.

And so we developed capabilities across each of these dimensions. And here is just a list of just a few of these capabilities. And we're going to show you some of them today, and demo these as well.

But we also have deeper sessions on each of these areas. And so there are quite a few different trustworthy AI sessions that I recommend you check out, where we can go deeper and talk about each of these. And so, to start at the top with security, I want to invite Herain Oberoi, our GM of Security, to come up and talk more about what we're doing here.

Herain?

[ APPLAUSE ]

Herain Oberoi: Thank you, Sarah. So good morning.

So as Sarah mentioned, when we think about AI transformation, we talk about AI transformation starting first with security. And that means a couple of things. So when we think about security, we first want to think about, what are the top-of-mind concerns that we hear from customers?

So I've been working with customers deploying Microsoft 365 Copilot, as well as customers who are building their own custom copilots and chatbots and things like that. And there are a couple of conversations that happen over and over again. The first one is around just giving a sense of visibility.

I have customers tell me, "I'd like to just understand what's going on in my organization: who is using AI, how are they using it, what's the information that's being handed to it, what's coming back?" And so this idea of just getting visibility into "What's going on in my organization?" is the number one thing that comes up.

The next one is around data oversharing and data leaks. The conversations on those end up being, "Well, we've got people using, whether it's Copilot or ChatGPT or Gemini, and I really want to know what they're typing into those prompts, and does that include sensitive information about my organization? Am I copying and pasting things from a confidential document into the web? And then the responses that are coming back into my organization, that's data as well, and so how do I think about the sensitivity of that piece as well?"

And then the last piece is around this idea of emerging risks and threats. In the security world we talk about this idea of an application having an attack surface, and that attack surface creates what we call "threat vectors". Well, when you have AI applications, we've now got these new attack surfaces like the model itself, the orchestration layer, things like the training data, the fine-tuning data, and all of those lead to new types of vulnerabilities and new types of attacks; and some of them are things like prompt injection attacks, jailbreaks, so on and so forth.

And so those are the three big things that we typically hear. From a capabilities standpoint, there's of course a much more detailed list that we can get into. But at the highest level, the way to think about this is there are capabilities to help you discover the risk in your organization around your applications, and then manage the vulnerabilities.

By discovering risk, we have capabilities like data security posture management, which gives you a dashboard of what's the sensitive information in my organization, how is that getting fed in -- going in and out of different AI apps, and then also how are users using these apps, and can I classify users as being high-risk or low-risk? When I talk about managing vulnerabilities, you can do things like posture management, which is getting an inventory of all your assets and then being able to scan them and look for things like vulnerabilities. On the other side, you also have the ability to prevent data leaks and data oversharing.

So the data loss prevention capabilities we've been using in Microsoft 365 for a long time, we can now apply to the chat conversations that you have with your GenAI applications as well. And then the last one is around preventing prompt injection attacks and deploying secure models; so being able to scan models in the Azure AI Model Catalog as well as identify vulnerabilities in these models is something that we have. So this is just a high-level view, but there's much more that's under this, and we also have deeper dive sessions that go into it.

Now, when I talk about sensitive data, you can see here that you have to think about what data is going into your application, and then, because it's a GenAI app, it's actually generating content or generating data, and so you have to be able to understand the sensitivity of the data coming out. The last piece that you see at the bottom here is just having that visibility, as I mentioned earlier, into what AI apps and controls are being used in the organization. Now, one of the products I look after is Microsoft Purview.

Microsoft Purview is our data security, compliance, governance, and privacy product. And we've been working for over a year now using Purview to secure and govern data in Copilot for Microsoft 365, or Microsoft 365 Copilot. The feedback we got from customers was, "This is great, but I also want those same data security and compliance controls on my custom Agents that I'm building with Copilot Studio."

So yesterday at the keynote, you heard Satya talk about Copilot Studio and how you can build custom Agents using sort of low-code, no-code ways. And so what we announced yesterday with that was also the ability to apply Purview data security and compliance controls to Copilot Studio. So with that, you also get visibility into the interactions you have with your custom Agents, in that data security dashboard that I mentioned earlier.

You can continue to detect risky use of that custom Agent and any kind of risky use will show up for your administrators in the dashboard as well. And then finally, all the compliance controls such as auditing, eDiscovery, life cycle management, that we apply today to other types of applications, you can apply those to your custom Agents as well. So what I want to do is show you a quick demo on how this works.

So over here what you see is my Copilot Studio. Let me just play this. I'm in the Studio right now, and I'm going to point out a Contoso Agent that I've just built. And in that Agent, I can point to a specific SharePoint site, which is where it's getting the data from; I can deploy that to Teams, and now that Agent shows up in Teams like another user.

I'm going to ask it a question about a confidential project. Now, the user that's asking the question has the ability to access that information in the SharePoint site. And so what it's going to do is it's going to summarize that, and it's going to show me the response.

Now, you'll notice that the response has inherited the sensitivity label of the SharePoint site, and you'll also see that, in addition to inheriting the sensitivity label, it will also cite the source document. So that's an example of being able to classify the information, inherit the sensitivity label, and then cite the source document that the label came from as well. Now, I'm going to switch gears and be a user that does not have access to that SharePoint site or doesn't have access to that content through a DLP policy.

And so when that other user asks the same question, "Summarize this confidential project for me", I get a response saying, "I can't do that for you." Now, the next view you see here is that data security dashboard that I mentioned. And here you can see different reports about usage, you can see details about the type of sensitive data that's been used in my different AI apps, and you'll see Contoso is in there as well. And then finally, on the bottom right-hand side over here, I'll also be able to see the risky users and the risk level of the users using your different AI apps.
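The behavior in that demo (a response that inherits the sensitivity label of its sources, cites them, and is refused for a user without access) can be illustrated with a minimal Python sketch. Everything here is a hypothetical stand-in: the `Document` and `AgentResponse` types, the `answer` function, and the label ordering are assumptions for illustration, not Purview or Copilot Studio APIs.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set

# Hypothetical label ordering; real sensitivity labels are configured per organization.
LABEL_RANK = {"Public": 0, "General": 1, "Confidential": 2, "Highly Confidential": 3}


@dataclass
class Document:
    title: str
    label: str
    text: str
    allowed_users: Set[str] = field(default_factory=set)


@dataclass
class AgentResponse:
    text: str
    label: Optional[str] = None
    citations: List[str] = field(default_factory=list)


def answer(user: str, sources: List[Document]) -> AgentResponse:
    """Summarize only what the user may read; inherit the strictest source label."""
    readable = [d for d in sources if user in d.allowed_users]
    if not readable:
        # No accessible source: refuse, and attach no label or citation.
        return AgentResponse(text="I can't do that for you.")
    # The response inherits the most restrictive label among the sources used.
    label = max((d.label for d in readable), key=LABEL_RANK.__getitem__)
    # Stand-in for a model-generated summary: just join the source text.
    summary = " ".join(d.text for d in readable)
    return AgentResponse(summary, label, [d.title for d in readable])
```

Calling `answer` for a user in `allowed_users` returns a labeled, cited response; calling it for anyone else returns the refusal with no label and no citations.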

Okay. Next, I want to talk about privacy. Now, when it comes to AI systems, I always say, "Data is the fuel that powers AI", and in so many use cases the data that you're dealing with -- and when we have the panelists come up here as well, we're going to talk about the importance of being able to identify and work with data that includes private information.

So being able to handle that information, it's not just about the data, it's also about dealing with the regulations and laws around privacy that you have to be able to work with. And so when we talk about capabilities for privacy, we look at things like how do you automate privacy assessments in order to kick off a project that's dealing with confidential information. We have a panelist here from the healthcare industry who is thinking about HIPAA: you've got to do your privacy assessment up front before you kick off the project. If you're building an application that's dealing with PII, you have to be able to think about how you get consent from the end user, and be able to customize the consent for your specific region or the regional regulations that you might have.

And then last is around this notion of confidential computing. So privacy isn't just about encrypting the data at rest when it's in storage and encrypting it when it's in motion over the network, but this idea of encrypting data in use, so while it's being processed as well by the data processor, ensuring that even the data processor will never have access to that data because of the encryption. So I'm going to switch gears again and show you a quick, quick demo of some of the privacy capabilities.

So here I'm a developer. I'm being asked to do a self-assessment around a particular project that I'm working on. And so I'm going to give it details of the project and then I get asked, "Does this application use any organizational data that requires privacy?"

I go ahead and say, "Yes." And the next thing it tells me to do is, "Okay, well, in that case, you need to build a consent form." And so, here in Priva I can build a generic consent form.

I can go in there and then start to customize it for my specific use case. So I'll give it the details of the project name, the description, and I can go ahead and then select the specific layout. I'm going to do that from a mobile device over here.

I can provide details around the description that I want in the consent as well. And then, once I'm done, I can save that, and then I can publish and deploy that directly into my CDN. I have a choice of doing it in Azure, AWS, Cloudflare, et cetera.

In this example I'm going to go ahead and just deploy that consent in Azure, and then once I've done that, my application will require consent before an end user sees it. Now, the point about doing consent with a centralized tool like Priva is that you want to do it at the domain level, and you want to manage the consent that you get centrally versus having to do it individually for each application that a developer is building. And so typically you're going to have a privacy team that's going to manage the consent.

We have legal teams that work together. And so having the consent in a centralized place and doing it in a consistent, standards-based way is the reason why we do it like this. So, with that, I'd like to invite Sarah back onstage to talk about safety as well.

So over to you, Sarah.

Sarah Bird: Thank you.

[ APPLAUSE ]

So with generative AI applications, we see new types of risk.

And we actually have a much larger taxonomy that we use behind the scenes here, but to talk about the big categories we see, one is the ability of this technology to generate harmful content and harmful code. Another one, which Herain was just talking about is the new types of attacks and vulnerabilities we see in the system: jailbreaks, prompt injection attacks. We also know that it is really top-of-mind to ensure that you're getting quality outputs in these systems.

And so ungrounded outputs and errors are another risk that we look at. We also want to ensure that the systems are producing material that we have a right to be using, and so we look at where the system is accidentally producing potentially copyrighted material. And the last one is, as I mentioned at the beginning, one of the things that's so exciting about these systems is that they speak human language, and they can interact with us directly.

But there are risks that can come with that, and we want to make sure that people understand that even though the system has human-like behavior, it is not human, and they are not misled by that. So we look at this range of risks and many others when we're thinking about the safety of the system. And the way that we address this is a defense-in-depth approach.

We don't have time to talk through all of the details here, but as I said, we have a lot of other sessions where we'll go into more. But the idea is that there are layers: starting with the model, then adding an outside safety system, then guiding the system with appropriate data and programming instructions as the system message, and then designing an experience with the user at the center, making sure they understand and are empowered. Every one of these layers is critical to how we think about implementing safety in the system. And we need to make sure that they're all working together.

And so evaluation is an important part to actually test your system and make sure your defenses are working before you ship it, and after you've shipped it. You want to keep doing this in an ongoing way. So I'm going to talk about a couple key pieces here.

So first of all, we have many safety capabilities. As Herain said, this is just a high-level look at what you can see from us. But we have guardrails to protect your generative AI application, and I'm going to show you that in a demo in a minute.

We have the ability to evaluate and test your different mitigations to make sure your system is functioning the way you expect. And we have the ability to monitor so that you understand, as your system is running, if it's doing what you expect. And so I want to jump over here and actually show you the guardrails in practice so that it's not so abstract.

Let's make sure I can -- here we go. And so this is our new AI Foundry portal. And we have a lovely "safety and security" section here where you can discover more of the full set of capabilities we have.

And I want to show you one today that addresses that middle risk around the system producing ungrounded outputs, which means outputs that are not aligned with the data that you gave the system. So this is a little demo we have built in so you can see how it works. I'm going to go to "summarization" and grab the first one here.

And I want to show you our newest capability, which is "correction". And so what I have is grounding sources, and, for the demo, a completion that has some errors in it. And I'm going to point it at an OpenAI model that we can use.

I'm going to hopefully type everything correctly. And we're going to run this test. The fun thing about live demos is you hope it's going to work.

And so what we see is that the system is able to detect "a 20-year-old student" in the completion. We have a longer completion than that, but it can detect that that particular part is not correct. And it actually then rewrites it so that we fix the error before it even makes it out to the user. And so here is the new completion that's been rewritten.

It does correct the fact saying it's actually a 21-year-old. And so these are the new types of capabilities we're developing in our guardrail system to make sure it's addressing these risks that we see in real time. And so we can go further than this.

This is just one example. But I want to show you the system overall. And so I can go here and create a content filter.

And what you'll see, and I'm going to click through this quickly, is actually the ability to configure these guardrails against many of the different types of risks we are talking about. So here I can look at harmful content coming in at the input, I can look for different types of jailbreak and prompt injection attacks that are coming in, and I can configure my system to also address different types of places where the system has made a mistake on the output. So for example, it might also make a mistake here and produce harmful content; or it might produce protected materials, potentially copyrighted text or code.

And this is the groundedness one I just showed you. So I can turn this on and have it choose to annotate my outputs to actually address any of these groundedness risks that we're seeing in practice. And the nice thing about this is you can go and configure this for your application, because each application is different, and so the risks that you see emerging as well as the settings that are going to be appropriate for your context are going to vary.

But we want to make it easy for you to use this. So we've actually already built it directly in. So if I go to the "playground" here, I can show you this working in action.

And I probably should have cleared this chat history. You can see it works, it's great. But I'm just going to do this again.

I don't have all of these jailbreaks memorized. But if I paste this here, you'll see that the system immediately flags this as a jailbreak-type attack, and blocks it before it even gets to the model. So you have this defense in depth happening.

So you don't have to worry about the model getting confused and making a mistake. So that is our quick demo. Given the time, I'm going to switch back here and talk about a couple more things that I think are really important.
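The idea of blocking a jailbreak-type input before it reaches the model can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the keyword patterns and the `looks_like_jailbreak` and `guarded_call` names are hypothetical, and a simple pattern list stands in for the trained classifiers a real guardrail service would use.

```python
import re
from typing import Callable

# Hypothetical patterns for illustration only; a production guardrail is a
# trained classifier, not a keyword list.
JAILBREAK_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"pretend (that )?you have no (rules|restrictions)", re.IGNORECASE),
]


def looks_like_jailbreak(prompt: str) -> bool:
    """Return True if any illustrative jailbreak pattern appears in the prompt."""
    return any(p.search(prompt) for p in JAILBREAK_PATTERNS)


def guarded_call(prompt: str, model: Callable[[str], str]) -> dict:
    """Run the input check first; the model is only invoked if the check passes."""
    if looks_like_jailbreak(prompt):
        # Blocked at the input layer: the model never sees this prompt.
        return {"blocked": True, "reason": "jailbreak", "output": None}
    return {"blocked": False, "reason": None, "output": model(prompt)}
```

The design point is the ordering: because the check runs before `model` is called, a flagged prompt produces a blocked result without any model invocation, which is one layer of the defense-in-depth approach described earlier.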

So as I mentioned, you don't want to just have guardrails, you want to know that they're working, and so it's really important that you evaluate your system. And I'm excited to say that, coming soon, we're going to have new risk and safety evaluations specifically for images. We released text before; I hope you're all using it.

But images will now be available next month, and so you'll be able to start testing your system for these multimodal types of inputs as well. So let's wrap up that section and talk about the last part, which is "governance". As I said, it's hard to know that all the other pieces are working if you don't have the right governance in place.

And absolutely the world is expecting this of us. We are now seeing regulations emerging globally, right, and there are going to be more to come. And so one of the things we're focusing on a lot right now is the EU AI Act.

And the reason for this is, of course, it's an incredibly important piece on its own, but it's also happening right now. And the things that it's asking us to do are things we expect most regulations to ask for: for example, documentation, testing our systems, and having appropriate governance in place.

And so we're going to be sharing more about this next month on our approach to the EU AI Act, but here is a little sneak preview where we are actively engaged in the conversations around this regulation, making sure we're providing what we're learning in practice and what we find really works into the conversation for the code of practice and with the EU. The second thing is we're going to make sure that Microsoft's AI systems are compliant. So we're actively working on our data governance, our model governance, all of our AI capabilities, so that when you use our AI, you're confident in what you're getting.

And the last piece is we want to help you on your compliance journey, and so we'll be sharing best practices, tools, technologies, things we find that are going to make it easy for you to comply. And so really excited about going on this journey with you. It's going to be an interesting experience for all of us as we learn to transition into a space where AI is regulated.

And I'm excited to announce that we're starting to bring out new capabilities to support this. So we have new AI reports starting in private preview now, which allows you to get some of that essential documentation and test results that you're going to need as part of your compliance process. And so this is a new capability in our Foundry, but we'll be adding more going forward.

And to show quickly what this looks like, we have a nice screen where you're going to actually be able to see the different risk levels in your system and everything that is coming up with that. So with that, I'm going to say the other part of the story is we know that it's not enough just to have the right testing and the right documentation. You need compliance workflows and governance workflows to make sure that you're implementing this successfully in your organization, and so we're really excited to announce partnerships with Credo AI and also Saidot, who are supporting us in integrating these new governance capabilities we've built into the Foundry, such as AI reports, so that we can give you an end-to-end solution no matter which system you're using.

So really excited to start this partnership journey with them. Now, there's lots more we can talk about.

As I said, there are many other sessions. Trust is foundational. And so, I want to stop talking, and I want to hear from the people that are actually doing this in practice.

And so we have some really amazing organizations who are going to come and join us onstage here to talk about their journey in trustworthy AI and how they are changing the world with AI and actually putting it into practice. So with that, if you can all come up to the stage.

[ APPLAUSE ]

Speaker 1: We can sit?

Sarah Bird: Yes, we can sit. I think you will need this.

Herain Oberoi: All right. So we have a really great panel here that have been quite excited to have this conversation all week. But maybe we'll just start out with some quick introductions. And maybe, John, we'll start with you at the end and go down and just share who you are, and what you're working on, and your use case, and then we'll sort of get into it from there.

John Israel: Thanks, Herain. My name is John Israel. And as of last Friday, I was the CISO for KPMG US and Americas, and just made the switch to become the guy that's leading our global efforts around data and AI security for the Global Membership Referral.

Sumit Bhattacharyya: My name is Sumit. Can you all hear me?

Sarah Bird: Yes.

Sumit Bhattacharyya: I am a Principal Product Development Engineer from TELUS Health, and I lead a small team of engineers and project owners to develop mostly conversational chatbots for caregivers and call center agents to improve operational efficiency and productivity. But we have many more use cases in the pipeline.

Anna Maria Brunnhofer-Pedemonte: I'm Anna Maria Brunnhofer-Pedemonte. I am the cofounder and CEO of Impact AI. And we are there to help you have 360-degree AI product analytics.

Markus Mooslechner: Well, I'm Markus Mooslechner. I'm a Filmmaker and Science Communicator and Podcaster with a company called Terra Mater Studios based out of Vienna in Austria.

Herain Oberoi: Thanks, Markus. And just for context for the audience, too, maybe share a little bit about the project that you're involved in with Anna as well.

Markus Mooslechner: Sure. So the project I'm involved with is in fact involving a pretty fantastic space mission that is happening as we speak. It's a space mission to save our future, our common future because it's all about deflecting asteroids and making sure that we have a future on this planet.

It's happening as we speak. It's a joint mission between NASA and the European Space Agency. And what we are doing is we are giving sensor data and telemetry data access to an LLM and vice versa.

And we're bringing the spacecraft sort of as a friend on your device you can chat with, right away, right now. It's hera.space, and you can chat with a spacecraft that's on a mission to save our planet for the future to come, together with -- so this is the vision, and now this is the implementation and making sure that this works.

Thank you.

Herain Oberoi: Thanks, Markus.

Sarah Bird: Yes. So Markus, I know we were able to catch up and chat a little bit yesterday, and a huge part of making AI systems trustworthy is making sure that we're putting humans at the center of that. And I know you have an interesting perspective on how we do that. So I'd love for you to share more with everyone on how you're thinking about it.

Markus Mooslechner: Absolutely. So as storytellers, as communication specialists, we know that, in order to come across and engage with audiences, it's important to bring in the human, and to build that bridge to what makes us human and how we function as humans, and that usually works with stories. We think in stories.

And this is why we thought, "We need to give an LLM this experience that we're trying to pull off, a story." And how fascinating can it get to have this story that I just mentioned as a backdrop for an LLM. And so we told the LLM and we trained the LLM, "Hey, this is your story. You have memory. You can relate to your audience, the person you're chatting with, you remember what they are about, and you will confront them with this and that."

So it's a new form of engagement that makes it very lively and -- yes, and engaging. And you wish you could come back to Hera ("Hera" is the name of the spacecraft), because she's always surprising me with something. And it's not made up. It's a true space mission.

Herain Oberoi: That's great. So I want to take this conversation -- I'm a data guy so I always like to get into the data aspects of this. And earlier we were just chatting about what types of data are being used by the application itself.

And what was interesting was, Anna, you were sharing about different types of sensitive data and also potentially harmful and not harmful data.

Anna Maria Brunnhofer-Pedemonte: Yes.

Herain Oberoi: And then, Sumit, you had mentioned that in healthcare, specifically for TELUS, you're dealing with privacy a lot and confidential patient data with HIPAA and all of that. And so maybe, Anna and Sumit, each of you can just talk a little bit about how you think about bringing data into the project and how you categorize and classify the different types of data that you deal with. Maybe, Sumit, you go first, and then Anna.

Sumit Bhattacharyya: Okay; all right. So first thing, at the very inception of the project use case, we ask the question, "Is it going to use any PII data?" "Okay, if yes, what about PHI; do you have that?" And then what kind of customer data?

For example, customers are interacting with our wellbeing apps, right? These are considered sensitive data, customer information, so what kind of compliance requirements do we have, right? And so if, let us say, we have PII/PHI, we have to make sure, since we have a global presence, that we are compliant with data privacy standards like HIPAA and GDPR.

Herain Oberoi: Right.

Sumit Bhattacharyya: And so what do we do with the customer data? We take an approach of data minimization. So you only use the data you need, right? And obviously, at every step of the way, when the customers are using it, we have standard disclaimers that say clearly, "Please don't put any PII/PHI information into the chatbot."

Anna Maria Brunnhofer-Pedemonte: Yes.

Sumit Bhattacharyya: But of course, people may do that. So then we have to take the approach of detecting it and then de-identifying it. So in this case, Microsoft's de-identification API can be pretty handy. Otherwise, we have -- which is HIPAA 18 compliant --

Herain Oberoi: Yes.

Sumit Bhattacharyya: -- where you have standard customer entities.

Herain Oberoi: Yes. That's right.

Sumit Bhattacharyya: And also you have to make sure that application data sync, whatever you are sending, it is scrubbed, obfuscated, et cetera.

Herain Oberoi: That's great, yes.
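The two steps described here, data minimization followed by detecting and de-identifying what still slips through, can be sketched roughly in Python. This is a toy under loud assumptions: the regular expressions cover only email addresses and one phone format, the function names are hypothetical, and none of this is Microsoft's de-identification API or a HIPAA-compliant implementation.

```python
import re

# Hypothetical patterns covering two identifier types only; a real
# de-identification service covers many more entity types than this.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def minimize(record: dict, needed_fields: set) -> dict:
    """Data minimization: keep only the fields the use case actually needs."""
    return {k: v for k, v in record.items() if k in needed_fields}


def deidentify(text: str) -> str:
    """Replace each detected identifier with a placeholder tag."""
    for tag, pattern in PATTERNS.items():
        text = pattern.sub(f"[{tag}]", text)
    return text


def prepare_for_chatbot(record: dict, needed_fields: set,
                        message_field: str = "message") -> dict:
    """Drop unneeded fields, then scrub the free-text field before sending it on."""
    slim = minimize(record, needed_fields)
    if message_field in slim:
        slim[message_field] = deidentify(slim[message_field])
    return slim
```

Minimization runs first so that fields the chatbot never needs are not sent at all; scrubbing then handles the free text, which is where users may type identifiers despite the disclaimer.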

And Anna, the characteristics of your data are quite different. Anna Maria Bunnhofer-Pedemonte: It's quite different, yes. Herain Oberoi: So do you want to share a little bit about that and how you're dealing with it as well?

Anna Maria Bunnhofer-Pedemonte: Definitely. We -- let's say we also started to structure the data in different buckets where we say, "Okay, we have this one data that needs to be really grounded," because it's the European Space Agency data about the mission, which is truly important, obviously, for Terra Mater and for the European Space Agency to be right, because you see it as an educational B2C application that's freely available for anyone here. So you really want to have -- stay true to the mission there to be robust and reliable in your contextual understanding of the grounded data.

And then also, with this idea of being a medium, a new medium, there was the idea from Terra Mater to, for example, access media from the internet that can be social media or other websites. And so, there, we want to be really careful to make sure that the media that is accessed is safe and grounded also in some ways. So there are some pages that we whitelist and some that we just don't.

We use some function calling to whitelist the applications and to others we just said, "No way we will do that." So that's important. And that leads to the third one, which is really you have to be careful what data is being dropped in your data dump, as you said maybe.
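One way to picture the whitelisting of pages behind a function call is the sketch below. It is an assumption-laden illustration, not the Hera implementation: the `ALLOWED_HOSTS` entries are placeholder domains, and `is_allowed` and `fetch_media_tool` are hypothetical names for a tool the model would be permitted to call.

```python
from urllib.parse import urlparse

# Placeholder allowlist entries; the real list of approved sites is not given in the talk.
ALLOWED_HOSTS = {"example.org", "media.example.com"}


def is_allowed(url):
    """True if the URL's host is an allowlisted host or a subdomain of one."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == allowed or host.endswith("." + allowed) for allowed in ALLOWED_HOSTS)


def fetch_media_tool(url):
    """Hypothetical tool exposed to the model; it refuses any host not on the allowlist."""
    if not is_allowed(url):
        return {"ok": False, "reason": "host not on allowlist"}
    # A real tool would fetch and safety-check the content here.
    return {"ok": True, "url": url}


if __name__ == "__main__":
    print(fetch_media_tool("https://www.example.org/mission/video"))
    print(fetch_media_tool("https://social.example.net/post/1"))
```

Checking the parsed host rather than searching the raw URL string avoids accepting look-alike addresses such as `evil-example.org`.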

It's not only about -- let's say, not only about the decision, "Do I have PII data in there or not?" What we realize is you have to be very careful what people provide to you also as in data for GenAI applications, because very often there might be just content in there where no one is aware that you really don't want to have it for reasons of groundedness, or for PII, or other safety issues. Herain Oberoi: Thanks, Anna.

Yes, so I think this whole topic of how to think about data, how to deal with data. Anna Maria Bunnhofer-Pedemonte: Right. Herain Oberoi: And what's been interesting for me to see also is the kind of cross-functional collaboration that it requires.

Sumit and I were just chatting earlier about having a cross-functional team including privacy experts, legal experts, the data team, the security team. And I want to also take it to you, John, in a second because one of the things I thought was really interesting about John's background is, John, you just started a new role. John Israel: Yes.

Herain Oberoi: And your shift into this new role actually starts to bridge those different cross-functional groups that we're talking about here. So do you just want to share a little bit about what you did do, and what your new role is, and how those things connect? John Israel: Yes, sure.

Thank you. So when KPMG started our journey back a couple years ago we -- both out of necessity and out of deliberate movement, we brought together the business functions, the technical leaders, like you would expect. But in the same room, we had our entire governance establishment.

We had privacy, we had risk with Office of the General Counsel and the CISO. And we -- ultimately I ended up quarterbacking. And that's not to say that was any special role.

All the star athletes were everybody else in the room. But we brought together many different perspectives. And I thought that was really important because, one, it ended up being a governance-led operationalization of AI within the firm, but also, it created these incredible collision domains of perspectives, right?

I mentioned yesterday in a briefing that, who would have thought back in the '80s that a doctor, biologist, and statistician would be working on cancer back in the '80s? And kind of the same thing when you jam together perspectives. And we were collaborating and looking at these over-the-horizon ideas, and then conceptualizing, and then implementing all in one chain.

And that form, if you will, that tiger team, begat two different elements later as we transition out of reactive, "What are we going to do?" to operationalizing this more across the organization. And we've ended up with a Center of Excellence and then a Trusted AI program that has an oversight and integrated capability to look at our ten pillars and how we're doing things.

And so from a -- that transition from a CISO to data and AI security role, it's really the evolution, the natural evolution of what was going to come. Like a lot of people in the room, and especially if you're a CISO, what was hard was common across all of this, right? We were learning new vocabulary, we were looking at new attack surfaces, we were sussing out how we were going to deal with these new risks.

But on the other side, where there's risk, there's tremendous opportunity. And we saw that generative AI was going to be our way of projecting out even greater trust to our clients, and both internally as well as revenue growth. And to do that, we took the optic of -- you have the traditional data security that we all think about, the triad.

You have the AI security, which we're talking all about today. And to double-click down on that, I personally take the viewpoint of there's security of, from, and with AI as well. So "of": all of the systems which we're talking about; "from": the social engineering attacks, right?

And "with": Security Copilot and some other technologies. So and then in the middle, there is this data security within the context of AI and AI systems. And the firm felt really strongly that we wanted this focus at the right -- same level as our CISOs to be able to suss out the specific strategies that fit into each of these domains.

And that's the role that I look forward to playing in the near future -- actually, now, right, and starting this journey. Sarah Bird: Yes. And there are a lot of parallels, actually, in our own journey in this where we're frequently asked how do we balance AI innovation and risk management?

And it's exactly the same thing for us of getting the people in the room and the different experts and actually talking through this and making sure we have that sort of centralized body that's thinking through these issues. So thank you for sharing. I think I'd love to go to you, Sumit, and if you could share a little bit more about, how do you determine what controls and guardrails you need to put in place in your implementation, obviously working in a very sensitive domain?

Sumit Bhattacharyya: Okay. So first, the very beginning, we have to build trustworthy AI. So our goal is really to earn the trust of the users, so the users can really trust the application, right?

So first to understand is to -- we have to understand the risk landscape, right? So for example, we have to ask -- start asking questions like, "What if the system fails? Will it do any harm to the user?

Is it using any sensitive data?" For example, right? So then, once you understand the level of risk, then you categorize and you see, "Okay, this is the most important risk we need to mitigate for this use case."

So you categorize it, and okay, maybe technically very challenging. But since this is the most important stuff, we have to build it, right? So now we -- the second part is that once we put all those guardrails in place, for example, once you have identified all the risks, you have to continuously measure it and monitor it, and you need to have continuous oversight.

And of course, you involve the end users while testing it to see not only whether it is performing well but whether it is performing in a trustworthy way. Sarah Bird: Yes, that's been really important in our practice as well, is thinking about what happens after you've deployed and how do you constantly learn and adapt? But it's something that we see, people are so focused on getting to that ship line, they often aren't thinking about what comes after.

Sumit Bhattacharyya: Yes, exactly. Sarah Bird: So it's something really important. Sumit Bhattacharyya: By the way, what I saw today from your presentation is very exciting for us.

Looking forward to it. Sarah Bird: Great. We hope, yes.

Herain Oberoi: That's great. So couple of takeaways from me, just one is I think this idea that building trustworthy AI systems is a team sport and we're seeing security teams -- Sarah Bird: Yes. Herain Oberoi: -- data teams, development teams come together around this, and the roles are actually shifting as a result of it.

And then the other one is this notion that it's a continuous monitoring exercise. I think those are both interesting. The third topic I wanted us to touch on is this idea that, how do you balance safety, security, trustworthiness of the system, with creativity?

And Anna, we were chatting earlier about how that's been a core challenge for you, or at least an area that you've been very actively focused on, and maybe you will share a little bit about -- Anna Maria Bunnhofer-Pedemonte: Yes. Herain Oberoi: -- how you're balancing those things. Anna Maria Bunnhofer-Pedemonte: Definitely.

And I think it comes back to -- which we'll start here as trust, risk, and opportunity. It's a really interesting triangle that, at an AI application level, you want to be aware of. And the only way you can do it is being aware and having really robust evals that you continuously monitor, that you have from the beginning.

We call it a "shift left approach" for basically AI products. You want to know in the beginning, what are your most important evals -- we call them "success vectors", where you say they make or break my product. And because we were chatting, just to say, for Hera, it is basically some that are in the technical capability spectrum like accuracy.

But then you also have, all of a sudden, creativity. Markus wants personality, and he wants a certain personality. And we have to take this as a metric in our structure and say, "Okay, how is Hera performing in that?"

And that's something everyone has to do in any AI application. If it should be valuable, but also if you want to mitigate risks, because that's what you do with your evals. And basically -- sorry, what you have in the end is a value alignment with the user, but you also have the value alignment with your business goals and aligned evals.

So that's basically the outcome of this whole process then. Herain Oberoi: That's awesome. Thanks for sharing that, Anna.
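The idea of make-or-break "success vectors" that are tracked from the start can be sketched as a simple gate over eval scores. Everything here is hypothetical: the `SuccessVector` class, the `failing_vectors` helper, and the scores and thresholds are invented for illustration and do not come from the Hera project.

```python
from dataclasses import dataclass


@dataclass
class SuccessVector:
    """One make-or-break eval: a measured score and the minimum acceptable value."""
    name: str
    score: float      # measured value, assumed to be normalized to [0, 1]
    threshold: float  # minimum score required before shipping


def failing_vectors(vectors):
    """Return the names of all success vectors whose score is below threshold."""
    return [v.name for v in vectors if v.score < v.threshold]


if __name__ == "__main__":
    # Invented numbers: one technical-capability metric and one "personality" metric.
    evals = [
        SuccessVector("accuracy", score=0.92, threshold=0.90),
        SuccessVector("personality", score=0.60, threshold=0.75),
    ]
    print(failing_vectors(evals))
```

Treating a softer quality such as personality as one more scored vector puts it through the same continuous monitoring as accuracy, which is the point being made about aligning evals with both user value and business goals.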

Anna Maria Bunnhofer-Pedemonte: Great. Herain Oberoi: So maybe with just a minute, Sarah, were there any final thoughts from you? Sarah Bird: Yes, I think what I want to say is this could go on forever, and it's so important because we're all learning together and it's actually really important that we are sharing our experiences, and our best practices, and our learning, because this is an innovation area and we are, every day, inventing new ways to do this, new things to do it better.

And so it's really important that we're really all learning together. And so, so happy to have all of you come and share your story, and very much hope that people continue to have an open dialogue about what they are finding in their organization and how they're doing this. Herain Oberoi: That's great.

Yes, and thank you again. Thank you so much for your time. (applause) And while we wrap up -- Sarah Bird: I'll switch this for you.

Yes, so as we wrap up, there's a ton of detail in terms of sections -- in terms of additional sessions that we have to dive deeper into this. So the team has done a great job putting the slide together, so if you want to go deeper into any of these aspects, take a look at some of these sessions, I would highly encourage you to attend them. And thank you all for being here, being the early morning risers for the first session of the day, so really appreciate it.

Thank you.
