I brought my little book. Nice. But I guess we'll do introductions first. I'm John from PromptLayer. PromptLayer is a tool for collaborative iteration, evaluation, and observability for prompt engineering, and Rogo happens to be one of our customers, so we have Tomas here from Rogo. I'll let him explain what they do, and then we'll get into the questions.

Sounds great. Rogo is a financial research platform for public-markets investors, investment banks, and private equity. The main core focus of Rogo is to center trust: to make sure that when you're trading billions of dollars of assets, every number that goes into any one of those trades is heavily audited and trustworthy, the same process that happens in finance. So we're working to automate that workflow and add trust, using LLMs and PromptLayer to get that done.

Exactly. Okay, I want to focus this conversation a little, because we've mostly talked about trust; we're going to talk a bit about evaluations. So what are some evaluation metrics Rogo is looking at right now?

Yeah. I think any end-user application like Rogo always has to focus on what actually comes from the users: how often are they using your product? Are they asking 20 questions in a row? Are they using the LLM very commonly? That's a sign of really good success. But to even get to that point, when you're designing the system, you have to come up with empirical metrics and build a test dataset to evaluate those metrics on: any type of metric on RAG, any type of metric on how much you're hallucinating. You come up with those systems, then build your system and continuously deploy it to production. Once it's deployed in production, you see how users are actually using it and whether they're really getting a lot of value out of the new deployment, and then you keep iterating on it.

One thing we really use PromptLayer for: PromptLayer lets you keep versions inside the prompt registry, and we're heavy users of the prompt registry. One thing you can do is move one of the tags from staging to production. So as we build out our prompts and iterate continuously on how we call the LLMs, we're able to switch the best version into production using PromptLayer's registry.
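The staging-to-production workflow described above boils down to immutable prompt versions plus movable labels. The sketch below is a hypothetical in-memory registry written only to illustrate that idea; it is not PromptLayer's SDK, and the class, method, and prompt names are invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class PromptRegistry:
    """Hypothetical in-memory stand-in for a versioned prompt registry.

    NOT PromptLayer's API: it only illustrates numbered, immutable versions
    plus movable labels such as "staging" and "production".
    """

    versions: dict[str, list[str]] = field(default_factory=dict)
    labels: dict[tuple[str, str], int] = field(default_factory=dict)

    def publish(self, name: str, template: str) -> int:
        """Append a new version and return its 1-based version number."""
        self.versions.setdefault(name, []).append(template)
        return len(self.versions[name])

    def set_label(self, name: str, label: str, version: int) -> None:
        """Point a label at an existing version (promotion or rollback)."""
        if not 1 <= version <= len(self.versions.get(name, [])):
            raise ValueError(f"{name} has no version {version}")
        self.labels[(name, label)] = version

    def get(self, name: str, label: str = "production") -> str:
        """Resolve a label to the template text callers should use."""
        return self.versions[name][self.labels[(name, label)] - 1]


registry = PromptRegistry()
v1 = registry.publish("summarize_filing", "Summarize this filing:\n{document}")
registry.set_label("summarize_filing", "production", v1)

# Iterate: the new version goes to staging while production stays on v1.
v2 = registry.publish(
    "summarize_filing",
    "Summarize this filing. Quote every number verbatim:\n{document}",
)
registry.set_label("summarize_filing", "staging", v2)

# After v2 passes evaluation, promotion is just moving the label.
registry.set_label("summarize_filing", "production", v2)
print(registry.get("summarize_filing"))
```

Because application code asks for a label rather than a version number, rolling back is the same `set_label` call pointed at `v1`.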

switch the best um you know version of that into production using prompt players registry yeah for sure that's a feature we just released recently happy to hear that uh you guys are using it um I guess something you touched upon which I think is very interesting for a very technical group that we have here is uh the place of um formal statistical methods when talking about evaluation versus more anecdotal customer driven uh type metrics like do you have a good mental model of how you know I think uh the second Elon I spoke about like

how we're not in the big leagues yet right we don't really have these defined metrics of certain scores and stuff like that that we could look at and I actually personally think it's kind of funny how we all look at using llms to evaluate themselves I think that is probably powerful but to me it it kind of indicates a little bit of the immaturity of where we are at a field but how do you guys look at both you know these empirical versus anecdotal types of evaluation when it comes to figuring out how well your

product's doing totally and to be in full transparency like um llms are not great at um evaluating all types of you know output that you've gotten out of there so for example in finance like you know if you end up hallucinating one small number out of you know a filing or earning transcript or any type of internal document and that ends up going into you know production some person is making you know a trade and putting that into a slide deck that then goes and then ends up being completely invalidated now they're going to lose

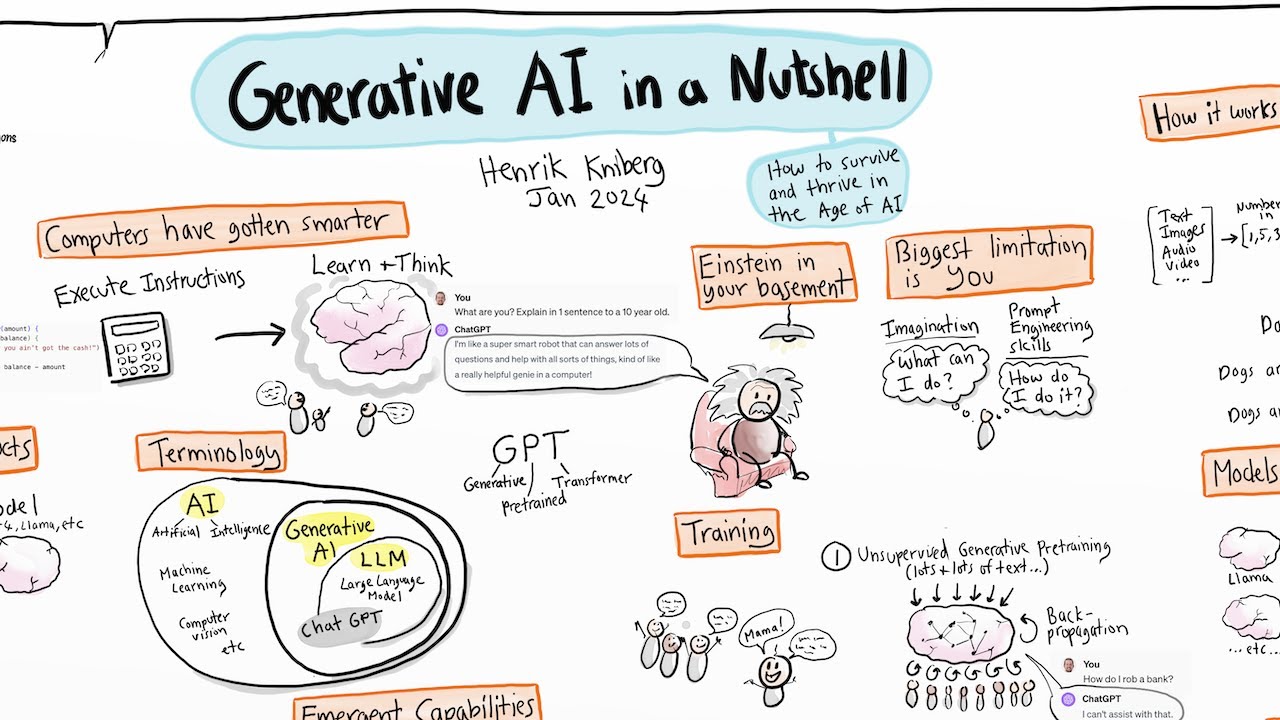

trust they might you know buy a product at a wrong multiple and you know there's you know they're taking a bath so to speak um at the same time you know they could be missing out on an amazing opportunity because they're just being fed in correct information um and so the way that I think you know you have to start is by thinking of and you know generally you know with Finance you have so many so much information so much documents that you have to use um Rag and rag I think is one of the

best ways in which you are seeing a lot of the theory that goes behind recommender systems actually being used now um because you know not every product the same way not every product on Amazon has a rating by every user on Amazon you know not in like the space of every possible query um you know that isn't a perfect match for every possible document and so you have this incredibly sparse World in which you are trying to you know find and rank the best documents inside like this you know this Matrix of queries and documents
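To make "find and rank the best documents" concrete, here is a toy ranking step: score every document against a query and sort. It swaps in bag-of-words cosine similarity purely for illustration; the talk doesn't describe Rogo's actual retriever, and production RAG systems commonly use learned embeddings instead. The document ids and texts are made up.

```python
import math
from collections import Counter

PUNCT = ".,;:?!()"


def tokenize(text: str) -> list[str]:
    """Lowercase, split on whitespace, and strip simple punctuation."""
    words = (w.strip(PUNCT).lower() for w in text.split())
    return [w for w in words if w]


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity of two sparse term-count vectors (Counters store only non-zero terms)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def rank(query: str, docs: dict[str, str], k: int = 3) -> list[tuple[str, float]]:
    """Score every document against the query and return the top-k (doc_id, score) pairs."""
    q = Counter(tokenize(query))
    scored = [(doc_id, cosine(q, Counter(tokenize(text)))) for doc_id, text in docs.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical corpus for illustration only.
docs = {
    "10-K": "Annual report: revenue grew 12% and operating margin expanded.",
    "call": "Earnings call transcript: management discussed revenue guidance.",
    "deck": "Internal deck on hiring plans and office locations.",
}
top = rank("What was revenue growth in the annual report?", docs, k=2)
print([(doc_id, round(score, 3)) for doc_id, score in top])  # [('10-K', 0.354), ('call', 0.134)]
```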

And coming up with hypothetical documents is a really good idea. Jerry has incredible webinars on how to boost RAG, which I've watched about three times over. And human evaluation, at its core, is a big part of this. At Rogo, we first iterate on our RAG and prompt layers, and as we move through that iteration, we then have a human evaluation step. I used to work in finance, so I'm decent at human evaluation, as are other people at Rogo who are ex-finance, and they're able to carefully check that each of these metrics actually holds up. As for actual empirical methods: since you're treating RAG as a recommender system, a lot of that theory applies, so you look at mean reciprocal rank (MRR), mean average precision (MAP), and normalized discounted cumulative gain (nDCG).
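The three rank-aware metrics named above have standard definitions, sketched below. MRR and MAP use binary relevance (a set of relevant ids per query); nDCG uses graded relevance and normalizes by the best possible ordering of the judged documents. The retrieval runs and relevance labels are hypothetical.

```python
import math


def reciprocal_rank(ranked: list[str], relevant: set[str]) -> float:
    """1 / position of the first relevant document, or 0 if none was retrieved."""
    for i, doc in enumerate(ranked, start=1):
        if doc in relevant:
            return 1.0 / i
    return 0.0


def average_precision(ranked: list[str], relevant: set[str]) -> float:
    """Sum of precision@i at each relevant hit, divided by the number of relevant docs."""
    hits, total = 0, 0.0
    for i, doc in enumerate(ranked, start=1):
        if doc in relevant:
            hits += 1
            total += hits / i
    return total / len(relevant) if relevant else 0.0


def ndcg(ranked: list[str], gains: dict[str, float], k: int) -> float:
    """Normalized discounted cumulative gain at cutoff k with graded relevance."""

    def dcg(scores: list[float]) -> float:
        # Position i (1-based) is discounted by log2(i + 1).
        return sum(g / math.log2(i + 1) for i, g in enumerate(scores, start=1))

    actual = dcg([gains.get(doc, 0.0) for doc in ranked[:k]])
    ideal = dcg(sorted(gains.values(), reverse=True)[:k])
    return actual / ideal if ideal else 0.0


# Hypothetical retrieval runs: (ranked document ids, ids judged relevant).
runs = [
    (["d3", "d1", "d7"], {"d1"}),
    (["d2", "d5", "d9"], {"d2", "d9"}),
]
mrr = sum(reciprocal_rank(r, rel) for r, rel in runs) / len(runs)
map_score = sum(average_precision(r, rel) for r, rel in runs) / len(runs)
# Graded relevance for the second query: 3 = key source, 1 = marginal.
ndcg_q2 = ndcg(["d2", "d5", "d9"], {"d2": 3.0, "d9": 1.0}, k=3)
print(round(mrr, 3), round(map_score, 3), round(ndcg_q2, 3))  # 0.75 0.667 0.964
```

Unlike plain recall@k, all three reward putting the right filing near the top, which matters when only the first few chunks fit into the prompt.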

All of those rank-aware evaluation metrics are really useful for RAG. Then, once that context gets injected into the prompt, you have a second layer: evaluating whether the output is a hallucination or not, and things like that. For that, the best way we were able to improve performance was by using GPT function calling as a classifier. But it's not just that; it's also ensembling those classifiers. Ensembling, again, means making five concurrent LLM calls with the exact same context and taking the majority vote of those. Or not five, but maybe a hundred, if you have your own GPU cluster running a simple Hugging Face model. That really helps, because now you're taking a majority vote over the possible classifications. That way you can raise the temperature, and you can measure the classical evaluation metrics of the classification system you've built.
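A minimal sketch of the ensembling idea: fire N concurrent calls of the same classifier on the same input and take the majority label, then score the result with ordinary precision and recall. `noisy_judge` is a hypothetical stub standing in for one LLM call at non-zero temperature (a real version would send the context and answer to a model, for example via function calling, and parse the label); it is deliberately right only 70% of the time so voting has something to fix.

```python
import random
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Classifier = Callable[[str, str, random.Random], str]


def noisy_judge(context: str, answer: str, rng: random.Random) -> str:
    """Hypothetical stand-in for one sampled LLM classification call."""
    truth = "supported" if answer in context else "hallucinated"
    wrong = "hallucinated" if truth == "supported" else "supported"
    return truth if rng.random() < 0.7 else wrong


def ensemble_label(judge: Classifier, context: str, answer: str, n: int = 5, seed: int = 0) -> str:
    """Run n concurrent judge calls on identical input; return the majority label.

    Use an odd n with two labels so there are no ties.
    """
    rngs = [random.Random(seed + i) for i in range(n)]  # one RNG per call, no shared state
    with ThreadPoolExecutor(max_workers=n) as pool:
        votes = list(pool.map(lambda rng: judge(context, answer, rng), rngs))
    return Counter(votes).most_common(1)[0][0]


def precision_recall(preds: list[str], gold: list[str], positive: str = "hallucinated") -> tuple[float, float]:
    """Classical precision and recall for the 'hallucinated' class."""
    tp = sum(p == positive and g == positive for p, g in zip(preds, gold))
    fp = sum(p == positive and g != positive for p, g in zip(preds, gold))
    fn = sum(p != positive and g == positive for p, g in zip(preds, gold))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical labeled examples: (retrieved context, generated answer, human label).
examples = [
    ("Revenue was $4.2B in FY2023.", "$4.2B", "supported"),
    ("Revenue was $4.2B in FY2023.", "$5.1B", "hallucinated"),
    ("Net margin was 18%.", "18%", "supported"),
    ("Net margin was 18%.", "21%", "hallucinated"),
]
gold = [label for _, _, label in examples]
single = [noisy_judge(c, a, random.Random(i)) for i, (c, a, _) in enumerate(examples)]
voted = [ensemble_label(noisy_judge, c, a, n=25, seed=100 * i) for i, (c, a, _) in enumerate(examples)]
print("single call:", precision_recall(single, gold))
print("25-way vote:", precision_recall(voted, gold))
```

With independent errors, a majority of 25 votes is wrong far less often than any single call, which is why raising the temperature (more diverse samples) and voting can be combined.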

Building that classification system with ensembling is how you get really good performance, and then you can push it into production.

That's fairly interesting; I actually didn't know you were going that deep. Just to double-click on that: do you look at it as a black box, with some form of binary yes/no or a score, like "this request/response pair performed 85 on the scale"? Or are you not really at that stage, and instead splitting it up into different pieces and looking at it that way?

Yeah, we do measure it at a layer level. I'm not sure we do it at the exact granularity that Arize does with their spans, but we're definitely going in that direction. I think the best way to start building a system that's really production-ready, production-grade, is to just be scientific about it. Build a test set: what kinds of questions, and what kinds of experiences, do you want your users to have? Work backwards from those. Once you have 100 of them, as soon as you're iterating, make sure you're not regressing. Just because you can look at some output and say, "Oh, this is awesome, now we can answer these types of questions," doesn't mean you haven't broken something else; that's a separate thing to check.
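A regression check over a fixed test set can be as simple as comparing per-case pass/fail results between the current production prompt and a candidate. The sketch assumes each case has already been graded (by a human, a classifier, or both); the case ids and results are hypothetical. Blocking promotion on any regression is one possible policy, not a rule from the talk.

```python
from dataclasses import dataclass


@dataclass
class Report:
    baseline_pass_rate: float
    candidate_pass_rate: float
    regressions: list[str]  # cases that passed before and fail now
    fixes: list[str]        # cases that failed before and pass now


def compare(baseline: dict[str, bool], candidate: dict[str, bool]) -> Report:
    """Compare per-case pass/fail results of two prompt versions on the same test set."""
    if baseline.keys() != candidate.keys():
        raise ValueError("both versions must be run on the same test cases")
    cases = sorted(baseline)
    return Report(
        baseline_pass_rate=sum(baseline.values()) / len(cases),
        candidate_pass_rate=sum(candidate.values()) / len(cases),
        regressions=[c for c in cases if baseline[c] and not candidate[c]],
        fixes=[c for c in cases if not baseline[c] and candidate[c]],
    )


baseline = {"q001": True, "q002": True, "q003": False, "q004": True}
candidate = {"q001": True, "q002": False, "q003": True, "q004": True}
report = compare(baseline, candidate)
print(report)
# Both pass rates are 0.75, yet q002 regressed: an aggregate score alone hides it.
ship = not report.regressions  # hypothetical gate: promote only with zero regressions
```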

One metric I really like, once you have user data and people are really using your product, is about encouraging users to keep making LLM calls, to really interact with your product and with the cool thing that is generative AI. My favorite metric for that is to take the average queries per session and see how often a user lands in the 90th or 95th percentile of those. Once you're at that point, you're encouraging these binges where users just keep calling your production system.
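One reading of that metric, sketched under assumptions: count queries per session, take the mean and a high percentile (nearest-rank method) across sessions, then count for each user how many of their sessions reached that percentile, i.e. "binge" sessions. The talk doesn't specify the exact computation, so the percentile method and the per-user count are interpretations; the event log is made up.

```python
import math
from collections import defaultdict


def percentile(values: list[float], p: float) -> float:
    """Nearest-rank percentile (p in 0..100) of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def binge_report(events: list[tuple[str, str]], p: float = 90) -> tuple[float, float, dict[str, int]]:
    """events holds one (user_id, session_id) pair per query.

    Returns the mean queries per session, the p-th percentile threshold,
    and, per user, how many of their sessions reached that threshold.
    """
    per_session: dict[tuple[str, str], int] = defaultdict(int)
    for user, session in events:
        per_session[(user, session)] += 1
    counts = list(per_session.values())
    mean = sum(counts) / len(counts)
    threshold = percentile(counts, p)
    binges: dict[str, int] = defaultdict(int)
    for (user, _), n in per_session.items():
        if n >= threshold:
            binges[user] += 1
    return mean, threshold, dict(binges)


# Hypothetical log: queries per (user, session), expanded into one event per query.
sessions = {
    ("alice", "s1"): 20, ("alice", "s2"): 3, ("bob", "s3"): 2, ("bob", "s4"): 4,
    ("carol", "s5"): 1, ("carol", "s6"): 12, ("dan", "s7"): 2, ("dan", "s8"): 1,
    ("erin", "s9"): 5, ("erin", "s10"): 3,
}
events = [key for key, n in sessions.items() for _ in range(n)]
mean, threshold, binges = binge_report(events, p=90)
print(mean, threshold, binges)  # 5.3 12 {'alice': 1, 'carol': 1}
```

As the follow-up question points out, a high count can also mean frustration, so this is best read alongside a success signal such as exports.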

When they're continuously calling Rogo, in our case, that's when you really know you're good.

I have a random question about that: if they're asking questions over and over again, how do you know that's a positive signal and not a negative one, that they're pissed off?

Oh, no, you're right, it could be that. But if they're pissed off, they'll go to Google, they'll go somewhere else, right? There are many different places you can find information on the internet. So the fact that they're doing it matters. And obviously, Rogo is built into financial workflows, so once they export it out, that's when you know you're in a good place. That's when you know you're in business.

So, talking more from a product and customer perspective: the stakes are fairly high for Rogo. It's not like ChatGPT; you're dealing with financial data, where any hallucination could be costly, both for the company and for your relationship with them. So how do you approach that? Is it UX, is it education, is it engineering? What's the tactic?

Yeah, honestly, to some extent we're still figuring that out. But the main way we do it is by setting user expectations and making sure we're continuously working to improve the product with the users. A lot of that comes down to good onboarding and teaching them how to prompt better. We've introduced a prompt library where users can share really good prompts inside our system, and I think that's a pretty good idea for a lot of chatbot applications: you can share different types of prompts between users, and you get this emergent behavior where they collaborate. A lot of that really helps. And also just being in close contact: we use Intercom and a bunch of other really helpful tools for that.

Right.

I guess on that, a slightly more philosophical question: Rogo is a chat interface, right? That's mainly how people interact with it?

Yeah, there's a chat interface, and then there's a semantic search: we basically have our entire RAG stack available for anyone who wants to search inside our document system. If you finance people have ever used BamSEC, it's more like that, but we've also added our entire RAG application behind it.

Right. On that topic, look at the evolution of something like GPT: at the beginning, everybody was throwing their code questions into ChatGPT, and now you have things like Cursor, and I saw Google release something similar, where it's much more in-context help. Is that happening in general in this space, or is chat going to stay with us for a long time?

I do think chatbots are generally the first step of a lot of ML waves of interest; that's sort of been the case for much of the history of natural language processing. And I do think a lot of chat applications are really cool; I use ChatGPT every day, and it's a really helpful workflow. But I think you're right that being able to orchestrate different uses of LLMs to create really intelligent results, and how that gets synthesized back to the user as a streamed response, is going to change a lot over time. I'm actually curious to hear your answer to that question, and about PromptLayer's customers.

Yeah. For us, the bigger customers coming through our door already have a product with its own functionality, and they want to use LLMs there. Some of them will come and try to go with chat, but that can be a bit overwhelming: the UX is complicated, and what exactly are you going to chat with? So we see those companies building small features into their product that use LLMs. The younger generation, the new people coming in, are definitely much more chat-focused, since that's a very easy way to get started.

While we're here, I'll ask you one question about Rogo and our relationship with PromptLayer: how are you using PromptLayer to work as a team?

Yeah. One thing, and I'd love to hear you talk more about this too, is that I don't think prompts are necessarily code. A lot of the ways you really iterate on prompts are just publishing the latest version, then quickly testing and running a lot of evaluations in parallel to make sure you're generally right, then quickly iterating, making sure you're not regressing, and moving forward. The biggest way we use PromptLayer is being able to measure versions from the registry.
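Running "a lot of evaluations in parallel" against a freshly published prompt version is mostly an I/O-bound fan-out. The sketch below uses `asyncio.gather` with a semaphore to cap in-flight requests. `call_model` and `grade` are hypothetical stubs: a real setup would call a provider's async client and use a real grader, and the concurrency cap of 8 is an arbitrary placeholder for a provider rate limit.

```python
import asyncio
import time


async def call_model(prompt: str, question: str) -> str:
    """Hypothetical async LLM call; a real one would hit a provider's API."""
    await asyncio.sleep(0.1)  # simulate network latency
    return f"{prompt} {question}".upper()


def grade(output: str, expected: str) -> bool:
    """Toy grader: pass if the expected text appears in the output."""
    return expected.upper() in output


async def evaluate(prompt: str, cases: list[tuple[str, str]], max_concurrency: int = 8) -> float:
    """Run every test case against one prompt version concurrently; return the pass rate."""
    sem = asyncio.Semaphore(max_concurrency)  # cap in-flight calls to respect rate limits

    async def run(question: str, expected: str) -> bool:
        async with sem:
            return grade(await call_model(prompt, question), expected)

    results = await asyncio.gather(*(run(q, e) for q, e in cases))
    return sum(results) / len(results)


cases = [(f"question {i}", f"question {i}") for i in range(32)]
start = time.perf_counter()
rate = asyncio.run(evaluate("Answer concisely:", cases))
print(f"pass rate {rate:.2f} in {time.perf_counter() - start:.2f}s")
```

With 32 cases at 0.1 s each and 8 in flight, the run takes roughly 0.4 s instead of about 3.2 s sequentially, which is what makes "publish, test, iterate" feel quick.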

Measuring versions from the registry also lets us encourage collaboration within our organization around prompting, because a lot of people can do prompting, but it takes a while for them to really get a good sense of what's working well. The other really nice use case we have for PromptLayer is measuring our OpenAI costs and OpenAI latency; all of that is very nice to measure as well.
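Cost and latency tracking reduces to timing each call and multiplying token counts by per-token prices. The sketch below is a generic wrapper, not PromptLayer's implementation; the model name and prices are placeholders rather than real OpenAI rates, and `fake_llm` is a hypothetical stub. With the OpenAI Python SDK, chat completion responses report token counts in `response.usage` (`prompt_tokens`, `completion_tokens`), which is what a real wrapper would read.

```python
import time
from dataclasses import dataclass, field
from statistics import median
from typing import Callable

# Placeholder prices in USD per 1M tokens. NOT real OpenAI pricing;
# substitute current rates for the model you actually call.
PRICE_PER_M_TOKENS = {"example-model": {"input": 1.00, "output": 4.00}}


@dataclass
class UsageLog:
    """Accumulates spend and per-call latency across LLM calls."""

    cost_usd: float = 0.0
    latencies_s: list[float] = field(default_factory=list)

    def record(self, model: str, input_tokens: int, output_tokens: int, latency_s: float) -> None:
        price = PRICE_PER_M_TOKENS[model]
        self.cost_usd += (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000
        self.latencies_s.append(latency_s)


def timed_call(log: UsageLog, model: str, llm: Callable[[str], tuple[str, int, int]], prompt: str) -> str:
    """Time one call and log its usage; llm returns (text, input_tokens, output_tokens)."""
    start = time.perf_counter()
    text, input_tokens, output_tokens = llm(prompt)
    log.record(model, input_tokens, output_tokens, time.perf_counter() - start)
    return text


def fake_llm(prompt: str) -> tuple[str, int, int]:
    """Hypothetical stand-in for a real API call."""
    time.sleep(0.05)
    return "ok", 1200, 300


log = UsageLog()
for _ in range(3):
    timed_call(log, "example-model", fake_llm, "Summarize the 10-K.")
print(f"cost ${log.cost_usd:.4f}, median latency {median(log.latencies_s):.2f}s")
```

Each call here costs (1200 × 1.00 + 300 × 4.00) / 1,000,000 = $0.0024 at the placeholder prices, so three calls total $0.0072.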