No exactly there's some things in life that appreciate the deal okay taking some risks roll the dice temped by a gas station always gas station D to driving down toer pull the gas station cheese like my gosh maybe you don't get the sushi there but like you can get the old cheese I got really Nicely so how how you about in London end of July next july4 so I'm here for quite a while so am I missing it uh no I'm having good time in London I really like uh I think it's it's it's great

I love being here uh it's been so much fun a bit warmer than Washington yeah yeah I me all guys like exactly the same as I left it right it's been months but like oh nothing's like I'm G to come back a year Later nothing will change really except one put up new so there we go hi can I intervene for a second I hope you can hear me so yeah I'm just observing that the audio is still unfortunately not great in the room despite the it trying to fix it how about you mute that

one y yeah let's try that um and see because it's it's any way hard to hear people that are far away about this yeah so yeah Just is that one I I should have the only active he now yes so so now um your your voice uh is is very loud and clear just for everybody else in the room keep that in mind uh that the further away you are the harder it will be for us to hear you I might interject a couple of times just to ask to repeat the question so that people

if someone here ask something I can make we'll make sure to speak over to my microphone thank you yeah that Beone else in the room here the laptop I think we play sound and hopefully you won't have to hear too much I'll be so clear every be like oh my gosh there's no qu because oh so no question okay so so sorry say it again it just cut off yes but when Ed was talking that was already difficult to hear I say so first part I'll unmute mine and then when it's When all right um

get my mute here Nathan just wanted to ask is it okay to record this session it's being recorded right now oh oh okay yeah it's sometimes all right I think you need to mute I okay we're good we're good I think we're good now I will ask just one more logistical question are you are you okay with questions during the talk or would you like Questions sound yeah okay are we good okay go let's go for it it's kind of like a a mind bomb um so before starting I'd like to notify our audience online

that today's seminar is being recorded, so if you'd rather not appear, kindly turn your cameras off. First of all, I'd like to say good afternoon to everyone, and thank you so much for joining. This is our 35th seminar in the series, and today's is a special seminar because it's coordinated together with the Research Engineering Group here at the Alan Turing Institute. It's also really cool that we have such a fantastic turnout. So I'll introduce myself: my name is Zach shti and I am a Turing Research Fellow at

the Alan Turing Institute here in London, and I would also like to introduce my colleague and co-organizer of the seminar series, Andrea, who is far back there. For those of you who are not familiar with the Alan Turing Institute, this is the UK's national institute for data science and AI. And if I can sneak in a side note: if any of you are interested in talking, kindly approach us. As for the format of the seminar, questions may be asked towards the end of the seminar

and either in person, by using the raise-hand function, or directly in the Zoom chat. Without further ado, it's my pleasure to introduce today Professor Nathan Kutz, who is a professor in the Department of Applied Mathematics at the University of Washington in Seattle and who is currently based at the Alan Turing Institute for a period of time. His main research interests involve nonlinear waves and coherent structures, as well as dimensionality reduction and data analysis techniques for complex systems. Professor Kutz received his BS degree in physics and mathematics from the University of Washington,

his PhD degree in applied mathematics from Northwestern University, and has been faculty at the University of Washington since 19 I believe so. He is also the author of several books and was elected a Fellow of the Society for Industrial and Applied Mathematics in 2022. Lastly, I'd like to say that Professor Kutz is also very well known for co-developing the popular dynamic mode decomposition algorithm, which David will very briefly introduce. Hi, I'll go through your audio, of course. So, my name is

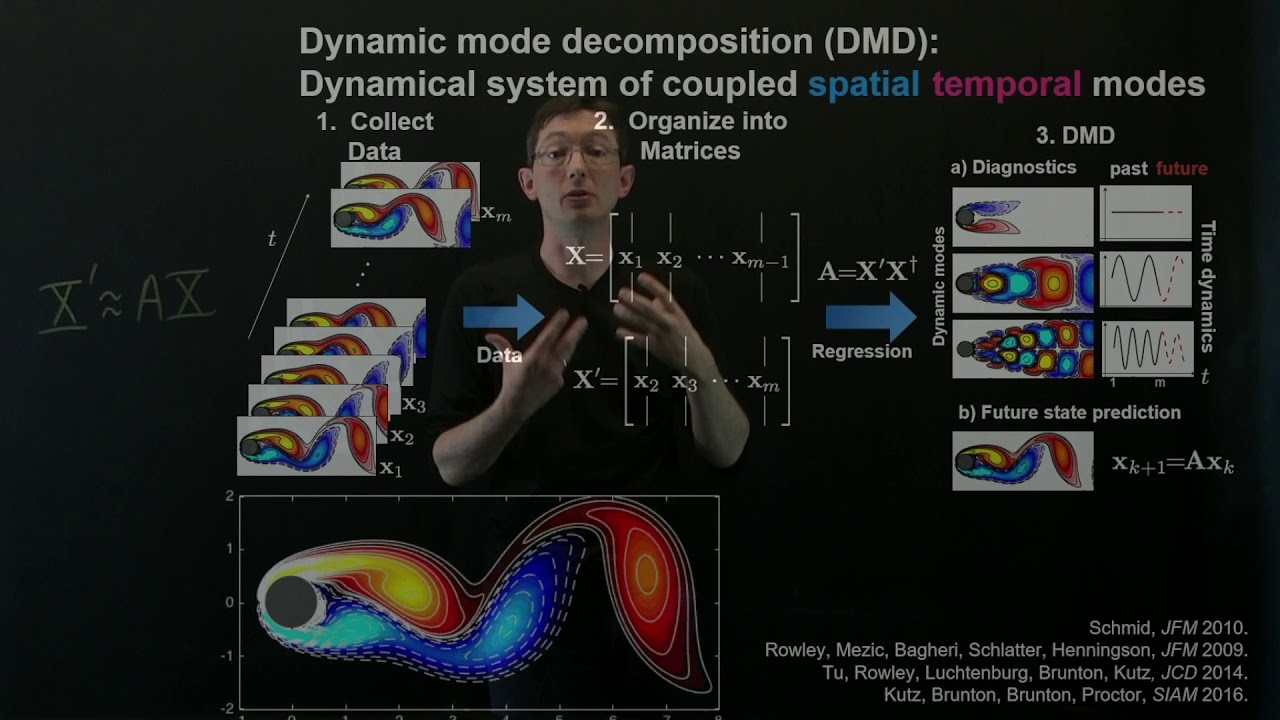

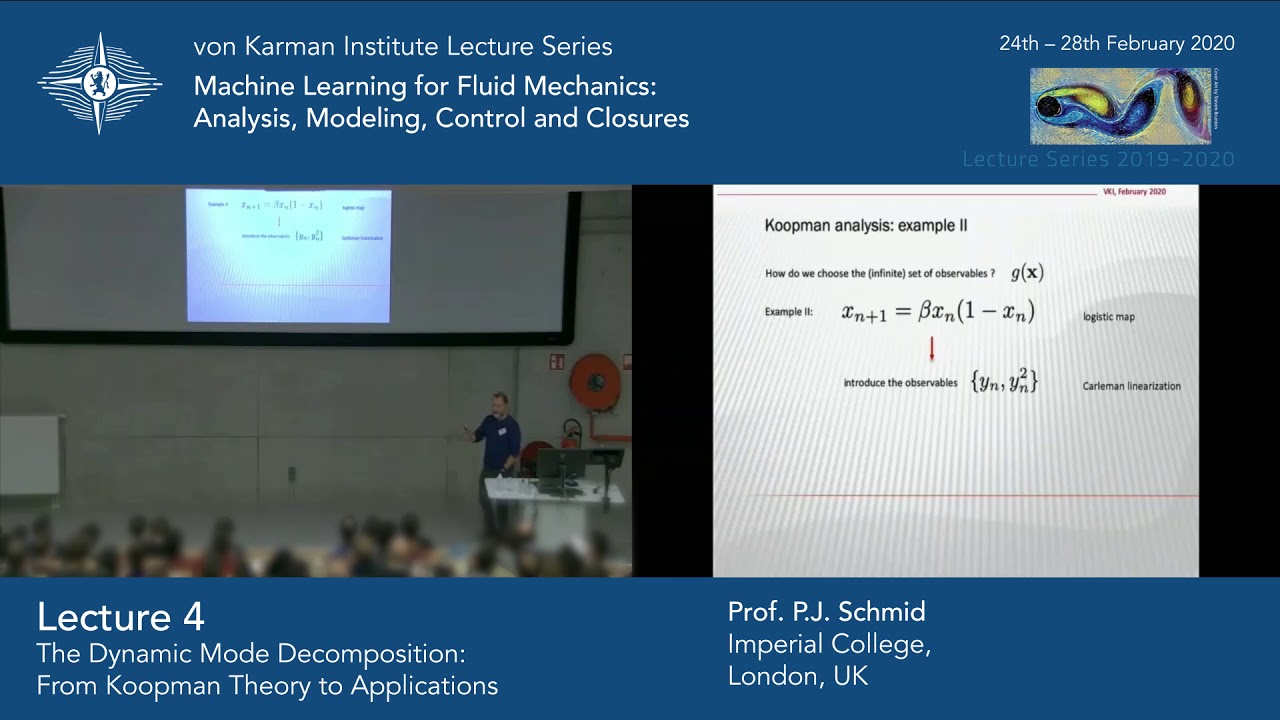

David. I'm part of the Research Engineering Group at the Alan Turing Institute, and before we start with Professor Kutz I was just going to do a brief introduction to DMD. DMD was first developed by Peter Schmid in the field of fluid dynamics to identify spatio-temporal coherent structures from high-dimensional data, such as the flow field around a circular cylinder. It is based on the proper orthogonal decomposition, or principal component analysis in statistics, and employs the SVD algorithm. The work of Nathan Kutz, together with

colleagues Steve Brunton, Bingni Brunton, and Joshua Proctor, has been essential in the development of the generalization of DMD for the data-driven modeling and control of complex dynamical and multiscale systems. So, without further ado, let's start with our talk. You have the floor, Professor Kutz. Okay, now let's see, I've got the mic now. Can everybody hear me? All right. Hi everyone, hope you're all doing well. I'm sitting in my corner office in London; it's very nice. I mean, I'm sharing it with 11 people,

but it's still a corner office, so it sounds pretty fancy. It's great to be here at Turing, and thank you for coming today. Hopefully this will be educational, and maybe it will even help with research things you might be playing around with; hopefully this is a nice tool for you to explore. So, the dynamic mode decomposition: let's talk about it. As was just mentioned, we do want to start from the point of view

of a historical remark. This is the paper that kicked it off, and this is Peter Schmid. Peter was a faculty member with me at the University of Washington. When I first started as a young professor he was already there, and we then spent quite a number of years together, probably a decade or more, as faculty members at the University of Washington before he left. He was in France for quite a number of years, and shortly after this paper he actually was

at Imperial College, right across town here, and then in the last year he moved to KAUST. So he's moved quite a bit, but it was during this time in France that he built out this decomposition technique, and you can see what it is. Think about the year 2010: not everybody had quite gone on yet to data-driven science and modeling. We hadn't yet hit 2014, which was the revolution in AI and sort

of AlexNet, which was the start of everybody doing neural nets and deep learning. But here's a nice data-driven method, and he was applying it to real data from PIV measurements. Now, this paper was important. This is the 2010 paper, but it was actually presented at a conference in 2008 by Schmid and Sesterhenn, who presented the algorithm that finally showed up in JFM here in 2010. Now, at the 2008 conference, in the audience sat (oh sorry, that's a picture of the decomposition) in the audience sat

Clancy Rowley, who was at Princeton, I think, and they started talking with Igor Mezić and others. What they realized, once they saw Peter's algorithm, was that this was in fact the first numerical computation of what's called the Koopman operator. I'm going to come back to that in a minute. So the pairing of these two papers is really important. This one, interestingly enough, appeared before DMD in 2010, and that was just the publication cycle taking a long time with JFM, but

this is a 2009 paper which showed that DMD was the first actual algorithm to produce a Koopman approximation. I'm going to talk more about Koopman in a minute, but those two papers set an important foundation for us in the theory. They applied it to jet flows, cross jet flows, things like this, and did these decompositions in both space and time. If we think about spatio-temporal systems, essentially what DMD is is a decomposition, but it's a separation of

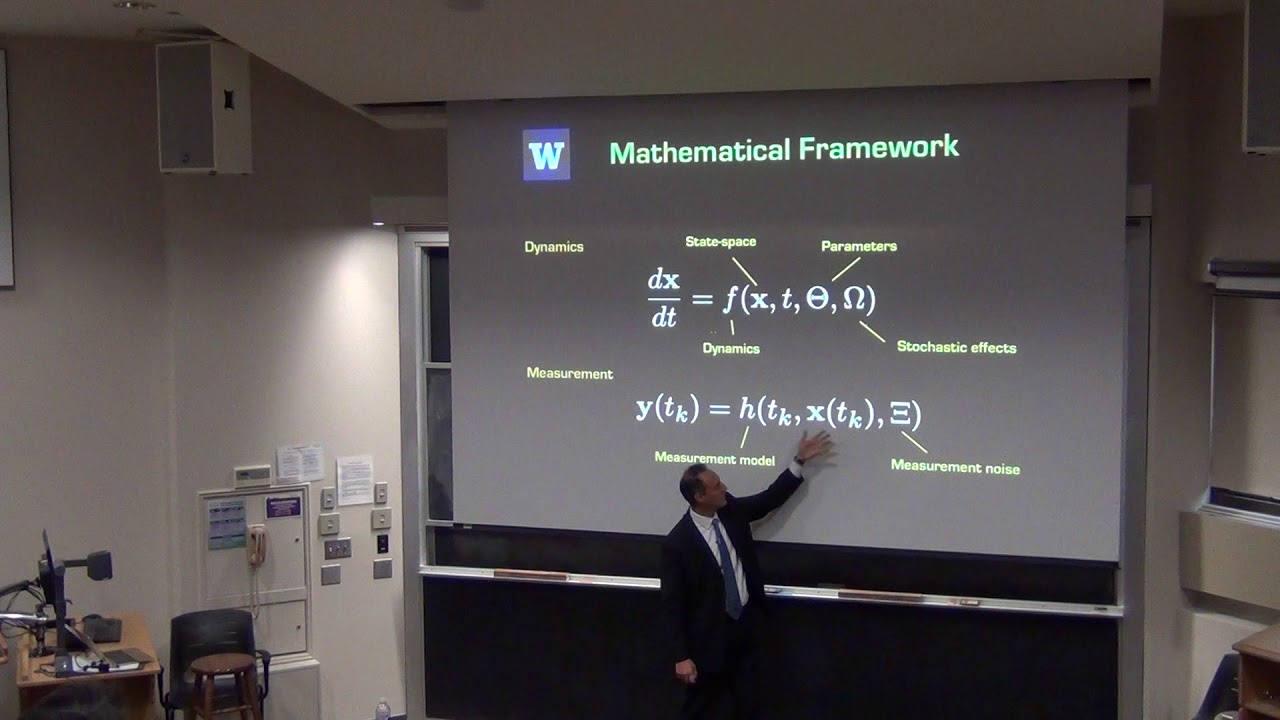

variables argument, and this is how we solved partial differential equations for a long time: we separate space and time, then find a way to represent time and a way to represent space. That's exactly what this does. Let's walk through this and see why it is so fantastic to have this approximation. Here's the one system we know how to solve, a differential equation that we're always guaranteed to be able to solve, which is a linear system. So the reason I put x tilde on here is because

this is going to be our approximation to the dynamics. There's some underlying dynamics; we're going to measure it, and what we're going to try to do is fit a linear dynamical system to it. So what's that A matrix? What's the best fit I can get for the A matrix that explains the data? Why this is so important is that once you have that A matrix, you can actually solve that equation. That's the solution right there: x tilde is equal to some coefficient b_k, which is just a

weighting term, times a mode, which is an eigenvector of this matrix A, times e to the omega_k t, where omega_k is the eigenvalue of the matrix A. So basically you're doing an eigendecomposition of A to represent the solution, and this is what we've done for quite a long time in solving linear differential equations and partial differential equations: eigendecompositions. So that's what DMD really is. And hopefully here, and this

is important, K is small: hopefully you can take your data and find some low-dimensional approximation to your high-dimensional system. A couple of things of interest: you've gotten yourself back to a linear system, in which you have linear superposition, which means your solutions are constructed by simply adding these up, and as the b_k change you get different solution types because you have more representation of one thing or the other. So keep the solution form in mind, because that's what we're going to come after with DMD.
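The solution form described above, a sum of weights times eigenvectors times exponentials of the eigenvalues, can be written out in a few lines. This is only a minimal sketch and not code from the talk: the function name `dmd_solution` and the rotation-matrix example are assumptions chosen for illustration, and the matrix is assumed to be diagonalizable.

```python
import numpy as np

def dmd_solution(A, x0, t):
    """Evaluate x(t) = sum_k b_k * phi_k * exp(omega_k * t) for dx/dt = A x."""
    omega, Phi = np.linalg.eig(A)          # eigenvalues omega_k, eigenvectors phi_k as columns
    b = np.linalg.solve(Phi, x0)           # weights b_k chosen so that x(0) = Phi @ b
    return (Phi * np.exp(omega * t)) @ b   # scale column k by exp(omega_k * t), then add up

# Hypothetical example: a pure rotation, whose eigenvalues are +1j and -1j
A = np.array([[0.0, -1.0],
              [1.0,  0.0]])
x0 = np.array([1.0, 0.0])

# Once the modes and eigenvalues are known, any time t can be plugged in
x_future = dmd_solution(A, x0, t=100.0).real
print(x_future)
```

Because the eigenvalues here sit on the imaginary axis, the solution neither grows nor decays; the state simply keeps rotating, which is the "persistent dynamics" case.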

Can I ask: x is bold and has a tilde, so it's a state space? Yeah, this is not physical space. Typically in dynamical systems you'd say dx/dt equals Ax, so this is some n-dimensional state-space vector, and that's the bold symbol. Something else: the tilde just means it's the approximation to the dynamics. The idea here is that you're going to take some data set which is created by nonlinear dynamics, and you're going to approximate it by this linear model. And you know

it's nonlinear, but when you're taking data it's not clear how you're going to get all that out, so you say: what's just the best-fit linear model? Because the linear model I know how to handle. That's exactly what they did in those early papers, which said: hey look, we have these modes. I want to talk about some of the other things that didn't happen in those first papers, which is kind of surprising. In particular, once you have the solution form you could say: oh,

I can predict the future. Say I measure my data from time 0 to 10 and I want to know what happens at time t = 100: you put in t = 100 right there. Seems pretty simple, right? It took me a long time to figure out, but they never did that in the early papers, and there's a reason why; I'm going to get to it. Okay. Or they can be complex, yeah. Typically we're going to try to measure systems where what we're hoping will happen is you have the real parts maybe constrained in

the left half-plane, or oscillatory, really: persistent dynamics should be living on the imaginary axis. But we don't constrain DMD that way. However, we can; I'll talk about that in the newest implementations of the software. So let me give you a view of this with a simple data set. What you're going to see here is a little movie, and the movie has two objects: there's this oscillating Gaussian, and then there's this oscillating little square inside of it. They have different frequencies, right? So

basically it's a two-mode thing for me, but I give you this data and say: can you pull those apart? This is like the data you collected, so how would you analyze it? Well, if you have two things here, one thing you could do is just principal component analysis. PCA would say there's a rank-two object in there: here's one mode, here's the other mode. And you can see what PCA does: it doesn't associate either of those objects with time, it

just simply says, I'll give you a subspace of two modes from which you can construct that. Well, that's fine, but that's not really what I wanted, because you mixed these two objects together. There's independent component analysis, which tries to separate things that are behaving differently, but this is what it does: it sort of gets the cube, leaves a shadow of the cube here, and doesn't do such a good job on the Gaussian piece. And then there's DMD. DMD does something else also that ICA

doesn't do: it pulls out the two structures cleanly, and it actually gives you exactly their frequencies too. So that's good news; objects like this are exactly what we're going after here. So what is it ideally suited for? When you have spatio-temporal dynamics with modes that oscillate, and a lot of signals have underlying frequencies in them, the idea is that it pulls them out, so you get a lot of interpretability. And so what can we do with things like

this? Let me walk you through some fun examples of what you can do. There are all kinds of things. You can apply it to financial trading strategies. You guys have seen my pink McLaren over there, right? Because I wrote this paper. Everybody at Turing knows the pink McLaren that sits in front of St Pancras, right? I know, when it's gone it's like something happened in the world. Okay, anyway, that's one

thing you could do: you can do short-time forecasts with this and make trading decisions, short or long a stock. We showed you could do that with DMD, where you just take stock data; you don't have a model, but you fit this thing and make a prediction. You can also do things like video background subtraction: here's a video of some cars driving around, and you can pull out foreground objects that are moving versus the background. You can apply it to neuroscience data; here we were pulling out sleep spindles, which are these little objects

that appear for just a couple of seconds at a time and have certain frequencies. So you can look through these ECoG recordings during sleep and pull out these interesting structures called sleep spindles, and DMD is a perfect tool for doing such things. So there are all kinds of applications. It's agnostic; it doesn't really care what you're working on, even though the early papers were all fluids. There are also a lot of different versions of this. I'm not going to go into all of these very deeply,

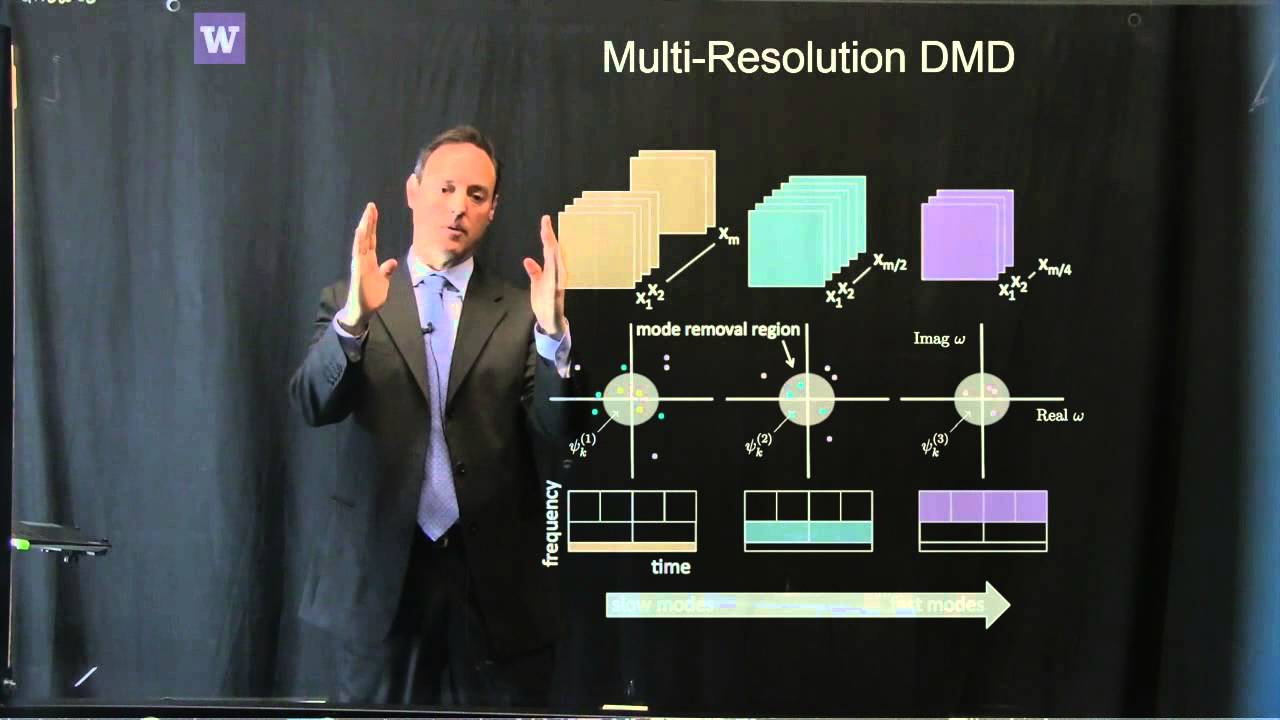

and we can have follow-ups on this, but you can now start thinking: could I do this with control? Can I do this regression procedure where my system has an input signal, and I might know B or I might not know B? You can make this work for either case; this is called DMD with control. You can also do what's called multi-resolution DMD, or windowed DMD. This is the idea that over a time series you might have

structures that are happening on really long time scales, things happening on very fast time scales, and things on medium time scales, and you can pull them all out separately rather than doing one big decomposition at once. That makes a big difference, for instance, in this data set. This is sea surface temperature data over a 20-year period. If you look at correlated structures over the 20-year period, you get some correlated structures; in fact, the biggest structure is right there, which basically is

that the middle is warmer than the edges. It would be really nice right now to be in the middle, right? For all of us to go somewhere where red exists. But the other things you miss are things like an El Niño, which pops up occasionally. When you just do PCA or variance- and covariance-based methods you miss it, because over 20 years, yeah, it's popped up, but it doesn't leave much of a signature. What multi-resolution does is say: let's look at what happens over a 20-year period,

now let's look over 10-year periods, over five-year periods, over one-year periods. That's the idea: you look at these different windows of time. It's like a wavelet decomposition, but now on your data. For instance, when you do this, all of a sudden you look in your window of 1997 and, boom, here's this huge mode that's popped on, and that's El Niño. So this multiscale decomposition is really built for multiscale physics as well, in

general. I'll make some more comments on this at the end that we can talk about, because part of my story is that none of this stuff really works very well. Okay, it's a negative message, but I want to be super clear about it. The early versions needed a huge amount of hyperparameter tuning to get anything to work, and you had to fix up the data; it was just a ton of work, because there was

noise in the data and you had to be really careful about it. So part of the effort was engineering the method and hyperparameter-tuning it a ton to make things work, and that's problematic for generic methods. I'm being very truthful about it with you up front because I think we have fix-ups now. In other words, a lot of these papers written before, I would say, 2021 or 2022, until we get to the new PyDMD package, are actually not very good with data, real

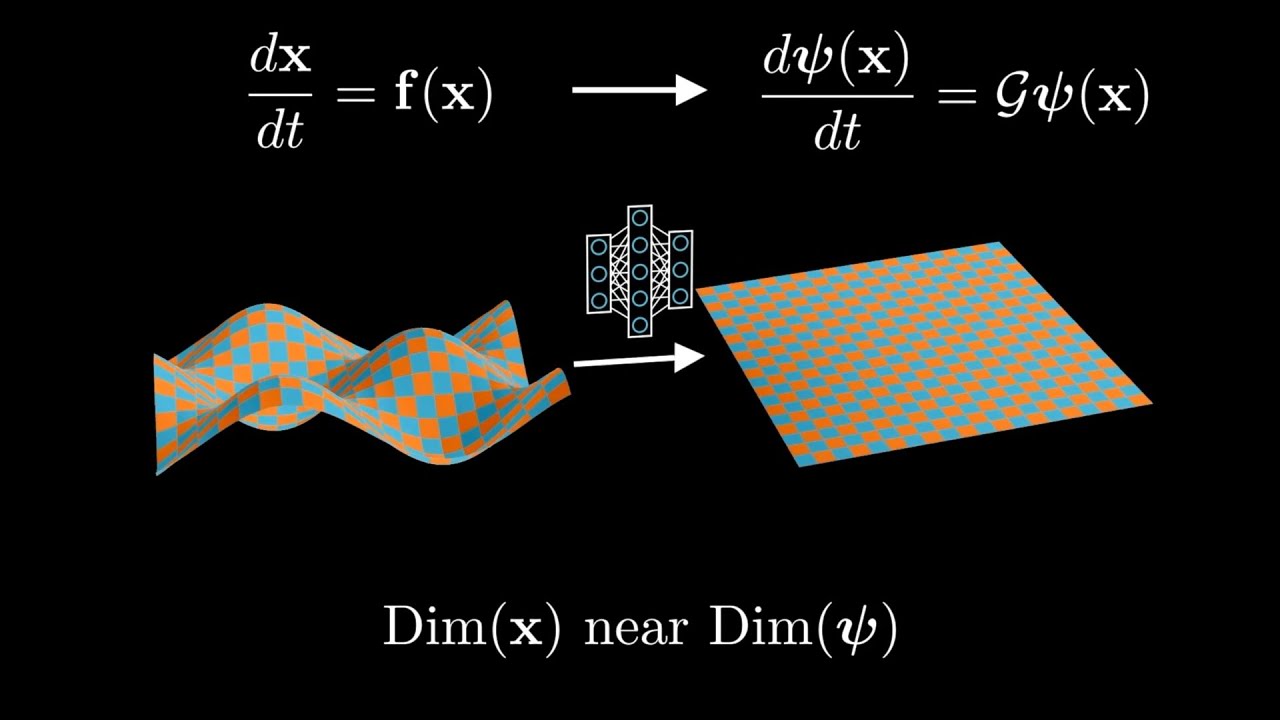

data. They're great with synthetic data, but you get real data with noise and it's a problem, so I'm going to talk about that. I meant to comment on this relationship to Koopman theory, which was this Rowley paper. All Koopman theory is is this idea that Bernard Koopman posited in 1931. He said, and again this is the notation we were using earlier that James asked about: here's this dynamical system, x is some state-space vector, so you have a nonlinear finite-dimensional dynamical system. There exists essentially

an embedding into a set of observables. In other words, you project up into an infinite-dimensional space in which there exists a Koopman operator, which means that as I move forward in time there's a linear operator that acts on the observables. So that's the equivalence. Now let's talk about practicalities. It's 1931; what does Koopman not have? There's no computer. So this is his idea, and he doesn't tell you how to get the observables or how to compute any of this; it's just a mathematical fancy that's there. And this theorem,

in some sense, or this definition, is equivalent to Cover's theorem from 1965, which is the underlying theorem for what was machine learning pre-2014, specifically for support vector machines: if you project into an infinite-dimensional space, all your data can be linearly separated, can be linearly classified. So it's a guarantee, if you go to infinity. Of course you never go to infinity; you try to go large, you cut off, and you make mistakes. But the theorem says that if you get to infinity it's linearly separable. Likewise, if you go to infinity,

you could take any dynamical system and make it linear. So this is an important theorem from the point of view that we don't have to work directly with our data; we can work on observables of our data and then do DMD on that. So those are some of the ideas here. Sorry, is the Koopman operator time independent? It's just a map that takes you from t to t plus delta t, yeah. All right, so by the way, I'm going to

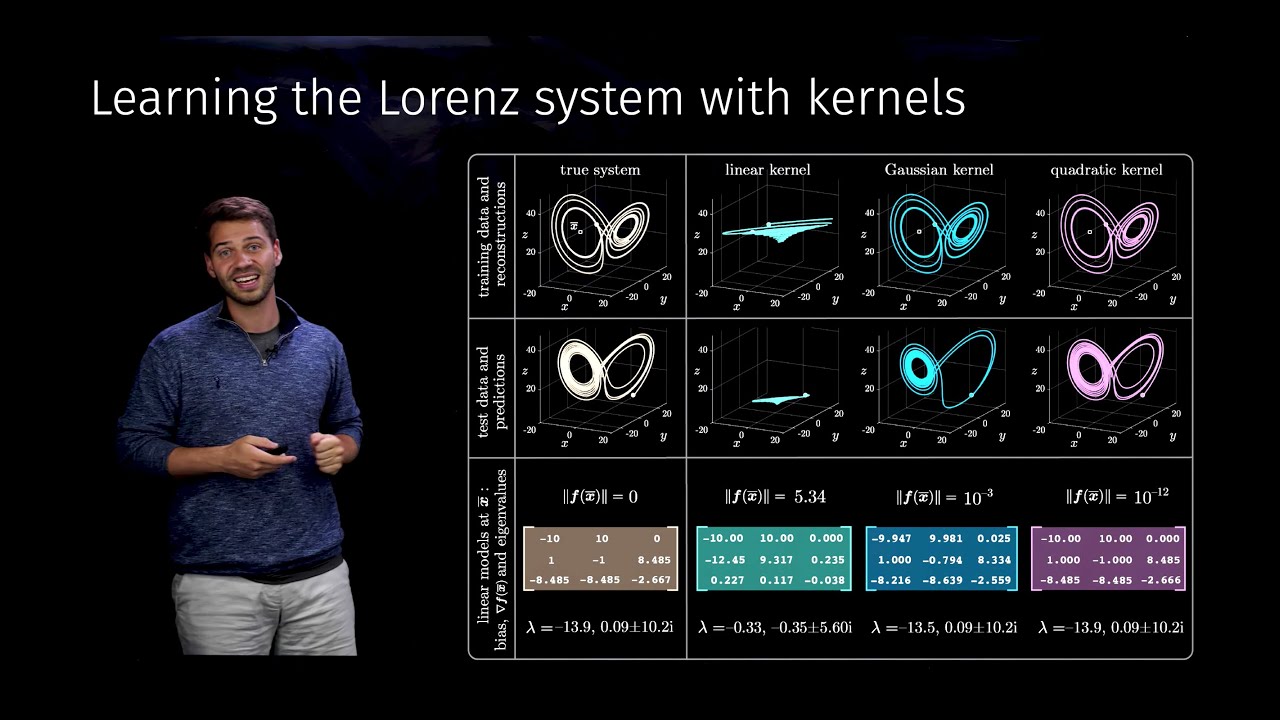

give you two simple examples of Koopman operators, or how you might construct one, because you don't always have to go to infinity. Here's one, for instance. Top left here is a simple 2-by-2 dynamical system, like out of a textbook, and it's nonlinear. Normally you'd sketch a phase plane for it; you don't necessarily write down an exact solution for it. But I could trade it out for these variables y1, y2, y3, which are just x1, x2, x1 squared, and

then it's closed under that. So now I have a 3-by-3 system which is perfectly linear. I went from a nonlinear system to a new coordinate system, and it's linear in this new coordinate system. That's part of the idea of a Koopman operator: can I find that mapping? Or can you find an approximate mapping and then apply the DMD algorithm there? Now DMD is going to work even better in this space, because it's meant to

find that. The variable that's like the square of the original, is that a critical part, if you just sort of linearly blow up the...? Yeah, this is a special example, because a lot of times this doesn't close. What happens is you get this, which means now I need an x1 cubed; but now that x1 cubed tells me I have to have x1 to the fourth; the x1 to the fourth says have x1 to the fifth; and it just goes on forever, and you've got to close it at some point. So there's, most

of the time, there's a set of circumstances under which this is exact and you can do this, but most times you can't do this for generic systems. Can I just remind people to please speak up when you ask questions? The question was... well, you got my answer, so hopefully, yeah, just going forward. Perfect. All right, here's another example, by the way, of a system where you can make a transformation to make it linear. This is if you're into PDEs, partial differential equations. I don't know what everybody's

background is, but here's a PDE: this is Burgers' equation. It's nonlinear, and in 1950 Cole and Hopf discovered this transformation, called the Cole-Hopf transform, that turns it into a linear system. It's exactly what we're looking for. In other words, these are all paradigms of Koopman: they're all suggesting there exists a transformation allowing me to have a linear representation of the dynamics. One of the criticisms you can make is that constraining to linear dynamics is pretty harsh. It is. On the other hand, there

are suggestions that you can generically do it if you can just find the right coordinate system for these systems. All right, here's a sketch of what this might look like. I take measurements from a system, and that creates data matrices. I talked about these data matrices X and X prime: X is my collection of data from x1 to xn, and X prime holds the corresponding measurements delta t later. So X goes to X prime in delta t: all these snapshots, how do they evolve over one delta t? One possibility to build

a model for this is to go directly over here and say: whatever data I collected, those are my observables; I do DMD and I have a linear model. Another possibility is that I make up observables from what I collected and then do DMD, so I'm working not in the original measurement space but in some lifted space. If you have good knowledge of your system, this is where expert knowledge can be amazing, because sometimes you know your problem really well and you can think: oh, I've got the right variable

and lift that I want to do, and boom, now you get a much better linear approximation in your new variable set. So you don't have to constrain yourself to what you measure; you can make functions of what you measure and then do DMD on that. That's the whole point of this slide. So let me give you one example of this, just to show you how it works. Again, I don't know everybody's background; I come from a PDE background, so that's why I'm giving these examples.
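The lifting idea from the 2-by-2 example earlier (trading x1, x2 for x1, x2, x1 squared) can be checked numerically with a best-fit linear map between snapshot matrices. The sketch below is not the speaker's code. The specific equations, dx1/dt = mu·x1 and dx2/dt = lam·(x2 - x1²), are an assumption: they are a commonly used textbook system with exactly this closure and may differ from the one on the slide. The parameter values, initial conditions, and helper names are likewise made up for illustration.

```python
import numpy as np

mu, lam, dt = -0.05, -1.0, 0.1            # assumed parameters, not from the talk
t = np.arange(0.0, 10.0 + dt, dt)

def trajectory(x10, x20):
    """Analytic solution of dx1/dt = mu*x1, dx2/dt = lam*(x2 - x1**2)."""
    p = lam * x10**2 / (lam - 2.0 * mu)   # particular-solution coefficient
    x1 = x10 * np.exp(mu * t)
    x2 = (x20 - p) * np.exp(lam * t) + p * np.exp(2.0 * mu * t)
    return np.vstack([x1, x2])            # shape (2, len(t))

def snapshot_pairs(trajs, lift):
    """Stack lifted snapshots into X (now) and Xp (one dt later)."""
    X = np.hstack([lift(tr[:, :-1]) for tr in trajs])
    Xp = np.hstack([lift(tr[:, 1:]) for tr in trajs])
    return X, Xp

def one_step_error(X, Xp):
    """Relative residual of the least-squares linear map Xp ~ A X."""
    A = Xp @ np.linalg.pinv(X)
    return np.linalg.norm(Xp - A @ X) / np.linalg.norm(Xp)

def raw(x):
    return x                                      # observables = measurements

def lifted(x):
    return np.vstack([x[0], x[1], x[0] ** 2])     # y = (x1, x2, x1^2)

trajs = [trajectory(1.0, 0.5), trajectory(-1.5, 1.0), trajectory(2.0, -1.0)]
err_raw = one_step_error(*snapshot_pairs(trajs, raw))
err_lifted = one_step_error(*snapshot_pairs(trajs, lifted))
print(f"raw observables: {err_raw:.2e}   lifted observables: {err_lifted:.2e}")
```

In the lifted coordinates the dynamics are exactly linear, so the residual should drop to roughly round-off level, while the fit on the raw two-dimensional state leaves a visible error. Choosing a lift that does not close would not give this improvement.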

Apologies if it's not your cup of tea (that's a perfect London phrase, right?). But here we go: this is what's called the nonlinear Schrödinger equation. It's a nonlinear PDE, and here's one of its solutions, called the two-soliton solution. This is fully nonlinear dynamics. So you say: what happens if I try to do DMD with it? Well, here it is: I just take my measurement x, which is now my state space, which is u, discretized. And it's actually not bad; it kind

of gets me some of the right stuff; I'll show you the error in a moment. But I could also say: I don't have to just do DMD on x. I could do it, for instance, on x and, look what I did here, |x|² x, the absolute value squared times x. Why did I do that? Because that's the form of the nonlinearity. It looks pretty good; in fact, I'm going to show you that the error here is almost at numerical precision in that comparison. So this lift into a very simple enrichment of

the variable space gives me an almost perfect model for the full nonlinear dynamics. But if I lift to the wrong variables, this is what I get. So lifting to variables is a tricky business. That's what Gandalf said when Frodo went out the door, right? It's a tricky business. Anyway, let me show you this. Here are the errors in the modes, and here are your DMD modes. What the eigenvalues are supposed to do is line up along the imaginary axis, which, if you just do regular DMD, they don't. You do

this lift and, dang, it just nails it. This is a case where, from theory, I actually know how to linearize this specific model exactly, and this is where they should be. And if you do it wrong, terrible things happen. Here's the error: the main thing is that for this one, where I got the lift right, the error is all the way down to ten to the minus four, minus five, which was my numerical stepper error. So I'm getting this linearly down to where the data

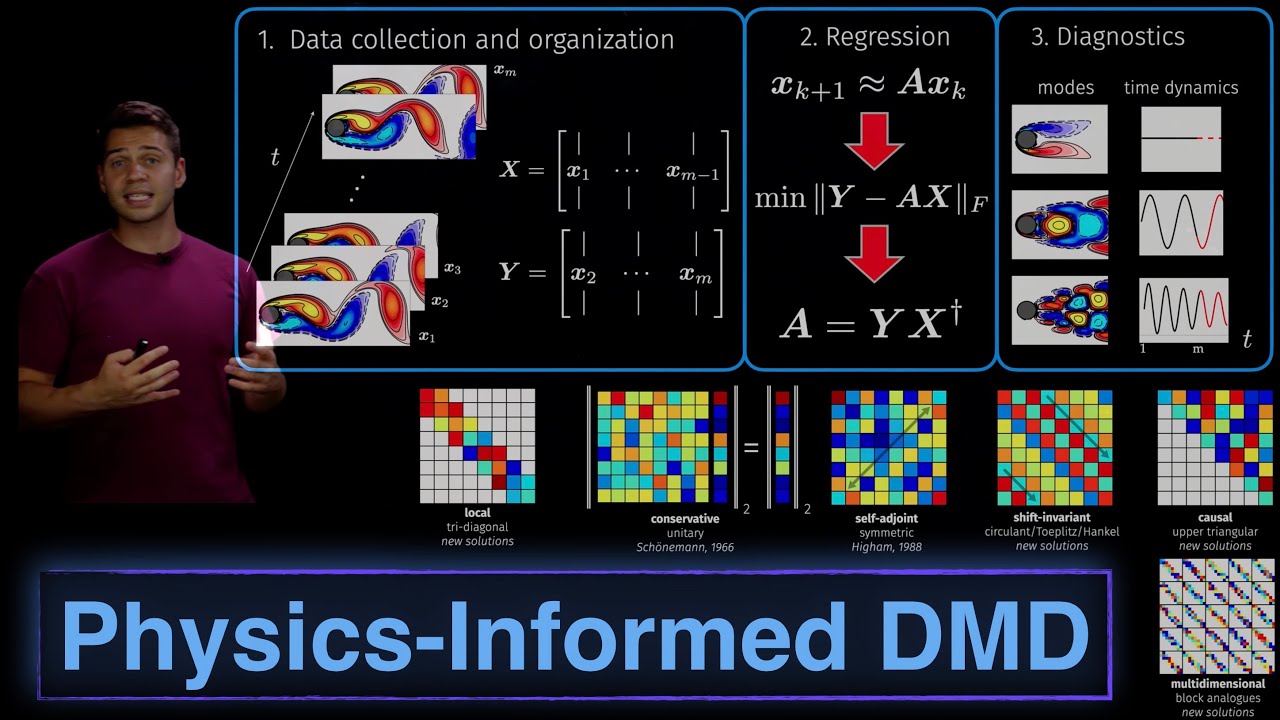

I had, the accuracy of the data. So these are examples that show that a clever use of DMD is not to do DMD directly on your data, but to think about whether there is a better set of observables you can create out of the data, and then do DMD on that. All right, so that's the front end: we're going to build linear models, we're going to approximate them, and they're nice because now I have linear superposition, which is very interpretable. So let's talk about algorithms. Here's one of the algorithms

that was from 2014; it's called exact DMD, and this has been the workhorse. I collect my data, so here are snapshots. This is fluid flow, flow behind a cylinder, which is one of the canonical models to consider. I take these snapshots and organize the data into two snapshot matrices: x1 to x(m-1), and x2 to xm. What I want is the linear operator that takes me from X to X prime. That's what I'm looking for, because if I can find it, that means that

A matrix advances the solution by one delta t. And of course you train across all these snapshots, so you're trying to figure out which A is best across all of them. The idea then is that you get this set of modes. In fact, the first thing you do with this regression is look for a low-rank structure, and what you find is: here are these three dominant modes, and here are their dynamics. Remember, it's the separation-of-variables argument, so it's giving

you modes and time dynamics. What it finds for you is three modes with three time dynamics, and you have a model; it's a linear model. Is the first mode the mean? Yeah, basically it pulls off the mean, the DC component. So you don't normally subtract the mean? You can if you want. That's an interesting thing: the difference between SVD and PCA, for instance, is doing some pre-processing tricks, like making every time series in the data mean zero and unit variance. Sometimes that makes

sense in a problem. Here it's a fluid flow, so we just don't do that. You could subtract the whole mean from it, but here you just, boom, pop that out, and these are your modes and this is how they're oscillating. This is what it produces for you. So what it really is is this beautiful marriage between PCA and the Fourier transform. Yeah? For the first mode, is the claim that that by itself is a solution to the equations of motion? No, this one

here is only part of it. Essentially you have to add all three of these up. This is only a portion of the variance of what you're seeing in the movie, and these are the other portions; let's say 99% of the variance of the movie is these three modes added together. If you leave off modes, you lose a lot: I can only explain 30% of that, of

that dynamics, let's say, something like that. Does it fit for the number of modes as well? Normally you have to pre-pick the modes. This is hyperparameter tuning: you pick the number of modes and then you compute that out. Yes, a question from the audience, Muhammad. Yeah, hi. I've tried DMD before, actually on traffic data, where you have a time series on a network. I think it didn't work that well at that time. Maybe it's a different method

here, but what we realized is that there are certain constraints required of the data to make this work, and I think a lot of the constraints had to do with stationarity or some type of cyclicality. Do you have any idea where the limits are of... I'm about to shoot DMD in the foot. Head, whatever. Head sounds a little too violent, but I'm American, right, we have guns everywhere. We're about to stab DMD; that's more like UK style, right? All

right, so Muhammad, just hang on one second, and we're going to stab it. We wrote this whole book on this. What's the number one question I get on DMD? Hey, I read your book and downloaded exact DMD, because we provide all this code and data, and it didn't work. In fact, one of the number one comments I've gotten is exactly yours, Muhammad: I tried DMD on some data and it didn't work. And of course it didn't work, because exact DMD sucks. So that's chapter one of

this book, so whatever these authors are peddling, do not buy it. Okay, so actually this is a great opportunity, because I'm gonna undercut myself here a bit. We need to update that chapter one of the book, because it's embarrassing at this point. At the point in 2014 when we wrote up the algorithm, we didn't know what else, right? That was the best algorithm we could do. But what came out in 2015, '16, '17 was evidence that as soon as you put any noise on the data, it fails:

you bias the eigenvalues, you have some real problems. So let me show you some of this. By the way, here's the whole algorithm, it's really simple. First you look for a low-rank structure, then essentially you do a similarity transform down into that low-dimensional subspace, you do an eigendecomposition, and you project yourself back out. It's actually pretty simple code to write. But, you know, again, here's the canonical example. We go, hey, what could go wrong, it all works, right? And then Muhammad already said, hey, I tried it, it didn't

work. And I think anybody who's tried it probably... So here's my hope, actually, from this event. First of all, I told you straight up: don't use exact DMD. I was an author on that paper, by the way, so if I'm saying don't use it, you can probably trust me. Most authors are going to oversell their stuff, right? That's kind of our job. So if I'm telling you don't do it, you know I'm serious about it. Okay, so let's talk about what the alternatives are, because this

was the picture that finally made me realize, oh my gosh, we have real problems with this algorithm. So what you're looking at here: I had this project I was doing with a guy at NASA on atmospheric chemistry data. We basically took some lat-long-elevation data, and this is at a certain elevation, and this is, I guess, the latitude you're at, and this is the time dynamics, typically during the day, for nitrous oxide. Nice. Okay, and so, you know, it's

pretty, it's nice, it's oscillatory dynamics. It's kind of complicated, but it's real data, right? This is what you've got to deal with. You say, oh, DMD should be great on this, because what does it do? It looks for spatial structures and time dynamics, and you can clearly see a periodic structure in time. Maybe you're not going to get it perfect, but you should at least get it somewhat. What you're looking at next, in the middle panel, is exact DMD: total failure. And by the way, we're not even extrapolating, we're just trying to reconstruct the data

sample, and you get a day out and your model's awful. It's like, wait a minute, I can't even fit the 30-whatever days that I actually gave you the data for? No, it fails. Before, you mentioned that you take the entire series in your timeline, but would it be an option to update the operator at smaller time steps? Yeah, so here you'd have to update it every single day, okay, and you could only do maybe a one-day forecast, but even

one day is not very good, unfortunately. And partly it's actually a deeper issue with DMD, with exact DMD and many of the DMD variants, right? No matter what anybody tells you, I'm going to tell you this: once you have noise on that data, it fails. That includes the original version of the PyDMD package; it was meant for noise-free computations. And who does noise-free? Nobody. Only mathematicians who can afford to live in a castle and imagine data, and their data is perfect, like unicorns. Okay, by the way, I live in a math department too,
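
The exact DMD steps described earlier (low-rank truncation, similarity transform, eigendecomposition, projection back out) and the noise bias can be sketched in plain NumPy. This is a minimal illustration, not the PyDMD implementation: the function name `exact_dmd`, the two-mode toy signal, the number of snapshots, and the noise level are all assumptions chosen so the effect is easy to see.

```python
import numpy as np

def exact_dmd(D, dt, rank):
    """Exact DMD on uniformly sampled snapshots stored as columns of D."""
    X, Y = D[:, :-1], D[:, 1:]                      # snapshot pairs one step apart
    U, s, Vh = np.linalg.svd(X, full_matrices=False)
    U, s, V = U[:, :rank], s[:rank], Vh[:rank].conj().T   # low-rank structure
    A_tilde = U.conj().T @ Y @ V / s                # operator in the rank-r subspace
    lam, W = np.linalg.eig(A_tilde)                 # discrete-time eigenvalues
    modes = (Y @ V / s) @ W                         # project back to full space
    omega = np.log(lam.astype(complex)) / dt        # continuous-time eigenvalues
    return modes, lam, omega

# Toy signal (assumed): two spatial shapes, each oscillating at one frequency
x = np.linspace(-5, 5, 65)
t = np.linspace(0, 4 * np.pi, 4001)
dt = t[1] - t[0]
clean = (np.outer(1 / np.cosh(x + 3), np.exp(2.3j * t))
         + np.outer(2 / np.cosh(x) * np.tanh(x), np.exp(2.8j * t)))

rng = np.random.default_rng(0)
sigma = 1.0                                         # deliberately large noise level
noise = rng.standard_normal(clean.shape) + 1j * rng.standard_normal(clean.shape)
noisy = clean + sigma * noise / np.sqrt(2)

_, _, omega_clean = exact_dmd(clean, dt, rank=2)
_, _, omega_noisy = exact_dmd(noisy, dt, rank=2)
print("clean:", np.round(omega_clean, 3))           # purely imaginary eigenvalues
print("noisy:", np.round(omega_noisy, 3))           # real parts pulled negative
```

On clean data the eigenvalues sit on the imaginary axis at the two frequencies. With noise, the least-squares fit of the one-step operator shrinks the eigenvalue magnitudes, so the continuous-time eigenvalues pick up negative real parts and any reconstruction decays toward zero.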

so, but I also have some engineering focus. And so along comes Travis, and this is 2018, and Travis developed what's called optimized DMD. Now we're going to take a big step forward in performance, because instead of doing this regression onto a linear operator (in fact there's a theoretical foundation for why you get biasing of the eigenvalues: it pulls the eigenvalues way into the left half-plane, where they pick up a real part that's negative and big, and so everything just goes to zero), instead you just regress, and you

set up a variable projection optimization procedure to directly find the modes, the loadings of the modes, and the eigenvalues. So you're doing a direct fit, and you get to pick how many modes you want. This variable projection routine just says: do an exponential fit to the data, right? You know the solution is linear, so it's an exponential fit. That was 2018. One other big advancement, which came in 2021 with Diya: you do one other trick, and I can't believe it took me this long to learn
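
The "direct exponential fit" idea can be sketched as follows. This is not the optimized DMD algorithm itself: it shows only the inner linear solve of variable projection (for trial eigenvalues, the modes follow from least squares) and replaces the nonlinear optimizer with a brute-force scan over one frequency. The function name `varpro_residual`, the toy data, and the scan grid are assumptions. The sample times are deliberately irregular, since the fit only needs to know when each snapshot was taken.

```python
import numpy as np

def varpro_residual(omega, X, t):
    """For trial eigenvalues omega, solve the linear least-squares problem
    for the amplitude-weighted modes and return the leftover misfit."""
    Phi = np.exp(np.outer(t, omega))                 # (m, r) exponentials in time
    B, *_ = np.linalg.lstsq(Phi, X.T, rcond=None)    # (r, n) modes times loadings
    return np.linalg.norm(X.T - Phi @ B), B

rng = np.random.default_rng(0)
x = np.linspace(-5, 5, 65)
t = np.sort(rng.uniform(0, 4 * np.pi, 129))          # irregular sample times
X = (np.outer(1 / np.cosh(x + 3), np.exp(2.3j * t))
     + np.outer(2 / np.cosh(x) * np.tanh(x), np.exp(2.8j * t)))

res_true, B = varpro_residual(np.array([2.3j, 2.8j]), X, t)
res_wrong, _ = varpro_residual(np.array([2.0j, 3.1j]), X, t)

# Crude stand-in for the optimizer: hold one eigenvalue, scan the other
grid = np.linspace(2.4, 3.2, 81)
scan = [varpro_residual(np.array([2.3j, 1j * w]), X, t)[0] for w in grid]
best = grid[int(np.argmin(scan))]
print(res_true, res_wrong, best)
```

Because the unknown modes are eliminated by the linear solve, the only quantities left to search over are the eigenvalues, which is what makes the direct fit tractable.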

this, right? I'm a professional mathematician, supposedly, and then it's like, oh, we should do statistical bagging, right? Even an undergrad would tell you to maybe do that. I probably need to talk to more undergrads in statistics, because here's a middle-aged guy who finally figured out, oh, I should do bagging. Okay, so what is the bagging procedure? The bagging procedure is to take that optimized algorithm, but now instead of taking all your data, you take subsets of the data, right, and you build an optimized DMD model, and what ends

up happening now is, for each bag, you get modes, frequencies, and loadings, and then you just collect them all, maybe do a thousand trials with different random pulls. And now, instead of just getting a single DMD mode, you could take the mean of this as your DMD model, but it also gives you the variance. So now what you're imbued with is uncertainty metrics. So you want to go do a forecast? You're like, oh wait a minute, when I forecast this

I have these eigenvalues, but here's the distribution of the eigenvalues, so now you can do Monte Carlo from that distribution you learned, right? So now you can get a much better estimate of what the spread of the forecast is going to be, or even have confidence, or unconfidence (is that a word?), about your model. And by the way, I keep putting this down here: this new PyDMD package, pip install, has both in there, optimized and bagging optimized. Okay, all right, along with the ability to
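
The Monte Carlo step can be sketched like this: draw eigenvalues from the distribution learned by bagging, push each draw forward in time, and read the spread off the ensemble. Every number here (the eigenvalue means and standard deviations, the loadings, the Gaussian form of the distribution) is hypothetical and stands in for what a bagged fit would return.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical bagging output: mean and spread of two continuous-time eigenvalues
omega_mean = np.array([-0.02 + 2.3j, -0.01 + 2.8j])
omega_std = np.array([0.01, 0.02])       # assumed, used for real and imaginary parts
amplitudes = np.array([1.0, 0.5])        # assumed loadings, held fixed here

t_future = np.linspace(0, 20, 201)
n_draws = 1000

draws = (omega_mean
         + omega_std * rng.standard_normal((n_draws, 2))
         + 1j * omega_std * rng.standard_normal((n_draws, 2)))

# Each draw gives one forecast: sum_k b_k * exp(omega_k * t)
exponentials = np.exp(draws[:, :, None] * t_future[None, None, :])
forecasts = np.einsum("k,dkt->dt", amplitudes, exponentials).real

mean_forecast = forecasts.mean(axis=0)
spread = forecasts.std(axis=0)           # grows with the forecast horizon
print(spread[0], spread[-1])
```

The spread is zero at the start of the forecast and widens with the horizon, which is the kind of uncertainty metric a single deterministic fit cannot provide.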

constrain eigenvalues to the left half-plane if you want, along with the ability to constrain eigenvalues to complex conjugate pairs, along with the ability to say I want all the eigenvalues on the imaginary axis: all constraints in the optimization procedure. How much user input is required for this, or is it pretty much decided by the algorithm itself? Yeah, so the question is how much user input is required for this. Basically you get to pick; I'm gonna walk through an example with optimized DMD, BOP-DMD. So you get

to pick how many bags, you get to pick what rank you want out, so you get to pick some things, but for the most part it goes in and you're there. All right, I think Gari has a question. Yeah, um, you said that exact DMD will not work, and one reason is that there's noise on the data. So I'm just asking, as a possibility, do you think pre-processing the data will make it better, or

what are your thoughts on that? Yeah, you could certainly pre-process and try to do some things to make it better. And by the way, I've written lots of papers with exact DMD, but basically I had to do exactly what you're saying: do a lot of work, and you get this result, and it's like, okay, right, I tuned it up for it to work. So, thank you. If you do have [inaudible], would it be preferable to use that? So yeah, it's a good question. I would say in all

cases, and this is just my own personal opinion, in all cases just use optimized DMD. I'm gonna show you, it's as easy as any, right? Because once we get to the PyDMD package, you're just like, okay, can you afford three extra character strokes? Oh, then you can just use opt-DMD. Okay, uh, how about, yes? Thank you, Nathan. What is phi_n in your regression? That is the most difficult part for me to understand. When you're regressing onto this linear model, what is phi

n? That's the mode, that's the actual mode itself. When I showed you the fluid dynamics, it's made out of three modes; phi_n is the mode. But you have to parameterize that, so what goes in there? Does a neural network go in there? When you do the regression, the cost function can start off with just a guess of zeros, and the variable projection builds it from there. It's an iterative procedure to find just the mode. Okay, all right, thank you. You can always put

in a guess, which is helpful sometimes, right? You could say, if I want this to go faster, take the exact DMD. Exact DMD actually doesn't do so bad on the modes; what it does terribly on is the eigenvalues. Oh, you usually have to flatten your data, right, so you always flatten the data and vectorize it to come in. So yeah, time will be columns. Yeah, Muhammad, do you have one more question? Yeah, so have you tried this on, like, graph-based data, where you have

like, some kind of time series on edges, and there might be [inaudible]? Haven't tried it at all on that, but we could. Yeah, because it doesn't really care that it's spatial points, it's just any collection of data, right? Really, when we did financial data, it's just like, here's IBM stock next to Microsoft stock; that was what space was for us, right? So Nathan, can I suggest that we answer all the questions at the end? Oh, sure. 15 minutes. Okay, I'm gonna, yeah, stop bothering me. All right,
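
Setting up the data matrix as described (flatten each snapshot, time along columns) might look like the sketch below. The array sizes and the random stand-in data are assumptions for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical movie: 40 frames of a 12 x 16 field, stored as (time, ny, nx)
frames = rng.standard_normal((40, 12, 16))

# Flatten each frame into a vector and stack so that time runs along columns
X = frames.reshape(frames.shape[0], -1).T        # shape (ny * nx, time)

# Any column (or any DMD mode) can be folded back into image shape for plotting
first_frame = X[:, 0].reshape(12, 16)

# "Space" need not be spatial: one row per series, one column per time sample
# (hypothetical daily values for three tickers)
prices = np.vstack([rng.random(40), rng.random(40), rng.random(40)])
print(X.shape, prices.shape)
```

The decomposition only sees rows and columns, so the same layout serves for grid data, sensors on a network, or a handful of stock series.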

sorry, was that rude? Okay, all right, so let's come back to this picture which I showed you. Here's the disaster, exact DMD, and optimized DMD, boom, right, fixes your problem, gives you a stable model. And this is the picture for me, which I think we first produced in 2020 with one of my students and Travis; that was the aha moment for me. It's like, oh, because, you know, I'd been getting all kinds of questions from people, 'hey, my DMD doesn't work,' and I'm like, I don't know what they were doing

with it. And it's not anybody's fault. This picture explains why everybody was having trouble: they were trying to take DMD onto real data, and the exact DMD that we talked about in chapter one of our book and walked through doesn't actually work on real data. I stand by that statement. Here you can even forecast with it. This is quite amazing: you take 10 days of data, and I want to go forecast 20 days out, right? This is the kind of remarkable thing, I think. And again, the

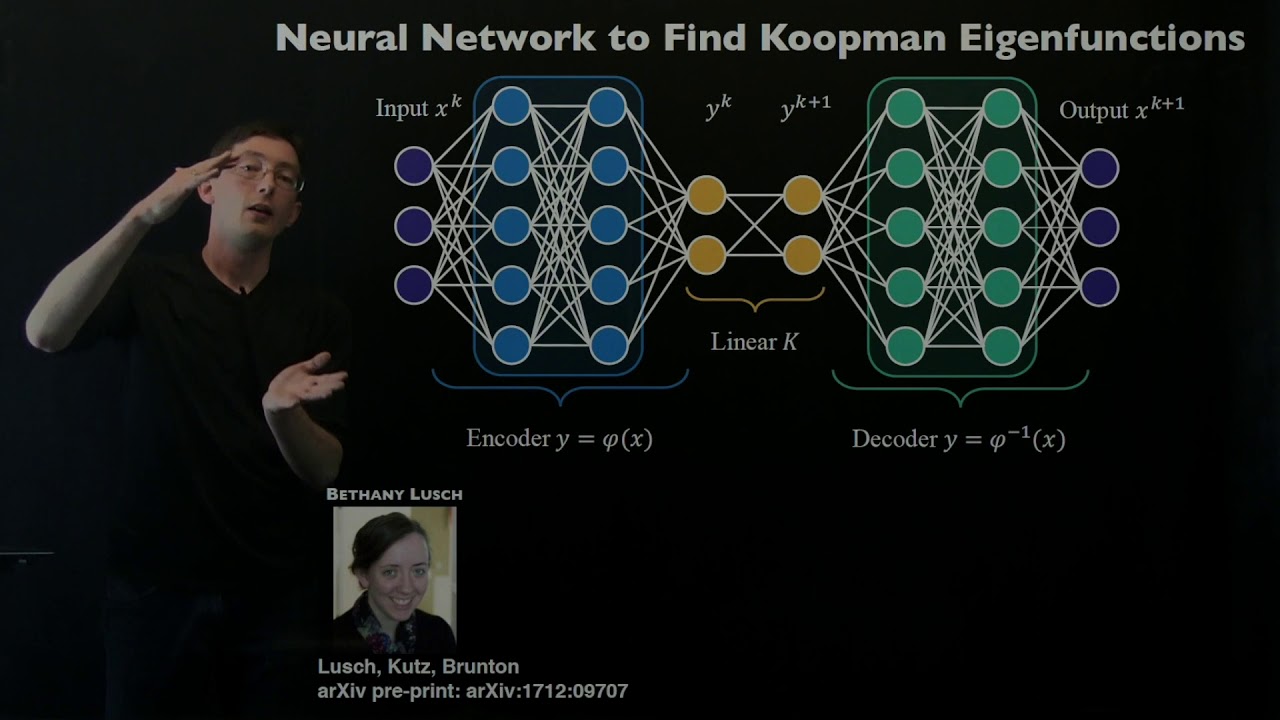

dynamics of this thing are highly nonlinear, and yet you're still producing a pretty amazing forecast just by doing a linear regression. I mean, as long as it's optimized DMD, right, not the regular one. Okay, I'll just really quickly talk here, and then I'm going to switch over to code. You can also think about neural nets and DMD, and the only reason I think about neural nets is because everybody wants to do neural nets. The other thing I would suggest, though, is before you get yourself into a system where you have

all this fancy architecture you're going to build with deep learning, baseline all of it with a DMD model. I've seen papers written with all kinds of fancy deep learning this, deep learning that, and, you know, DMD gets you just as good an answer in some cases. It's a little bit embarrassing, right, because the people obviously didn't check: how about, does a linear model work? That should always be your baseline. The baseline is there and you say, I need to beat the linear model. I'm okay with doing sophisticated neural nets,

I do a bunch, but I also sanity-check it to say, what's my target to beat? DMD is usually your target to beat. So you can do things like learn an embedding to build a linear model in: put an autoencoder in front of your data, push it into a latent space, and then enforce DMD in that space. Okay, and this is what Bethany did for some of her work. And we've done this for much more complicated systems. I just want to walk through, this one's the most impressive, here

is, we took something that was spatio-temporally chaotic and pushed it into a linear space, and so now you have a DMD model in a latent space. This one always surprises people. So for anybody who knows the Kuramoto-Sivashinsky equation, it's like, you can embed that in a linear space? Yeah. Do we understand why? No, nobody understands it yet, except that the computer can do it. And so we need more theory here, because a lot of people say there's no way you could do that. Yeah, no, the code's there, you go to GitHub, right,

download this. All right, let me show you some code. Go right here. So here's what you do: pip install, right? Everybody can do that, even me. I can even do pip install, which is remarkable, so if I can do it, anybody can do it. All right, this is the one I want here. All right, you guys seeing my... there we go. So I'm going to walk through a little DMD code. So pip install PyDMD, and at your fingertips is the following code, and I'll walk through it. So this is a little tutorial.

I can send this out afterwards, to the mailing list. Is there a mailing list, people? Because this one's a little different than the one on the... yeah. So, you know, it's standard stuff: you bring in, you know, tell it you're going to maybe plot something, blah blah blah. I'm going to create a function, and the function is going to be right here. So here you can kind of see what I'm going to do. I'm going to make two functions and glue them together. Now,

the reason I made two functions: they have a spatial structure, which you now know the truth of. The first one is a sech(x + 3), a little bump; the other one is a sech times tanh. One of them has a frequency of 2.3, 2.3 t, right, 2.3 is the frequency, and the other I made just perfectly oscillatory at 2.8. So I already know what I'm trying to target to get out of this thing, right? It's always good as a test case: I know what the answer is going to be. So I make these functions

on a grid, that's what the whole first line of the thing is: 129 time points, 65 spatial points. Glue it together, and here's kind of what this thing looks like. There they are: here's one of the functions, here's the other, and when I glue them together I get this. And the question is now, when I do DMD on this, am I able to, first of all, extract back out the two functions that made it, and the frequencies, correctly? All right, you ready for some heavy lifting? There it is: DMD. You talked about what

you have to pick: you have to pick that rank. Okay, we'll do two. I know it is two because I only put two in. And then dmd.fit, throw the data in. And then we built this thing called the DMD plotter, so from PyDMD bring in plot_summary, and here's the plot summary. So after you get the model, you can start plotting things in the data. Let me just, okay, oops. So let me show you what this thing plots. First of all, it plots something like the singular value decomposition spectrum, in other

words, how many modes matter. Oh, you see two. Here's where they sit when you look at the discrete-time eigenvalues, and in continuous time, when you look at the real and imaginary axes, here they are: this one's here at, whatever was it, 2.8, the other one's at 2.3. Nails it. Here's mode one, mode two; there was no mode three that could be here; and here's their time dynamics, right? Awesome. So I got the decomposition perfect. Okay, remember: numerically accurate data. And this was exciting, right, because this is where we worked a lot

in 2014, like, hey, look, we could do this, let's write a book and tell everybody. Like, this is real life. This is not real life, and I'll show you in a minute, because we'll break it. So there's also, you know, optimized DMD and BOP-DMD, and here let's just go ahead and use BOP-DMD. Bring in BOPDMD, same thing, so a few extra character strokes, right: BOPDMD instead of just DMD. Bagging optimized DMD. So in my view this is what you should use. BOP-DMD: pick the rank, pick

the number of trials. If I pick zero trials it's equivalent to optimized DMD, but there's also an opt-DMD command, where then you just don't need a number of trials. The trials, and the size of the bags? Yes, yeah: 100 bags, a thousand. The noisier your data, the more bags, the better off you're going to be, right? It's exactly what you'd expect a statistician to do: try to do as much as possible, especially if you're in a data-limited scenario. You want to do as many combinations of the limited

data you have, to create the statistical proxy you can get. There it goes: fit, done. Okay, we're done, got it. So, you know, you ask the question, should I use exact or optimized or BOP? The point is, with the package now, it doesn't matter, right, and you might as well just use a few extra character strokes to get the best thing. That's kind of my view. Same thing, you get the same results out here, you get a nice picture. Okay, so let's come down here and just add a little noise to

it. There's some more stuff here. So I'm going to noise this up a little. There we go. Okay, I added noise, not even that much really, I just added a bit of noise to it, and say, now, from this, can I extract back what I had? And what you're gonna see here is, okay, DMD. I'm going to do this at a higher rank, because it's kind of choking a little bit on this, but here you go: two modes. It still kind of gets it, right? There's mode one, mode two, it's still kind of getting

it. But here's the big deal: look at your mode dynamics. What it did is push those eigenvalues into the left half-plane, so if you're going to try to do any prediction with it, or reconstruction, all of it's going to zero, right? And that's the big problem with DMD, in my view, if you just use this dmd.fit algorithm. So I would say don't use it. You're more likely, in any real data, to have more noise than I just showed you there, which is not even that much noise, and the more noise you have,

the more it pushes it. And you saw that chemistry data: it just flatlines almost immediately. It's the nature of exact DMD. Opt-DMD: same procedure, super easy. There you kind of go, you get your two modes. Here you go: the first mode is kind of perfect, right, it's actually bang on that axis, you can actually see it right there, the red sitting right on there; the blue is slightly in the right half-plane. But look at all these eigenvalues: it's lining up, it's actually thinking there are oscillations here. It's

not pushing them into the left half-plane; it's sitting there and saying, I'm going to get these things, and I can get them. And you can even, I'll have to update this a little bit in the example file that gets sent out, you can even constrain it: say, look, all I want is eigenvalues sitting in the left half-plane. What that would do is make sure that that blue guy pops back over onto the imaginary axis. Okay, so now you get two stable modes. You can also say I want

eigenvalues to come in conjugate pairs, or I want everybody to be on the imaginary axis. The on-the-imaginary-axis one is a little tough. For this data it's not so bad, because it's purely oscillatory, but in real data you actually do have decaying pieces, and then you try to put it onto the imaginary axis and it says, you're not letting me go to zero? Well then I'm going to show up in your picture, right? So that's a little bit of a harsh constraint, but we found that just making it
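
What the left-half-plane and imaginary-axis constraints mean for a set of eigenvalues can be illustrated directly. In the package the constraints act inside the optimization; the sketch below only applies them once to fixed, made-up eigenvalues, and the helper names `constrain_stable` and `constrain_imag` are hypothetical.

```python
import numpy as np

def constrain_stable(omega):
    """Left half-plane constraint: any positive real part is set to zero."""
    omega = np.asarray(omega, dtype=complex)
    return np.minimum(omega.real, 0.0) + 1j * omega.imag

def constrain_imag(omega):
    """Imaginary-axis constraint: drop the real part entirely."""
    omega = np.asarray(omega, dtype=complex)
    return 1j * omega.imag

# Made-up eigenvalues: one slightly unstable, one genuinely decaying
omega = np.array([0.05 + 2.3j, -0.10 + 2.8j])
stable = constrain_stable(omega)
on_axis = constrain_imag(omega)

# Amplitude of each mode far into the future, |exp(omega * t)| at t = 100
t_far = 100.0
print(np.abs(np.exp(omega * t_far)))     # first mode blows up
print(np.abs(np.exp(stable * t_far)))    # first mode stays bounded, second decays
print(np.abs(np.exp(on_axis * t_far)))   # neither mode is allowed to decay
```

The last line shows why the imaginary-axis constraint is harsh on real data: a mode that wants to decay is forced to persist forever.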

to the left half-plane, you get it there. So these are the things to be thinking about. And part of the reason I wanted to show you this little example code is, first of all, to hopefully move people into a scenario where it's trivial to try DMD, right? pip install PyDMD, there's three lines. I mean, look, it's really one line of code, two lines, and a third to plot it. Okay, and you have to import it. Okay, four. Do we count that as a line? Wait, okay, let's count that as a

line. But you know what I'm saying: if you're doing Python, you can afford six lines of code. Hit your data, it's a baseline, see how you did. And the interesting thing, if any of you have tried DMD in the past and said, oh yeah, DMD doesn't really work, because I actually have heard that so often: this algorithm is a game changer. And again, on real data, like I showed you with the atmospheric chemistry data before, it's like, this doesn't work at all; as soon as you hit it with that, it's like,

oh my gosh, you actually get something really reasonable here, and I can forecast 20 days with 10 days of data. In fact, I can forecast better than them running their models, which are running on supercomputers, right? So to me, I'm like, okay, I did that with six lines of code, just threw it in and got it, and they're running overnight runs for several days to get the same 20 days, and I got it in like a few minutes, right? It's pretty impressive. And the fact is, often in these systems linear

models work amazingly well, and it always, in my view, should be the baseline. You just say, okay, I'm going to try to make a prediction: just put a baseline linear model on there, and that's my target. If I can't beat that, why am I doing a deep neural net, right? So, all right, that's all I have to say. This little code will go out to you guys. If you download PyDMD now, all the stuff's there, but we're working on a little bit better tutorial structure, so

that it's there in tutorial one right away. The way it's set up right now, optimized DMD is tutorial 14. You're not going to get there. You're gonna go, this doesn't work, this doesn't work, and by tutorial six, like, none of this works. It's like, oh, did you realize tutorial 14 actually had the stuff you needed? Right now it's not set up that way, so I'm trying to write a really great tutorial one, so that from tutorial one you're using optimized DMD, versus you don't even know it exists in the package. Okay, questions? I guess

chat, because... yes, yes. Um, okay, oh, here, let me, oh, how about this: I can read it, right, otherwise we're gonna be playing with mute. Okay, Marcus asks: in one of the examples, doing DMD on the original variable x worked better than doing DMD on x and a poorly chosen lifted variable together. Why is that? If the variable is unimportant, can't DMD just ignore it? Yeah, so this is a good question. This is about an observable, right? I said you can

lift into a space, but if you pick a bad observable you're just doing a lot worse. So yes, you can obviously test that, but now you have to write an algorithm to test your observable spaces. So what some people do, and this is extended DMD, is they actually try to play around with the loadings of these observables to see which ones they have to cut out. And again, it's a heavy lift to do, because now you have to have training data. It's almost like, you know, it's a

neural network, trying to train it to remove the wrong ones. So yes, in other words, there are unstable directions to project into, and if you pick a bad one, that's exactly what you're going to do. Okay, next. Oh, can you share the lecture link? I guess, yeah, so that's something different; I think the link's going to be available somewhere. There's a question from William. Okay, where's William's? Okay: how do you preserve temporal ordering when doing bagging? Ah, well, okay, oh, a beautiful thing

about opt-DMD that I didn't mention: exact DMD requires you to be on a clock. You're on the clock of your sensors, delta t between time slices, and you have to stay on that clock. Okay, you have two matrices, X and X', but the difference between them has to be exactly delta t. Opt-DMD does not care. Opt-DMD says you can randomly sample; you have to know the time difference between them. As long as you have a clock that says when you sampled, you can randomly sample in time. As long as

you know when you sampled, that's all you need to know, and opt-DMD works with that information. Because remember, it's doing exponential fitting; it's just saying, what's the exponential fit? Oh, you gave me different time windows? It doesn't matter. So opt-DMD is also really perfect for bagging, right, because bagging is all about pulling in random snapshots at different times, and then reshuffling to different random snapshots, and so the delta t's get all messed up between them. Whereas in exact DMD you can't really do that, because if I remove these, now

I'm not on the clock anymore. Optimized DMD doesn't care about a clock. I mean, it cares about knowing when the measurement was made, but there doesn't have to be any fixed relationship between time snapshots. So there's a question from DK. Oh, okay. So the bagging improvement makes a lot of intuitive sense, and I didn't really understand from the quick run-through what the optimization improvement in opt-DMD is. So I was wondering if, in layman's language, you could sort of explain what that is, because that seems really,

really important, and it seems to sort of prevent all the eigenvalues drifting left. How does it do that? Yeah, so this is a good question. So what normal DMD methods have done before optimized DMD is to try to construct a matrix A, or a similarity transform of the matrix A, right, and then do an eigendecomposition of that to finally have your DMD model. Opt-DMD does not try to construct a matrix A at all. It just goes directly to what

you wanted, which was, give me the modes and eigenvalues. So it's a direct fit to those. What ends up happening, when you sample in time, is that by going after that matrix A that tries to advance you one delta t, when you have noise, it actually biases the eigenvalues. So for instance, one group tried to debias it by saying: we go forward in time, so how do I go from x_n to x_{n+1}? There's a matrix A. But what if I went backwards in time? What's

the matrix that goes from x_{n+1} to x_n? In other words, those should be inverse matrices, right? Of course they're not, but then you say, well, how about if I average their predictions? I'll take the inverse of this, which is supposed to be what the forward one is, average it with this one, divide by two. It's called forward-backward DMD, to try to take away some of the bias. And the bias is all due to the fact that you're going forward in time, but your

model could also go backwards in time. Opt-DMD doesn't care about forward or backward in time, right? It just says, exponentials across the solutions. So it's sort of not biased towards looking forward in time at all; it does the whole fit across the entire time frame all at once. That's hand-wavy, but... I understand. We have a question. Oh, so if you were to do classification instead of prediction in the future, would we use it more like a feature transform, you know, and then

do classification with, you know, some SVM or some other learning method? Is it possible to do classification, and would it generalize well? That's a good question. The question is about whether it's possible to do classification using a sort of forward prediction like this. I guess it depends exactly how you're going to use it as a classifier, right? You can imagine, once you have the DMD decomposition, there are a couple of things you can do with this. You actually have a model you can run forward

into the future, and then you could say, well, maybe on different data sets you could, for instance, classify by eigenvalue distributions. Maybe that's a more interesting thing. You say, hey, in this case here, these are the frequencies that are active; over here, these are the frequencies active. That's kind of almost what we do in the multiresolution case, because we're trying to look at different bins of time. It's like, oh, in this bin of time here's the active physics, here are their frequencies, but now here in these

bins of time, it's different, so they're kind of, in some sense, acting in that way. Yeah? Has anybody worked with real video data, is there any work on that? Yeah, we did some real video data for just background subtraction. So for instance, the zero mode: what we did is take a video with moving things in it, with a background. You say, what does e to the zero t mean? If there's an eigenvalue at the origin, e to the zero t, it means one, nothing's happening. That's the background. We found this

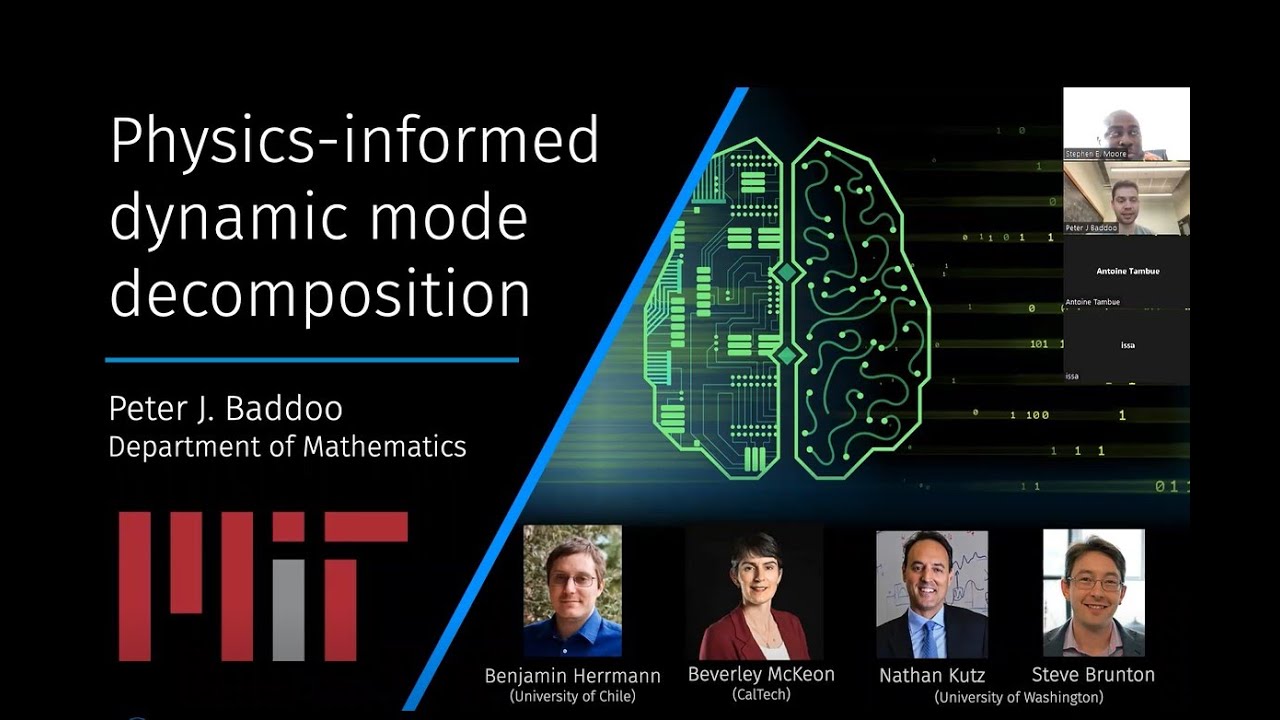

by accident, because this thing wasn't working, and we realized, oh, if you just look at the zero modes and pull them out, you've got the background, and the rest of it, reconstructed without that zero mode, is the foreground objects. And so for real video, that's the only thing we've done so far. The only other thing I should mention, and I apologize, I was going to do more talking about this, is there's also a version of this, a physics-informed DMD, some aspects of which will be in here, which addresses one of the
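
The zero-mode idea for background subtraction can be sketched on synthetic data. Everything here is an assumption made for illustration: the "video" is a one-dimensional row of pixels, the static scene and the travelling ripple are invented, and the ripple is chosen so the data is exactly rank three, which lets plain exact DMD recover the split cleanly.

```python
import numpy as np

x = np.linspace(0, 2 * np.pi, 64, endpoint=False)     # pixel coordinates
dt = 0.1
t = np.arange(100) * dt
background = 2.0 + np.sin(x)                          # static scene (assumed)
foreground = 0.5 * np.cos(3 * x[:, None] - 2.0 * t[None, :])   # travelling ripple
video = background[:, None] + foreground              # (pixels, frames)

# Exact DMD with rank 3: one static mode plus an oscillating pair
X, Y = video[:, :-1], video[:, 1:]
U, s, Vh = np.linalg.svd(X, full_matrices=False)
r = 3
U, s, V = U[:, :r], s[:r], Vh[:r].conj().T
lam, W = np.linalg.eig(U.conj().T @ Y @ V / s)
modes = (Y @ V / s) @ W
omega = np.log(lam.astype(complex)) / dt
amps, *_ = np.linalg.lstsq(modes, video[:, 0].astype(complex), rcond=None)

# The mode whose eigenvalue sits at the origin does not change in time
k = int(np.argmin(np.abs(omega)))
background_est = (modes[:, k] * amps[k]).real
foreground_est = video - background_est[:, None]
print(np.abs(omega[k]), np.max(np.abs(background_est - background)))
```

Real footage is noisy and not exactly low rank, so in practice the eigenvalue nearest the origin is only approximately zero and the separation is approximate as well.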

areas where this suffers. Suppose I have a traveling wave in my domain. You see, what this thing's going after is low-rank structure, so a lot of these things are using an SVD-based method to get it. A traveling wave with an SVD is awful, because over time it was here, and here, and here. How does this correlate with here? Well, they don't. Correlation is usually, like, what's the overlap, right, and there's no overlap, so it looks like a high-dimensional system even though it's one mode traveling. So physics
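
Why translation is hard for SVD-based methods can be checked numerically by counting how many singular modes are needed to capture 99% of the energy. The two test signals, the grid sizes, and the helper name `modes_for_energy` are assumptions for this sketch.

```python
import numpy as np

def modes_for_energy(X, energy=0.99):
    """Number of SVD modes needed to capture `energy` of the total variance."""
    s = np.linalg.svd(X, compute_uv=False)
    cumulative = np.cumsum(s**2) / np.sum(s**2)
    return int(np.searchsorted(cumulative, energy) + 1)

x = np.linspace(-10, 10, 256)
t = np.linspace(0, 10, 200)

# Same Gaussian bump in both cases: fixed in place and oscillating, or translating
standing = np.outer(np.exp(-x**2), np.cos(2 * t))
travelling = np.exp(-(x[:, None] + 8 - 1.6 * t[None, :])**2)

n_standing = modes_for_energy(standing)
n_travelling = modes_for_energy(travelling)
print(n_standing, n_travelling)
```

The standing pattern is a single spatial shape times a time series, so one mode suffices. The translating bump barely overlaps with its own earlier positions, so the SVD needs many modes to describe what is physically one structure in motion.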

informed DMD, by Peter Baddoo, who actually spent some time at Imperial here: he basically built an algorithm that says, how about this, we'll think about some of the symmetries and invariances in your problem, and we will put those in first. We say, oh, if you have traveling waves, there's a circulant matrix that sort of helps take care of that, looks for those kinds of structures, then does DMD. It's a really nice tool to go along with the opt-DMD. Some aspects of this are built into the PyDMD package. I

was kind of wondering about the inverse of that. So if you run DMD first and then you realize that your result isn't great, or like in the chaotic system you had, can you then go back and inform the physics, or is it quite hard to do that from the results that you get? So that goes, actually, interestingly, to a question around classification. So if there's a signature, let's say in the eigenvalue spectrum, that would tell you, oh, if you see these eigenvalues, it's clearly suggesting there's a traveling wave, right, from the dist...

oh, let's bring it back over, so it's an inference that there is a traveling wave, and it can come back in: now put that in and do it forward. You'd have to have something that you could go after, like if there are clear signatures in the results, where you say, oh, eigenvalues lining up like this mean this, let me come backwards and then put that in. Yeah, you could do something like that. So, um, Daniel? Hey, thank you for the talk. I was just wondering how this optimized

DMD algorithm can pair with other ways of doing DMD, for example Hankel DMD using time-delay embeddings, or multiresolution DMD? Yeah, so in fact a great pairing, real quick, is Hankel DMD. One thing that you can do to enrich your data set, to make things more Fourier-like, which is perfect for DMD, is to take your data and do a time-delay embedding. Most everything I showed here was spatio-temporal data, where you maybe don't do that as much, but for time series data, certainly, doing time-delay

embedding to build a Hankel matrix, and then using BOP-DMD on that, is probably your best way forward. So I would definitely integrate it in, but for the delay embedding you have to just set up the matrix yourself. I don't think we have that set up in PyDMD. There are so many features we want to keep building into the PyDMD package that I don't think we've got to that one yet, one which just automatically time-delay embeds for you, then does BOP-DMD, then brings you back the results. Thanks, and
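
Setting up the delay-embedded matrix by hand takes only a few lines. This is a minimal sketch for a scalar time series; the function name `hankel_embed`, the test signal, and the choice of 20 delays are assumptions.

```python
import numpy as np

def hankel_embed(series, delays):
    """Stack `delays` time-shifted copies of a 1-D series as rows,
    so that entry (i, j) equals series[i + j]."""
    series = np.asarray(series)
    n_cols = series.size - delays + 1            # number of embedded snapshots
    return np.stack([series[i:i + n_cols] for i in range(delays)])

t = np.linspace(0, 10, 201)
signal = np.sin(2.3 * t) + 0.5 * np.cos(2.8 * t)

H = hankel_embed(signal, delays=20)              # rows are delays, columns are time
times_for_fit = t[:H.shape[1]]                   # time stamp of each embedded column
print(H.shape)
```

The embedded matrix `H` then plays the role of the snapshot matrix, with `times_for_fit` as the sample times, and the first row of any reconstruction corresponds to the original series.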

Thank you very much. There's another question from you. Hello, yeah, thank you for the talk, Nathan, and yeah, your book and Brunton's book have inspired me a lot, so basically I'm your fan. I won't take too much time. So I have a question about optimized DMD. I saw that in the picture it looks like a feature extraction to find the important terms to build this DMD. So my question is, what is the physics behind this optimization? Why can this kind of operation make the DMD predict accurately? And

also, in doing the dynamic analysis, we usually pursue multiple-step-ahead prediction, not only one-step-ahead prediction. So I don't quite understand why this kind of optimization can guarantee the multiple-step-ahead prediction. So this is my question. Yeah, so good question. Let me just go and stop share here and maybe pop up the slides real quick. Oops, let me do this here and then do a screen share. So in some sense, when you look at DMD here, when you kind

of come after it and sort of do this variable projection onto this form of solution, especially, for instance, with the constraint that this eigenvalue here is imaginary, by construction you can look as far ahead as you want and you'll get a stable model. Doesn't mean it's going to be accurate, but you've essentially built that in. So for instance, if you do a time forecaster, like with a recurrent neural net or an LSTM, sometimes when you walk it far out into the future it can go to zero, blow up, get to a fixed point. There are these interesting

issues that happen with some of these neural nets that are iterative maps. But we're not really building an iterative map; we're basically saying the time dynamics is exponential. I can even enforce the exponent to be imaginary, which means by design I can walk it as far into the future as I want without blowing it up. And so essentially it's a constrained regression onto a stable model, if that's another way to say it. And physics-wise, right, this is perfect, right?
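
To make the stability argument concrete, here is a small NumPy sketch under stated assumptions: the solution is taken to have the form x(t) = sum_k phi_k b_k exp(omega_k t), and the modes, amplitudes and exponents below are made-up numbers, not output from any fit. The function name `dmd_forecast` is hypothetical.

```python
import numpy as np

def dmd_forecast(modes, omegas, amplitudes, times):
    """Evaluate x(t) = sum_k modes[:, k] * amplitudes[k] * exp(omegas[k] * t)."""
    dynamics = amplitudes[:, None] * np.exp(np.outer(omegas, times))
    return modes @ dynamics

rng = np.random.default_rng(0)
modes = rng.standard_normal((5, 2)) + 1j * rng.standard_normal((5, 2))
amplitudes = np.array([1.0, 0.5], dtype=complex)
times = np.linspace(0.0, 1000.0, 5001)   # a long forecast horizon

omegas_imag = np.array([2.0j, -3.0j])    # purely imaginary exponents
omegas_growth = omegas_imag + 0.01       # same frequencies, small real part

x_stable = dmd_forecast(modes, omegas_imag, amplitudes, times)
x_growing = dmd_forecast(modes, omegas_growth, amplitudes, times)

# With imaginary exponents every term has modulus |mode| * |amplitude|,
# so each row of the forecast is bounded by this sum for all times.
bound = np.abs(modes) @ np.abs(amplitudes)
```

The forecast with imaginary exponents never exceeds `bound`, however far out it is evaluated, while the second one is the same signal multiplied by exp(0.01 t). Boundedness says nothing about accuracy, which matches the caveat in the talk.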

Fourier modes are the entire basis of most signal processing. Why? Because they're awesome. And you know what, you can walk Fourier modes out to time equals infinity and they don't blow up, they don't go to zero, you don't lose your signal, it doesn't go to infinity either. And you can also walk backwards to minus infinity, same thing. And so Fourier modes are really some of the best ways to represent signals that we've ever come up with, right, these objects of sines and cosines, and

essentially that's what we're doing here, right, is saying even though I've given it as an exponential, oftentimes what we're doing is really looking for the imaginary components there, and that's going to give us stable models by design. Okay, thank you. And part of the reason they work, and this is a philosophical statement, so don't put too much weight on this comment maybe, but my observation is that most of the world, most physics on some scale, looks pretty linear with some bursts of nonlinearity. And so is it surprising a

linear model works? Not really. We built this entire phone from linear models, essentially, right? We had quantum mechanics and E&M, all linear models, and we were able to extrapolate out to build this thing. It's a remarkable achievement, right? So these are the kind of things we start thinking about a little bit: that linear models are exceptionally powerful, and that most of the world, I think, is linear, and that's why this method works in many cases. Not all, but you know, it does a pretty nice job, except for, like, if your

data, if you go far into the future, if you have non-stationary statistics, that's hard to handle no matter what you do. As far as I know, if you read the statistical literature, there's step one: assume stationarity, right? And then you stay there through grad school and forever. And I was like, well, I have real data. Assume stationarity, right? I mean, this is an underpinning of problems for all of us, right, no matter what method you use, whether you're going to do a deep neural net, statistical ensembling, Bayesian,

whatever, DMD. So it's something we have to think about a little bit too in all of these. I just have one more question, if you don't mind. Oh, so in this, the assumption on the input signal would be that it has to be oscillatory, right? So would you always necessarily have to cover a large enough time to capture all the different, you know, oscillation modes that you want to capture? So yeah, in fact, in these variants that I'm showing you, certainly.

In other words, if you're on the rising edge of a cosine and you just see that, what this will do is say that looks like exponential growth, even though it's going to come up here and turn around. Now, there is a method by Henning Lange, who does something different than DMD, but it's very DMD-inspired. This is his method. What Henning did is say, look, I'm just going to fit it to cosines and sines with as few frequencies as possible. And what he does, he does this thing: if

I see this, I'm not allowing you to fit it to an exponent with a real part; you have to fit it to a cosine. And he doesn't constrain it to periodic boundary conditions. So what he says is, well, if I see this, it's going to be either a sine or a cosine fit to that, and it's going to give you a frequency. So I didn't see the whole thing, I saw a portion of it, but I'm still going to fit it to that. So there are methods like this one here that actually get
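
The idea of forcing a sinusoidal fit on a partial window can be sketched in a few lines of NumPy. This is only an illustration of the principle, assuming a single frequency and a brute-force scan over hypothetical candidate frequencies; it is not the algorithm from Lange's work.

```python
import numpy as np

# Observe only a rising quarter-period of cos(t): t in [pi, 1.5*pi].
t = np.linspace(np.pi, 1.5 * np.pi, 50)
y = np.cos(t)

def sinusoid_fit(omega, t, y):
    """Least-squares fit of y to a*cos(omega*t) + b*sin(omega*t)."""
    basis = np.column_stack([np.cos(omega * t), np.sin(omega * t)])
    coeffs = np.linalg.lstsq(basis, y, rcond=None)[0]
    residual = np.linalg.norm(basis @ coeffs - y)
    return residual, coeffs

# Scan candidate frequencies; no real (growth or decay) part is allowed.
candidates = np.arange(1, 31) / 10.0
residuals = [sinusoid_fit(w, t, y)[0] for w in candidates]
omega_hat = candidates[int(np.argmin(residuals))]

# Extrapolate the fitted sinusoid well outside the observed window.
a_hat, b_hat = sinusoid_fit(omega_hat, t, y)[1]
t_future = 2.0 * np.pi
y_future = a_hat * np.cos(omega_hat * t_future) + b_hat * np.sin(omega_hat * t_future)
```

Because the model class contains only sines and cosines, the fit recovers a frequency from the partial window and the extrapolation turns around instead of growing.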

to that. This is, again, very closely related to DMD and Koopman theory and what we're doing, but trying to get at this issue. The other thing that you can't see is, you know, if you sample at a certain rate, you can't reconstruct frequencies that are faster than that, right? So you always have this issue of aliasing and things like that. We have another question. Hi there. So I was wondering, especially when you were talking about basically building new observables, is it possible directly in PyDMD to do something

like the SINDy approach: I have a dictionary of possible lifting terms, and use that to put in some kind of regularizing term to make it as sparse as possible, and try to find what are actually good projections of your observables? Yeah, we don't have anything like that yet in PyDMD. You know, maybe in V3 when it comes out we'll have something like that. Right now there's a higher-order SVD in there, and that kind of projects it just to a

larger space, so mostly quadratics, cubics, but it just projects to all of it; it doesn't do any kind of down-selection. So that's one thing that's there. You know, I think the big thing we wanted to push through with this new version of PyDMD is just to get an actual stable algorithm in there that can handle noise, right? So, these years of me getting emails or talking to people who say, hey, I tried DMD, it doesn't work: I imagine 90% of what I heard would have been

fixed immediately with optimized DMD. And it's unfortunate, right, because they tried it, gave up, walked away. And the same thing happens with SINDy, for instance, right? So the new version of SINDy now has Ensemble-SINDy. In fact, we did bagging optimized DMD, and it worked so well I went to talk to Urban Fasel, who's now at Imperial. Urban at that time was a postdoc with Steven. I said, look, this works so well on DMD, how about we try it on SINDy? And that led directly to Ensemble-SINDy. And then there was

weak form, weak-form SINDy. So weak Ensemble-SINDy all of a sudden can get into a game where people would previously say it doesn't work. And it's all about noise. Anytime you go to real data, I always think that the entry point is: if you can't handle 25 or 30% noise, there's no way you can get in the game. If you can handle that level of noise, you have a shot. There are a lot of problems that have a lot more noise than that, but I find

very few real problems where you're not going to have to deal with at least that much noise. And so the ensemble and bagging optimized DMD are now at that point where I can look people straight in the face and say, you could try this and I think you have a shot at making it work, without having to tune it for five weeks, like, oh, if I just do it right and I do all this cleanup of the data beforehand, maybe I get something out. This is more just a

stable way to get at it. So I have a question, if there's time to ask this question. No, I'm good until 4, when people in Seattle start. Thank you. I'll be very, very telegraphic. So when we're looking at the cost function again, and this is where we discover our feature embedding, we have this function f_n there of x. I understand that you discover it on the same mesh as your data. Yeah. Now let's imagine that our data comes to us and it's given to us on different meshes. So what I want

to do, naively, here, is put a comma after this x and say theta, and then I want to optimize with respect to this theta. So then my f_n could be a basis expansion, or they could be neural networks. My hope would be that I could then do bagging on the spatial coordinate and get a mesh-independent version of this. And my bigger question is, if we think about it really deeply, we can relate this potentially to the Green's function view of dynamical systems. Here we're staying within the Koopman view,

where we are looking at a linear dependence in time, but we can do the spatial dependence with a Green's function. Does this make sense, what I'm asking? Yes, yes, and I think that's a fantastic way to go. It probably wouldn't work under variable projection, which is what did this, but one of the things that we're looking at is to try to use all the power of the optimization algorithms in JAX and Torch, and how you can come back to this problem and say, hey, you know, let's do deep DMD, right? Which is just, you're right, so

right now the fitting procedure is sort of more of this iterative walk down to this thing. It's not a deep neural net at all, but you can start imagining exactly what you're talking about. We have all of this at our disposal, right? Once you do pip install jax or pip install torch, you have the power of the world of optimization at your fingertips. And so if you can frame the right loss functions and the things you want, there's a lot more you can get out of this than I think

we currently have. And so I think that would be amazing. Actually, you know, I'm the guy who didn't make it to yesterday's meeting with Muhammad. I'm kind of working along these lines with a Green's function, as I said. Maybe I can look you up sometime if you're around. Well, I'm here. By the way, in terms of Green's functions, I skipped one slide, but we did do one thing like this. We did a DeepGreen idea, which was, you know, this idea of embedding in a linear space and then having

everything in a linear space. This is what this guy Dan Shea did. So it's shades of what you want to go after, which is, you know, Green's functions fell out of popularity in the early 90s because everybody said, well, why would I constrain myself to solving a linear problem? I have this nonlinear problem, I'll just simulate it, right? And so everybody just started doing nonlinear simulations and nobody used Green's functions anymore, because they were only for linear problems. But imagine, well, I just need to learn a transform, put it

in a new coordinate system, and now all the Green's function stuff holds and you get a fundamental solution out. But I like this idea; a meshless version of this would be amazing too, right? Meshless, maybe even a parameterized meshless version, like a neural operator combined with a time dependence of exponents. Thank you very much, thank you for the fantastic talk. I'm around, so hopefully I'll see you on Thursdays here or something. Yeah. Okay, so just one last note on the noise. So you mentioned

a lot that exact DMD does not work because noise is present in the data, but maybe you can comment on the distribution of the noise. In the examples shown, the noise was homogeneous, but in transient situations the noise is not like that, and most real situations are composed of transient behavior. So I'm a big fan of DMDc, because in DMDc, if you have known sources of forcing of the system, the coupling happens really well. Yeah. And especially, for example, DMD works very well when there's periodicity; if you have non-periodic behavior, DMD suffers, and

I wonder what it does in those situations. But DMDc works nicely because, again, if you have availability of that control signal, it works even with non-periodic behavior. So I'm wondering how BOP-DMD deals with transient, really transient situations. I've had good experiences with the [unclear] DMD situations, but I wonder about BOP-DMD. Yeah, so the question is around how well BOP-DMD does on transient behaviors, right? So that's, in some sense, the workhorse of DMDc, which actually accounts for actuation in the system and this ability to
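
For readers who have not seen DMDc, the regression it rests on can be sketched with NumPy as follows. This is a minimal full-rank least-squares version of the exact-DMD-style formulation, with no SVD truncation and no noise; the system matrices and the forcing are made up for the illustration.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical forced linear system x_{k+1} = A x_k + B u_k.
A_true = np.array([[0.9, 0.2], [-0.2, 0.9]])
B_true = np.array([[0.0], [1.0]])

n_steps = 200
U = rng.standard_normal((1, n_steps))     # known, non-periodic forcing
X = np.zeros((2, n_steps + 1))
X[:, 0] = [1.0, 0.0]
for k in range(n_steps):
    X[:, k + 1] = A_true @ X[:, k] + B_true @ U[:, k]

# Stack state and control snapshots and solve X' = [A B] [X; U]
# in the least-squares sense with a pseudoinverse.
Omega = np.vstack([X[:, :-1], U])
G = X[:, 1:] @ np.linalg.pinv(Omega)
A_est, B_est = G[:, :2], G[:, 2:]
```

Since the control signal is supplied to the regression, the state matrix is recovered even though the trajectory itself is transient and non-periodic.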

handle this. So BOP-DMD currently doesn't really handle that very well, but this is actually an active area of research we're doing right now: to try to figure out, can we do not DMDc but BOP-DMDc? There are all these massive improvements that we got from going from exact DMD to BOP-DMD. Currently DMDc is only formulated in terms of exact DMD. It has this huge advantage of being able to handle, you know, things that are forcings in the system, but what we really want to do is bring that over to BOP-DMD.

And then if we could have a BOP-DMDc, then you imagine now I get this much more accurate model that handles this. But it's a hard problem to get sorted, so we're trying to figure out how to do that, and hopefully we can get it into V3 of PyDMD. The wish list just keeps getting bigger and bigger for V3. We have a question. Yeah, so in this there is a natural ordering, so it's time-series data that we've been focusing on. Is it possible to work on

data which does not necessarily have, for example, a mesh? So in an image, just an image, not a video, there's no natural ordering of the points. So is there a way that you see? We haven't done that. I mean, you could; if you had an ordering of points and you wanted to do a spatial DMD model, you could walk it from left to right so that you get spatial frequencies, but you'd still have it ordered by columns, like, okay, you know, my picture, here's the ones